For a long time I assumed trust in crypto was fairly straightforward.

You verify a signature, confirm a balance, execute a transaction, and the system moves on. That model works well when humans interact with protocols. But the moment machines begin coordinating with each other, the idea of trust starts to look very different.

I first noticed this while watching a charging station used by delivery robots.

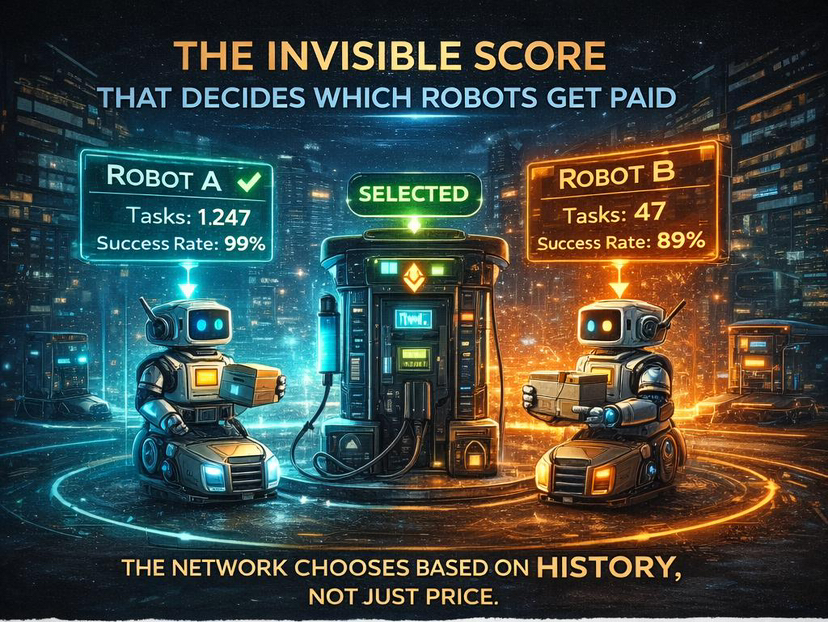

Two robots arrived almost at the same moment. Their battery levels were nearly identical. They were offering the same price for electricity and had traveled roughly the same distance. From the outside, there was no obvious reason to prioritize one over the other.

Yet one of them began charging immediately while the other had to wait.

Curious, I asked the operator why the station chose that robot first.

He pointed to a number on his dashboard that I hadn’t paid attention to before. The first robot had completed more than a thousand tasks with a success rate above ninety-nine percent. The second robot had far fewer completed tasks and a couple of incomplete deliveries flagged by warehouses.

The station wasn’t choosing based on price. It was choosing based on history.

That small moment changed the way I think about machine coordination.

In a machine economy, reputation is not a secondary feature layered on top of the system. It becomes the foundation that everything else depends on.

Machines interacting with each other are essentially strangers.

A delivery robot operating in one city may have never interacted with the charging infrastructure in another. A compute node processing AI workloads in one region has no direct relationship with the cluster requesting resources somewhere else.

Humans usually solve this problem with institutions. Banks, escrow services, contracts, and customer support systems exist largely to establish trust between parties that do not know each other.

Machines cannot rely on those structures.

Instead they need a mechanism that allows them to evaluate reliability instantly, even when encountering another machine for the first time.

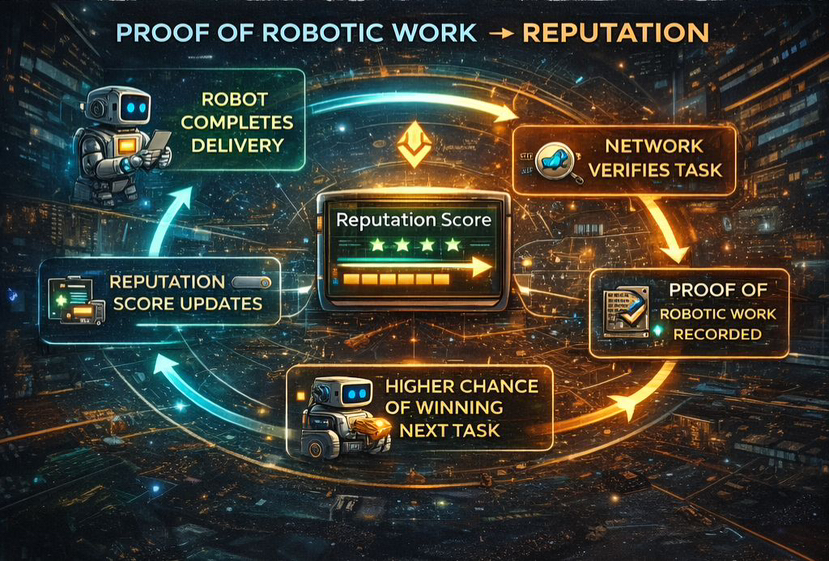

Fabric approaches this problem through something called Proof of Robotic Work, combined with a reputation system that records the outcome of every completed task.

At first glance it sounds similar to many “Proof of X” ideas that appear in crypto whitepapers. But seeing it applied to real machine tasks makes the concept easier to understand.

Whenever a robot completes a delivery, charges another machine, or performs a warehouse operation, that event is verified by the network. Multiple nodes confirm that the task actually happened and record the outcome. Over time those records form a verifiable history of work.

The machine does not simply claim it completed a task. The network confirms it.
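To make "the network confirms it" concrete, here is a minimal sketch of how several nodes might agree on a task outcome. Fabric's actual verification rules are not described here, so everything in it is an assumption for illustration: the `Attestation` type, the `confirm_task` function, the minimum of three nodes, and the two-thirds agreement threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Attestation:
    """One node's report about one task (hypothetical structure)."""
    node_id: str
    task_id: str
    succeeded: bool


def confirm_task(
    task_id: str,
    attestations: Iterable[Attestation],
    min_nodes: int = 3,  # assumed minimum number of distinct verifiers
) -> Optional[bool]:
    """Return True or False once two thirds of distinct nodes agree, else None."""
    votes = {}
    for att in attestations:
        if att.task_id == task_id:
            votes[att.node_id] = att.succeeded  # one vote per node, latest wins

    total = len(votes)
    if total < min_nodes:
        return None  # not enough independent confirmations yet

    yes = sum(votes.values())
    no = total - yes
    # Integer comparison avoids floating-point edge cases at exactly 2/3.
    if 3 * yes >= 2 * total:
        return True
    if 3 * no >= 2 * total:
        return False
    return None  # nodes disagree, so the outcome stays unconfirmed


reports = [
    Attestation("node-a", "delivery-17", True),
    Attestation("node-b", "delivery-17", True),
    Attestation("node-c", "delivery-17", False),
]
print(confirm_task("delivery-17", reports))
```

The point of the sketch is only the shape of the idea: the robot's own claim never appears as an input, and the outcome is recorded only when enough independent nodes agree.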

Reputation in this system is also dynamic.

It evolves constantly as new tasks are completed. A robot that consistently finishes jobs successfully becomes more attractive for future tasks. If failures begin to appear, its reputation score adjusts almost immediately.

During one demonstration I watched, a robot lost reputation points within seconds of a failed delivery being verified by the network. There was no manual review process and no dispute waiting period. The adjustment happened automatically once the task result was confirmed.

This creates something closer to a living record than a static rating.

Machines carry their performance history with them wherever they operate.
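One simple way to picture a score that adjusts the moment a result is confirmed is an exponential moving average over task outcomes. This is not Fabric's formula, which is not given here. The `ReputationRecord` class, the starting score of 0.5, and the smoothing factor `alpha` are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReputationRecord:
    """Running reputation for one machine (hypothetical model)."""
    score: float = 0.5  # assumed neutral starting point on a 0..1 scale
    completed: int = 0
    failed: int = 0

    def apply(self, succeeded: bool, alpha: float = 0.05) -> float:
        """Fold one confirmed outcome into the score and return the new score.

        alpha controls how strongly the newest result outweighs older history.
        """
        outcome = 1.0 if succeeded else 0.0
        self.score = (1 - alpha) * self.score + alpha * outcome
        if succeeded:
            self.completed += 1
        else:
            self.failed += 1
        return self.score


robot = ReputationRecord(score=0.9)
robot.apply(succeeded=False)  # a verified failure lowers the score at once
print(round(robot.score, 3), robot.completed, robot.failed)
```

Because each update needs only the previous score and the new outcome, no manual review step is required: the adjustment can happen as soon as the network confirms the result.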

One design decision in Fabric’s matching process is particularly interesting.

You might assume that the highest-reputation machine would simply win every available task. That would maximize efficiency in the short term. However, it would also gradually concentrate work in the hands of a few machines with the longest histories.

Instead the protocol introduces a small amount of randomness when selecting winners.

Machines with stronger reputations are still favored, but they do not automatically receive every task. Other machines occasionally win opportunities as well, allowing them to build their own track records.

This prevents the network from centralizing around a handful of dominant machines and keeps the ecosystem open to new participants.
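A reputation-weighted lottery is one way to get this behavior. The sketch below is an assumption about how such a selection could work, not Fabric's actual matching algorithm. The `pick_winner` function and the `exploration` bonus, a small constant added to every weight so that low-reputation machines keep a real chance, are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, Optional


def pick_winner(
    reputations: Dict[str, float],
    exploration: float = 0.1,  # assumed flat bonus that keeps newcomers in play
    rng: Optional[random.Random] = None,
) -> str:
    """Pick one machine at random, with odds proportional to reputation."""
    if rng is None:
        rng = random.Random()
    machines = list(reputations)
    weights = [reputations[m] + exploration for m in machines]
    return rng.choices(machines, weights=weights, k=1)[0]


# Simulate many task assignments between a veteran and a newcomer.
rng = random.Random(42)
scores = {"veteran": 0.99, "newcomer": 0.20}
wins = Counter(pick_winner(scores, rng=rng) for _ in range(10_000))
print(wins)
```

With these made-up numbers the veteran still wins most tasks, but the newcomer wins a meaningful share and can start building a history. Raising `exploration` flattens the odds; setting it to zero makes selection purely proportional to reputation.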

Since early 2026 I have been watching activity on Fabric’s network fairly closely. One statistic that stands out is the task completion rate, which sits at around 98 percent.

That number becomes more interesting when you compare it with human coordination platforms. Marketplaces for freelance work or logistics services often deal with cancellations, disputes, and delayed payments.

Machines appear to handle many of those coordination challenges more efficiently because each action leaves behind verifiable evidence.

Every task is recorded.

Every outcome is confirmed.

Every machine builds a measurable history over time.

Trust gradually becomes something that can be calculated rather than negotiated.

The broader implication is surprisingly simple.

Human economies invest enormous resources into creating trust between participants who may never meet. Banks, courts, insurance systems, and reputation platforms all exist largely to solve that problem.

Fabric approaches the challenge from another direction.

Instead of adding institutions on top of transactions, the network records the work itself. Trust emerges from the accumulated record of completed tasks.

A robot does not claim reliability.

It demonstrates reliability through history.

That charging station example is still the clearest illustration of how the system works.

Two robots arrived offering the same price. On paper they looked identical.

But the network knew their histories.

One had proven itself more than a thousand times. The other had not.

The algorithm did not need to guess which machine was more trustworthy.

It simply looked at the record.

And that record decided who got the job.