@MidnightNetwork #night $NIGHT

The more I sit with Midnight, the less “privacy” feels like the hard part.

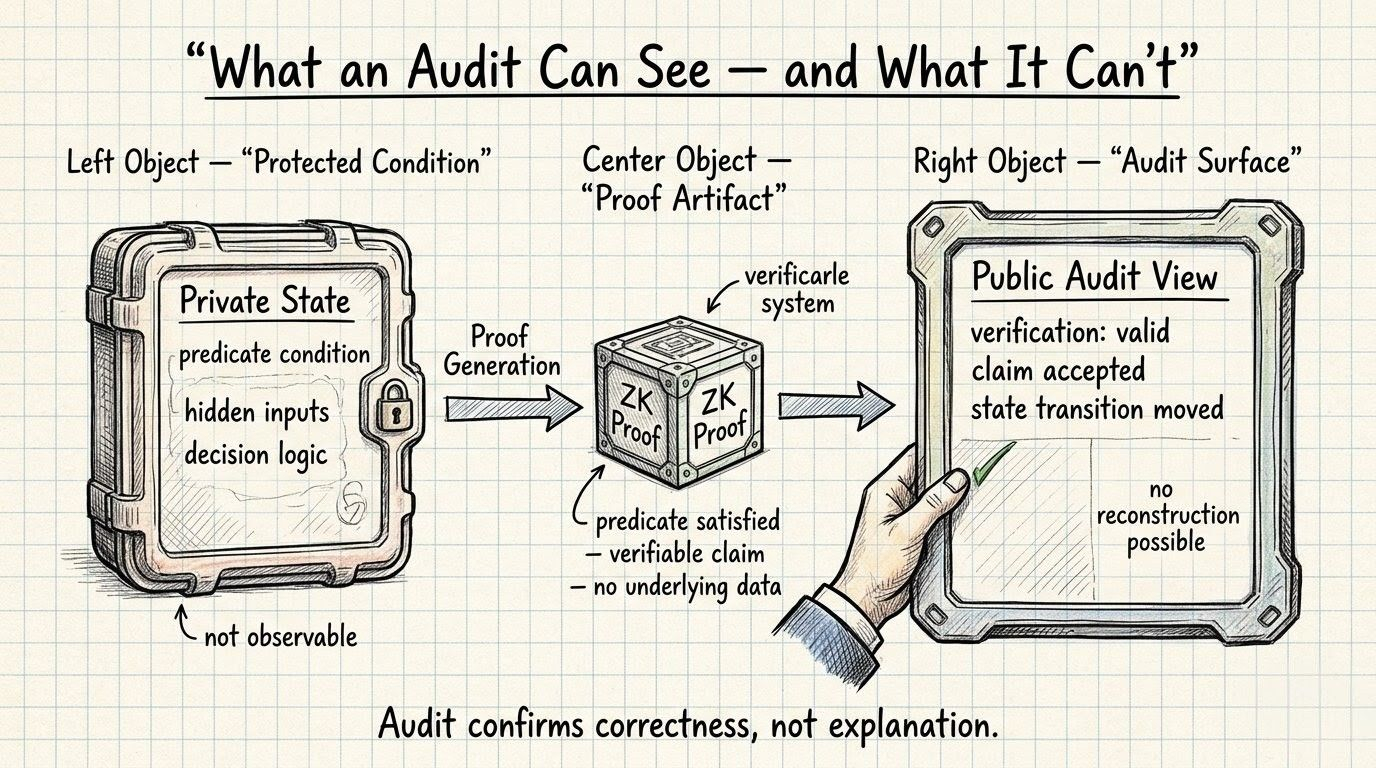

Privacy is the part everybody sees first. Selective disclosure. ZK proofs. Protected data not getting sprayed all over open state just because some workflow wants reassurance. Fine. That’s the visible pitch. Fair enough. A privacy network should do that. If Midnight Network couldn’t, then what exactly are we even doing here?

What keeps bothering me is the thing right behind it.

Audit.

Not whether audit matters. Obviously it does. The weirder question is what the word even means once a system can verify a claim, move the state transition, and still never let the underlying condition become publicly reconstructable afterward.

That is not the same thing as “privacy with better branding.”

That is a different model.

Take a simple example. Compliance threshold. Eligibility gate. Treasury permission. Some protected condition exists inside private state. User proves it. Proof verification passes. Accepted path moves. Done. Nice. Clean. Modern. Everybody in crypto loves saying they want systems like that until somebody comes back later and says okay then, audit it.

Now what exactly are they auditing?

Do they see the condition? No.

Do they see the underlying inputs? No.

Do they get the full decision logic that made the transition legitimate? Not really.

They get that the proof satisfied the predicate. They get that the system accepted it. They get the consequence.

That’s it.
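To make that concrete, here is a toy sketch of what the public side is left holding. It is not Midnight's API and it is not a real ZK proof: the names (`PublicRecord`, `prove_and_submit`, `THRESHOLD`, the predicate label) are made up for illustration, and the predicate check is a plain comparison standing in for proof generation and verification. The only point is the shape of the record an auditor gets to read.

```python
import hashlib
import secrets
from dataclasses import dataclass, asdict

THRESHOLD = 1000  # hypothetical eligibility threshold, not from any real contract


@dataclass(frozen=True)
class PublicRecord:
    """Everything an outside auditor can read in this toy model."""
    commitment: str    # salted hash binding the hidden value
    predicate_id: str  # label of the rule that was checked
    accepted: bool     # whether the transition moved


def commit(value: int, salt: bytes) -> str:
    # Salted SHA-256 commitment: binds the value without publishing it.
    return hashlib.sha256(salt + value.to_bytes(16, "big")).hexdigest()


def prove_and_submit(private_balance: int) -> PublicRecord:
    # Stand-in for "user proves it, verification passes".
    # A real system would verify a ZK proof and never see private_balance;
    # here the check runs locally just to show what ends up public.
    salt = secrets.token_bytes(16)  # stays with the user, never published
    return PublicRecord(
        commitment=commit(private_balance, salt),
        predicate_id="balance_at_least_threshold",
        accepted=private_balance >= THRESHOLD,
    )


def audit(record: PublicRecord) -> dict:
    # The auditor's whole view: the public fields and nothing else.
    return asdict(record)


if __name__ == "__main__":
    print(audit(prove_and_submit(5000)))
```

The auditor's dictionary has a commitment, a predicate label, and a yes or no. There is no balance field to walk backward from, and that gap is exactly the one between acceptance and reconstruction.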

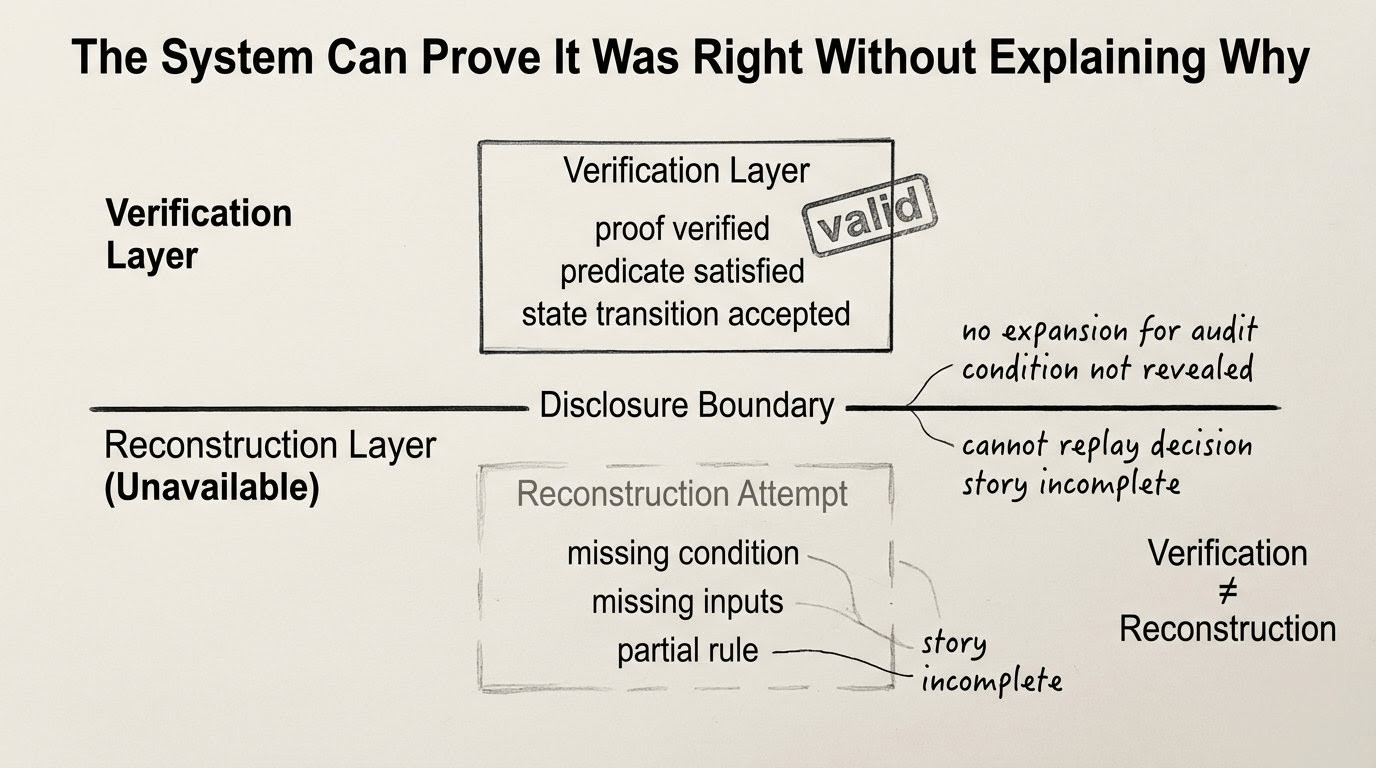

And I think people are still pretending that counts as the same thing as reconstruction when it really doesn’t. On older systems, audit usually means you can go backward. You inspect the material. You retrace the decision. You rebuild the story. Midnight (@MidnightNetwork) doesn’t really promise that on the public side. It gives you acceptance under protected logic. Which means what, exactly? That the protocol can say yes, this was valid. Good. Valid against what hidden condition? Visible to whom? Enough for the network, sure. Enough for the user? Enough for a counterparty? Enough for the person staring at open state and seeing no public reason the transition should have moved at all?

That’s where it gets a little nasty.

Because once explanation no longer has to enter open state, you’re not just protecting data anymore. You’re changing what accountability feels like. Maybe even what it is.

People keep acting like the hard question is whether privacy makes audit impossible. I don’t think that’s the right question. The harder one is whether Midnight makes people call something an audit when what they actually mean is: the proof checked out and I guess that has to be enough.

Does it? Enough for who?

Enough for the protocol? It already trusts the proof.

Enough for the app? Maybe.

Enough for whoever is left outside the disclosure boundary? That part gets shakier fast.

Because here’s the ugly little contradiction sitting in the middle of it. Private state can hold the qualifying condition. Proof verification can pass. The accepted path can move. The disclosure boundary can stay closed. And the public side can still be left with something that is correct but not fully explainable.

Nothing broke.

That’s the part that keeps catching me. If something failed, easy, you debug. If something leaked, easy, you blame. Here everything is internally consistent and still somehow incomplete. Open state isn’t lying. It’s just not the whole story, because it was never meant to be.

And Midnight is basically saying: you don’t need the story, you need the proof.

Maybe that works. Maybe that is the point. But then let’s stop pretending those are interchangeable. Verification is not the same thing as understanding. Acceptance is not the same thing as reconstruction. And audit, on Midnight, might end up meaning something colder than people expect: the system can prove it was correct, while never giving you enough public material to tell yourself the story afterward.

That is a much stranger thing to rely on than “private by default” makes it sound.