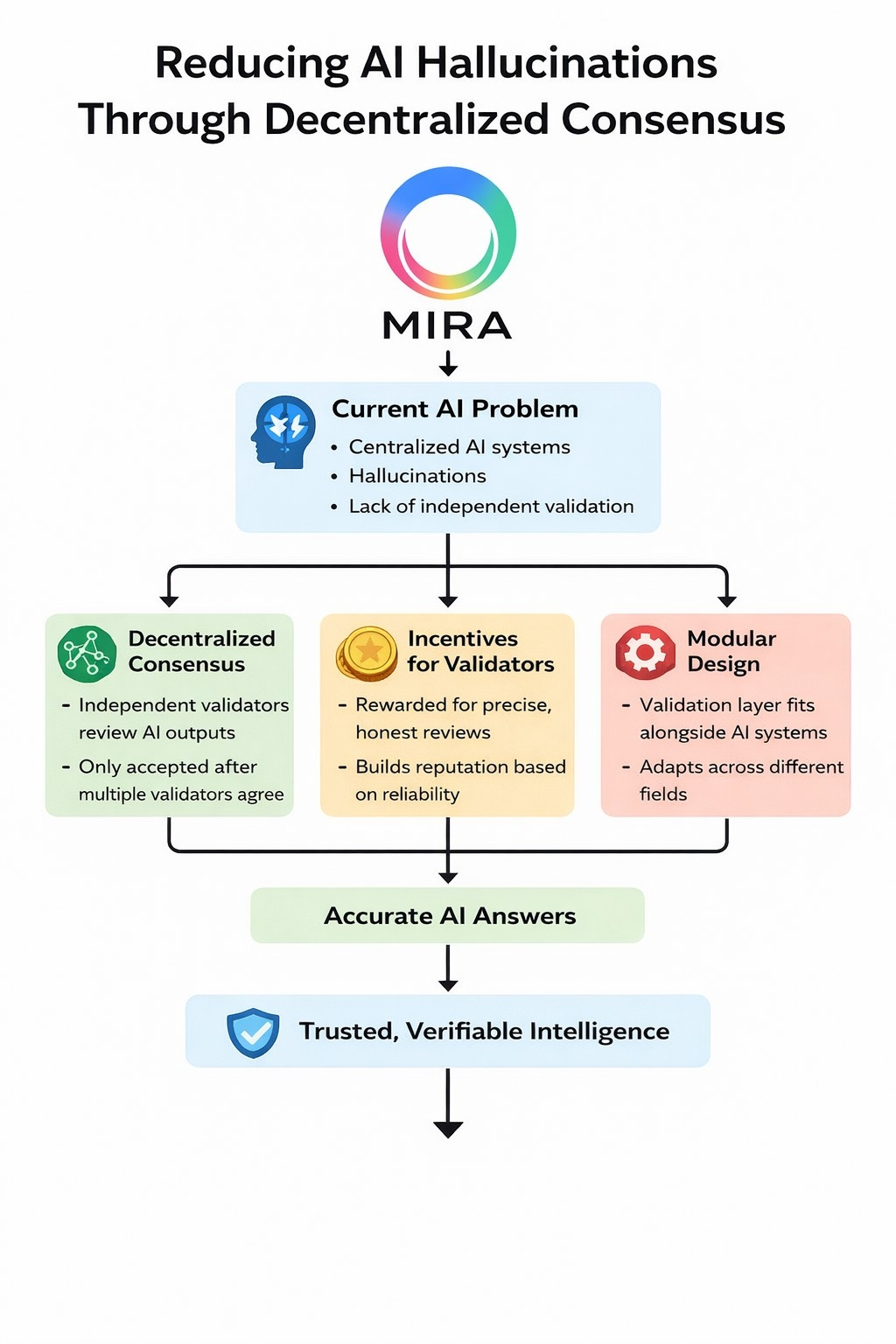

These days, the real problem with artificial intelligence isn’t speed or how much data it can chew through. It’s trust. Sure, AI gets smarter all the time, but it still runs headfirst into a stubborn problem: hallucinations. The model gives you an answer that looks sharp and sounds convincing, but the facts just aren’t there. Sometimes it’s flat-out wrong, sometimes made up. That’s not just a technical glitch; it’s a deeper flaw in how we design and check these systems.

Right now, most AI models live in centralized silos. One company builds, trains, and runs the thing. When you get an answer, you’re supposed to take it at face value. Maybe there’s a safety check or a confidence score tacked on, but rarely does anything outside that company step in to double-check the answer. Without that independent layer, hallucinations slip through, sometimes in places where mistakes can cost real money or even lives, like medicine, banking, or law.

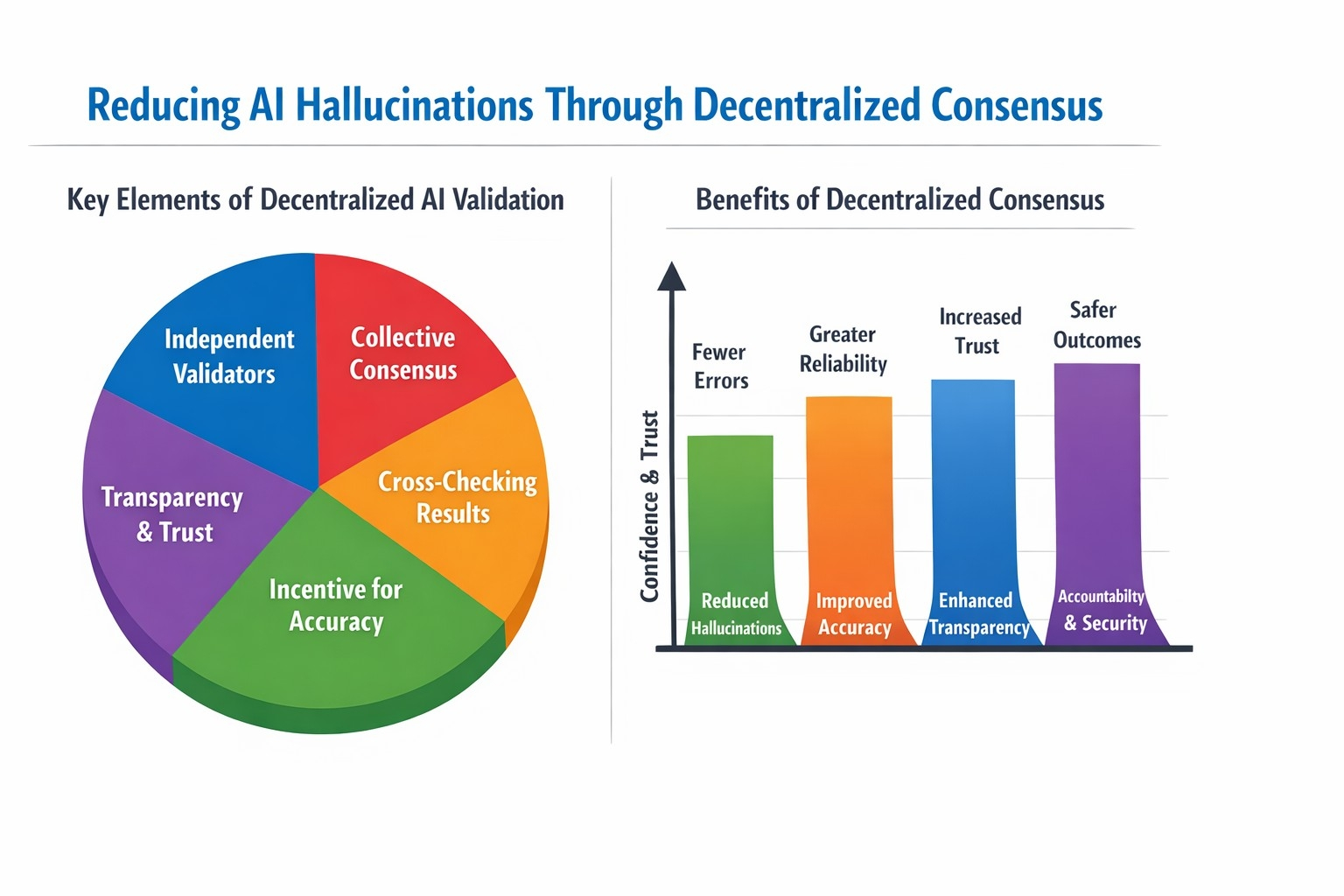

Fixing this isn’t just about feeding the model more data or making it bigger. The whole structure needs a rethink. Decentralized consensus points the way forward. Instead of letting one AI declare the answer, you bring in a network of independent validators who all look at the output. They cross-examine it, compare notes, and agree, or don’t, on whether it holds up to scrutiny. The system only accepts answers that pass this collective review, measured against explicit standards for accuracy.
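To make the idea concrete, here is a minimal sketch of a collective review in Python. Everything in it is an assumption for illustration: the names `reaches_consensus` and `make_validator`, the two-thirds threshold, and the toy validators that simply look a claim up in their own reference set. A real network would use far richer checks and its own acceptance rule.

```python
from typing import Callable, Iterable, Sequence

# A validator is any independent check that approves or rejects a claim.
Validator = Callable[[str], bool]


def reaches_consensus(
    claim: str,
    validators: Sequence[Validator],
    threshold: float = 2 / 3,  # assumed quorum; not specified by the article
) -> bool:
    """Accept the claim only if enough validators independently approve it."""
    if not validators:
        raise ValueError("at least one validator is required")
    approvals = sum(1 for validate in validators if validate(claim))
    return approvals / len(validators) >= threshold


def make_validator(known_facts: Iterable[str]) -> Validator:
    """Hypothetical toy validator: approves only claims in its own reference set."""
    facts = set(known_facts)
    return lambda claim: claim in facts


# Three validators, each with a different (overlapping) view of the facts.
validators = [
    make_validator({"water boils at 100 C at sea level"}),
    make_validator({"water boils at 100 C at sea level", "the moon orbits the earth"}),
    make_validator({"the moon orbits the earth"}),
]

accepted = reaches_consensus("water boils at 100 C at sea level", validators)
rejected = reaches_consensus("the moon is made of cheese", validators)
```

In this toy run, the first claim is approved by two of three validators and passes, while the made-up claim gets no approvals and is rejected. Raising `threshold` makes the network stricter at the cost of rejecting more true answers that only some validators can confirm.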

This flips AI from a secretive black box into something a lot more open and accountable. When many eyes, spread across different groups, watch the outputs, mistakes are harder to hide. Sure, consensus won’t catch every slip, but it cuts down the odds that nonsense gets mistaken for fact.

Incentives matter, too. Validators in a decentralized network get rewarded for honest, precise reviews, not just rubber-stamping answers. The more reliable you are, the better your reputation in the network. This creates a feedback loop: people have a reason to care about getting it right, and trust in the system grows as a result. It’s a sturdier foundation than just hoping for smarter models.
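The feedback loop can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `update_reputation`, the reward and penalty sizes, and the rule itself (a validator gains reputation when its vote matches the final outcome and loses it otherwise) are all hypothetical. Matching the outcome is a rough proxy for being honest and precise, not a guarantee of it.

```python
from typing import Dict


def update_reputation(
    reputation: Dict[str, float],
    votes: Dict[str, bool],
    outcome: bool,
    reward: float = 1.0,   # assumed gain for matching the final outcome
    penalty: float = 2.0,  # assumed loss for disagreeing with it
) -> Dict[str, float]:
    """Return new reputation scores after one round of review.

    Validators whose vote matched the accepted outcome are rewarded;
    the rest are penalized. Scores never drop below zero.
    """
    updated = dict(reputation)  # leave the caller's scores untouched
    for validator_id, vote in votes.items():
        current = updated.get(validator_id, 0.0)  # newcomers start at zero
        if vote == outcome:
            updated[validator_id] = current + reward
        else:
            updated[validator_id] = max(0.0, current - penalty)
    return updated


scores = update_reputation(
    reputation={"alice": 5.0, "bob": 1.0},
    votes={"alice": True, "bob": False, "carol": True},
    outcome=True,
)
```

Making the penalty larger than the reward is one possible design choice: it makes careless or rubber-stamp voting costly, since a single miss wipes out more than one correct review earns.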

The architecture needs to play well with others. Decentralized consensus shouldn’t bulldoze over existing AI setups; it should plug in alongside them. Developers can bolt on validation layers without slowing things down. That way, the system stays nimble and can adapt across different fields.
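One way to picture a bolt-on layer is a thin wrapper around whatever function already produces answers. The sketch below is hypothetical: `with_validation`, `CheckedAnswer`, and the stub model and validator are made-up names, and the validator stands in for a call out to an external network. The existing model code is left untouched; the wrapper only attaches a verdict to each answer.

```python
from typing import Callable, NamedTuple


class CheckedAnswer(NamedTuple):
    """An answer paired with the verdict of an external validation step."""
    text: str
    verified: bool


def with_validation(
    generate: Callable[[str], str],
    validate: Callable[[str], bool],
) -> Callable[[str], CheckedAnswer]:
    """Wrap an existing answer function so every output is checked."""
    def wrapped(prompt: str) -> CheckedAnswer:
        answer = generate(prompt)
        return CheckedAnswer(text=answer, verified=validate(answer))
    return wrapped


# Stand-ins for a real model and a real validator network (assumptions).
def stub_model(prompt: str) -> str:
    return "Paris" if "France" in prompt else "I am not sure"


def stub_validator(answer: str) -> bool:
    return answer in {"Paris", "Berlin", "Rome"}


checked_model = with_validation(stub_model, stub_validator)
result = checked_model("What is the capital of France?")
```

Because the wrapper takes plain callables, the same pattern could sit in front of different models or different validators. How much latency the validation call adds depends entirely on what `validate` does in practice.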

Really, if AI’s going to earn a place in critical systems, it needs verifiable intelligence, not just clever algorithms. Cutting down hallucinations isn’t a side quest; it’s a redesign of how we build trust. Decentralized consensus moves AI from “just trust us” to “here’s the proof.” As AI seeps deeper into areas where the stakes are high, this kind of overhaul won’t just help; it’ll be essential.

@Mira - Trust Layer of AI $MIRA #Mira