Most AI systems today have one major weakness.

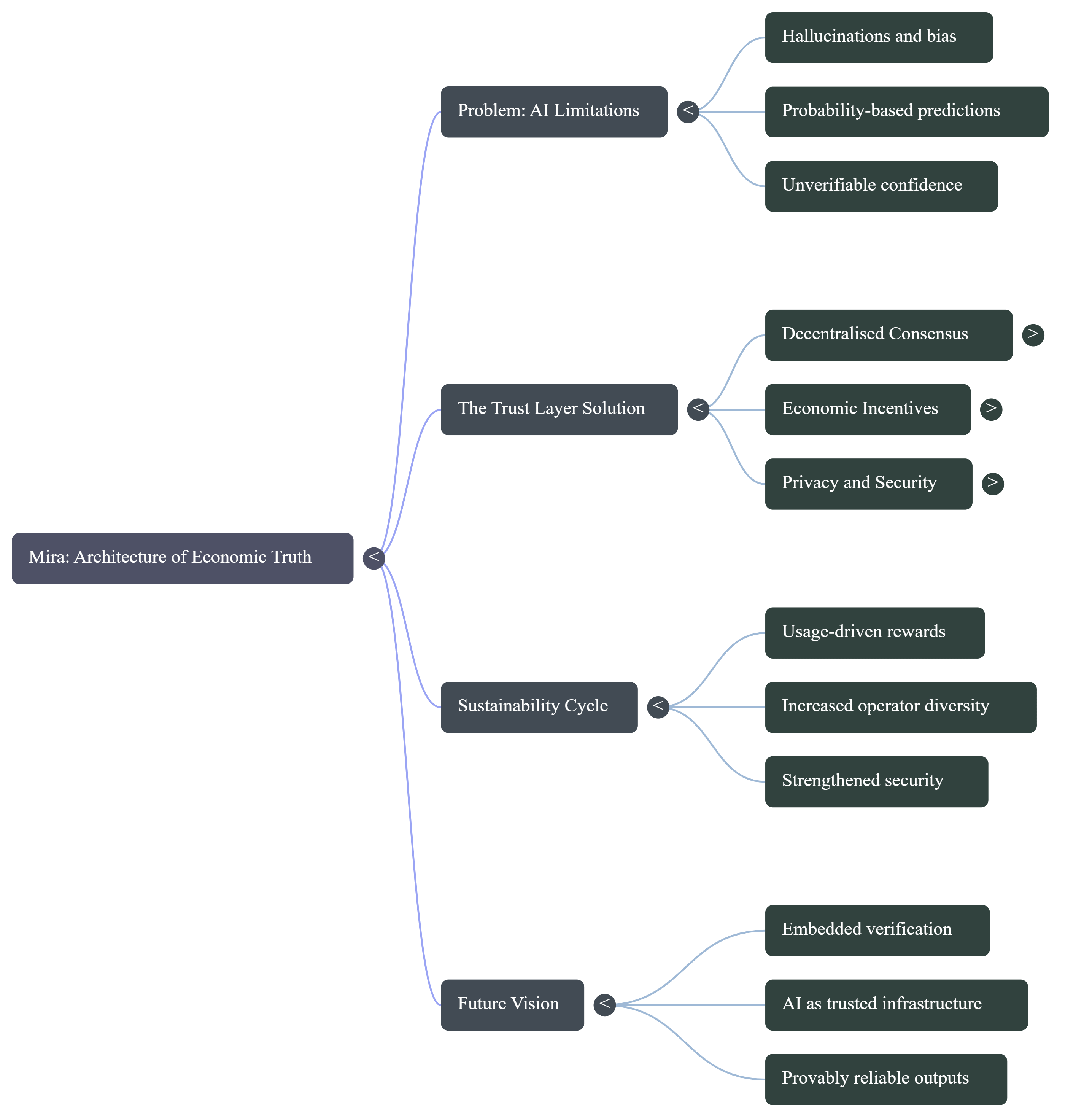

They generate answers that sound confident, but they cannot guarantee those answers are correct. Hallucinations and bias are not rare glitches. They come from how large models are built. These systems predict probabilities, not verified truths. Even when fine-tuned, there appears to be a minimum error rate that a single model cannot fully eliminate.

Mira starts with a different belief.

Instead of trying to build one perfect model, it builds a system where multiple models verify each other.

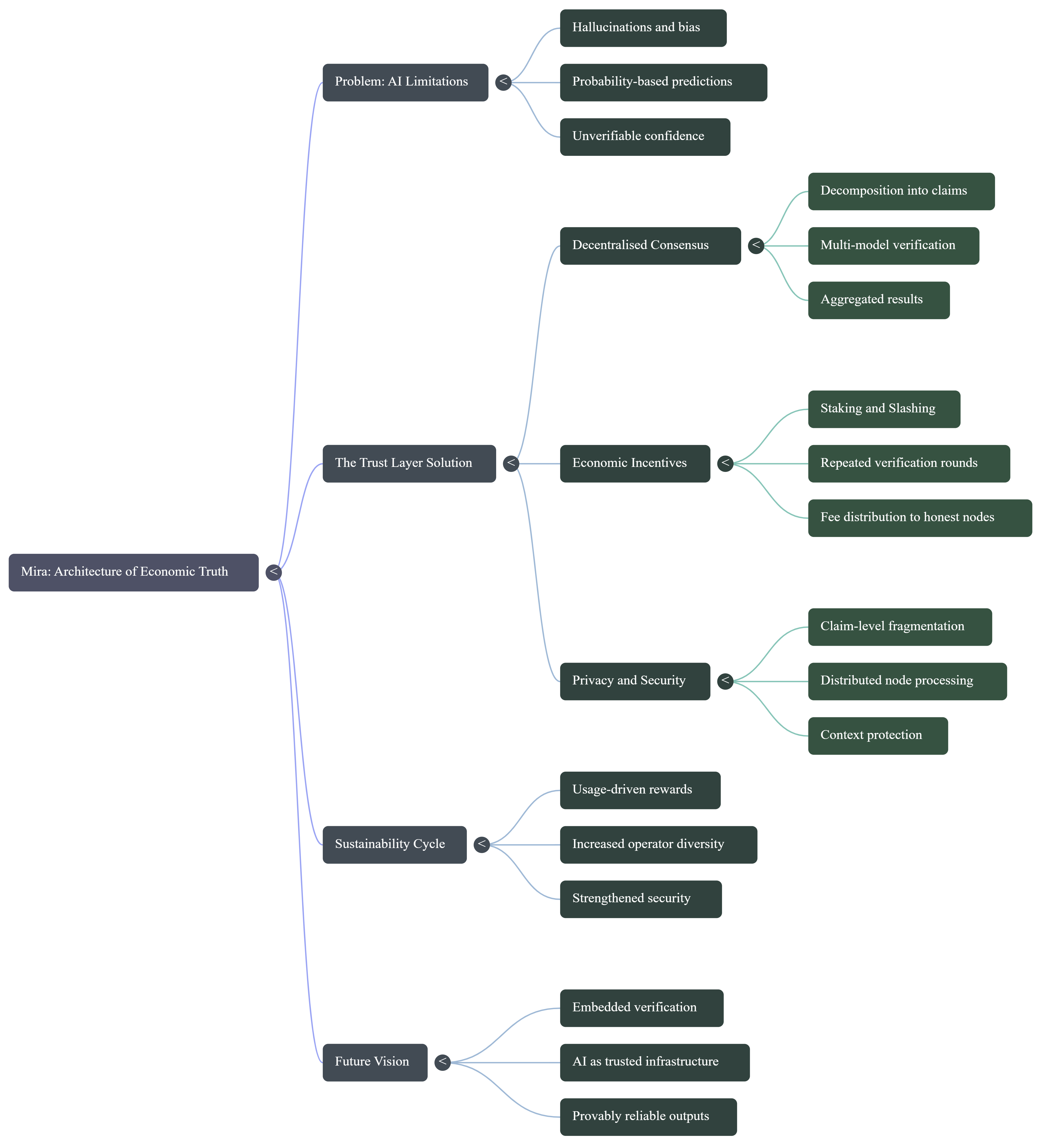

According to the @Mira - Trust Layer of AI whitepaper, $MIRA transforms AI output into smaller, independent claims. For example, a long paragraph is broken into clear factual statements. Each statement is then sent to different verifier nodes. These nodes run their own inference and submit a judgment. The network aggregates the responses and produces a consensus result.

This process reduces both hallucination and bias because no single model controls the outcome.
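To make the flow concrete, here is a minimal sketch of claim-level consensus. It is only an illustration, not Mira's actual protocol: the function names, the string verdict labels, and the two-thirds agreement threshold are all assumptions, and each verifier is modeled as a plain function standing in for a node running its own model.

```python
from collections import Counter

def aggregate_verdicts(verdicts, threshold=2 / 3):
    """Return the majority verdict if its share meets the threshold, else None.

    The threshold value is an illustrative assumption, not a Mira parameter.
    """
    if not verdicts:
        return None
    label, count = Counter(verdicts).most_common(1)[0]
    return label if count / len(verdicts) >= threshold else None

def verify_output(claims, verifiers, threshold=2 / 3):
    """Send every claim to every verifier and aggregate the judgments.

    `verifiers` is a list of callables; each one stands in for a verifier
    node that runs its own inference and returns a verdict string.
    """
    results = {}
    for claim in claims:
        verdicts = [verify(claim) for verify in verifiers]
        results[claim] = aggregate_verdicts(verdicts, threshold)
    return results
```

A claim that fails to reach the threshold comes back as `None`, which a caller could treat as "unverified" rather than true or false.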

But verification alone is not enough. Incentives matter.

$MIRA combines inference-based work with staking. Validators must lock value to participate in the network. If they try to game the system by guessing answers or submitting careless responses, they risk losing their stake through slashing.

The whitepaper explains why this is necessary. Because verification questions can sometimes look like multiple-choice tasks, random guessing might seem attractive. Mira addresses this by requiring repeated verification rounds and economic penalties. The probability of consistently guessing correctly drops sharply over multiple checks, making dishonest behavior statistically and economically irrational.
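The arithmetic behind that claim is easy to check. If each verification has k equally likely answer options and rounds are independent, a pure guesser gets all n rounds right with probability (1/k)^n. The sketch below computes that, plus a toy expected payoff. The payoff rule (earn the reward only if every guess is right, lose the whole stake otherwise) and the reward and stake numbers are assumptions made for illustration, not Mira's actual slashing rules.

```python
def p_all_guesses_correct(num_options, rounds):
    """Probability that uniform random guessing is right in every round."""
    return (1 / num_options) ** rounds

def expected_payoff(p_success, reward, stake):
    """Toy model: win `reward` with probability p_success, else lose `stake`.

    This all-or-nothing rule is a simplifying assumption for illustration.
    """
    return p_success * reward - (1 - p_success) * stake

# Binary (true/false) claims checked over 10 independent rounds.
p = p_all_guesses_correct(num_options=2, rounds=10)   # 1/1024
# Hypothetical numbers: reward of 10 units, stake of 100 units.
payoff = expected_payoff(p, reward=10, stake=100)      # negative
```

Under these assumed numbers the guesser's expected payoff is clearly negative, which is the sense in which guessing becomes irrational.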

This is how sustainability is built.

Users pay fees for verified outputs. Those fees are distributed to honest node operators who perform real inference work. As usage grows, rewards grow. As rewards grow, more operators join. As operator diversity increases, bias decreases and security strengthens. It becomes a reinforcing cycle.

Another important layer is privacy. Instead of sending full documents to a single validator, content is broken into claim-level fragments and distributed across nodes. No single participant sees the entire context. This protects sensitive information while still allowing verification.
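One way to picture this is a simple assignment routine. The sketch below is hypothetical: it uses deterministic round-robin placement with a fixed number of replicas per claim, which is an assumption chosen for readability and not the distribution scheme described in the whitepaper. The `max_exposure` helper reports the largest share of claims any one node holds, so a caller can confirm that no node sees everything.

```python
def assign_claims(claims, nodes, replicas=2):
    """Give each claim to `replicas` distinct nodes, round-robin.

    Returns a dict mapping node -> list of claims it will verify.
    """
    if replicas > len(nodes):
        raise ValueError("replicas cannot exceed the number of nodes")
    assignment = {node: [] for node in nodes}
    for i, claim in enumerate(claims):
        for r in range(replicas):
            node = nodes[(i + r) % len(nodes)]
            assignment[node].append(claim)
    return assignment

def max_exposure(assignment, total_claims):
    """Largest fraction of all claims held by any single node."""
    return max(len(held) for held in assignment.values()) / total_claims

claims = [f"claim-{i}" for i in range(6)]
nodes = ["n0", "n1", "n2", "n3", "n4"]
assignment = assign_claims(claims, nodes, replicas=2)
exposure = max_exposure(assignment, len(claims))
```

Round-robin alone does not guarantee privacy. With few nodes or many replicas a node could still receive every claim, so checking the exposure value before dispatch is the important part of the sketch.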

The long-term vision goes further. Mira aims to move from verifying outputs after generation to embedding verification directly into the generation process. That means building AI systems where validation is part of how the answer is created, not an afterthought.

In simple terms, Mira treats truth as something that must be economically secured.

Not assumed.

Not centralized.

But verified through decentralized consensus and aligned incentives.

As AI becomes infrastructure for finance, healthcare, law, and automation, trust cannot depend on confidence alone. It must depend on systems that reward honesty and penalize manipulation.

That is the foundation Mira is trying to build.

Systems like $MIRA aim to build the trust layer that makes AI outputs not just persuasive, but provably reliable.

#Mira #MİRA #AIToken #cforcrypto