AI is moving fast, maybe faster than any tech we’ve seen so far. Large language models and autonomous agents can reason, whip up code, summarize info, and make predictions across all sorts of industries. But under all that hype, there’s still a big problem: reliability. AI doesn’t really “know” things; it generates answers from patterns it has seen before. That’s why you get hallucinations, bias, mistakes, and sometimes logic that just doesn’t add up.

If you’re using AI to draft emails or brainstorm creative ideas, these issues aren’t the end of the world. But in places like healthcare, finance, robotics, or law, even a small error can turn into a disaster. Confident-sounding but unreliable AI? That’s a recipe for trouble, especially when lives or big money are on the line. This is exactly the gap Mira Network aims to close.

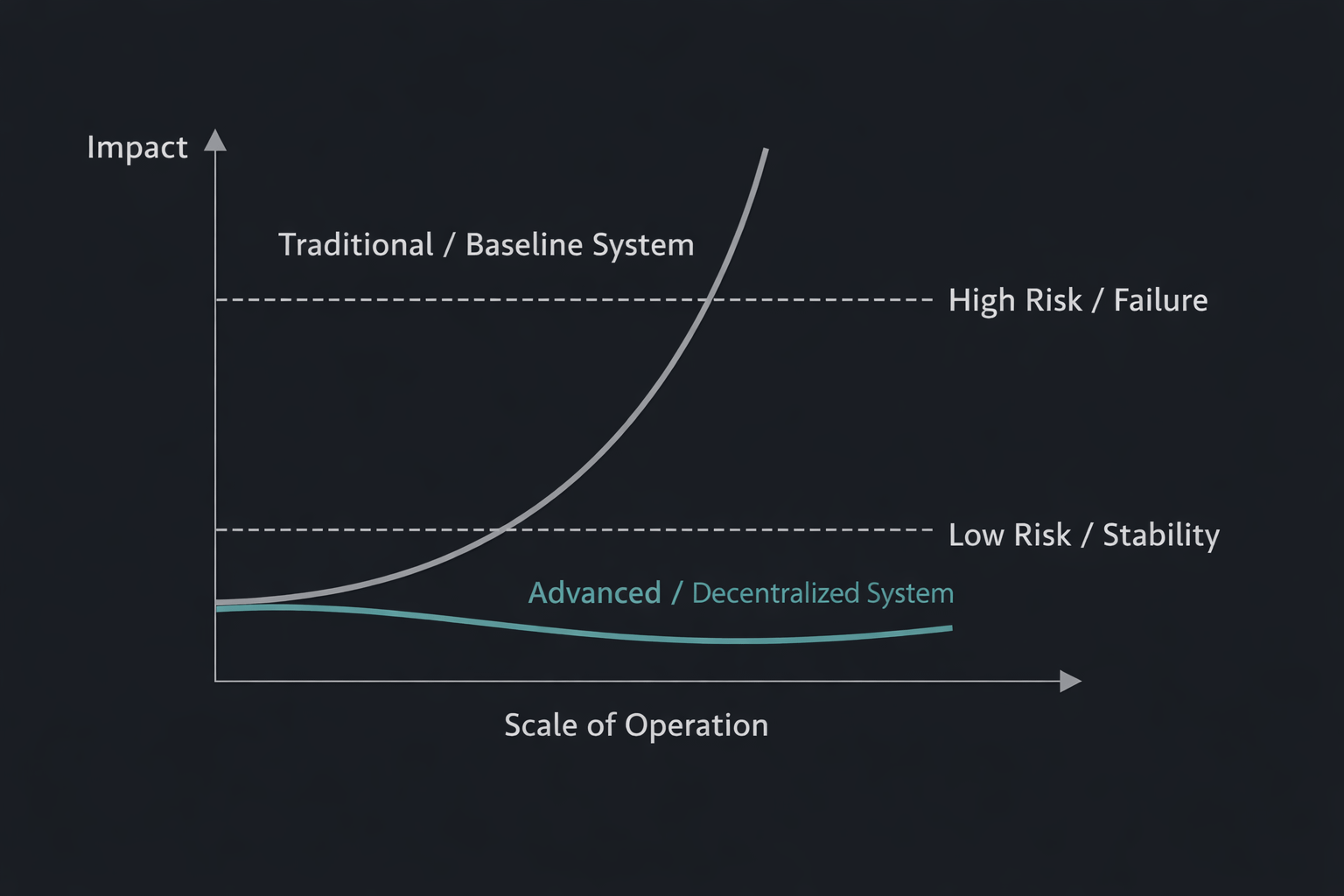

Right now, most AI systems rely on centralized checks: human reviewers, internal audits, or closed-off test environments. Sure, these improve quality, but they don’t scale. Plus, you’re forced to trust a single company to do the right thing. As AI gets more independent, this “just trust us” model falls apart. Honestly, it’s wild how many people overlook how vital real verification is.

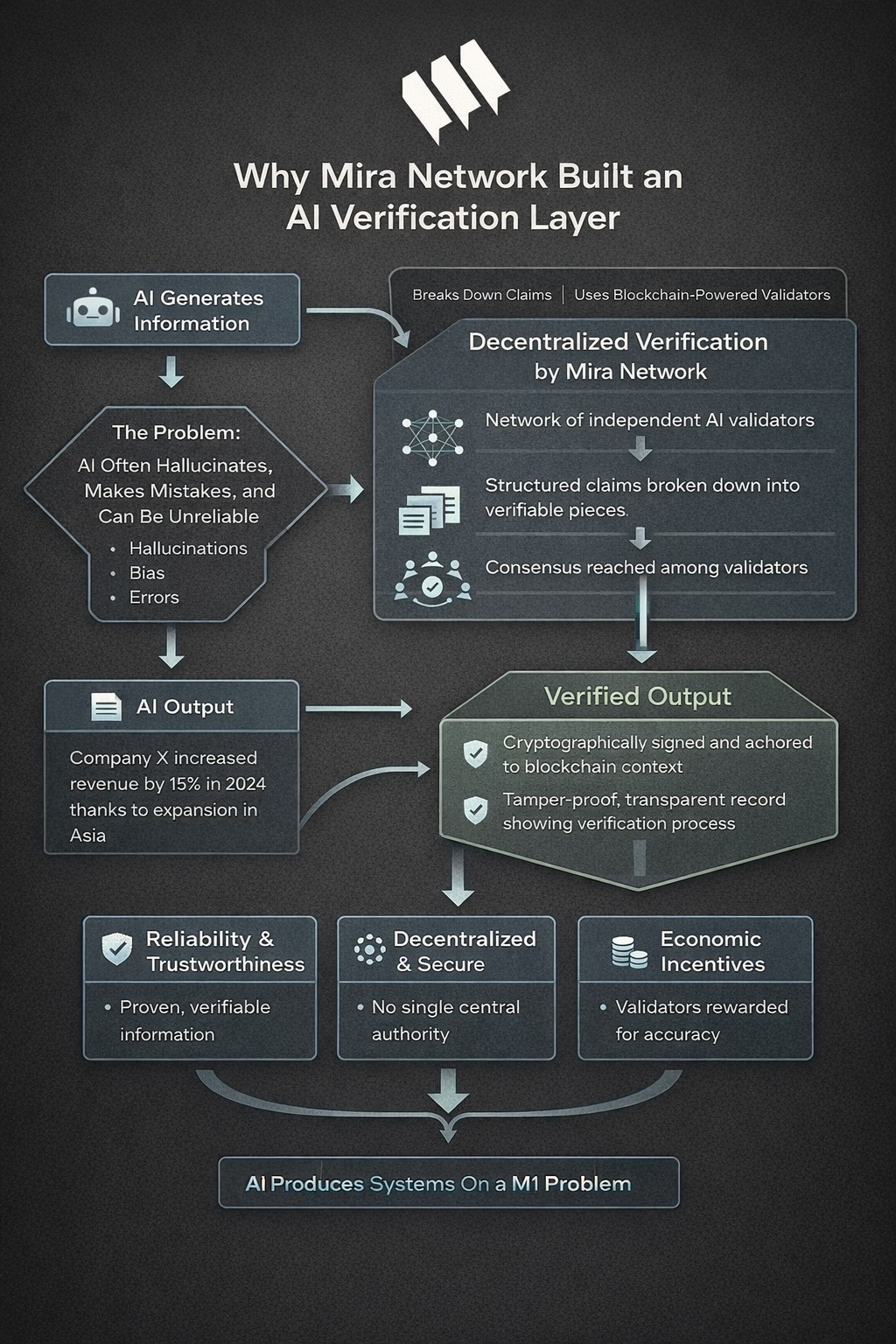

That’s where Mira Network steps in. They’ve built a decentralized verification protocol to make AI more trustworthy, no single gatekeeper required. At its heart, Mira works as a blockchain-powered verification layer for AI outputs. Instead of just taking an AI model’s answer as gospel, Mira breaks down big outputs into smaller, structured claims and spreads them out over a decentralized network.

Let’s say an AI says, “Company X increased revenue by 15% in 2024 thanks to expansion in Asia.” Mira doesn’t treat that as one chunk. It pulls apart each fact (revenue growth, the year, the reason, the location) so each piece can be checked on its own. This makes it way easier to spot errors or exaggerations.
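To make that decomposition concrete, here’s a minimal Python sketch of what the four claims from the example sentence might look like as structured data. The `Claim` class, its field names, and the `decompose_example` helper are hypothetical and written by hand for illustration; they are not Mira’s actual data model or API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical structure)."""
    subject: str
    predicate: str
    value: str


def decompose_example() -> List[Claim]:
    """Hand-written decomposition of the example sentence.

    A real system would need a model or parser to produce this
    automatically; this function only shows the target shape.
    """
    return [
        Claim("Company X", "revenue_change", "+15%"),
        Claim("Company X", "revenue_change_year", "2024"),
        Claim("Company X", "growth_driver", "expansion"),
        Claim("Company X", "expansion_region", "Asia"),
    ]


claims = decompose_example()
for claim in claims:
    # Each line is a unit that could be sent to validators separately.
    print(f"{claim.subject} | {claim.predicate} | {claim.value}")
```

The point of the split is that a validator can reject “+15%” without having to reject “2024” or “Asia” along with it.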

Multiple independent AI validators on the network review every claim. They use a consensus system to decide whether a claim checks out, doesn’t, or needs more info. If one validator gets it wrong, the others can catch the slip-up. So instead of trusting a single model, you get credibility from the whole network agreeing.
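Here’s a rough sketch of how a vote like that could be tallied. The three verdict labels mirror the outcomes described above, but the two-thirds supermajority threshold and the `consensus` function are assumptions made for illustration; the article doesn’t specify the rule Mira actually uses.

```python
from collections import Counter
from fractions import Fraction

# Labels for the three outcomes described in the text (names are assumed).
VERDICTS = ("valid", "invalid", "uncertain")


def consensus(votes, threshold=Fraction(2, 3)):
    """Return the verdict that reaches `threshold` of all votes.

    Falls back to "uncertain" (needs more info) when there are no
    votes or no verdict reaches the threshold. The 2/3 default is an
    illustrative assumption, not a documented protocol parameter.
    """
    for vote in votes:
        if vote not in VERDICTS:
            raise ValueError(f"unknown verdict: {vote!r}")
    if not votes:
        return "uncertain"
    verdict, count = Counter(votes).most_common(1)[0]
    if Fraction(count, len(votes)) >= threshold:
        return verdict
    return "uncertain"


# One validator disagrees, but the other two still carry the claim.
print(consensus(["valid", "valid", "invalid"]))
# An even split does not reach the threshold.
print(consensus(["valid", "invalid"]))
```

Using exact fractions avoids floating-point edge cases right at the threshold, which matters when two out of three votes should count as exactly two thirds.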

Once the network reaches consensus, the verified output gets cryptographically signed and anchored to the blockchain. That creates a permanent, tamper-proof record showing the claim passed decentralized verification. You don’t just have to take the answer at face value; you can see the whole process that led to it. It turns AI predictions into digital assets you can actually trust.
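The sketch below shows the general idea behind signing and tamper detection using only Python’s standard library. It is heavily simplified: the record fields are made up, and HMAC with a shared key stands in for the public-key signatures a real network would use. Actually writing the digest to a blockchain is out of scope here.

```python
import hashlib
import hmac
import json


def record_digest(record: dict) -> str:
    """SHA-256 digest of a canonical JSON encoding of the record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def sign(digest: str, key: bytes) -> str:
    """HMAC-SHA256 tag over the digest.

    Assumption: a stand-in for an asymmetric signature, chosen only
    because it needs no third-party library.
    """
    return hmac.new(key, digest.encode("utf-8"), hashlib.sha256).hexdigest()


def verify(record: dict, signature: str, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign(record_digest(record), key)
    return hmac.compare_digest(expected, signature)


# Hypothetical verification record; field names are illustrative.
record = {"claim": "Company X revenue_change +15%", "verdict": "valid"}
key = b"demo-key-not-for-production"
signature = sign(record_digest(record), key)

print(verify(record, signature, key))    # original record
tampered = dict(record, verdict="invalid")
print(verify(tampered, signature, key))  # edited after signing
```

Because the digest depends on every field, changing even the verdict after the fact makes verification fail. That is the property an on-chain anchor is meant to preserve.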

Mira also bakes in economic incentives. Validators earn rewards for being accurate and get penalized for dishonesty. This nudges everyone to do the right thing, much like the way blockchains use incentives to keep themselves secure.
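As a toy illustration of that reward-and-penalty loop, the sketch below pays validators who matched the final outcome and slashes a share of the stake of those who didn’t. Everything here is assumed for the example: the `settle` function, the flat reward, the 10% slash rate, and treating “disagreed with consensus” as a proxy for dishonesty. The article doesn’t give Mira’s real parameters.

```python
def settle(stakes, votes, outcome, reward=1, slash_bps=1000):
    """Return updated stakes after one verification round.

    stakes:    validator name -> stake in whole token units (int)
    votes:     validator name -> verdict that validator submitted
    outcome:   the verdict the network settled on
    reward:    flat payout for matching the outcome (assumed value)
    slash_bps: penalty in basis points of stake (1000 = 10%, assumed)
    """
    updated = {}
    for validator, stake in stakes.items():
        vote = votes.get(validator)
        if vote is None:
            # Did not participate: stake is left unchanged.
            updated[validator] = stake
        elif vote == outcome:
            updated[validator] = stake + reward
        else:
            updated[validator] = stake - (stake * slash_bps) // 10_000
    return updated


stakes = {"alice": 100, "bob": 100, "carol": 100}
votes = {"alice": "valid", "bob": "invalid"}  # carol abstained
print(settle(stakes, votes, outcome="valid"))
```

Integer stakes and basis points keep the arithmetic exact, which is the usual choice when balances have to match to the last unit.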

In the end, Mira’s trustless consensus model gives us something new: autonomous trust. No one company holds all the cards. Reliability comes from the network’s collective agreement, cryptographic proof, and honest incentives. If we want autonomous systems in places like finance or medicine, AI has to be verifiable; there’s no way around it. Mira isn’t just another tool; it’s laying the groundwork for a future where AI isn’t just smart, but also secure, open, and dependable.

@Mira - Trust Layer of AI $MIRA #Mira