The more I observe the AI industry, the more one question stays in my mind:

Can we truly trust the outputs we are building entire systems around?

AI today is undeniably powerful. I use it daily. I see how efficiently it processes information, generates insights, and supports decision-making. But I’ve also noticed something fundamental.

Even the most advanced models can be confidently wrong.

Not as an occasional glitch. Structurally.

Generative AI systems are probabilistic by design. They predict likely patterns; they do not check those predictions against ground truth, and they do not possess certainty. Hallucinations are not simple bugs. They are a byproduct of how generative models work.

For casual use, this may not be catastrophic. But when AI is integrated into trading strategies, DAO governance, risk modeling, research pipelines, or automated execution systems, the margin for error narrows dramatically.

Intelligence without verification creates hidden systemic risk.

Yet most discussions in the industry revolve around model size, inference speed, and benchmark scores. We celebrate capability.

We rarely prioritize reliability.

That gap is what caught my attention — and what led me to Mira.

The Structural Weakness in Today’s AI Model

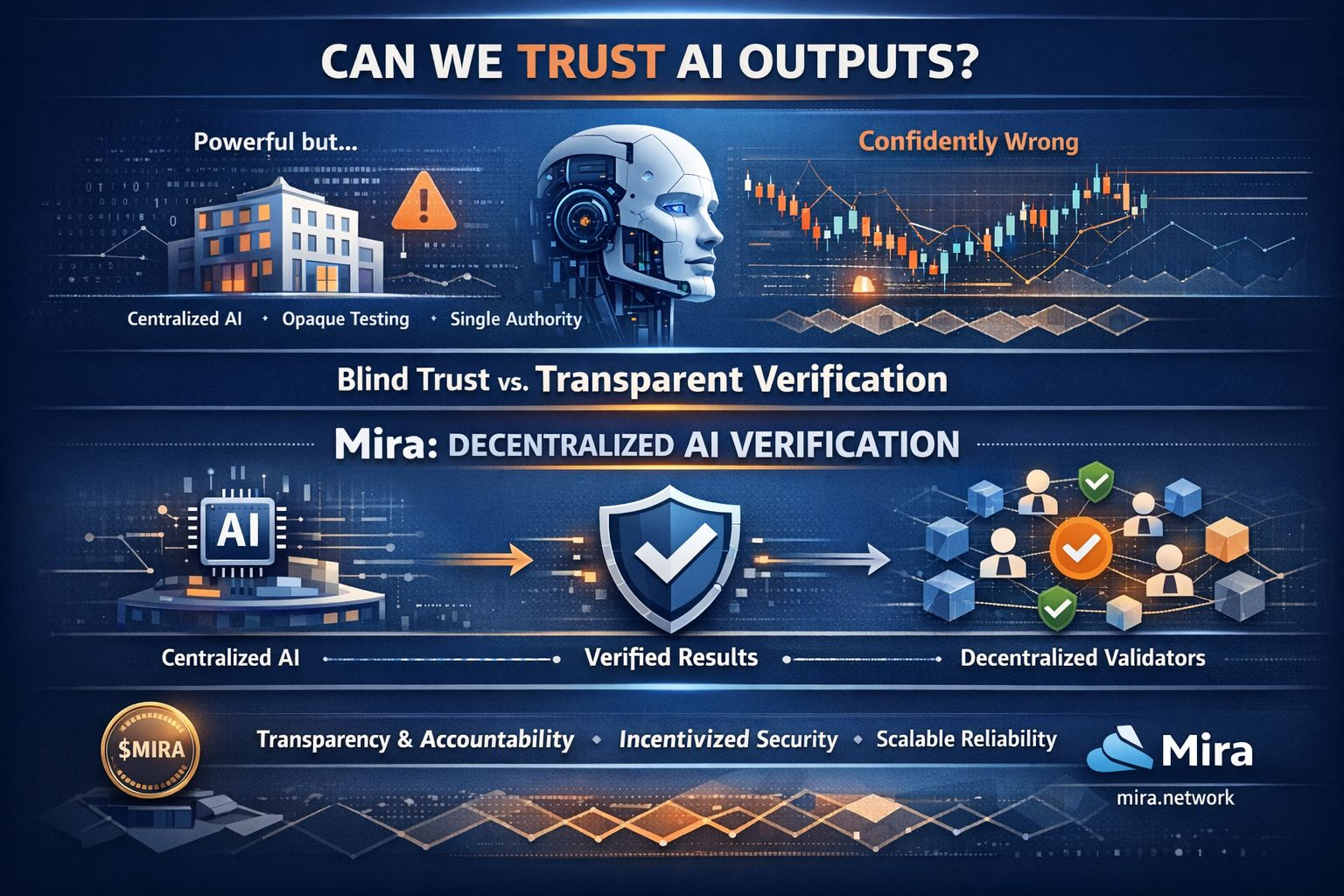

In most centralized AI systems, the same organization builds the model, evaluates it, defines accuracy standards, and deploys it.

Validation happens internally.

From an efficiency standpoint, this makes sense. From a structural standpoint, it creates dependency. Users are required to trust processes they cannot independently observe or audit.

Trust and verification are not the same thing.

Now consider blockchain ecosystems. Transparency is embedded in their architecture. Transactions are verifiable. Smart contracts are auditable. Consensus mechanisms minimize blind reliance on a single authority.

If AI is going to integrate deeply into Web3, DeFi, and autonomous agent systems, shouldn’t it follow similar principles?

Otherwise, we create a contradiction:

Decentralized infrastructure powered by opaque intelligence.

Mira’s Role: Separating Generation from Validation

What stands out to me about Mira is its focus on decentralized AI verification at the protocol level.

Mira does not attempt to compete in the race for the largest model. Instead, it addresses a more foundational problem — how to verify AI outputs before they influence high-stakes systems.

The key insight is structural separation:

Generating an answer and validating an answer are two different responsibilities.

By distributing verification across a decentralized network, Mira reduces reliance on a single authority. It introduces transparency and accountability into the evaluation process.
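To make the separation concrete, here is a minimal sketch of the idea in Python. It is not Mira's actual protocol: the `Verifier` type, the `verify_claim` function, and the two-thirds threshold are all assumptions made for illustration. The only point is that whatever produced the claim plays no part in judging it, and no single verifier decides the outcome.

```python
from collections import Counter
from typing import Callable, List, Optional

# Hypothetical verifier: any independent function that inspects a claim
# and returns a verdict such as "valid" or "invalid".
Verifier = Callable[[str], str]


def verify_claim(
    claim: str,
    verifiers: List[Verifier],
    threshold: float = 2 / 3,  # assumed supermajority, not a Mira parameter
) -> Optional[str]:
    """Return the verdict backed by at least `threshold` of the verifiers.

    Returns None when no verdict reaches the threshold, so the caller can
    refuse to act on an unverified output.
    """
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = Counter(verifier(claim) for verifier in verifiers)
    verdict, count = votes.most_common(1)[0]
    return verdict if count / len(verifiers) >= threshold else None


# Toy usage: three independent checkers, one of which disagrees.
checkers = [lambda c: "valid", lambda c: "valid", lambda c: "invalid"]
result = verify_claim("ETH closed above its 30-day average", checkers)
print(result)  # valid (2 of 3 meets the assumed two-thirds threshold)
```

Returning `None` instead of a best guess is the design choice that matters here: when the network cannot agree, the downstream system gets no answer to act on.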

This is not a cosmetic add-on. It is infrastructure.

If AI agents are going to execute trades, influence governance decisions, or interact with smart contracts, their outputs need a verification layer that aligns with the transparency standards of blockchain systems.

Mira positions itself as that layer.

Incentives, Accountability, and Scale

Another critical dimension is incentive alignment.

Verification cannot depend on goodwill alone. A sustainable network requires economic structure. $MIRA coordinates participation, and that is what introduces accountability into the system: validators are incentivized to act honestly and accurately, while dishonest behavior becomes economically disadvantageous.

This transforms verification from a theoretical concept into an enforceable mechanism.
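As a rough illustration of what "economically disadvantageous" can mean, the sketch below settles one verification round with a generic stake-and-slash scheme. This is a common pattern in staking networks, not a description of how $MIRA actually works. The `Validator` class, `settle_round` function, `reward_pool`, and `slash_rate` are all hypothetical names and values.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: float  # tokens the validator has put at risk


def settle_round(
    validators: List[Validator],
    votes: Dict[str, str],     # validator name -> verdict
    reward_pool: float = 10.0,  # assumed reward per round
    slash_rate: float = 0.2,    # assumed penalty fraction
) -> str:
    """Reward validators who match the stake-weighted majority; slash the rest."""
    # Sum the stake behind each verdict.
    weight: Dict[str, float] = {}
    for v in validators:
        weight[votes[v.name]] = weight.get(votes[v.name], 0.0) + v.stake

    # The verdict with the most stake wins (ties go to the first one seen).
    consensus = max(weight, key=weight.get)
    winning_stake = weight[consensus]

    for v in validators:
        if votes[v.name] == consensus:
            # Share the reward pool in proportion to stake.
            v.stake += reward_pool * v.stake / winning_stake
        else:
            # Lose a fraction of stake for voting against consensus.
            v.stake -= slash_rate * v.stake
    return consensus


# Toy usage: two validators agree, one dissents.
vals = [Validator("a", 100.0), Validator("b", 100.0), Validator("c", 50.0)]
outcome = settle_round(vals, {"a": "valid", "b": "valid", "c": "invalid"})
print(outcome, [v.stake for v in vals])  # valid [105.0, 105.0, 40.0]
```

Even in this toy version the incentive is visible: voting with the honest majority grows a validator's stake, and voting against it shrinks it. A real design would also need to handle collusion and honest minorities, which this sketch ignores.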

And that matters for scale.

As AI adoption accelerates, enterprises and institutions will demand more than performance metrics. They will demand provable reliability. Regulators will demand accountability. Capital allocators will demand risk mitigation frameworks.

At that stage, verification will not be optional.

It will be expected.

A Necessary Evolution in AI Infrastructure

I am not against centralized AI. It has driven extraordinary innovation. But centralization alone is not sufficient for the next phase of adoption — especially as AI becomes financially integrated and increasingly autonomous.

Blind trust becomes a structural weakness.

Decentralized verification represents a philosophical and architectural shift. It moves AI from a “trust us” model to a “verify it” model.

That distinction may seem subtle today.

But over the long term, it may determine which AI ecosystems are resilient, scalable, and sustainable.

When I think about the future of AI in Web3, I don’t just imagine smarter agents.

I imagine accountable agents.

Systems where outputs are not only generated efficiently, but validated transparently before they influence capital, governance, or automation.

That is why Mira stands out to me.

Because if AI is going to power the next generation of decentralized systems, reliability cannot be assumed.

It has to be built into the foundation.