There is a hidden cost in crypto markets that I think about often. I call it coordination leakage. It’s the slow erosion of alignment between what a system promises at the interface and what it actually guarantees at the infrastructure layer. Most users never see it. Traders feel it as slippage. Builders feel it as friction. Over time, it compounds into doubt.

When I look at Fabric Protocol, what stands out to me is that it is not trying to solve a token problem. It is trying to solve a coordination problem. And coordination, especially between humans and machines, is less about throughput and more about discipline.

Fabric Protocol positions itself as a global open network for the construction and governance of general-purpose robots, supported by the non-profit Fabric Foundation. On the surface, that sounds like robotics infrastructure. But structurally, it is about verifiable computation and shared state across machines that must act in the real world. That changes the stakes.

Decentralization loses meaning the moment data ownership recentralizes. I’ve seen this in trading systems repeatedly. A decentralized exchange can settle on-chain, but if price feeds are controlled by a narrow oracle committee, execution reality collapses into trust assumptions. You can sign transactions yourself. You can hold your own keys. But if the data driving liquidation logic or robotic instruction is effectively centralized, you are still downstream from someone else’s latency.

That is where Fabric’s architecture becomes interesting.

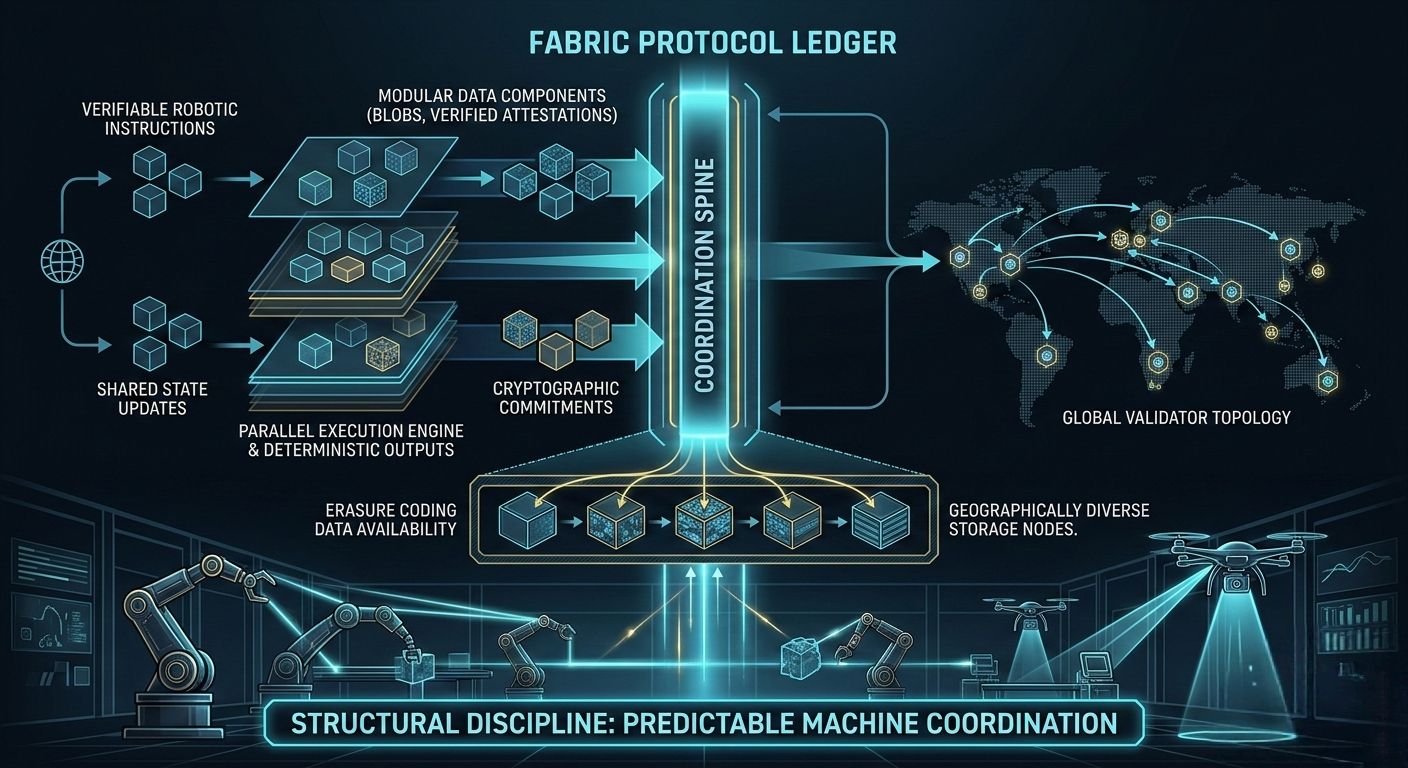

The protocol coordinates data, computation, and regulation through a public ledger. But coordination is not just about writing states to a chain. It is about how data is fragmented, verified, and made available. If robotic agents are operating under verifiable computing assumptions, their outputs must be decomposed into attestable components. This is not unlike how erasure coding distributes fragments of data across nodes to preserve availability while limiting single points of failure. What matters is not just redundancy, but who can reconstruct truth under stress.
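To make the erasure-coding analogy concrete, here is a minimal Python sketch of the simplest possible scheme. It splits a payload into `k` fragments and adds one XOR parity fragment, so any single lost fragment can be rebuilt from the rest. This is my illustration, not Fabric's design. Production systems typically use Reed-Solomon codes that tolerate many simultaneous losses, and the function names here are hypothetical.

```python
from functools import reduce
from typing import List, Optional

# Hypothetical helpers illustrating (k + 1, k) XOR-parity erasure coding; not Fabric Protocol code.


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal-size fragments (zero-padded) plus one XOR parity fragment."""
    if k < 1:
        raise ValueError("k must be at least 1")
    size = max(1, -(-len(data) // k))  # ceiling division; at least one byte per fragment
    padded = data.ljust(size * k, b"\x00")
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, fragments)
    return fragments + [parity]


def reconstruct(shards: List[Optional[bytes]], original_len: int) -> bytes:
    """Rebuild the original data when at most one of the k + 1 shards is missing (None)."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single XOR parity can only recover one lost fragment")
    shards = list(shards)
    if missing:
        # XOR of all surviving shards (data + parity) equals the missing one.
        shards[missing[0]] = reduce(xor_bytes, [s for s in shards if s is not None])
    return b"".join(shards[:-1])[:original_len]  # drop parity, strip padding


message = b"robot-7: move arm to (0.42, 1.10)"
shards = encode(message, k=4)   # four data fragments + one parity fragment
shards[2] = None                # one storage node goes offline
assert reconstruct(shards, len(message)) == message
```

The limit is the interesting part: lose two fragments here and the data is gone. Who can reconstruct truth depends entirely on how much redundancy the network is willing to pay for.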

In market terms, I think about oracle delays during volatile sessions. A small lag between a centralized exchange and an on-chain oracle can trigger cascading liquidations. Traders don’t see the delay; they see forced exits. They internalize it as “risk.” In reality, it’s a coordination failure between data arrival and state execution.
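A toy model shows how the same position can be safe or liquidated depending only on which price arrives first. The 0.8 liquidation threshold, the 30-second staleness bound, and every name below are assumptions for illustration. Real lending protocols each define their own rules.

```python
from dataclasses import dataclass

# Hypothetical liquidation logic for illustration only; parameters are assumptions.


@dataclass
class PriceUpdate:
    price: float      # oracle-reported price of the collateral asset
    timestamp: float  # when the price was observed at its source, in seconds


def health_factor(collateral_units: float, debt: float, price: float,
                  liquidation_threshold: float = 0.8) -> float:
    """Threshold-adjusted collateral value over debt; below 1.0 means liquidatable."""
    return collateral_units * price * liquidation_threshold / debt


def should_liquidate(collateral_units: float, debt: float, update: PriceUpdate,
                     now: float, max_age: float = 30.0) -> bool:
    """Decide on a liquidation, refusing to act on a price older than max_age seconds."""
    if now - update.timestamp > max_age:
        raise RuntimeError("oracle price is stale; not safe to execute on it")
    return health_factor(collateral_units, debt, update.price) < 1.0


# Same position, two price arrivals: the lagging feed says safe, the fresh one says liquidate.
lagging = PriceUpdate(price=130.0, timestamp=100.0)
fresh = PriceUpdate(price=120.0, timestamp=108.0)
print(should_liquidate(10, 1_000, lagging, now=110.0))  # False
print(should_liquidate(10, 1_000, fresh, now=110.0))    # True
```

With 10 units of collateral against 1,000 of debt, the liquidation line sits at a price of 125. A feed still reporting 130 says the position is healthy, while a fresh 120 says it is not. The staleness check is one way to make data arrival an explicit input to state execution rather than an invisible assumption.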

Now imagine robotic agents relying on similar infrastructure. If their computation is verified but their data pipeline is fragile, the problem isn’t malicious behavior. It’s timing. And timing is where most decentralized systems quietly fail.

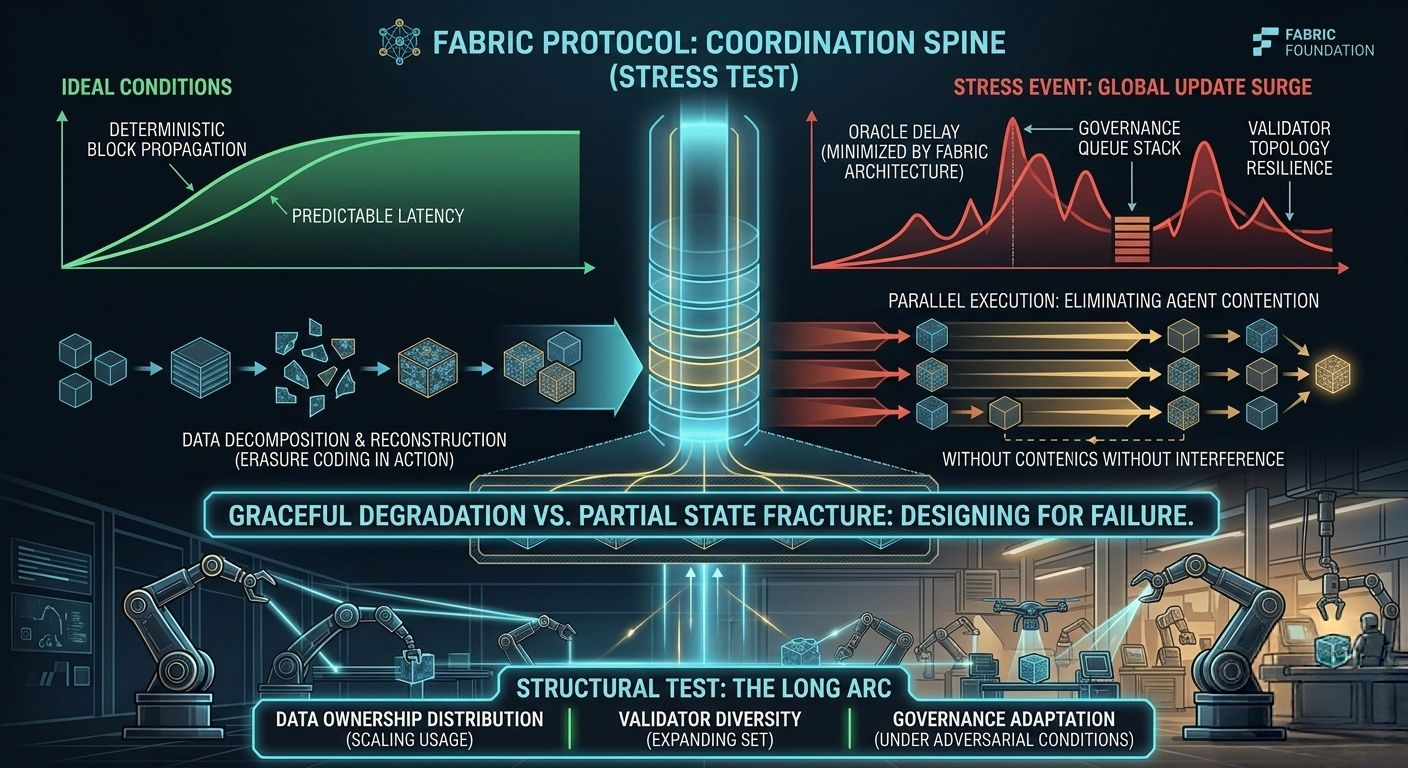

Block time consistency becomes less about headline speed and more about predictability. A two-second block that occasionally becomes twelve seconds under congestion changes user psychology. Builders adjust assumptions. Traders widen spreads. Participants hedge against the chain itself.
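Predictability can be measured rather than asserted. The sketch below reports the mean block interval next to its tail. The function names are hypothetical, and the timestamps are synthetic rather than taken from any real chain.

```python
import math
from statistics import mean
from typing import Dict, List


def percentile(values: List[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(q * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def block_interval_report(timestamps: List[float]) -> Dict[str, float]:
    """Mean block interval alongside its tail: p99 and worst case."""
    intervals = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    return {
        "mean": mean(intervals),
        "p99": percentile(intervals, 99),
        "max": max(intervals),
    }


# Synthetic chain: 98 two-second blocks, then two twelve-second blocks under congestion.
timestamps = [0.0]
for dt in [2.0] * 98 + [12.0] * 2:
    timestamps.append(timestamps[-1] + dt)

print(block_interval_report(timestamps))  # mean 2.2, p99 12.0, max 12.0
```

In this synthetic series the mean is 2.2 seconds, which reads like a fast chain, while the 99th-percentile interval is 12 seconds. The tail is the number builders and traders end up pricing in.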

What I find compelling about Fabric’s framing is that it treats the ledger as a coordination spine rather than a performance theater. Validator topology, physical infrastructure distribution, and execution parallelism are not just scaling tools; they are trust-shaping mechanisms. If validation is geographically and institutionally diverse, robotic governance becomes harder to capture. If execution is parallelized correctly, contention between agents does not degrade system-wide determinism.
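One common way to put a number on "harder to capture" is the Nakamoto coefficient: the smallest number of entities that together control more than a given share of stake. One third is a typical threshold for BFT-style consensus, since that share is enough to block a two-thirds quorum. The sketch computes the coefficient along different dimensions. The validator set is invented for illustration, and the field and function names are my own assumptions.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class Validator(NamedTuple):
    name: str
    operator: str  # institution actually running the node
    region: str    # coarse physical location
    stake: float


def stake_by(validators: List[Validator], field: str) -> Dict[str, float]:
    """Aggregate stake along one dimension: 'name', 'operator', or 'region'."""
    totals: Dict[str, float] = defaultdict(float)
    for v in validators:
        totals[getattr(v, field)] += v.stake
    return dict(totals)


def nakamoto_coefficient(stake: Dict[str, float], threshold: float = 1 / 3) -> int:
    """Smallest number of entities whose combined stake exceeds `threshold` of the total."""
    total = sum(stake.values())
    running = 0.0
    for count, amount in enumerate(sorted(stake.values(), reverse=True), start=1):
        running += amount
        if running > threshold * total:
            return count
    return len(stake)


# Invented set: eight equal validators, three run by one operator, five hosted in one region.
validators = [
    Validator(f"v{i}", "op-A" if i < 3 else f"op-{i}", "eu" if i < 5 else "us", 12.5)
    for i in range(8)
]
for field in ("name", "operator", "region"):
    print(field, nakamoto_coefficient(stake_by(validators, field)))
# name 3, operator 1, region 1
```

Counted by node, this set needs three validators to cross one third of stake. Counted by operator or by region, a single entity already does. Diversity that exists only at the node level is not the kind that resists capture.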

But there are trade-offs here.

High-performance chains often optimize for parallel execution and low latency, yet they introduce complexity in validator hardware requirements. Over time, that can concentrate power among well-capitalized operators. I’ve watched similar patterns emerge elsewhere: strong throughput claims, but subtle validator centralization due to bandwidth and storage demands. It’s not malicious. It’s economic gravity.

Fabric must navigate that gravity carefully.

If data is broken into modular components (stored as blobs, verified via cryptographic commitments, and distributed across nodes), availability improves, and privacy can improve too, since no single node needs to hold the full dataset. But storage costs rise, and so does network bandwidth. Eventually someone pays, whether through higher fees, inflationary rewards, or implicit reliance on off-chain storage providers.
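Here is a minimal sketch of what "verified via cryptographic commitments" can mean in practice. It commits to a blob's fragments with a SHA-256 Merkle root and lets any node prove that a fragment belongs to that root without shipping the whole blob. A hash-based Merkle tree is simply the easiest commitment to show. I don't know which scheme Fabric uses, and other designs differ (Ethereum's EIP-4844 blobs, for example, use KZG polynomial commitments). All function names are hypothetical.

```python
import hashlib
from typing import List, Tuple

# Hypothetical Merkle commitment over blob fragments; not Fabric Protocol code.
LEAF_PREFIX = b"\x00"  # domain separation so a leaf can never be passed off as an inner node
NODE_PREFIX = b"\x01"


def hash_leaf(fragment: bytes) -> bytes:
    return hashlib.sha256(LEAF_PREFIX + fragment).digest()


def hash_node(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(NODE_PREFIX + left + right).digest()


def next_level(level: List[bytes]) -> List[bytes]:
    """Pair up nodes, duplicating the last one when the level has odd length."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [hash_node(level[i], level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(fragments: List[bytes]) -> bytes:
    if not fragments:
        raise ValueError("cannot commit to an empty blob")
    level = [hash_leaf(f) for f in fragments]
    while len(level) > 1:
        level = next_level(level)
    return level[0]


def merkle_proof(fragments: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the right."""
    level = [hash_leaf(f) for f in fragments]
    proof: List[Tuple[bytes, bool]] = []
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        sibling = index ^ 1
        proof.append((padded[sibling], sibling > index))
        level = next_level(level)
        index //= 2
    return proof


def verify_fragment(fragment: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    node = hash_leaf(fragment)
    for sibling, sibling_is_right in proof:
        node = hash_node(node, sibling) if sibling_is_right else hash_node(sibling, node)
    return node == root


fragments = [b"frag-0", b"frag-1", b"frag-2", b"frag-3", b"frag-4"]
root = merkle_root(fragments)  # the only value that needs to live on the ledger
proof = merkle_proof(fragments, 3)
assert verify_fragment(fragments[3], proof, root)
assert not verify_fragment(b"tampered", proof, root)
```

The cost shows up in the proof. The ledger stores 32 bytes, but every fragment check carries a proof whose size grows with the logarithm of the fragment count, and every node serving fragments pays in storage and bandwidth.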

There is no free decentralization.

The question is whether incentives align honest participation with long-term reliability. A native token in such a system is not just gas. It is a coordination instrument. It secures staking, governs parameter changes, and creates feedback loops between usage and validation. If robotic agents depend on the network, token demand reflects functional reliance, not speculative anticipation.

I care less about token volatility and more about whether staking rewards encourage geographically diverse validators, and whether governance processes adapt to stress events instead of freezing under ideological rigidity. Governance, in mature systems, is not control. It is adaptation.

Liquidity and oracle design are another structural hinge. If Fabric integrates external data feeds or bridges to other chains, those pathways become systemic risk vectors. I’ve seen bridge congestion during market stress cause pricing gaps that traders exploit within seconds. In a robotics context, such gaps could mean delayed compliance, mispriced computation, or misaligned regulation triggers.

Ideology does not fix that. Engineering does.

Stress-testing is where serious infrastructure reveals itself. Imagine congestion from a surge in robotic updates during a global event. Block propagation slows. Some validators fall behind. Oracle updates queue. Governance proposals stack. In that moment, does the system degrade gracefully, or does it fracture into partial states?

Designing for failure is a mark of sophistication. It acknowledges that coordination is fragile.

Compared with other high-performance chains that emphasize raw throughput, Fabric’s distinct challenge is that its output may influence physical machines. That introduces a layer of accountability that most DeFi systems never face. A delayed transaction in a trading protocol costs money. A delayed instruction in a machine network could affect real-world operations.

That weight changes design philosophy.

Execution realism extends to user experience. Signing flows, gas abstraction, execution primitives—these shape participant psychology more than whitepapers ever will. If developers must manage complex staking and data availability assumptions manually, friction accumulates. If gas costs fluctuate unpredictably, builders hesitate to deploy critical logic. Over time, friction becomes a hidden tax.

Predictable costs and reliable performance are what real adoption requires. Not narrative.

When I look at Fabric Protocol, I don’t see a robotics headline. I see an attempt to formalize coordination between machines under cryptographic constraints. That is a long-arc problem. It will not be solved by marketing cycles.

The real structural test will come as scale increases. Can data ownership remain distributed as usage grows? Can validator sets expand without collapsing into professionalized oligopolies? Can governance adapt quickly during stress without undermining legitimacy? Can oracle and bridge integrations withstand adversarial conditions?

If the answers trend toward resilience, Fabric becomes more than infrastructure. It becomes discipline encoded in software.

And discipline is rare in crypto.

In the end, markets reward predictability more than speed. Builders reward reliability more than novelty. Participants reward systems that behave consistently under pressure.

Fabric Protocol’s future will not be decided by visibility. It will be decided by whether its coordination spine holds when the network is no longer theoretical, when machines depend on it, and when stress reveals the difference between decentralization as branding and decentralization as structure. That is the quiet test.

@Fabric Foundation #ROBO $ROBO