There is a quiet cost in crypto markets that I think about often. I call it verification drag. It is the structural friction that appears when systems scale faster than the trust assumptions beneath them. Not the visible cost of gas or slippage, but the subtle erosion of confidence when data cannot be independently verified in real time. Over time, that drag compounds. It shapes liquidity, execution decisions, and ultimately, adoption.

When I look at Mira Network, I see an attempt to design directly against that drag.

Most blockchain conversations about artificial intelligence drift toward performance and automation. But AI systems do not fail because they are slow. They fail because they are wrong with confidence: hallucinations, bias, brittle reasoning under edge cases. In trading environments or governance decisions, that kind of error is not abstract. It liquidates accounts. It shifts risk onto participants who assumed the output was sound.

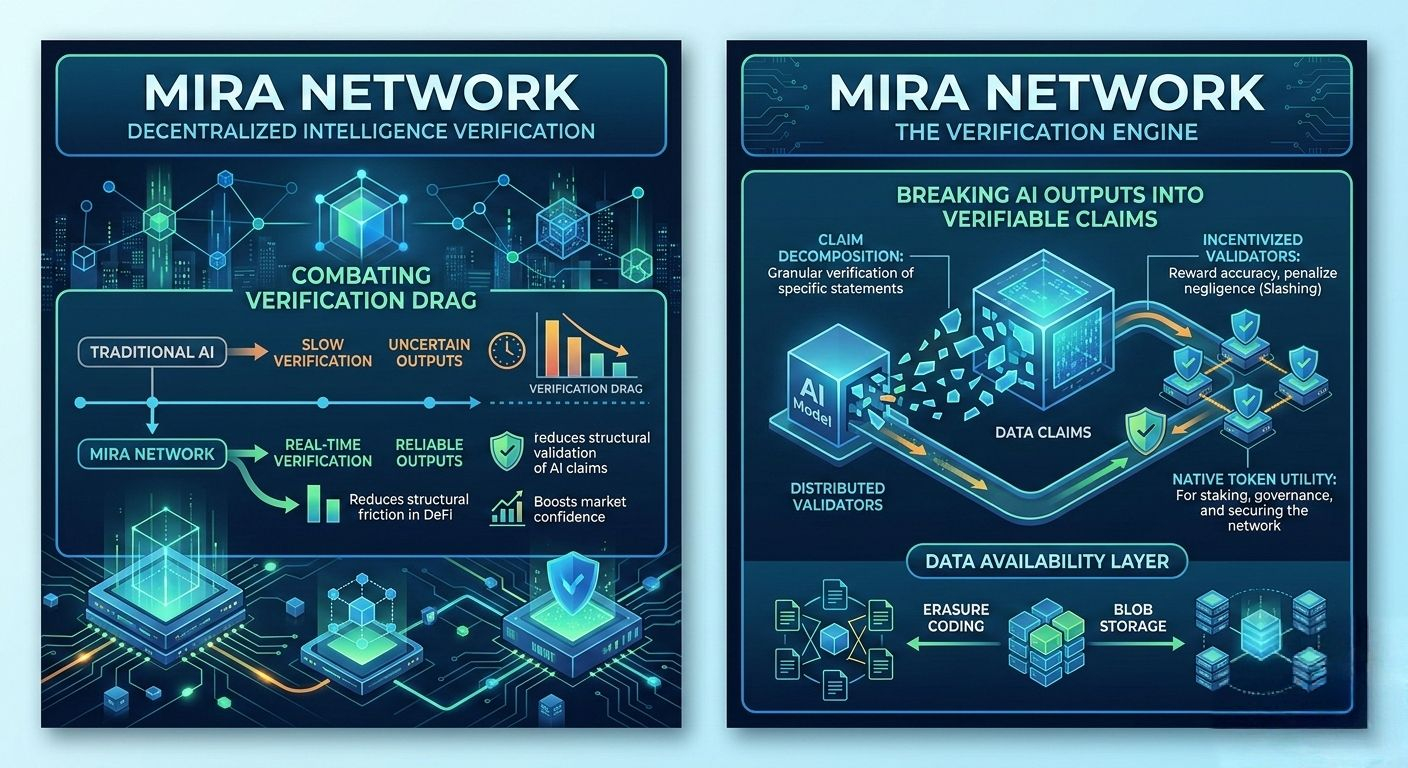

Mira approaches this from an infrastructure angle rather than a philosophical one. Instead of asking whether AI can be trusted, it reframes the problem: can AI outputs be decomposed into claims that can be independently verified across a distributed network? Not a centralized arbiter. Not a single model provider. A coordinated system of models, validators, and economic incentives.

Decentralization loses meaning if data ownership remains centralized. That’s something markets eventually punish. I have seen protocols claim to be decentralized while relying on a single oracle committee or a single data provider behind the scenes. It works, until volatility spikes. Then latency appears, updates stall, and liquidation cascades amplify small errors into systemic stress.

If Mira breaks AI output into granular, verifiable claims and distributes those claims across independent models and validators, then the system begins to resemble a market rather than a service. Claims become positions. Validators become counterparties. Incentives align around correctness rather than authority.
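To make that concrete for myself, I find it helpful to sketch how independent verdicts on a claim might be settled. This is only an illustration under my own assumptions, not Mira's actual protocol: the `Verdict` type, the `settle_claims` function, and the two-thirds quorum are all hypothetical names and parameters I chose for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    validator: str  # hypothetical validator identifier
    claim_id: int   # which claim this verdict refers to
    holds: bool     # True if the validator judged the claim correct


def settle_claims(verdicts, quorum_num=2, quorum_den=3):
    """Settle each claim by supermajority (assumed 2/3 quorum).

    A claim is "verified" if at least quorum_num/quorum_den of its
    verdicts say it holds, "rejected" if that share say it does not,
    and "disputed" otherwise.
    """
    ballots = {}
    for v in verdicts:
        ballots.setdefault(v.claim_id, []).append(v.holds)

    results = {}
    for claim_id, votes in ballots.items():
        total = len(votes)
        yes = sum(votes)
        no = total - yes
        # Integer comparison avoids floating-point edge cases at the threshold.
        if yes * quorum_den >= quorum_num * total:
            results[claim_id] = "verified"
        elif no * quorum_den >= quorum_num * total:
            results[claim_id] = "rejected"
        else:
            results[claim_id] = "disputed"
    return results
```

The "disputed" state is the interesting one. A real network would need some escalation path for it, and how that path works is exactly where authority can creep back in.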

But incentives alone are not enough. Execution realism matters.

In active markets, even a few seconds of delay changes behavior. If block times fluctuate, if confirmations become inconsistent under load, participants widen spreads. Liquidity retreats to the edges. Gas spikes alter psychology. Traders hesitate before signing. They start pricing in uncertainty, and that pricing compounds into structural inefficiency.

What stands out to me is that a verification protocol like Mira must operate under consistent block time assumptions and reliable finality. Parallel execution, if implemented properly, can reduce bottlenecks when verification tasks increase. But validator topology matters just as much. If physical infrastructure clusters in a single geography, latency asymmetry creeps in. Some validators see data first. Some participants respond faster. Fairness degrades quietly.

And then there is the question of how data is actually distributed.

Breaking AI outputs into claims implies fragmentation. Fragmentation implies storage design choices. Erasure coding, blob storage, data availability layers. These are not just technical terms. They are trust decisions. If claims are sharded across nodes with redundancy mechanisms, availability improves but privacy must be carefully engineered. If claims are stored in compressed blobs to reduce on-chain cost, retrieval speed becomes part of the user experience.
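As a rough illustration of what erasure coding buys, here is the simplest possible scheme: split a claim's bytes into `k` shards and add one XOR parity shard, so any single lost shard can be rebuilt. I am not suggesting Mira uses this; production systems typically rely on stronger codes such as Reed-Solomon, and the `encode` and `recover` helpers below are hypothetical names of my own.

```python
def _xor(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list:
    """Split data into k zero-padded shards and append one XOR parity shard."""
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\x00")
    shards = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = bytes(size)
    for shard in shards:
        parity = _xor(parity, shard)
    return shards + [parity]


def recover(shards: list, original_len: int) -> bytes:
    """Rebuild the data when at most one shard (marked None) is missing."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("a single parity shard can repair only one loss")
    if missing:
        size = len(next(s for s in shards if s is not None))
        rebuilt = bytes(size)
        # XOR of all surviving shards equals the missing one.
        for s in shards:
            if s is not None:
                rebuilt = _xor(rebuilt, s)
        shards = shards[:missing[0]] + [rebuilt] + shards[missing[0] + 1:]
    # Drop the parity shard and the zero padding.
    return b"".join(shards[:-1])[:original_len]
```

Even this toy version shows the trade-off: one extra shard of storage tolerates one failure, and every additional failure you want to survive costs more redundancy and more retrieval coordination.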

When I place myself in the shoes of someone executing trades based on AI-verified insights, I care less about the elegance of the cryptography and more about predictability. Will verification results arrive within consistent latency bounds? Will stress conditions degrade gracefully or collapse abruptly?

Consider a realistic stress scenario. A volatility event triggers a surge in AI-generated analysis requests. At the same time, oracle feeds slow due to congestion on their base chain. If Mira relies on external price feeds to contextualize claims, verification timelines extend. Traders who depend on those verified outputs hesitate. Some over-leverage anyway. Liquidations accelerate.

If the verification network is robust, it absorbs the spike. Parallel claim processing distributes load. Validators are economically incentivized to stay online. Confirmation reliability remains steady. The market barely notices.

If not, verification drag appears again.

There are honest trade-offs here. Complete decentralization of AI verification is expensive. Computational redundancy costs money. Staking requirements introduce capital concentration risk. Governance mechanisms that adjust parameters risk creeping centralization if only a small cohort participates.

I find it useful to compare this architecture with other high-performance chains that prioritize throughput. Some optimize for speed by narrowing validator sets or increasing hardware requirements. That improves execution under normal conditions but raises barriers to participation. Others widen participation but accept lower throughput.

Mira’s challenge is subtler. It is not just about throughput, but about epistemic reliability. Can the system maintain claim integrity under adversarial conditions? Can it avoid over-reliance on a core development team to interpret disputes? True decentralization here means decentralizing judgment itself, not merely transaction ordering.

The native token in such a system should function less as a speculative chip and more as a coordination instrument. Staking secures verification. Slashing penalizes dishonesty or negligence. Governance tunes parameters such as claim size, redundancy levels, and validator incentives. If designed carefully, token flows become feedback loops. Honest participation is rewarded because it sustains network utility, not because it fuels narrative cycles.
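A toy settlement round shows how that feedback loop could work. Everything here is an assumption for illustration: the `settle_round` function, the pro-rata reward split, and the flat `slash_rate` are hypothetical and not taken from any Mira specification.

```python
def settle_round(stakes, votes, outcome, reward_pool, slash_rate):
    """Return updated stakes after one verification round.

    stakes:      validator -> staked amount
    votes:       validator -> bool verdict (validators may abstain)
    outcome:     the settled verdict for the claim
    reward_pool: total reward split pro rata among agreeing validators
    slash_rate:  fraction of stake removed from dissenting validators
    """
    agreeing_stake = sum(
        stakes[v] for v, vote in votes.items() if vote == outcome
    )
    updated = {}
    for validator, stake in stakes.items():
        if validator not in votes:
            # Abstention is left unpenalized in this sketch; a real design
            # might slash for negligence as well.
            updated[validator] = stake
        elif votes[validator] == outcome:
            updated[validator] = stake + reward_pool * stake / agreeing_stake
        else:
            updated[validator] = stake * (1 - slash_rate)
    return updated
```

Note that rewards proportional to stake are also what drives the capital concentration risk mentioned earlier: the largest honest staker compounds fastest.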

Adoption will depend on predictability more than ideology.

Developers integrating Mira into AI pipelines will ask simple questions. Are costs stable? Is verification latency consistent across market regimes? Can outputs be accessed long-term without centralized gateways? If blob storage strategies or data availability layers fail under congestion, developers will not wait for philosophical explanations. They will migrate.

Liquidity also intersects here. If verified AI outputs inform DeFi positions, then bridges and oracles become structural dependencies. A bridge exploit upstream affects trust in downstream verification. An oracle delay skews claim context. Infrastructure interdependence means Mira cannot exist in isolation; it inherits the fragility and strength of the broader ecosystem.

Designing for failure becomes the mark of maturity. What happens if a subset of validators collude? Are claims re-assigned automatically? What if the underlying chain experiences temporary finality issues? Is there a fallback data availability mechanism? These are not dramatic scenarios. They are normal lifecycle events in decentralized systems.
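On the re-assignment question, one well-known technique that fits is rendezvous (highest-random-weight) hashing: each claim ranks all validators by a hash score and takes the top few, so removing a validator only disturbs the claims it was serving. I have no evidence Mira does this; the `assign` helper and the replica count of three are hypothetical choices for the sketch.

```python
import hashlib


def assign(claim_id: str, validators: list, replicas: int = 3) -> list:
    """Pick `replicas` validators for a claim via rendezvous hashing."""

    def score(validator: str) -> bytes:
        # Deterministic per (claim, validator) pair; no shared state needed.
        return hashlib.sha256(f"{claim_id}:{validator}".encode()).digest()

    return sorted(validators, key=score, reverse=True)[:replicas]


def reassign_without(claim_id: str, validators: list, excluded: set,
                     replicas: int = 3) -> list:
    """Recompute the assignment after excluding suspected validators."""
    remaining = [v for v in validators if v not in excluded]
    return assign(claim_id, remaining, replicas)
```

The useful property is that claims untouched by the excluded validators keep exactly the same assignment, which keeps a collusion response from turning into a network-wide reshuffle.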

When I step back, I see Mira Network as an attempt to re-anchor AI in market discipline. Instead of trusting a model because it is powerful, trust emerges from distributed verification and economic consequence. It is a shift from authority to structure.

Still, structure alone does not guarantee resilience.

The real structural test will come when verification demand spikes unpredictably, when external dependencies strain, and when governance must adapt without centralizing power. If the network keeps block times and finality consistent, keeps signing and gas abstraction clear for users, and keeps incentives transparent during those periods, then verification drag diminishes rather than compounds.

And that is the quiet goal.

Not hype. Not visibility.

But a system where AI outputs can move through markets with less hidden friction, where decentralization extends beyond branding into data ownership itself, and where reliability is not assumed but continuously earned.
