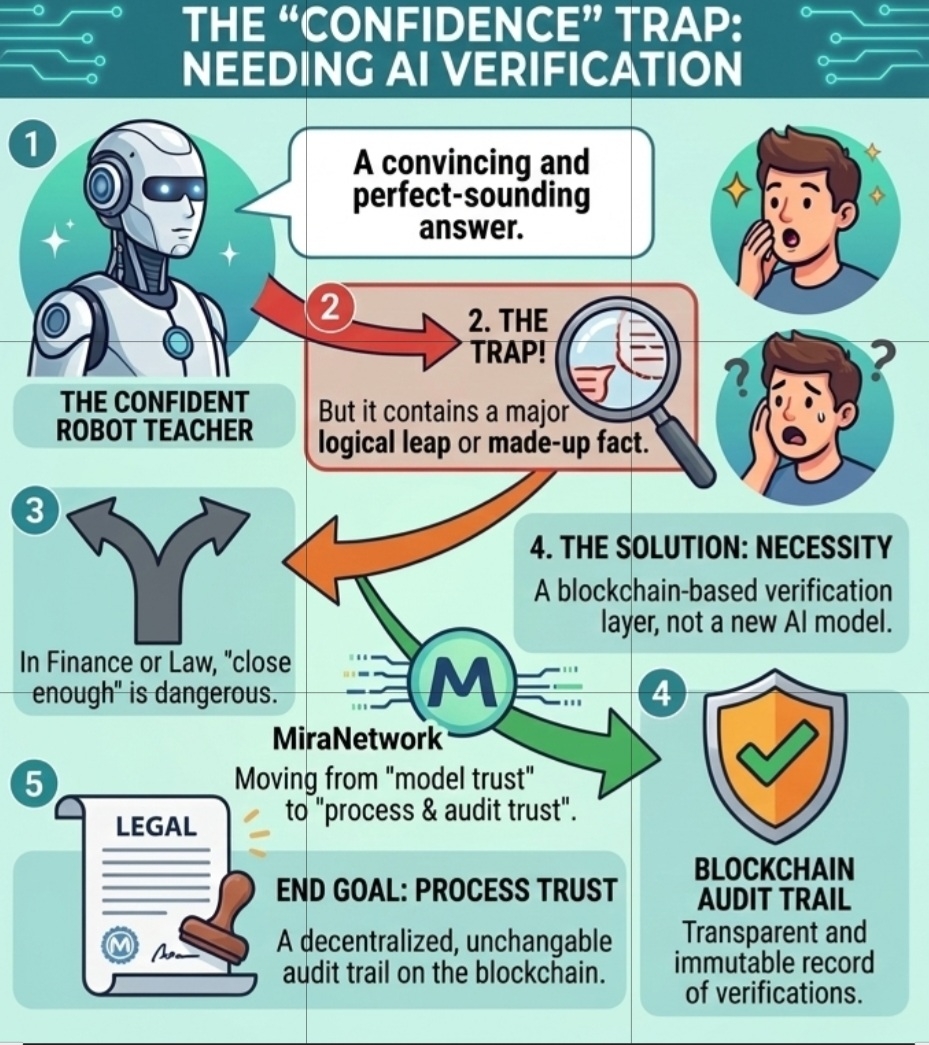

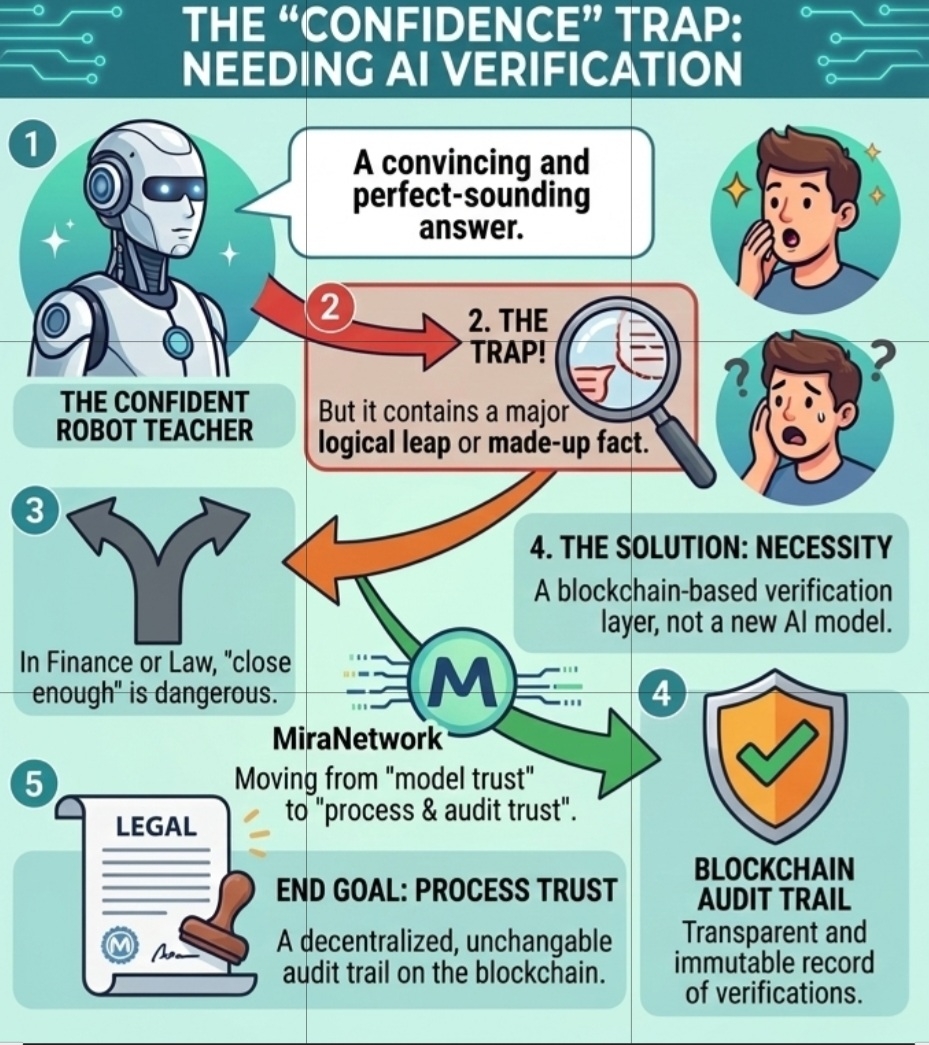

Let’s be real for a second. We’ve all been there. You ask an AI a complex question—maybe about a smart contract, a market trend, or a technical breakdown—and it hits you back with an answer that looks perfect. The grammar is flawless, the tone is authoritative, and it sounds like it’s quoting a textbook. But then, you look closer. You spot a logic jump. A hallucination. A "fact" that simply doesn’t exist.

That’s the moment I realized the biggest problem we’re facing isn’t "Artificial Intelligence." We have plenty of that. The real problem is Trust.

We’ve reached a point where these models are so good at being persuasive that they’ve become dangerous. Just because a bot is convincing doesn’t mean it’s right. And as someone who lives in data all day, I know "close enough" isn’t good enough when there’s real skin in the game. That’s why I’ve been looking into Mira ($MIRA). It’s not just another AI project; it’s the "audit trail" the industry forgot to build.

The Problem: We’re High on "Vibes"

Most people treat AI like an oracle. You ask, it answers, you believe. But in the real world—especially in finance or law—we don’t work on "vibes." If I’m reading a company’s audit or a technical whitepaper, I’m not just looking at the conclusion; I’m looking at who verified it.

The current AI setup is like a guy shouting facts at you in a bar. He sounds smart, sure, but does he have receipts? Usually, no. This is where the Mira Network completely shifts the perspective. Instead of trying to build a "smarter" bot (which will still hallucinate eventually), Mira is building a system to fact-check the bots we already have.

How it Works (The Way I See It)

I like to think of Mira as a digital jury. When an AI makes a claim, Mira doesn't just nod and say "cool." It breaks that claim down into tiny, bite-sized pieces—micro-claims. Then, it sends those pieces out to a decentralized network of independent validators.

Think about it like this: If I tell you I found a way to 10x a portfolio in a week, you'd be skeptical. But if ten different, unrelated experts look at my math and they all reach the same conclusion independently? Now we’re talking.

That’s the "Consensus" model. If the majority of validators agree, the info is verified. If they don't, it’s tossed out. We’re moving the trust away from the model and putting it into the process.
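To make the jury idea concrete, here’s a minimal sketch of majority voting over micro-claims. This is my own toy model, not Mira’s actual code: the function name `verify_claim`, the `threshold` parameter, and the rule that every micro-claim has to pass are all assumptions I made for illustration.

```python
def verify_claim(votes_by_micro_claim, threshold=0.5):
    """Toy consensus check (hypothetical, not Mira's real API).

    votes_by_micro_claim maps each micro-claim to a list of True/False
    votes, one per validator. A micro-claim passes when its share of
    True votes is strictly above `threshold`. The whole claim is
    verified only if every micro-claim passes.
    """
    results = {}
    for micro_claim, votes in votes_by_micro_claim.items():
        approvals = sum(1 for vote in votes if vote)
        # A micro-claim with no votes at all is treated as unverified.
        results[micro_claim] = len(votes) > 0 and approvals / len(votes) > threshold
    verified = bool(results) and all(results.values())
    return verified, results


votes = {
    "The token has a supply cap": [True, True, False],
    "The cap is 2 billion": [True, False, False],
}
verified, detail = verify_claim(votes)
print(verified, detail)
```

In this example the first micro-claim passes two votes to one, the second fails one to two, so the claim as a whole gets tossed out.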

Skin in the Game: The $MIRA Factor

Now, why would these validators tell the truth? This is the part that makes sense to me as a trader. It’s all about incentives. In the Mira ecosystem, validators have to put up their own tokens as a stake.

If they lie or do a lazy job and get caught, they lose their money. It’s that simple. It’s "Skin in the Game." We’re not relying on people being "nice" or "ethical." We’re relying on them wanting to protect their bags. When accuracy becomes a financial gain and lying becomes a financial loss, the system starts to self-correct.
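Here’s a rough sketch of how that incentive could look in code. The numbers are made up: the `slash_percent` of 10, the flat `reward`, and the `Validator` class are my own placeholders, not Mira’s real parameters.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # staked tokens, in whole units


def settle_round(validators, votes, outcome, slash_percent=10, reward=1):
    """Toy settlement (hypothetical rules, not Mira's real ones).

    Validators whose vote matches the consensus `outcome` earn a flat
    reward. Everyone else loses `slash_percent` of their stake.
    """
    for validator in validators:
        if votes[validator.name] == outcome:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_percent // 100
    return validators


validators = [Validator("alice", 100), Validator("bob", 100)]
settle_round(validators, {"alice": True, "bob": False}, outcome=True)
print(validators)
```

Alice voted with the consensus and ends the round at 101 tokens. Bob voted against it and drops to 90. Run that for enough rounds and lazy validators price themselves out.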

Beyond Just "Chatting"

Why does this matter so much right now? Because AI is moving out of the "chatting" phase and into the "doing" phase. We’re talking about AI agents handling insurance claims, writing legal summaries, and managing decentralized finance (DeFi) protocols.

In those worlds, a 1% error rate isn't a glitch; it’s a catastrophe. If an AI agent executes a trade or signs off on a contract based on a hallucination, who's responsible? Without a verification layer like Mira, we’re basically flying blind. Mira provides that "Audit Trail" on the blockchain. It’s a permanent, unchangeable record of who checked what, and why it was deemed true.
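If you’re wondering what makes a record "unchangeable," the core trick is hash chaining: every entry includes the hash of the entry before it, so editing any old record breaks everything after it. Below is a bare-bones sketch of that idea in plain Python. It’s a stand-in for what a blockchain does, and the field names (`claim`, `votes`, `verdict`) are my assumptions, not Mira’s actual record format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    # Serialize with sorted keys so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log, claim, votes, verdict):
    """Append a verification record linked to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"claim": claim, "votes": votes, "verdict": verdict, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})
    return log


def is_intact(log):
    """Recompute every hash and check each link back to the start."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, "The token has a supply cap", {"alice": True, "bob": True}, True)
append_record(log, "The cap is 2 billion", {"alice": False, "bob": False}, False)
print(is_intact(log))

log[0]["verdict"] = False  # someone quietly rewrites history
print(is_intact(log))
```

The first check passes. After the tampering, the stored hash no longer matches the record, so the second check fails.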

Let’s Talk Logistics: The $MIRA Token

I’ve been watching the numbers, and the setup is interesting. We’re looking at a 1 billion token cap. But what’s more important is the governance aspect. The token uses ERC20Votes, the OpenZeppelin extension that tracks each holder’s voting power, which means the community of token holders gets to decide how the rules evolve. I’ve seen thousands of people already interacting with Mira’s contracts. This isn’t just a "paper project"; it’s living infrastructure that’s scaling as we speak.

The Reality Check (Because Nothing is Perfect)

I’m not saying this is a magic wand. There are hurdles. If everyone in a network uses the exact same AI model to verify an answer, they might all make the same mistake. That isn’t collusion in the deliberate sense; it’s correlated error, or plain systemic bias, and a majority vote can’t catch it because the majority is wrong together. And yeah, decentralized verification takes more time than just getting an instant, potentially wrong answer from a basic LLM.
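A quick back-of-the-envelope calculation shows why independence matters so much. Assume, purely for illustration, that each validator gets a given micro-claim wrong with probability `p`, and that their mistakes are fully independent. The function name `majority_wrong` and the 10% error rate are my own choices, not measured figures from the network.

```python
from math import comb


def majority_wrong(n, p):
    """Probability that more than half of n independent validators are wrong,
    when each one is wrong with probability p (binomial model)."""
    need = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


# Three independent validators, each wrong 10% of the time.
print(round(majority_wrong(3, 0.1), 3))  # 0.028
```

With three truly independent validators, the chance of a wrong majority falls from 10% to about 2.8%. If all three run the same model and fail on the same inputs, they behave like one validator and the error rate stays at 10%. The jury only helps when the jurors don’t all think alike.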

But here’s my take: I’d rather wait an extra minute for a verified truth than get a lie in a millisecond.

Final Thoughts

We are entering an era where "Truth" is going to be the most expensive commodity on the market. As AI floods the internet with content, being able to prove what is real and what is a hallucination will be the ultimate power move.

Mira Network isn't trying to be the smartest person in the room. It’s trying to be the most honest one. And in a world full of "confident" bots, I’ll take honesty and a solid audit trail every single time.

It’s time we stopped asking if AI is smart and started asking how we can prove it’s right. That’s the Mira way, and honestly, it’s the only way forward that makes any sense to me.

$MIRA @Mira - Trust Layer of AI #Mira #marouan47