I’ve been watching AI evolve for years, and one thing has become clear: scale always arrives before accountability. You don’t see the arguments first. You see the machines, the logistics, the raw industrial reality of it all. That’s the pattern I keep noticing, and it’s the lens I look through when I try to understand why Mira Network matters.

A modern training cluster isn’t glamorous. It doesn’t have a single shining server at its center. It shows up in the details: trucks unloading hardware at odd hours, crates of optics and power supplies stacked like groceries, racks of GPUs humming quietly but insistently. Running one of these places feels closer to running a factory than writing software. The people who operate them learn to respect small things: microseconds of latency, temperature drift, error counters that tick upward for days before anything catastrophic happens.

And when you tie those machines together with a high-speed network (what some people shorthand as “Cion”), the network becomes the computer. The math only works if the GPUs can exchange data fast enough and consistently enough for the whole system to stay coherent. If the fabric behaves, everything else looks like progress. If it doesn’t, a single port that starts misbehaving once it warms up can waste a week.

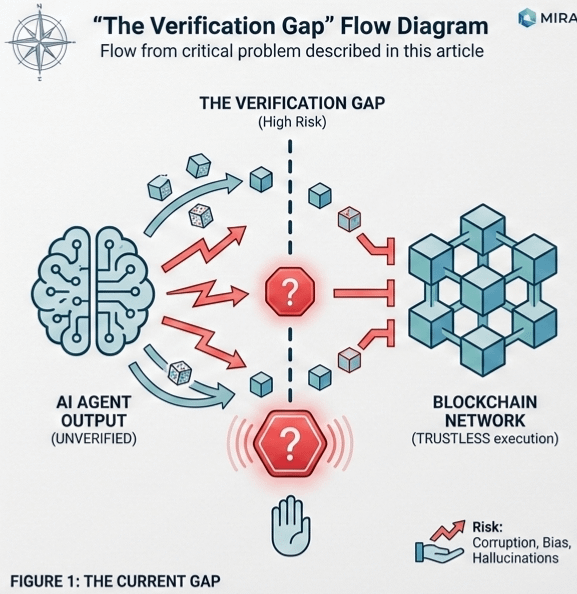

Now compare that reality with how AI is discussed publicly: model cards, benchmark scores, promises that a system has been “tested.” There’s a mismatch. As the machines grow bigger and more complex, it becomes easier for the rest of us to take claims on faith, because checking them ourselves is nearly impossible. Capability concentrates faster than accountability.

That’s where Mira enters the picture. Mira isn’t a committee, a law, or a flashy startup promising the next breakthrough. It’s built around a concept: verification as part of the infrastructure itself. If someone releases model weights and claims, “This is what we trained, and these are its properties,” Mira gives us a way to check those claims without trusting the person or the brand.

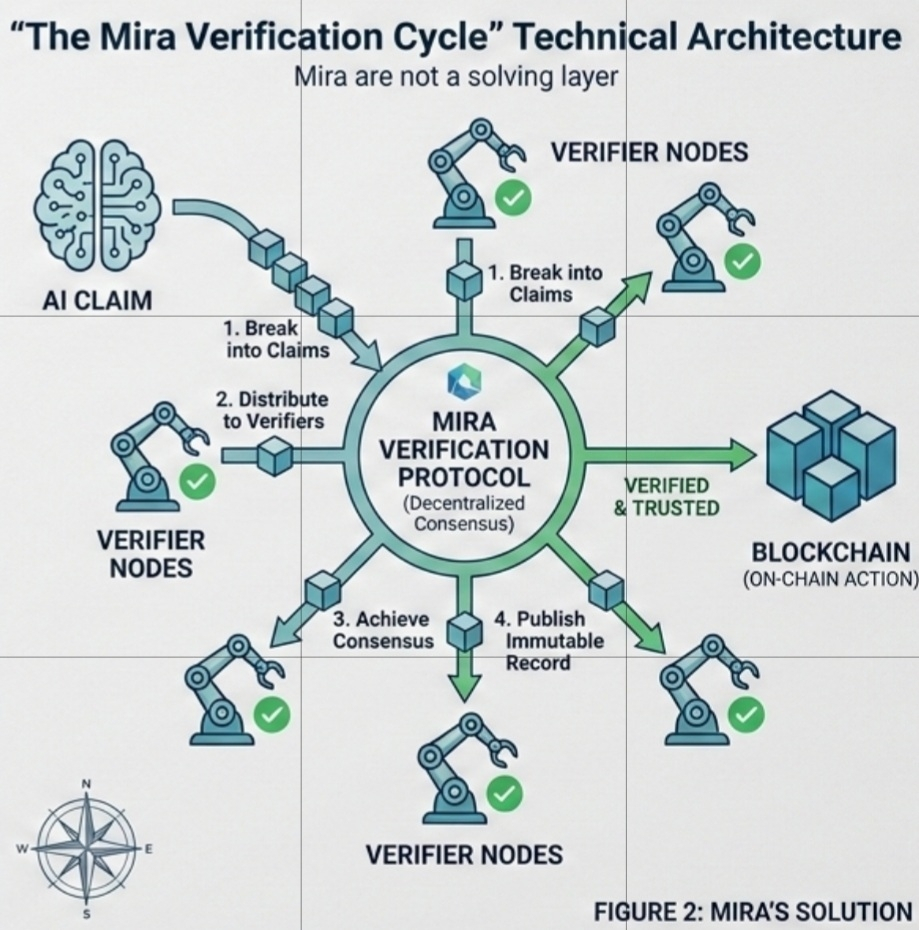

What I like about Mira is that they don’t pretend to fix AI hallucinations with a better model. They do something subtler and more important: they break AI outputs into discrete, verifiable claims and let multiple independent parties check each one. That matters, because the problem now isn’t whether AI can produce answers; it’s whether you can trust an answer when no one stands behind it.
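To make the claim-splitting idea concrete, here is a minimal sketch. Everything in it is my own illustrative assumption, not Mira’s actual protocol or API: the names `split_into_claims` and `verify_output` are hypothetical, claim extraction is a naive “one sentence = one claim” split, the verifiers are toy functions that check claims against a fixed set of facts, and a claim is accepted when at least two-thirds of verifiers agree.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable

# Hypothetical verifier: any function that judges one claim as supported (True) or not.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool


def split_into_claims(output: str) -> list[str]:
    # Naive stand-in for real claim extraction: one sentence = one claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, verifiers: list[Verifier],
                  threshold: Fraction = Fraction(2, 3)) -> list[ClaimResult]:
    if not verifiers:
        raise ValueError("need at least one verifier")
    results = []
    for claim in split_into_claims(output):
        # Each verifier judges the claim on its own; none sees the others' votes.
        votes = sum(1 for verify in verifiers if verify(claim))
        # Exact fraction comparison avoids floating-point edge cases at the threshold.
        accepted = Fraction(votes, len(verifiers)) >= threshold
        results.append(ClaimResult(claim, votes, len(verifiers), accepted))
    return results


def make_verifier(known_facts: set[str]) -> Verifier:
    # Toy verifier that only "knows" a fixed set of facts.
    return lambda claim: claim in known_facts


verifiers = [
    make_verifier({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
    make_verifier({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
    make_verifier({"Paris is the capital of France"}),
]
text = "Paris is the capital of France. Water boils at 100 C at sea level. The Moon is made of cheese."

for r in verify_output(text, verifiers):
    print(f"{r.votes_for}/{r.votes_total} accepted={r.accepted}: {r.claim}")
```

The point of the design is that no single checker decides: the second claim passes with one dissent, and the third fails because nobody can support it. A real system would need far better claim extraction and verifiers that are genuinely independent, which is the hard part.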

In a Mira-style workflow, progress isn’t measured in press releases or headlines. It’s measured in signed attestations: what was tested, on which artifacts, in what environment. Hashes of model files. Container images pinned for evaluation. Drivers and hardware versions logged. Even prompt logs are published, warts and all.
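A signed attestation of this kind can be sketched with nothing but the standard library. This is a hypothetical shape, not Mira’s manifest format: the field names, the placeholder image digest and driver strings, and the use of HMAC-SHA256 (a shared-secret stand-in for the public-key signatures a real system would use) are all my assumptions. What it shows is the mechanism: hash the artifacts, serialize the manifest deterministically, sign it, and detect any later change.

```python
import hashlib
import hmac
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(artifacts: dict[str, bytes], environment: dict[str, str],
                   tests: list[str]) -> dict:
    # Record a content hash for every artifact, plus what was tested and where.
    return {
        "artifacts": {name: sha256_hex(blob) for name, blob in sorted(artifacts.items())},
        "environment": environment,
        "tests": tests,
    }


def canonical(manifest: dict) -> bytes:
    # Deterministic serialization so the same manifest always yields the same bytes.
    return json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(manifest: dict, key: bytes) -> str:
    # HMAC is a stand-in here; a real attestation would use an asymmetric signature.
    return hmac.new(key, canonical(manifest), hashlib.sha256).hexdigest()


def verify(manifest: dict, signature: str, key: bytes) -> bool:
    # Constant-time comparison of the recomputed signature with the claimed one.
    return hmac.compare_digest(sign(manifest, key), signature)


key = b"demo-key-not-for-real-use"            # hypothetical signing key
weights = b"\x00\x01\x02 placeholder weights"  # stand-in for a model file's bytes

manifest = build_manifest(
    {"model.safetensors": weights},
    {
        "container_image": "registry.example/eval@sha256:<pinned-digest>",  # placeholder
        "gpu_driver": "<driver-version>",                                    # placeholder
        "hardware": "<gpu-model>",                                           # placeholder
    },
    ["benchmark-suite-v1"],
)
sig = sign(manifest, key)
print(verify(manifest, sig, key))   # True

# Quietly swapping the test suite after signing breaks verification.
tampered = dict(manifest, tests=["benchmark-suite-v2"])
print(verify(tampered, sig, key))   # False
```

Changing a single byte of the weights would change the recorded hash and fail verification the same way, which is what makes a model identifiable rather than just described.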

This doesn’t make a model inherently safe. It makes it trackable and accountable. It gives others a starting point that isn’t rumor or marketing spin. Immutable logs don’t prevent mistakes, but they prevent confusion. They make it impossible to shrug off bad outcomes as misunderstandings rather than choices.

Here’s what I see every time I dig into how these systems operate. Fabric operators are paid to keep throughput high and errors low. Evaluators, when they’re paid at all, are paid to slow things down, reproduce anomalies, and publish caveats. The incentives collide. A Friday-night training run is almost finished when a bug appears in the final mile. Someone proposes a quick fix. Someone else asks whether the fix invalidates the verification. The room goes quiet. Nobody wants to delay the run. Nobody wants to ship a claim they can’t defend.

AI models aren’t static binaries. They’re behavioral surfaces. Small changes in sampling, tool access, hidden prompts, or fine-tuning shift that surface in ways you won’t notice until you press on a particular corner. At scale, training is also subtly non-deterministic: running the “same” configuration across thousands of devices can produce slightly different weights. Verification can’t guarantee perfect replay; it provides a reliable lens, not perfection.
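One source of that non-determinism is easy to show in miniature. Floating-point addition isn’t associative, so when devices reduce the same numbers in a different order, the result can differ in the last bit. The sketch below assumes the simplest possible case (three numbers, two summation orders) and an arbitrary tolerance I picked for illustration; it shows why a bit-exact hash check can fail on a legitimate rerun while a tolerance-based comparison still passes.

```python
import hashlib
import math
import struct

# The same three numbers, summed in two different orders,
# as two devices might reduce them.
values = [0.1, 0.2, 0.3]
left_to_right = (values[0] + values[1]) + values[2]
right_to_left = values[0] + (values[1] + values[2])


def digest(x: float) -> str:
    # Hash the exact 8-byte IEEE 754 representation, as a bit-exact check would.
    return hashlib.sha256(struct.pack("<d", x)).hexdigest()


print(left_to_right, right_to_left)                     # 0.6000000000000001 0.6
print(digest(left_to_right) == digest(right_to_left))   # False: bit-exact check fails
print(math.isclose(left_to_right, right_to_left, rel_tol=1e-9))  # True: within tolerance
```

Real training multiplies this effect across billions of operations, which is why verification of retrained weights tends to compare behavior within tolerances rather than demand identical bits.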

There’s also a human side to verification. If attestations gain value, they become targets. Keys can be stolen. Results can be faked. Nodes can race for attention and publish too quickly. Checklists can be gamed. Verification has to defend itself against the very pressures it exists to counter, and that takes culture as much as technology: treating “I don’t know yet” as a responsible answer and valuing reproducibility over being first.

So what is the new reality? Scale will keep coming. Tens of thousands of GPUs. Megawatts of power. Industrial cooling. Networks built like highways with redundancy and contingency plans. Multiple organizations rely on the same vendors. Multiple downstream users build products and policies on top of released weights. In that environment, Mira provides a way to keep the record honest.

Signed manifests don’t make mistakes impossible; they make models identifiable. Reproducible evaluation won’t catch every bug, but it lets others rerun the test instead of taking someone’s word for it. Immutable logs don’t prevent error; they prevent misinterpretation.

The future isn’t just bigger models on faster fabrics. It’s an ecosystem where the ability to verify travels alongside the ability to compute. Without that, the AI race will run on blind trust, suspicion, and exhausted judgment, with no clear way to separate real progress from well-timed storytelling.

That’s why I watch Mira closely. They’re not flashy, and they’re not promising the next breakthrough model. They’re working on the hardest, least glamorous problem: trust at scale. If they succeed, they won’t just be another project; they’ll be the foundation for AI that operates in the real world, on real infrastructure, with real consequences.

Scale is easy to predict. It’s funded, staffed, under construction. Verification is the harder part. And that, I think, is where Mira could change everything.
