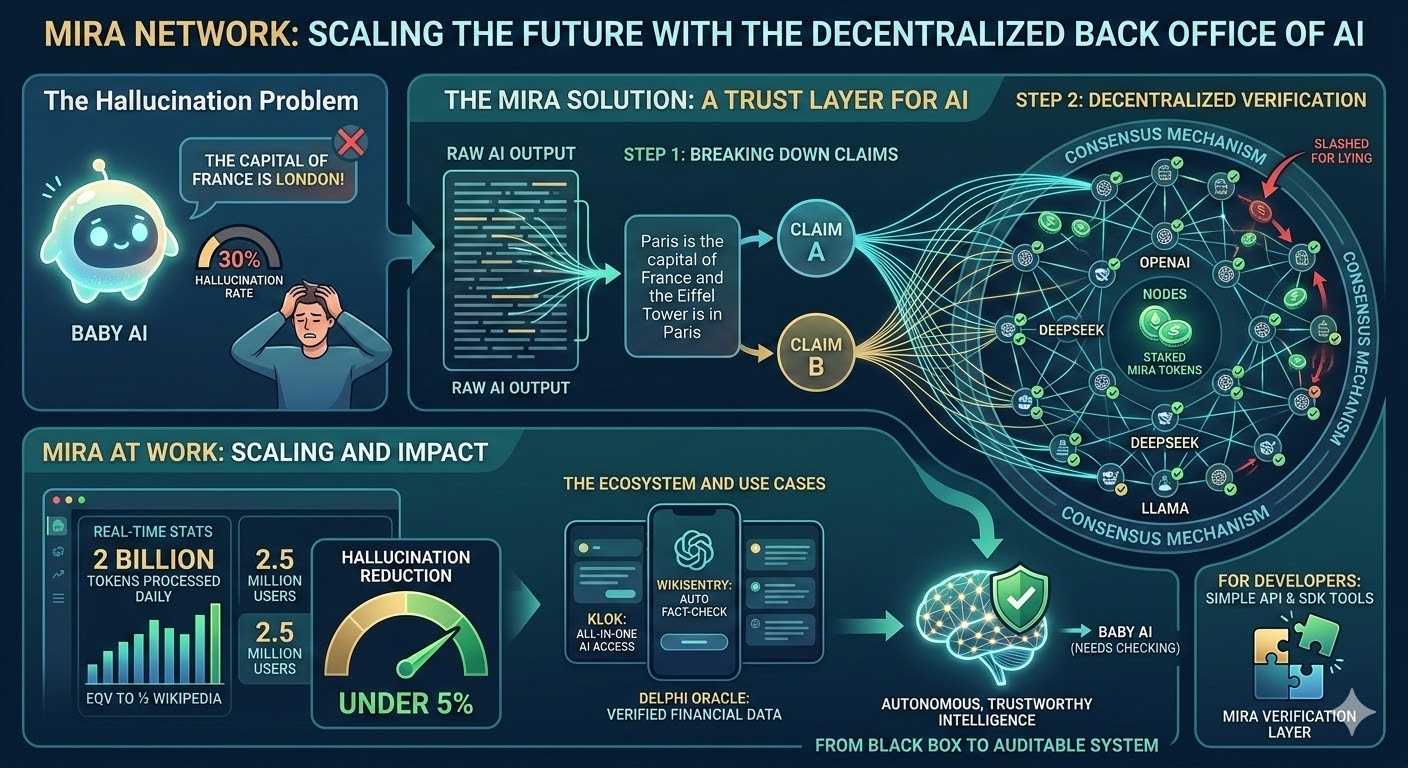

Imagine asking a super-smart assistant a question, but never being quite sure whether the answer is made up. This is the big problem with Artificial Intelligence (AI) today: "hallucinations," where the AI just guesses. Mira Network is building the "back office" to fix this, creating a trust layer for the AI age.

Instead of trusting a single AI model, Mira breaks every response down into tiny, individual facts called "claims." Think of the sentence "Paris is the capital of France and the Eiffel Tower is in Paris." Mira splits it into two separate claims and checks each one independently. These claims are then sent to a large network of decentralized nodes running different models (such as OpenAI's models, DeepSeek, and Llama). The nodes vote on whether each claim is true, and nodes that lie lose money they have staked.
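To make the split, vote, and stake steps concrete, here is a minimal Python sketch. It is only an illustration and not Mira's actual protocol or SDK: the `Node` class, the `split_claims` and `verify_claim` helpers, the two-thirds agreement rule, and the flat stake penalty are all assumptions made up for this example, and each "model" is a plain function standing in for a real AI call.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Node:
    """Hypothetical verifier node: a model stand-in plus staked funds."""
    name: str
    verify: Callable[[str], bool]  # stand-in for a real model call
    stake: float = 100.0


def split_claims(text: str) -> List[str]:
    """Naive claim splitter (assumption): one claim per ' and '-joined clause."""
    parts = [p.strip().rstrip(".") for p in text.split(" and ")]
    return [p for p in parts if p]


def verify_claim(claim: str, nodes: List[Node], penalty: float = 10.0) -> Optional[bool]:
    """Collect one vote per node; require two-thirds agreement (assumed rule).

    Nodes that vote against the consensus lose `penalty` from their stake.
    Returns None when neither side reaches two-thirds.
    """
    votes = {n.name: n.verify(claim) for n in nodes}
    yes = sum(votes.values())
    no = len(nodes) - yes
    if 3 * yes >= 2 * len(nodes):
        verdict = True
    elif 3 * no >= 2 * len(nodes):
        verdict = False
    else:
        return None  # no consensus, nobody is penalized
    for n in nodes:
        if votes[n.name] != verdict:
            n.stake -= penalty
    return verdict


# Toy "ground truth" so the fake models have something to answer from.
FACTS = {"Paris is the capital of France", "the Eiffel Tower is in Paris"}

nodes = [
    Node("node-a", lambda c: c in FACTS),      # honest
    Node("node-b", lambda c: c in FACTS),      # honest
    Node("node-c", lambda c: c not in FACTS),  # always lies
]

sentence = "Paris is the capital of France and the Eiffel Tower is in Paris."
results = {claim: verify_claim(claim, nodes) for claim in split_claims(sentence)}
```

In this toy run both claims come back verified, and the lying node is penalized once per claim, so its stake drops from 100 to 80 while the honest nodes keep theirs. A real network would need a far more careful claim splitter and economic design; the point here is only the shape of the flow.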

This isn't just theory. Mira is already operating at massive scale, processing two billion tokens daily (equivalent to half of Wikipedia) for its 2.5 million users. The results are stunning. By cross-checking outputs, Mira cuts the AI hallucination rate from 30% down to under 5%. Major projects like the Delphi Oracle research tool use it to ensure financial data is accurate.

The ecosystem is growing fast. Klok, a popular app built on Mira, lets users access multiple AIs in one place. Others like WikiSentry automatically fact-check Wikipedia, and Amor provides verified emotional support conversations. Developers can easily plug into this verification layer using Mira's simple API and SDK tools.

By combining the transparency of blockchain with the power of AI, Mira Network is moving us from "Baby AI" (which needs constant human checking) to autonomous intelligence we can actually trust. It is turning AI from a black box into an auditable system.

@Mira - Trust Layer of AI #mira $MIRA