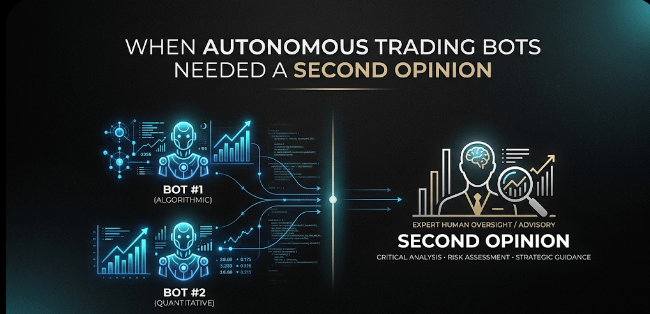

I remember telling a colleague during a strategy review that our trading bots were extremely fast but occasionally a little too confident. They reacted to signals instantly, which is great for execution, but it also meant small model mistakes could ripple through the system. That discussion eventually pushed us to test @Fabric Foundation with the $ROBO verification layer as a trust middleware between AI predictions and automated trading actions.

Our setup runs several machine-learning models that monitor order books, liquidity shifts, and short-term volatility across multiple exchanges. Every few seconds these models generate signals like “liquidity imbalance detected” or “momentum spike forming.” Before introducing Fabric, those outputs fed directly into the trading engine.

The architecture was efficient, but over time we noticed strange edge cases. One model would detect a breakout pattern while another indicated thinning liquidity in the same market. Both predictions looked valid individually, yet they occasionally contradicted each other. Since the trading system treated model outputs as final instructions, the bots sometimes entered positions during unstable conditions.

So we redesigned the decision pipeline.

Instead of letting models trigger trades immediately, every output became a structured claim. Claims such as “volatility breakout likely within the next 20 seconds” were routed through validators using the $ROBO network. In practice, @Fabric Foundation now sits between the prediction layer and the trading logic, acting as a decentralized verification step.
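
Here is a minimal sketch of that gate. It assumes a hypothetical `Claim` structure and a pluggable `verify` callable; the actual Fabric / $ROBO client interface is not shown in this post, so `demo_verifier` is only a local stand-in and every field name is illustrative.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Claim:
    # Field names are assumptions for illustration, not a Fabric schema.
    model_id: str
    market: str
    statement: str
    horizon_s: int
    confidence: float


def gate(
    claim: Claim,
    verify: Callable[[Claim], bool],
    execute: Callable[[Claim], None],
) -> bool:
    """Run `execute` only when `verify` approves the claim."""
    if not verify(claim):
        return False
    execute(claim)
    return True


def demo_verifier(claim: Claim) -> bool:
    # Stand-in for the verification network: a simple confidence cutoff.
    # A real deployment would submit the claim to validators here.
    return claim.confidence >= 0.8
```

The point of the shape is that the trading engine never sees a raw model output. It only sees claims that came back approved.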

During the first month of operation we processed roughly 41,000 claims through the verification layer. Consensus time averaged about 2.4 seconds, occasionally reaching 2.9 seconds during high-volume market periods. For a trading system this delay sounded concerning at first, but our bots already used buffered execution windows, so the impact on performance was manageable.
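
One way to think about that latency budget is to treat verification as a call with a deadline equal to the execution window, and to skip the trade when consensus does not arrive in time. The sketch below assumes a hypothetical blocking `verify` callable; the window values and the two demo verifiers are illustrative, not our production settings.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def verify_within_window(verify, claim, window_s):
    """Return True only if `verify` approves the claim before the window closes."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(verify, claim)
    try:
        return bool(future.result(timeout=window_s))
    except FutureTimeout:
        # Consensus arrived too late for this execution window: do not trade.
        return False
    finally:
        # Do not block the caller on a verifier that is still running.
        pool.shutdown(wait=False)


def fast_verifier(claim):
    time.sleep(0.01)  # simulates consensus well inside the window
    return True


def slow_verifier(claim):
    time.sleep(0.3)  # simulates consensus that arrives after the window
    return True
```

Treating a timeout as a rejection is a design choice: a missed trade is usually cheaper than an unverified one.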

The interesting metric was disagreement.

Around 4.6% of claims failed verification. Most of those came from situations where a single model detected a strong signal but other contextual data (order book depth, cross-exchange spread behavior, or volatility clustering) didn’t support the same conclusion. Without $ROBO validation those predictions would have triggered unnecessary trades.
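
To make that failure mode concrete, here is a toy version of a context check. It only illustrates the idea: the indicator names mirror the ones above, but the boolean inputs and the `min_support` and `min_strength` thresholds are assumptions, not how $ROBO validators actually score claims.

```python
def context_supports(signal_strength, context, min_support=2, min_strength=0.7):
    """Approve a strong signal only if enough context checks agree with it.

    `context` maps an indicator name to True (supports the claim) or False.
    """
    if signal_strength < min_strength:
        return False
    supporting = sum(1 for agrees in context.values() if agrees)
    return supporting >= min_support


# A strong signal from one model, backed by only one of three context checks.
lonely_signal = {
    "order_book_depth": True,
    "cross_exchange_spread": False,
    "volatility_clustering": False,
}

# The same signal strength, backed by two of three context checks.
backed_signal = {
    "order_book_depth": True,
    "cross_exchange_spread": True,
    "volatility_clustering": False,
}
```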

To better understand the system, we ran a controlled replay experiment using historical market bursts. The models produced confident signals exactly as they had during the original market event, but validators rejected roughly 31% of the claims when additional context was considered. Watching the verification layer filter those signals convinced our team that decentralized validation was doing more than adding complexity.
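
The bookkeeping behind a replay like this is simple. Below is a hedged sketch of how a rejection rate could be computed over replayed claims; `verify` is a hypothetical callable, and the sample claims are toy data rather than anything from our experiment.

```python
def replay_rejection_rate(claims, verify):
    """Return the fraction of replayed claims that `verify` rejects."""
    claims = list(claims)
    if not claims:
        return 0.0
    rejected = sum(1 for claim in claims if not verify(claim))
    return rejected / len(claims)


# Toy data: each replayed claim records whether context supported it.
toy_claims = [
    {"id": "c1", "context_ok": True},
    {"id": "c2", "context_ok": False},
    {"id": "c3", "context_ok": True},
    {"id": "c4", "context_ok": True},
]


def toy_verify(claim):
    # Stand-in verifier that simply reads the recorded context flag.
    return claim["context_ok"]
```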

Of course, there are tradeoffs.

Decentralized consensus adds latency and relies on validator participation. During one scheduled validator update, consensus latency briefly increased by nearly 0.8 seconds. It didn’t break the system, but it reminded us that verification infrastructure has operational dependencies of its own.

Something else changed quietly inside the team. We stopped calling model outputs “trading signals.” Instead we began referring to them as AI claims. The $ROBO verification logs created a transparent record explaining why a signal was approved or rejected, which made debugging strategies much easier.
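
For a sense of what such a record can look like, here is a sketch of a JSON log line carrying the decision and its reasons. The format is hypothetical: the actual $ROBO log schema is not reproduced here, and the field names are assumptions chosen for readability.

```python
import json
from datetime import datetime, timezone


def log_record(claim_id, approved, reasons, ts=None):
    """Serialize one verification decision as a single JSON line."""
    ts = ts or datetime.now(timezone.utc)
    return json.dumps(
        {
            "claim_id": claim_id,
            "status": "approved" if approved else "rejected",
            "reasons": list(reasons),
            "ts": ts.isoformat(),
        },
        sort_keys=True,
    )
```

Keeping the reasons next to the decision is what makes debugging easier: a rejected claim can be traced to the specific context check that disagreed.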

Integration turned out to be less disruptive than expected. The models themselves stayed untouched. We simply wrapped their outputs into claim objects before sending them through the verification network. That separation meant the AI layer could evolve independently while Fabric handled trust and validation.

I remain cautious about relying too heavily on consensus systems. Validators can compare signals and detect inconsistencies, but they cannot magically produce ground truth if the underlying data is flawed. Shared blind spots in market data can still mislead both models and validators.

Still, the verification layer introduced something our trading stack lacked before: hesitation.

Previously, predictions moved straight from model output to executed trades. Now there is a brief step where decentralized validators evaluate the claim before the system commits. Supported by $ROBO incentives and distributed consensus, that pause adds a small but meaningful layer of discipline to automated decisions.

After several months of running this architecture, the biggest improvement isn’t in raw profit metrics. Those fluctuate with the market anyway.

The real difference is clarity. When a trade happens, we now understand why the system trusted the signal in the first place.

In complex AI-driven systems, intelligence alone doesn’t guarantee reliability. Sometimes trust emerges from a quiet verification layer that asks one simple question before action: does this prediction actually deserve to be trusted?