What bothered me about FabricFND was not the robot work itself. It was the promise that comes before the work.

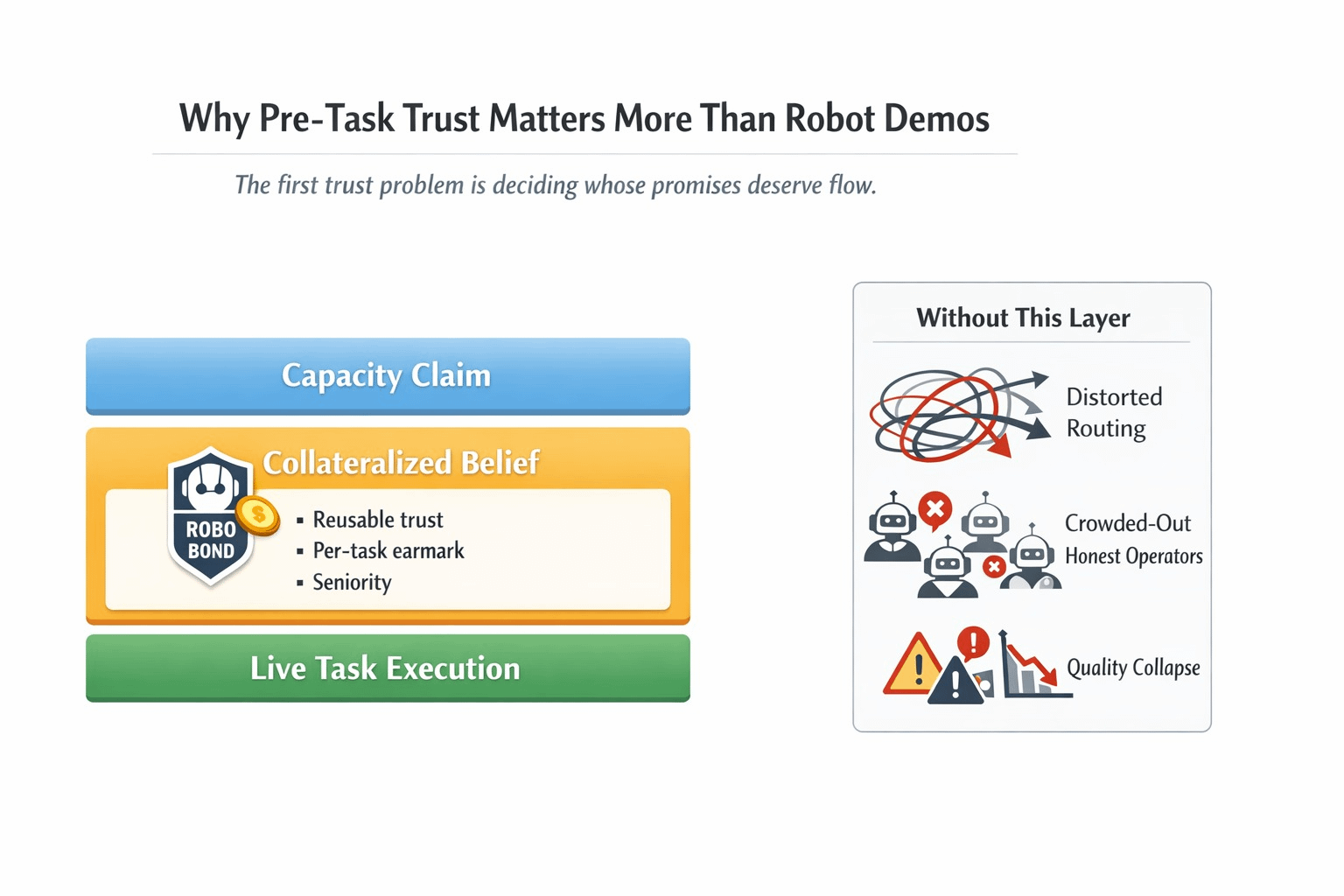

A lot of machine-economy writing jumps straight to execution, as though the hard part begins when the robot starts moving. The part I kept coming back to was uglier: someone has to believe the machine can do the job before it has even been assigned its first task. Fabric's design paper addresses this with refundable work bonds: operators post bonds sized to their declared capacity, and those bonds are not passive staking.

That sounds minor until you picture the actual workflow.

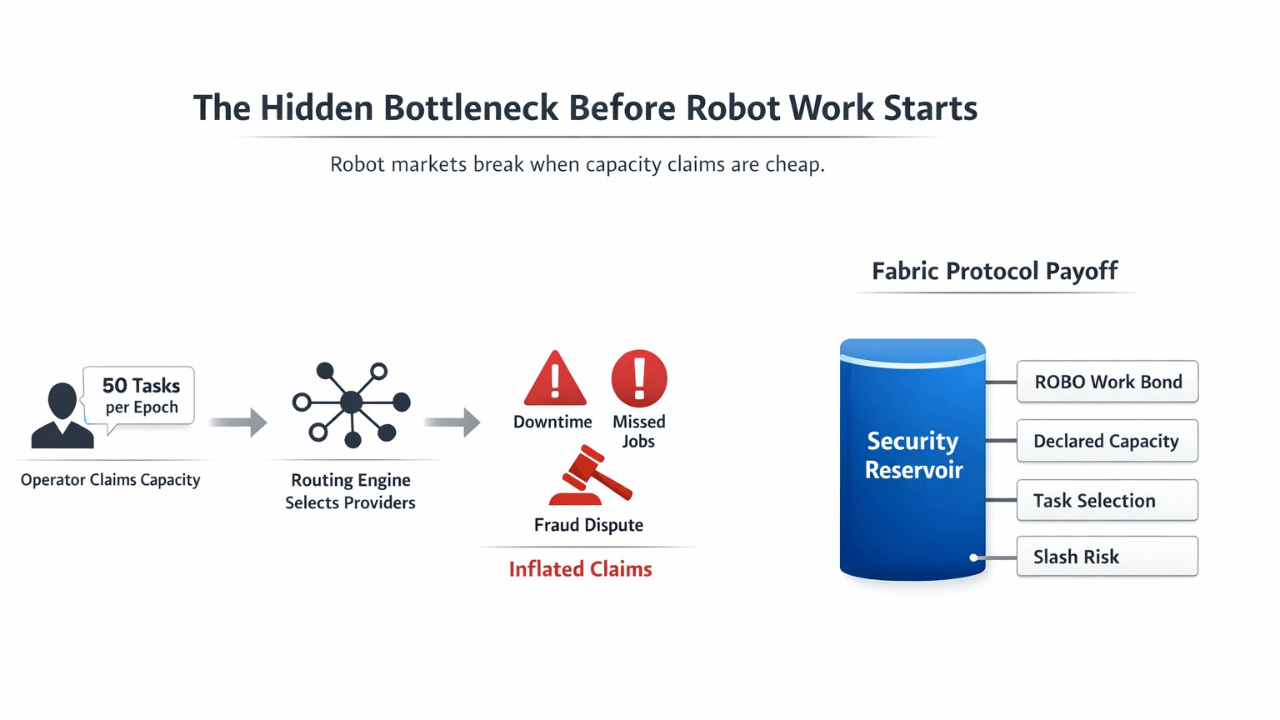

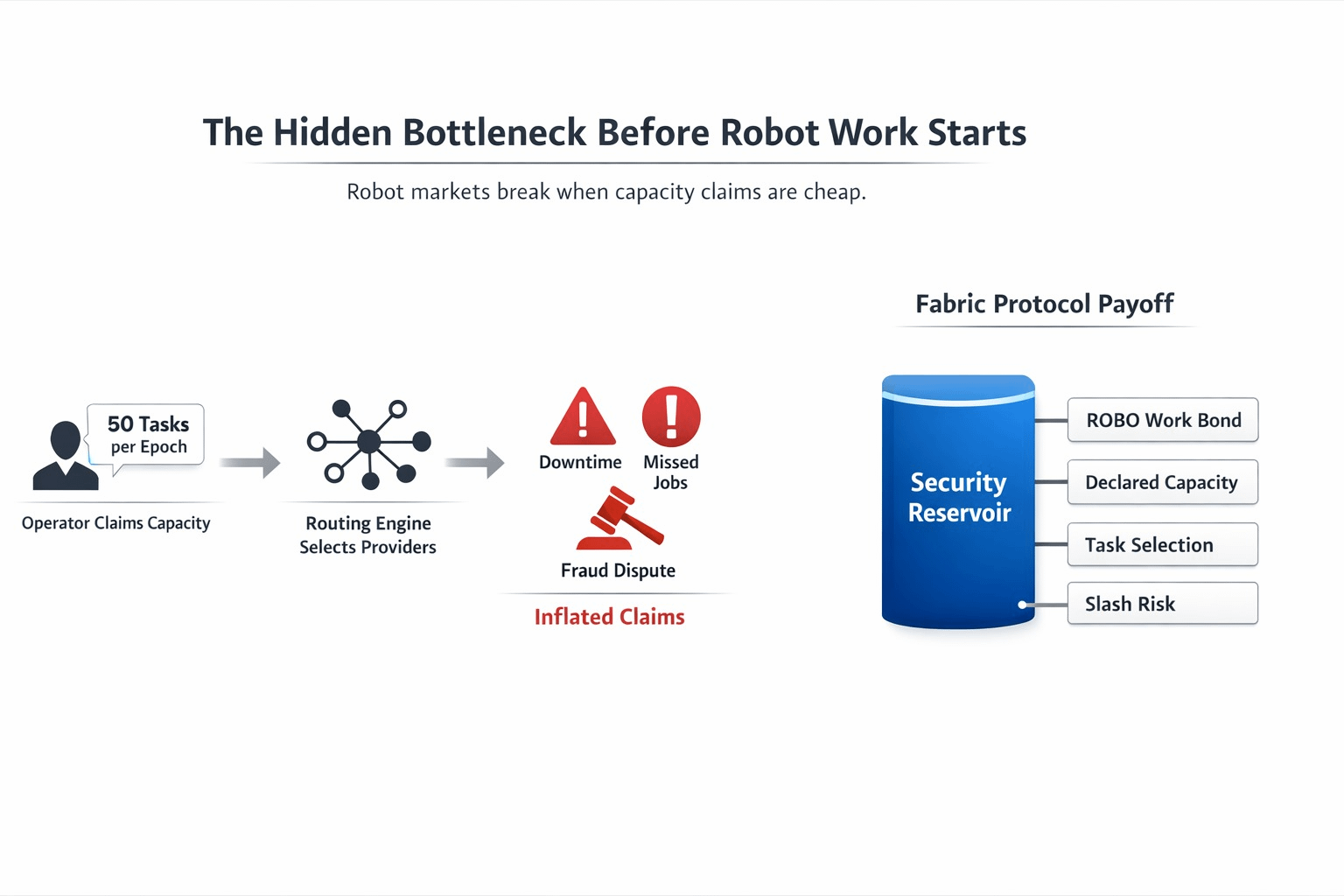

A fleet operator shows up and says its machines can handle a given volume of work this epoch. A buyer wants deliveries, inspections, data collection, or some other machine task completed on time. The network has to decide who gets selected. If capacity claims are cheap to make, the whole market is distorted before any robot has demonstrated anything. Overpromising becomes rational. Selection gets gamed. Quieter, honest operators get trampled by noisier ones. The failures surface later as lost work, wasted time, fraud cases, or quality failures. Fabric's whitepaper is unusually clear on this point: the minimum required bond scales with declared capacity, and task selection is weighted not only by the bond value held in the reservoir but also by how long the bond has been held, verified through on-chain Merkle proofs.
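To make that selection rule concrete, here is a minimal Python sketch. It assumes a linear bond requirement (a hypothetical `BOND_PER_UNIT` per unit of declared capacity) and a hypothetical capped holding-duration multiplier; Fabric's actual formula and parameters are not given here, and the Merkle-proof verification step is left out entirely. The `Operator` fields and function names are illustrative only.

```python
import random
from dataclasses import dataclass
from typing import Optional

# Hypothetical parameters; not taken from Fabric's documentation.
BOND_PER_UNIT = 10.0         # bond required per unit of declared capacity
MAX_HOLD_BONUS_EPOCHS = 30   # holding duration beyond this earns no extra weight


@dataclass
class Operator:
    name: str
    declared_capacity: int  # tasks per epoch the operator claims it can serve
    bond: float             # value currently posted as bond
    hold_epochs: int        # how many epochs the bond has been held


def min_bond(declared_capacity: int) -> float:
    """Minimum bond grows linearly with declared capacity (assumed rule)."""
    return BOND_PER_UNIT * declared_capacity


def is_eligible(op: Operator) -> bool:
    return op.bond >= min_bond(op.declared_capacity)


def selection_weight(op: Operator) -> float:
    """Bond value times a holding-duration factor in [1, 2]; zero if under-bonded."""
    if not is_eligible(op):
        return 0.0
    hold_factor = 1.0 + min(op.hold_epochs, MAX_HOLD_BONUS_EPOCHS) / MAX_HOLD_BONUS_EPOCHS
    return op.bond * hold_factor


def select_operator(ops: list[Operator], rng: random.Random) -> Optional[Operator]:
    """Pick one operator with probability proportional to its selection weight."""
    weights = [selection_weight(op) for op in ops]
    if sum(weights) == 0:
        return None
    return rng.choices(ops, weights=weights, k=1)[0]


ops = [
    Operator("steady", declared_capacity=50, bond=600.0, hold_epochs=40),
    Operator("loud", declared_capacity=200, bond=600.0, hold_epochs=1),  # would need 2000
]
print(select_operator(ops, random.Random(7)).name)  # always "steady": "loud" has weight 0
```

Under these assumptions the over-claiming operator is simply ineligible: declaring 200 units would require a 2,000-unit bond, so its weight is zero no matter how loud the claim is.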

That hidden bottleneck is what made this project look more serious to me.

The first trust problem in a robot market is not whether the machine can work. It is how expensive it is to falsely claim that you can work at scale.

The difference is less trivial than most people assume. Inflated claims are annoying enough in ordinary markets. In a machine network they poison routing, pricing, uptime expectations, and other actors' willingness to build on the system. When capacity is soft, everything built on top of it is soft too. That is why I think Fabric's framing of the Security Reservoir matters more than the cleaner headline stories. Registered operators are not simply joining a network. They are posting collateral in exchange for the right to be believed. Misconduct that can reduce the bond includes fraud, spam, and downtime, and the protocol is designed so that the penalty for fraud exceeds the potential profit on a given task.
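The post does not say how the penalty is sized, so what follows is only a sketch of the standard deterrence argument, under two stated assumptions: fraud is caught with some probability `detection_prob`, and the cheater keeps its gain even when caught. The function names are hypothetical. With certain detection it reduces to the rule described above (the slash must exceed the profit from cheating); with imperfect detection, the slash has to be scaled up.

```python
def _check_prob(detection_prob: float) -> None:
    if not 0.0 <= detection_prob <= 1.0:
        raise ValueError("detection_prob must be in [0, 1]")


def expected_cheating_payoff(gain: float, slash: float, detection_prob: float) -> float:
    """Expected payoff of cheating on one task.

    Conservative assumption: the cheater keeps `gain` whether or not it is caught,
    and loses `slash` from its bond only when the fraud is detected.
    """
    _check_prob(detection_prob)
    return gain - detection_prob * slash


def min_deterrent_slash(gain: float, detection_prob: float) -> float:
    """Slash at which cheating breaks even; any larger slash makes it a losing bet."""
    _check_prob(detection_prob)
    if detection_prob == 0.0:
        return float("inf")  # fraud that is never detected cannot be deterred by slashing
    return gain / detection_prob


def is_deterred(gain: float, slash: float, detection_prob: float) -> bool:
    return expected_cheating_payoff(gain, slash, detection_prob) < 0.0


# With perfect detection the rule reduces to "slash must exceed the task's profit".
print(min_deterrent_slash(40.0, 1.0))   # 40.0
print(min_deterrent_slash(40.0, 0.5))   # 80.0
print(is_deterred(40.0, 100.0, 0.5))    # True
```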

That is a very different mental model.

The bond is not there to make holding look attractive. It is there to make false capacity expensive. The per-task design matters too. Rather than requiring a new stake transaction every time a robot receives a job, Fabric proposes that the protocol earmark part of the existing bond as collateral for that job. Many high-frequency tasks can then be secured by the same capital, which is a very specific solution to a very specific workflow problem. A robot market is not realistic if every task requires freezing fresh capital and rebuilding trust from scratch. Fabric is trying to make trust reusable, not free.
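A minimal sketch of the earmarking idea, using a hypothetical `BondAccount` class: each job locks part of one existing bond instead of requiring a fresh stake transaction, honest completion releases the lock, and a failed task forfeits only the collateral earmarked for it. Lock sizes and method names are illustrative assumptions, not Fabric's API.

```python
class BondAccount:
    """Hypothetical per-operator bond where each job earmarks part of the same capital."""

    def __init__(self, total: float):
        self.total = total
        self.locked: dict[str, float] = {}  # task_id -> collateral earmarked for that task

    @property
    def free(self) -> float:
        """Portion of the bond not currently earmarked for any task."""
        return self.total - sum(self.locked.values())

    def lock_for_task(self, task_id: str, amount: float) -> bool:
        """Earmark `amount` of the existing bond for a task; no new stake transaction."""
        if task_id in self.locked or amount <= 0 or amount > self.free:
            return False
        self.locked[task_id] = amount
        return True

    def release(self, task_id: str) -> None:
        """Task completed honestly: the collateral returns to the free portion."""
        self.locked.pop(task_id)

    def slash(self, task_id: str) -> float:
        """Task failed verification: only that task's collateral is forfeited."""
        amount = self.locked.pop(task_id)
        self.total -= amount
        return amount


bond = BondAccount(total=1000.0)
for i in range(5):
    bond.lock_for_task(f"job-{i}", 150.0)  # five concurrent jobs on one bond
print(bond.free)               # 250.0
bond.release("job-0")
bond.slash("job-1")
print(bond.total, bond.free)   # 850.0 400.0
```

Five concurrent jobs were secured by one 1,000-unit bond, and a single failure cost only that job's 150 units of collateral.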

This was the turning point for me.

Until then I had been reading ROBO the way many people read robotics tokens: as another attempt to wrap machine behavior in crypto language. The longer I sat with the bond design, the more it looked like Fabric was preoccupied with pre-task credibility. That is a narrower and far more important problem. If the network prices belief badly, the rest of the market never gets clean enough to matter. If it prices belief well, routing, settlement, and delegation start to rest on something firmer than marketing.

And that is where ROBO stops feeling bolted on.

Fabric uses ROBO as the utility layer for these operational requirements. Operators post it as access bonds and work bonds. Token holders can delegate ROBO to an operator to increase its capacity and its probability of selection, and delegation also acts as a market-based reputation signal because delegators share the slashing risk. Fabric also indicates that services can be priced in stable-value terms for predictability, while on-chain settlement still happens in $ROBO. So the token sits exactly where the trust problem lives: in the cost of entering the market credibly, scaling capacity credibly, and settling activity natively.
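Delegation can be sketched the same way. This hypothetical `OperatorPool` assumes that delegated ROBO counts toward the operator's backing, and therefore toward its supported capacity (reusing a hypothetical linear `BOND_PER_UNIT` rule), and that a slash is split pro rata between the operator's own bond and each delegator. Fabric may split losses differently; the pro-rata rule is only an assumption chosen to show why delegators have a reason to care about operator quality.

```python
from dataclasses import dataclass, field

BOND_PER_UNIT = 10.0  # hypothetical: backing required per unit of capacity


@dataclass
class OperatorPool:
    self_bond: float
    delegations: dict[str, float] = field(default_factory=dict)  # delegator -> stake

    @property
    def total_backing(self) -> float:
        return self.self_bond + sum(self.delegations.values())

    @property
    def supported_capacity(self) -> float:
        """Delegation raises the capacity the operator can credibly declare."""
        return self.total_backing / BOND_PER_UNIT

    def delegate(self, delegator: str, amount: float) -> None:
        self.delegations[delegator] = self.delegations.get(delegator, 0.0) + amount

    def slash(self, amount: float) -> tuple[float, dict[str, float]]:
        """Split a slash pro rata across the operator and its delegators (assumed rule)."""
        total = self.total_backing
        if total <= 0.0:
            return 0.0, {}
        amount = min(amount, total)
        operator_loss = self.self_bond * amount / total
        delegator_losses = {
            name: stake * amount / total for name, stake in self.delegations.items()
        }
        self.self_bond -= operator_loss
        self.delegations = {
            name: stake - delegator_losses[name] for name, stake in self.delegations.items()
        }
        return operator_loss, delegator_losses


pool = OperatorPool(self_bond=500.0)
pool.delegate("alice", 300.0)
pool.delegate("bob", 200.0)
print(pool.supported_capacity)  # 100.0
print(pool.slash(100.0))        # (50.0, {'alice': 30.0, 'bob': 20.0})
```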

I think this is what most readers miss. The project does not become interesting when you add more robots. It becomes interesting when you think about more claims being made on behalf of robots.

That is where networks tend to get messy. Not at the demo layer. At the selection layer. At the point where a market has to decide whose promises to route work to.

The stress test is obvious, though.

Will declared capacity stay honest once real incentives show up? Can slashing be enforced tightly enough that the bond stays meaningful after the first wave of edge cases? Does delegation improve capital efficiency, or does it degenerate into lazy capital reflexively chasing the largest operators? And when bond requirements are tied to oracle prices, is the system robust enough to absorb volatility, downtime, and uneven operator quality all hitting at once? Fabric's own design makes clear that the bond requirement is pegged to a stable-value unit and paid in $ROBO via an on-chain oracle, which helps with volatility. The real test is whether that mechanism holds up under messy field conditions, not clean diagrams.
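The oracle conversion can be sketched as follows, assuming a hypothetical `OracleReading` that quotes the price of one ROBO in the stable-value unit, plus a hypothetical staleness limit. The bond requirement stays fixed in stable-value terms; only the ROBO amount moves with the price. This illustrates the volatility cushion described above, and the stale-price check hints at one of the field conditions the mechanism still has to survive.

```python
from dataclasses import dataclass

MAX_ORACLE_AGE_S = 600  # hypothetical: reject prices older than ten minutes


@dataclass
class OracleReading:
    price: float    # stable-value units per 1 ROBO (hypothetical feed)
    timestamp: int  # unix seconds when the price was published


def required_bond_robo(required_stable: float, reading: OracleReading, now: int) -> float:
    """Convert a bond requirement pegged in stable-value units into ROBO."""
    if now - reading.timestamp > MAX_ORACLE_AGE_S:
        raise RuntimeError("oracle price is stale; refusing to recompute the bond")
    if reading.price <= 0.0:
        raise ValueError("oracle price must be positive")
    return required_stable / reading.price


def top_up_needed(posted_robo: float, required_stable: float,
                  reading: OracleReading, now: int) -> float:
    """ROBO the operator must add to stay at the pegged requirement (0 if covered)."""
    return max(0.0, required_bond_robo(required_stable, reading, now) - posted_robo)


# Requirement fixed at 500 stable units; the ROBO price halves between readings.
print(required_bond_robo(500.0, OracleReading(0.50, 1_000), now=1_100))     # 1000.0
print(top_up_needed(1000.0, 500.0, OracleReading(0.25, 2_000), now=2_100))  # 1000.0
```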

That is why this angle stayed with me.

A robot economy does not fail first because machines cannot move. It fails first because markets cannot tell which machine operators to trust, and only later at the level of movement.

Fabric interests me because it is trying to make that trust surface expensive, explicit, and reusable.

If that layer holds, the rest of the story gets a chance.

If it does not, the robot never really arrives.

$ROBO #ROBO @Fabric Foundation