What kept nagging me about SIGN was not the clean moment when a rule gets written down and becomes a permanent record. It was the uglier moment later, when someone realizes the record is wrong.

Not fake. Not malicious. Just wrong in the ordinary way that real systems keep producing.

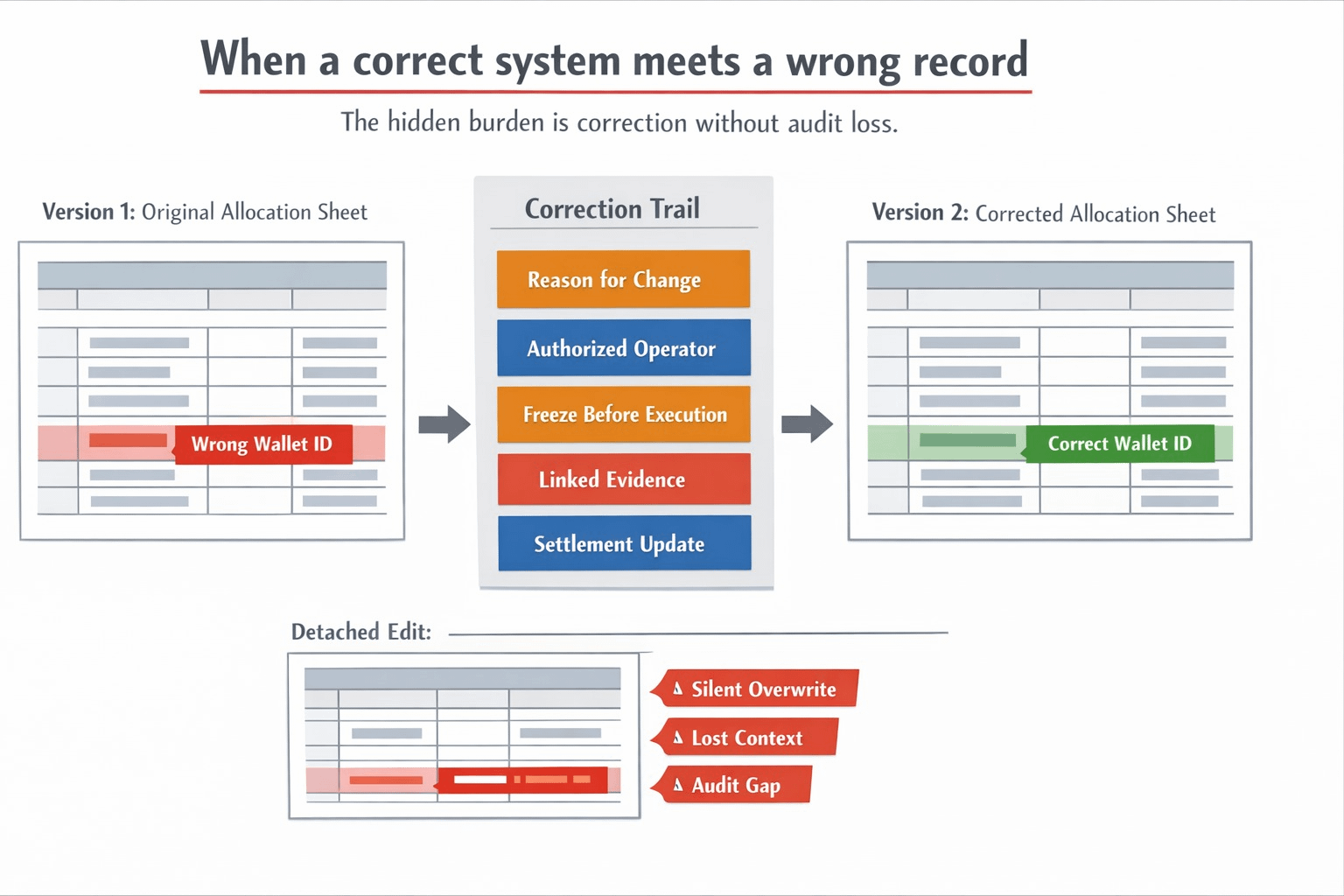

A beneficiary is matched to the wrong wallet. An allocation row still holds an old identifier. A team member posts a table that was correct yesterday but is stale today. A claim has to be frozen while it waits to be resolved, yet the process around it is already in motion. That last case is where much of the so-called trust infrastructure stops looking trustworthy to me, because the real problem is no longer establishing something. The real problem is fixing something without erasing the audit trail that made the system credible in the first place.

That hidden burden is what made SIGN click for me.

Most people talk about verification as if the hard part were getting a proof onchain or getting a rule into a structured format. I think the uglier part comes later, when the structure is real, the proof is sound, and yet the decision is heading in the wrong direction because the underlying record has to change. If you edit it silently, trust is lost. If you leave the bad record in place and patch around it outside the system, trust dies more slowly. If you change the old condition without recording how you changed it, the next operator can tell that something changed, but not why, when, or by whom. That is not a technical edge case. That is ordinary operations.

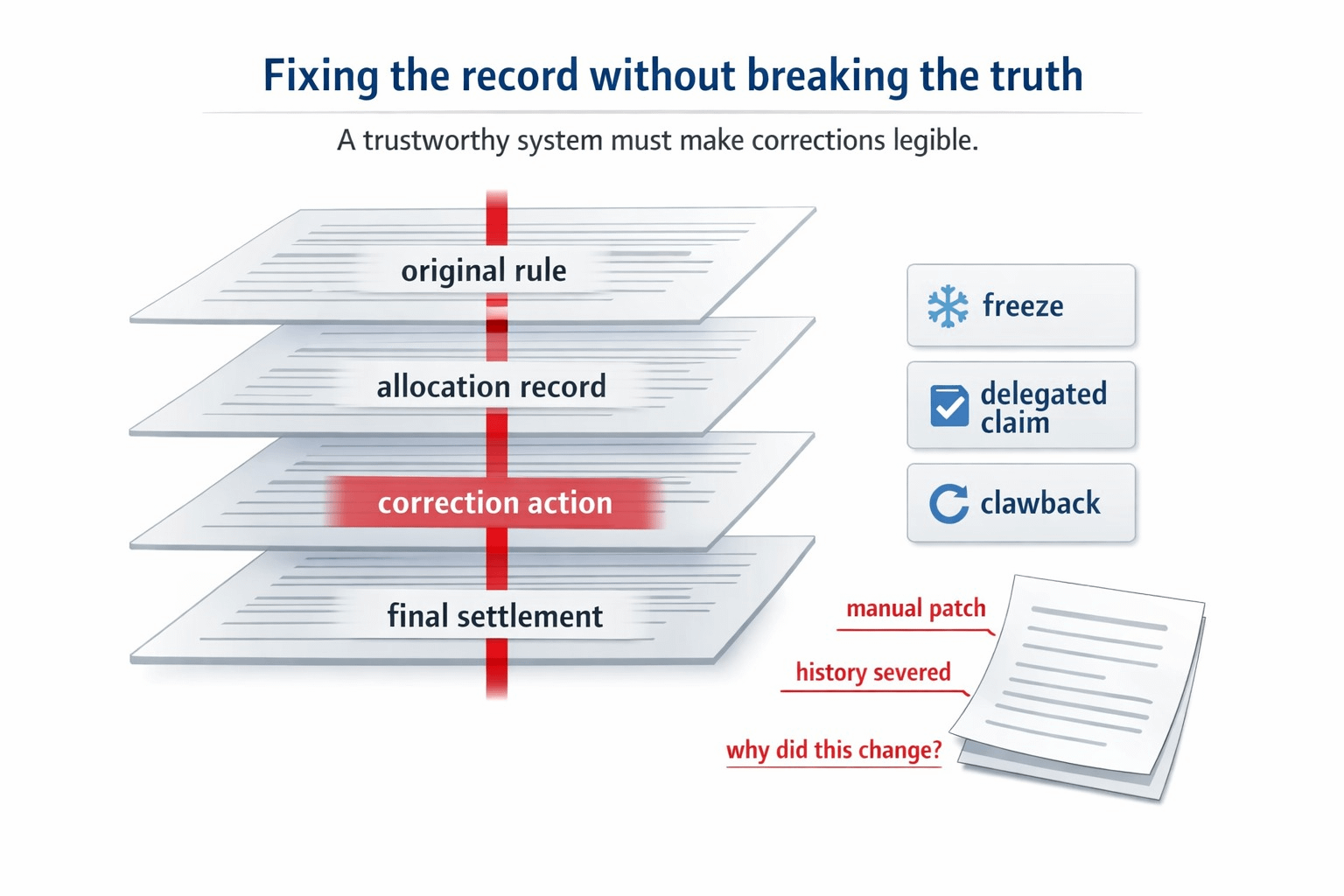

The workflow scene I could not get out of my head was simple. A program has already defined its allocations and its eligibility rules. On day one everything looks tidy. Then on day three a mistake surfaces. Perhaps one recipient should be excluded. Perhaps another needs a freeze. Perhaps a delegated claimant has to act because the original address is no longer usable. Now the system has a much harder job than checking the original record. It has to preserve the fact that the original record existed, preserve the authority to correct it, preserve the reason the correction was made, and preserve the ability to replay the final decision later without forcing anyone to reconstruct the story from side notes and internal chats.
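To make that day-three scene concrete, here is a minimal sketch of an append-only correction log in Python. It is my own illustration, not TokenTable's or Sign Protocol's actual data model: the `Event` fields, the event kinds, and the recipient and operator names are all hypothetical. The point is only that the original rows are never edited, every correction carries an actor and a reason, and the state at any point can be replayed from the log.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    """One immutable entry in the log (hypothetical shape, for illustration)."""
    seq: int
    kind: str            # "allocate", "exclude", "freeze", or "delegate"
    recipient: str
    actor: str           # who had the authority to record this event
    reason: str          # why the event was recorded
    amount: int = 0
    delegate: Optional[str] = None


def replay(events, upto=None):
    """Rebuild allocation state from the log, optionally only up to seq `upto`."""
    state = {}
    for e in sorted(events, key=lambda ev: ev.seq):
        if upto is not None and e.seq > upto:
            break
        if e.kind == "allocate":
            state[e.recipient] = {
                "amount": e.amount,
                "status": "active",
                "claimant": e.recipient,
            }
        elif e.kind == "exclude":
            state[e.recipient]["status"] = "excluded"
        elif e.kind == "freeze":
            state[e.recipient]["status"] = "frozen"
        elif e.kind == "delegate":
            state[e.recipient]["claimant"] = e.delegate
        else:
            raise ValueError(f"unknown event kind: {e.kind}")
    return state


# Day one: the tidy original manifest. Day three: corrections are appended.
log = [
    Event(1, "allocate", "alice", "program", "initial manifest", amount=100),
    Event(2, "allocate", "bob", "program", "initial manifest", amount=200),
    Event(3, "allocate", "carol", "program", "initial manifest", amount=300),
    Event(4, "exclude", "alice", "ops-1", "failed eligibility re-check"),
    Event(5, "freeze", "bob", "ops-1", "disputed identifier"),
    Event(6, "delegate", "carol", "ops-2", "original address unusable",
          delegate="carol-backup"),
]

day_one = replay(log, upto=3)   # the state as originally issued
final = replay(log)             # the state after all corrections
```

Replaying with `upto=3` still shows alice as active, while the full replay shows her excluded, bob frozen, and carol's claim delegated. Nothing has to be reconstructed from side notes, because the who and the why sit in the same log as the what.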

This is where a project like SIGN starts to look like more than it first appears.

It was not anything flashy that changed my mind. It was how the stack seems to be built for revision pressure rather than pretending revisions are rare. The more I looked at TokenTable, the more noticeable that became. It is not presented as a single distribution sheet. It is built around versioned tables, freezes, revocations, delegated claims, and execution evidence that can be replayed later. Sign Protocol underneath is not there simply to help a record exist. It is there so that the correction path is also tied to evidence rather than drifting into administrative memory. That matters because a correction is only credible when it is anchored to the original logic rather than sitting outside it.

That is the bottleneck I think many people overlook.

Rules-based systems rarely fail because nobody wrote a rule.

They fail because reality forces an adjustment and the system cannot absorb it honestly.

Once that happens, teams start to improvise. A spreadsheet gets edited. A backend flag changes. A support operator is told to handle this one manually. A payout is paused without enough surrounding context. The next auditor can see that something changed, but not whether the change was valid, justified, or even related to the original ruleset. People still call it the workflow, but I would not call it clean infrastructure. It becomes a trail of exceptions that only makes sense to the people who were there.

That is where SIGN stopped being a generic verification project to me and became something closer to correction infrastructure.

Once you put on that lens, the project-native evidence is not hard to see. TokenTable's architecture is built to support versioned allocation logic rather than assuming the initial manifest is always final. It explicitly describes freeze, clawback, and delegated execution paths, which matters because that is exactly where corrections become operationally dangerous when they are not tied to evidence. Sign Protocol's use of schemas, attestations, and queryable records is relevant here for the same reason. A correction is not credible because someone claims it had to happen. It is credible when the before, the after, the authority, and the settlement path can still be read as one chain.
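One way to picture the before, the after, and the authority reading as a single chain is a hash-linked list of correction records. The Python sketch below is an assumption of mine for illustration only. It does not use or describe Sign Protocol's real schemas or APIs, and `append_correction`, `verify_chain`, the field names, and the placeholder wallet strings are all hypothetical.

```python
import hashlib
import json


def record_hash(record):
    """Deterministic SHA-256 over a JSON-serializable record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_correction(chain, before, after, authority, reason):
    """Append a record that links back to the previous entry's hash."""
    prev = chain[-1]["hash"] if chain else None
    body = {
        "prev": prev,
        "before": before,
        "after": after,
        "authority": authority,
        "reason": reason,
    }
    entry = dict(body, hash=record_hash(body))
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every entry's hash and back-link are intact."""
    prev = None
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


chain = []
# Original issuance: there is no "before" state yet.
append_correction(chain, None, {"wallet": "0xOLD", "amount": 100},
                  "program", "initial manifest")
# Day-three correction: the wallet was wrong, the amount is unchanged.
append_correction(chain,
                  {"wallet": "0xOLD", "amount": 100},
                  {"wallet": "0xNEW", "amount": 100},
                  "ops-1", "beneficiary matched to wrong wallet")

ok_before = verify_chain(chain)        # the untouched chain verifies

chain[0]["after"]["amount"] = 999      # simulate a silent edit to history
ok_after = verify_chain(chain)         # the tampered chain no longer verifies
```

The silent edit is exactly the failure mode described above. The difference here is that the next operator does not have to notice it by luck, because the stored hash of the first record no longer matches its contents.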

That was the turning point for me.

I stopped seeing the problem as: how do we prove the decision?

I started seeing it as: how do we repair the decision without distorting the record of how it changed?

That is a far more frustrating problem. It is also a far more valuable one.

Many systems can show you the final state. Fewer can show you how a correction was made in a way that stays legitimate under pressure. That difference is not as trivial as people like to believe. A finished table can look clean despite the messy process that produced it. What I care about is whether the mess can be made legible. When a system keeps working through bad inputs, late freezes, delegated claims, and after-the-fact revisions, it starts to feel like real infrastructure rather than simulated accuracy.

It is also the first angle from which SIGN feels natural to me as something designed rather than branded. The token is not interesting to me because a phrase like digital trust sounds important. What interests me is whether these rails end up underneath workflows where revision, evidence linkage, version changes, and reproducible settlement are routine operations rather than exceptions. If the hard work lies in correcting records without cutting them off from their own history, then the mechanism on the correction path matters far more than the original issuance did. That is where I start to believe the token is earned, because the ugly middle is where systems actually get tested.

I do think this is exactly where the pressure should stay. When revisions pile up, does the correction trail stay clean? Do freezes and clawbacks still read as legitimate policy actions rather than better-dressed admin overrides? When a delegated claimant steps in, does the system keep enough context for the change to feel native to the rules rather than bolted onto them? And when operators are moving at full speed, does versioning really protect integrity, or does it merely leave a tidier trail of widening discretion?

That question interests me more than whether the first attestation verifies.

Because the easiest system in the world is one that only has to be right once.

The harder one is the system that can admit a mistake, make the correction, and still keep the truth intact.

@SignOfficial #SignDigitalSovereignInfra $SIGN