What kept bothering me about SIGN was not the part where a program decides who is eligible. That is usually the part where every system looks convincing. The ugly part shows up later, once money is already moving and someone has to prove that what left the budget is exactly what the rules authorized. It is easy to say, but once you sit with the workflow it is basic. One table defines allocations. Another system executes claims. A third surface records settlement. Then an auditor or operator has to answer the next, and most dangerous, question in the whole process: was value moved exactly the way the evidence said it should be, or are we looking at a mismatch that nobody could see until execution? That is the question I kept coming back to with SIGN. TokenTable is explicitly framed around deterministic allocation outputs, budget traceability, and replayable audits, while Sign Protocol is the evidence layer that ties eligibility, manifests, execution, and settlement together.

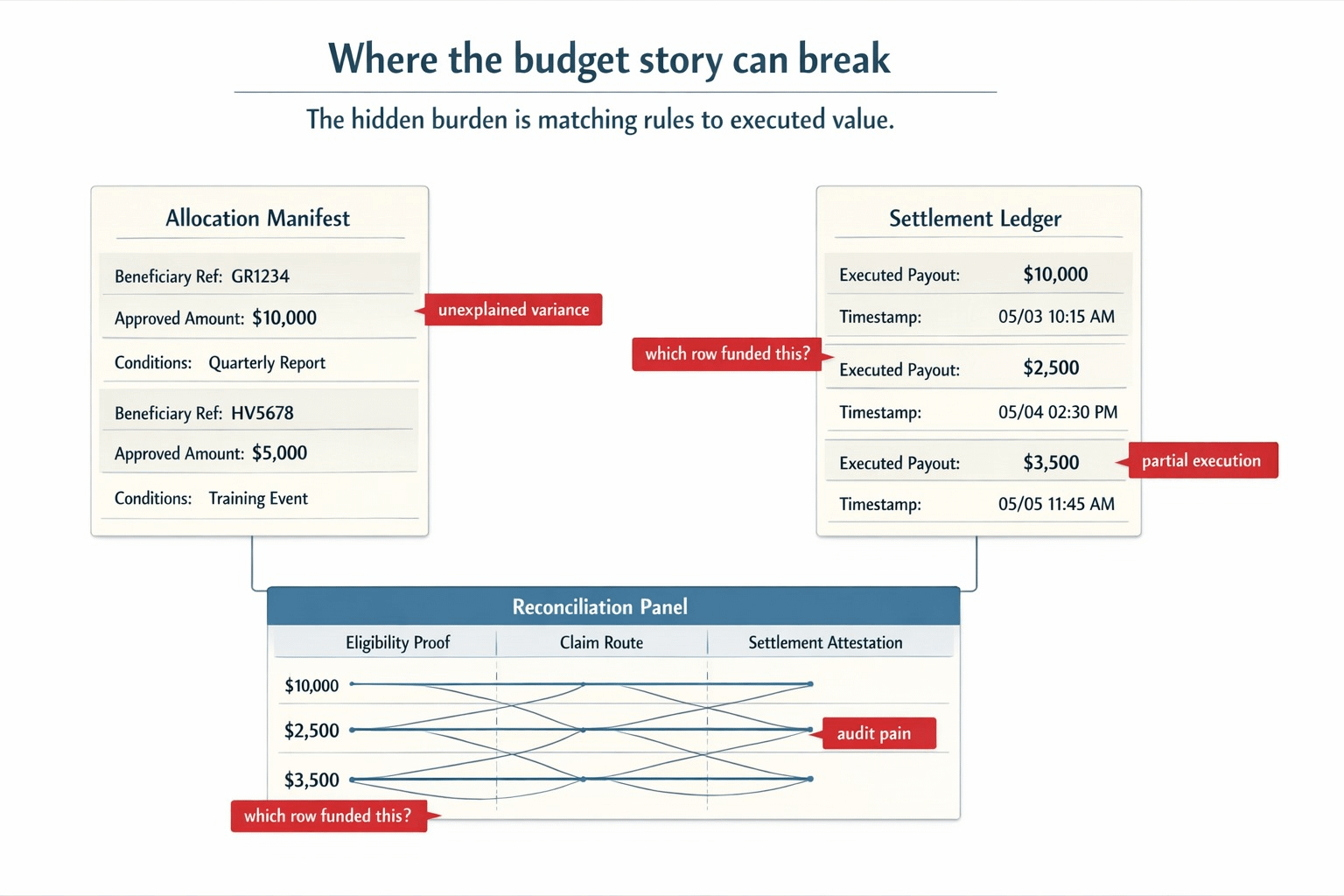

That is the hidden burden. Many people treat distribution as if the hard part is establishing eligibility before funds go out. I think the more unpleasant problem starts afterward, when a program has to reconcile what it meant to deliver with what actually ran. Not in theory. In records. In amounts. In timing. Under one chain of evidence that connects a beneficiary reference to an allocation row, then to a claim path, then to a settlement outcome. The looser that chain is, the more fragile the system is than it looks. You can have good evidence at every step and still lack a single dependable answer about how the budget was spent. TokenTable's design language keeps circling this problem: allocation tables, claim execution, batched settlement, deterministic reconciliation, and audit tooling. That is not the vocabulary of a decorative product. It faces the mess directly.
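
To make that chain concrete, here is a minimal Python sketch. The record types (`Eligibility`, `AllocationRow`, `Claim`, `Settlement`) and the `trace` function are my own hypothetical illustration, not Sign Protocol or TokenTable schemas. The point it shows is simple: a settlement should resolve back to eligibility evidence through explicit joins, and the first missing join should fail loudly instead of being papered over.

```python
from dataclasses import dataclass

# Hypothetical record shapes for illustration only.
# They are NOT Sign Protocol or TokenTable schemas.

@dataclass(frozen=True)
class Eligibility:
    id: str
    beneficiary_ref: str

@dataclass(frozen=True)
class AllocationRow:
    id: str
    eligibility_id: str
    amount: int  # smallest token unit, avoids float rounding

@dataclass(frozen=True)
class Claim:
    id: str
    allocation_id: str
    path: str  # e.g. "direct", "delegated", "batched"

@dataclass(frozen=True)
class Settlement:
    id: str
    claim_id: str
    amount: int


class BrokenChain(Exception):
    """Raised when a settlement cannot be joined back to eligibility evidence."""


def trace(settlement, claims, allocations, eligibility):
    """Walk settlement -> claim -> allocation row -> eligibility.

    Each lookup table is a dict keyed by record id. Returns the
    beneficiary reference plus every record on the path, or raises
    BrokenChain naming the first link that does not resolve.
    """
    claim = claims.get(settlement.claim_id)
    if claim is None:
        raise BrokenChain(f"{settlement.id}: unknown claim {settlement.claim_id}")
    row = allocations.get(claim.allocation_id)
    if row is None:
        raise BrokenChain(f"{claim.id}: unknown allocation row {claim.allocation_id}")
    elig = eligibility.get(row.eligibility_id)
    if elig is None:
        raise BrokenChain(f"{row.id}: unknown eligibility {row.eligibility_id}")
    return elig.beneficiary_ref, row, claim, settlement
```

Calling `trace(Settlement("s1", "c1", 500), claims, rows, elig)` on consistent tables returns the beneficiary reference and the full path; a settlement pointing at a claim id nobody recorded raises `BrokenChain` immediately rather than surfacing months later in a spreadsheet.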

The workflow scene that felt real to me was painfully ordinary. A program finalizes an allocation table, and it looks complete. Beneficiaries claim later, through different paths. Some claims are direct. Some are delegated. Some are batched. Some land at different times. Settlement evidence comes back loosely enough to look reassuring. But the moment someone tries to replay the program, the work turns ugly fast unless every movement is still anchored to the same evidence trail. Which allocation row did this payment satisfy? Which eligibility evidence was that row based on? Was this freeze applied before this execution or not? Did this clawback reverse the right payout, or just some unrelated adjustment? When those questions drift off the evidence path and into side spreadsheets or administrators' memory, the program does not stop, but its credibility story breaks. TokenTable is designed so that allocation manifests become evidence, execution results become settlement attestations, and the whole sequence can be replayed deterministically for audit. That kind of detail is what made the project feel less like a pitch and more like it was addressing a real operational headache.
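
The questions above are essentially ordering checks, so here is a minimal sketch of what a deterministic replay might look like. The `Event` shape, the three event kinds, and the rules (no payout on a frozen row, no overpaying a row, a clawback must reverse a live payout on the same row) are hypothetical assumptions of mine, not TokenTable's actual format or logic. Replay sorts on a global sequence number, so the same inputs always produce the same result.

```python
from dataclasses import dataclass

# Hypothetical event shape for illustration only; not a TokenTable format.
@dataclass(frozen=True)
class Event:
    seq: int          # global ordering key; replay sorts on this
    kind: str         # "settle", "freeze", or "clawback"
    row_id: str
    payout_id: str = ""
    amount: int = 0


def replay(allocations, events):
    """Deterministically replay events against allocation amounts.

    allocations: dict row_id -> allocated amount.
    Returns (net paid per row, list of human-readable violations).
    """
    paid = {row: 0 for row in allocations}
    frozen = set()
    payouts = {}          # payout_id -> (row_id, amount)
    reversed_ids = set()  # payouts already clawed back
    violations = []
    for ev in sorted(events, key=lambda e: e.seq):
        if ev.row_id not in allocations:
            violations.append(f"seq {ev.seq}: unknown row {ev.row_id}")
        elif ev.kind == "freeze":
            frozen.add(ev.row_id)
        elif ev.kind == "settle":
            if ev.row_id in frozen:
                violations.append(f"seq {ev.seq}: payout on frozen row {ev.row_id}")
            elif ev.payout_id in payouts:
                violations.append(f"seq {ev.seq}: duplicate payout {ev.payout_id}")
            elif paid[ev.row_id] + ev.amount > allocations[ev.row_id]:
                violations.append(f"seq {ev.seq}: overpays row {ev.row_id}")
            else:
                paid[ev.row_id] += ev.amount
                payouts[ev.payout_id] = (ev.row_id, ev.amount)
        elif ev.kind == "clawback":
            target = payouts.get(ev.payout_id)
            if target is None or ev.payout_id in reversed_ids:
                violations.append(
                    f"seq {ev.seq}: clawback of unknown or reversed payout {ev.payout_id}")
            elif target[0] != ev.row_id:
                violations.append(f"seq {ev.seq}: clawback row mismatch for {ev.payout_id}")
            else:
                paid[ev.row_id] -= target[1]
                reversed_ids.add(ev.payout_id)
        else:
            violations.append(f"seq {ev.seq}: unknown event kind {ev.kind}")
    return paid, violations
```

The design choice worth noting is that a freeze only blocks settlements with a later sequence number, which is exactly the "was this freeze applied before the execution?" question, and a clawback is tied to a specific payout id rather than being a free-floating negative adjustment.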

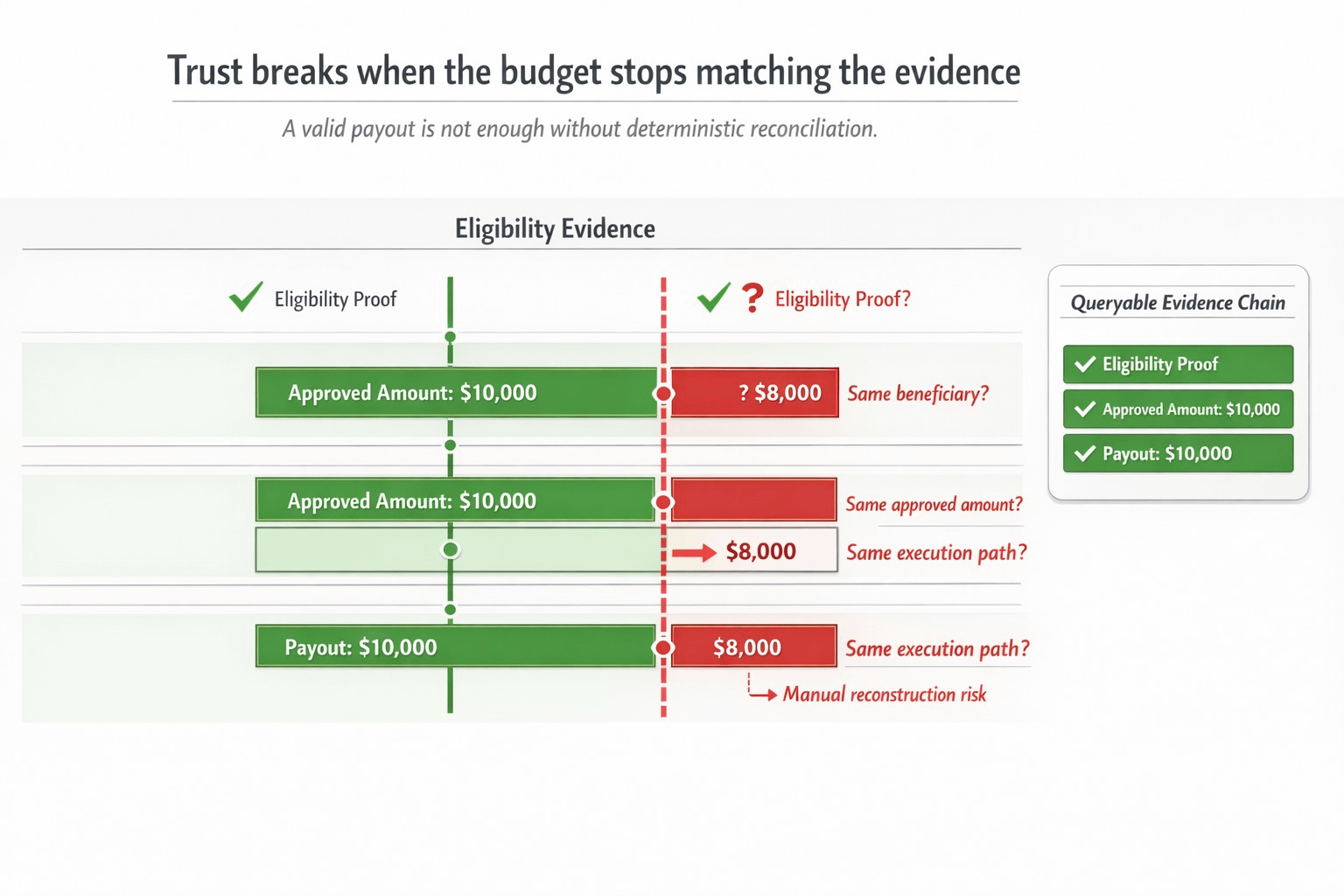

That is where SIGN started to matter to me more than the clean headline about verifiable records. What changed my view of how native the project is was not a single feature. It was how the stack seems built to stay readable once budget movement stops being tidy and the workflow gets messy. Sign Protocol standardizes schemas and attestations, supports fully on-chain, off-chain, and hybrid data placement, and uses SignScan to query across supported chains and storage in a uniform way. That matters here because reconciliation breaks down when the evidence of who qualified, the record of what was allocated, and the proof of what was settled can no longer be read as one continuous sequence. The protocol is not just helping a claim exist. It is helping the later read path stay structured, so operators and auditors can answer the hard question without rebuilding the whole story by hand.

My real light-bulb moment was realizing that the worst failure here is not always fraud. It is unexplained variance. A program can bleed confidence before it bleeds money. The budget says one thing. The settlement ledger says another. The eligibility trail technically exists, but the joins are weak. From then on, every later review starts from suspicion, not because any single record is false, but because no one can quickly collapse the whole trail into one reliable path. That is where so much "trust infrastructure" quietly turns into reconciliation theater. You have proof objects. You have logs. You have indexes. Yet the operator is still doing detective work to find out whether the system actually behaved the way the rules dictated. Sign's broader framing of evidence for audits and disputes, deterministic reconciliation, and budget traceability made this feel far more native to the stack than generic crypto storytelling ever could.
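
"Unexplained variance" can be stated as plain arithmetic, so here is a minimal sketch of it. The `explained` input is a hypothetical assumption of mine: the portion of each row's gap that is backed by some linked record, such as a freeze or clawback attestation. Per row, the unexplained amount is allocated minus settled minus explained; positive means under-delivered, negative means paid out with nothing in the budget or the evidence to justify it.

```python
def unexplained_variance(budget, settlements, explained):
    """Report per-row variance that no linked record accounts for.

    budget:      dict row_id -> allocated amount
    settlements: iterable of (row_id, amount) pairs actually settled
    explained:   dict row_id -> portion of the gap backed by a linked
                 record (hypothetical, e.g. a freeze or clawback attestation)

    Returns dict row_id -> unexplained amount, omitting rows that reconcile.
    Positive = under-delivered, negative = paid without budget backing.
    """
    settled = {}
    for row_id, amount in settlements:
        settled[row_id] = settled.get(row_id, 0) + amount

    report = {}
    # Include settled rows missing from the budget: orphan payouts are variance too.
    for row_id in sorted(set(budget) | set(settled)):
        gap = budget.get(row_id, 0) - settled.get(row_id, 0) - explained.get(row_id, 0)
        if gap != 0:
            report[row_id] = gap
    return report
```

With a budget of `{"a1": 500, "a2": 300, "a3": 200}`, settlements `[("a1", 500), ("a2", 100), ("x9", 50)]`, and `{"a2": 200}` explained by a linked freeze, the report flags only `a3` (200 never delivered and never explained) and `x9` (50 paid against no budget row). Every record can be individually valid and this report can still be non-empty, which is the failure mode described above.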

That was the turning point. I stopped thinking of the burden as making a beneficiary eligible. I started thinking the real burden was proving the budget stayed honest once execution had begun. That changes how I look at the whole project. A valid claim is not enough. A valid allocation is not enough. A valid settlement event is not enough on its own. The question is whether all three can still be read together once claims, delegation, freezes, vesting, or clawbacks complicate the program. That is a far uglier problem than simply making things verifiable, and it is why the combination of Sign Protocol and TokenTable seems more serious to me than many cleaner-sounding systems.

This is also the lens through which $SIGN makes more sense to me, by design rather than by association. I do not care about the token because words like trust or infrastructure sound big. I care when these rails sit underneath programs where evidence has to keep matching execution, again and again, in real workflows. If the hard work lies in repeatedly reconciling allocation logic, claim paths, settlement records, and later audits, then that recurring load matters more than the original issuance moment. That is an inference, but it is the first one that made the token feel like it carried real weight rather than resting on a loose story about verification.

The hard part is what I am still watching. Does deterministic reconciliation stay clean when a program has multiple claim routes, multiple operators, and multiple vesting waves or exceptions? Does audit pain actually drop when linked settlement attestations live in hybrid storage behind indexed query layers? If a clawback or freeze hits a half-executed budget, does the system preserve enough continuity that someone who was not present for the decision can still tell what the final budget story is? And does budget traceability hold up at a smaller scale, or does it gradually become a prettier face over the same old reconciliation liability? To me, that matters more than whether a proof was written cleanly in the first place.

Because the promise is not the part a distribution system really rests on.

It rests on the moment someone asks where the money went, and the answer does not require detective work.

@SignOfficial #SignDigitalSovereignInfra $SIGN