@MidnightNetwork stands out, but not for the reason most people are chasing it.

It’s not just another project selling privacy as a virtue. If anything, it feels like a response to a quiet failure the industry still avoids admitting. We spent years treating transparency like a default good, as if more visibility automatically meant more trust. In reality, it exposed users, businesses, and workflows in ways that become untenable once real stakes are involved.

Midnight seems built with that tension in mind. Not everything should be public. That part is obvious. What’s less obvious, and much harder, is deciding what stays hidden, what stays visible, and how those boundaries hold when real usage begins.

That’s where this gets interesting.

Because Midnight isn’t taking the easy route either. It’s not going full black box and asking everyone to trust the system blindly. That model has already played out, and it usually ends the same way. A small group defends it, while everyone else hesitates to build on something they can’t properly inspect or debug.

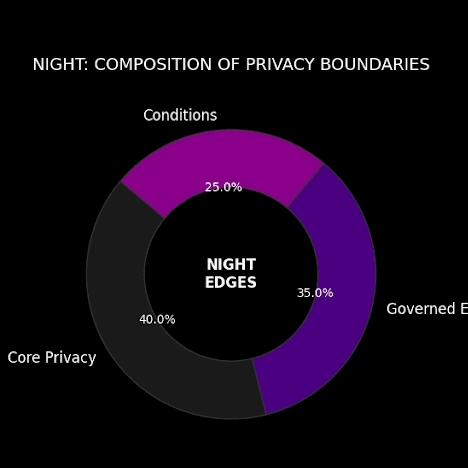

Instead, Midnight looks like it’s trying to balance both sides. Selective privacy. Controlled disclosure. A system where information can move without being fully exposed.

That sounds reasonable on paper. But paper is the easiest environment any system will ever operate in.

The real story starts later.

It starts when developers begin integrating it and run into edge cases that weren’t obvious before. When users hit friction and don’t understand whether it’s their mistake or the system’s design. When something breaks and the usual tools for diagnosing problems don’t work the same way because part of the system is intentionally hidden.

That’s the phase where most projects start to unravel.

Not in dramatic ways. Quietly. Through confusion, delays, and small failures stacking up. Support requests that don’t get clear answers. Documentation that made sense until it didn’t. Teams realizing that what looked elegant in theory becomes heavy in practice.

Privacy makes all of that harder.

Every hidden layer adds operational weight. Every protected interaction limits visibility into what went wrong. And someone, somewhere, has to translate that complexity into something users and developers can actually work with.

This is the part the industry consistently underestimates.

Midnight, whether it means to or not, is walking straight into that challenge. Its structure suggests a deliberate split between public value and private execution. That’s a serious design decision. But serious decisions don’t just solve problems. They create new ones.

The question isn’t whether the architecture is clever. It’s whether it stays usable when things stop being ideal.

Can developers debug without turning the process into guesswork?

Can users trust outcomes without needing to understand every hidden step?

Can the system remain clear enough to work with, even when parts of it are meant to stay invisible?

Those are not philosophical questions. They’re operational ones.

And that’s why Midnight feels less like a privacy project and more like a stress test.

If it works, it won’t be because privacy sounds compelling. It will be because the system survives real conditions. Load, confusion, edge cases, and all the small pressures that expose whether something is actually built to last.

If it fails, it won’t be surprising either. Most systems do at this stage. Not because the ideas were wrong, but because the execution couldn’t carry the weight.

That’s the lens worth using.

Not the narrative. Not the positioning. Just the behavior under pressure.

Because in the end, the only thing that matters is whether this holds up when people start using it like they mean it.