I noticed that even as systems became faster and more efficient, trust didn’t evolve alongside them. People still relied on screenshots, unverifiable claims, and social signals to establish credibility. Projects spoke about decentralization, yet quietly depended on centralized checkpoints: KYC providers, internal databases, curated access layers.

The architecture looked open, but the trust layer wasn’t.

That disconnect wasn’t dramatic. It was subtle. But it kept repeating.

Looking closer, the issue wasn’t a lack of innovation. If anything, there were too many identity frameworks, each introduced with strong conceptual backing, yet rarely embedded into real workflows.

Ideas sounded important but didn’t translate into practice.

And beneath that, there was friction. Every identity system seemed to ask users to do something extra: verify again, connect again, prove again. It never felt like part of the natural flow. Users didn’t reject these systems, but they didn’t rely on them either.

What felt off wasn’t just technical; it was behavioral.

Trust was still being approximated socially instead of verified structurally.

That realization changed how I started evaluating systems.

I stopped asking whether something made sense in theory and started asking whether it aligned with how people actually behave. I moved from narrative to execution, from features to workflows.

And one idea became central:

Systems should work quietly in the background without demanding attention.

The most effective infrastructure doesn’t introduce itself. It disappears into the experience. Payments are a simple example: no one thinks about settlement layers or clearing systems. They just transact. The system works because it removes the need to think about trust.

That became my filter: if identity required awareness, it probably wasn’t working.

This is where I started paying attention to attestations and, more specifically, to how they’re structured within Sign Protocol.

Not because it promises to solve identity, but because it reframes the problem in a more grounded way.

What if identity isn’t something you hold, but something that is continuously proven?

At first, this felt almost too minimal to matter. But upon reflection, that minimalism is what makes it usable.

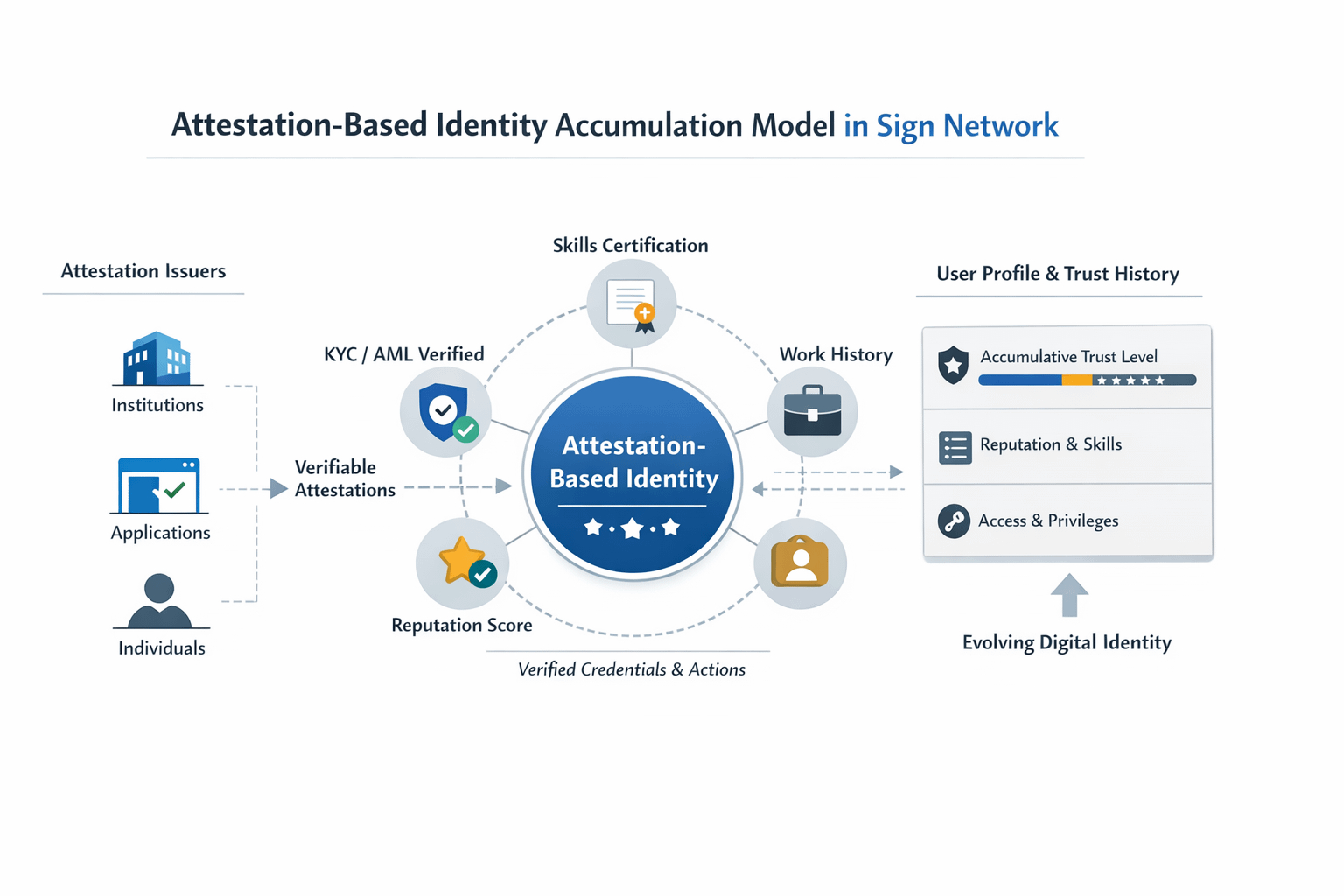

Instead of constructing a fixed identity profile, the system allows entities (applications, institutions, or individuals) to issue attestations. These are verifiable claims tied to specific actions, credentials, or behaviors. They don’t attempt to define a user entirely. They capture fragments of verified truth.

And over time, those fragments begin to accumulate.

The deeper question this raises is structural:

Can identity become infrastructure instead of a feature?

Most systems treat identity as an optional layer, something you engage with when needed. But infrastructure behaves differently. It becomes embedded into every interaction, often without users noticing.

Attestations move identity closer to that model.

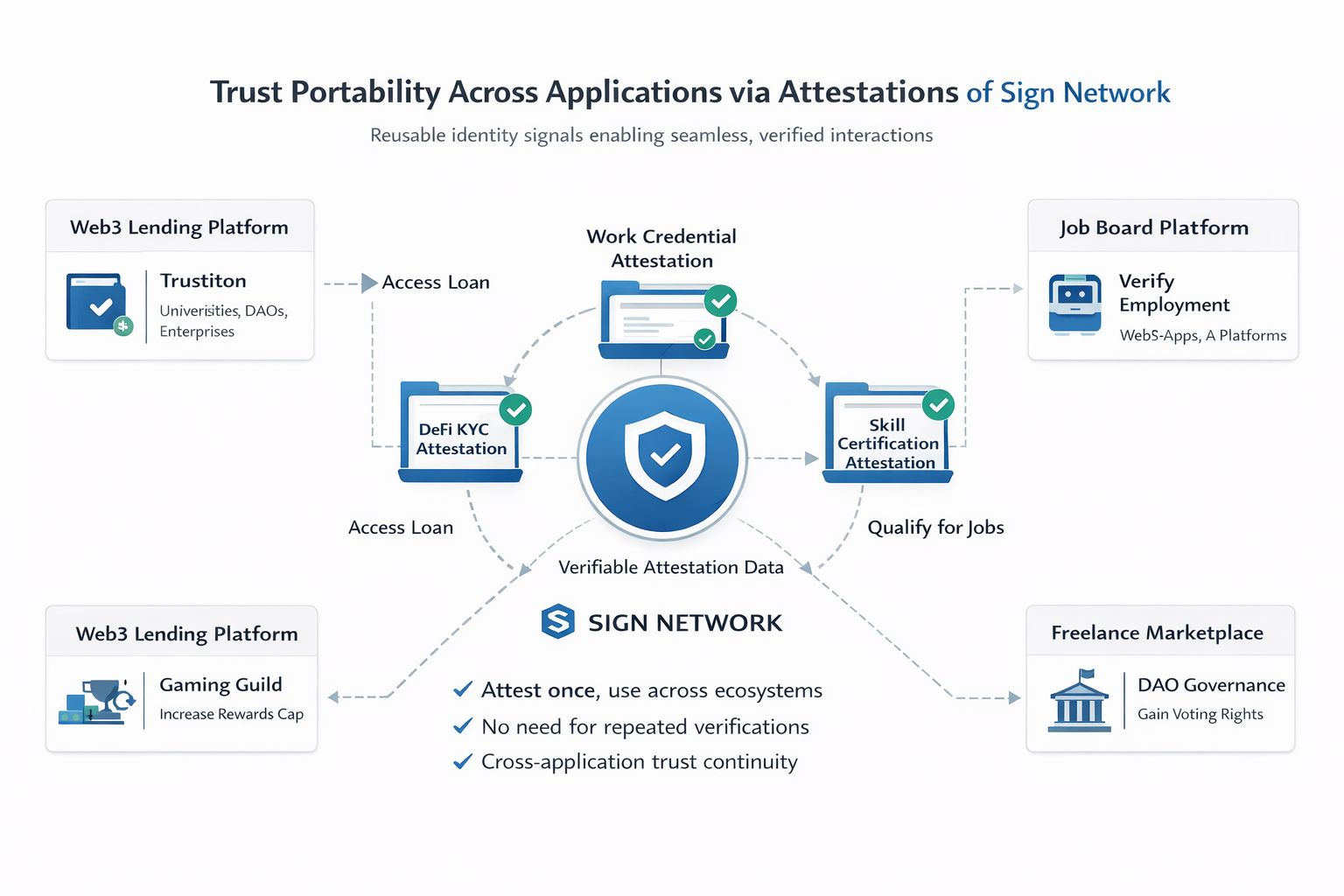

Because they are modular and reusable, a single verified claim doesn’t disappear after one use. It persists. It can be referenced across applications without requiring the user to repeat the process. This creates continuity, not just of data but of trust.

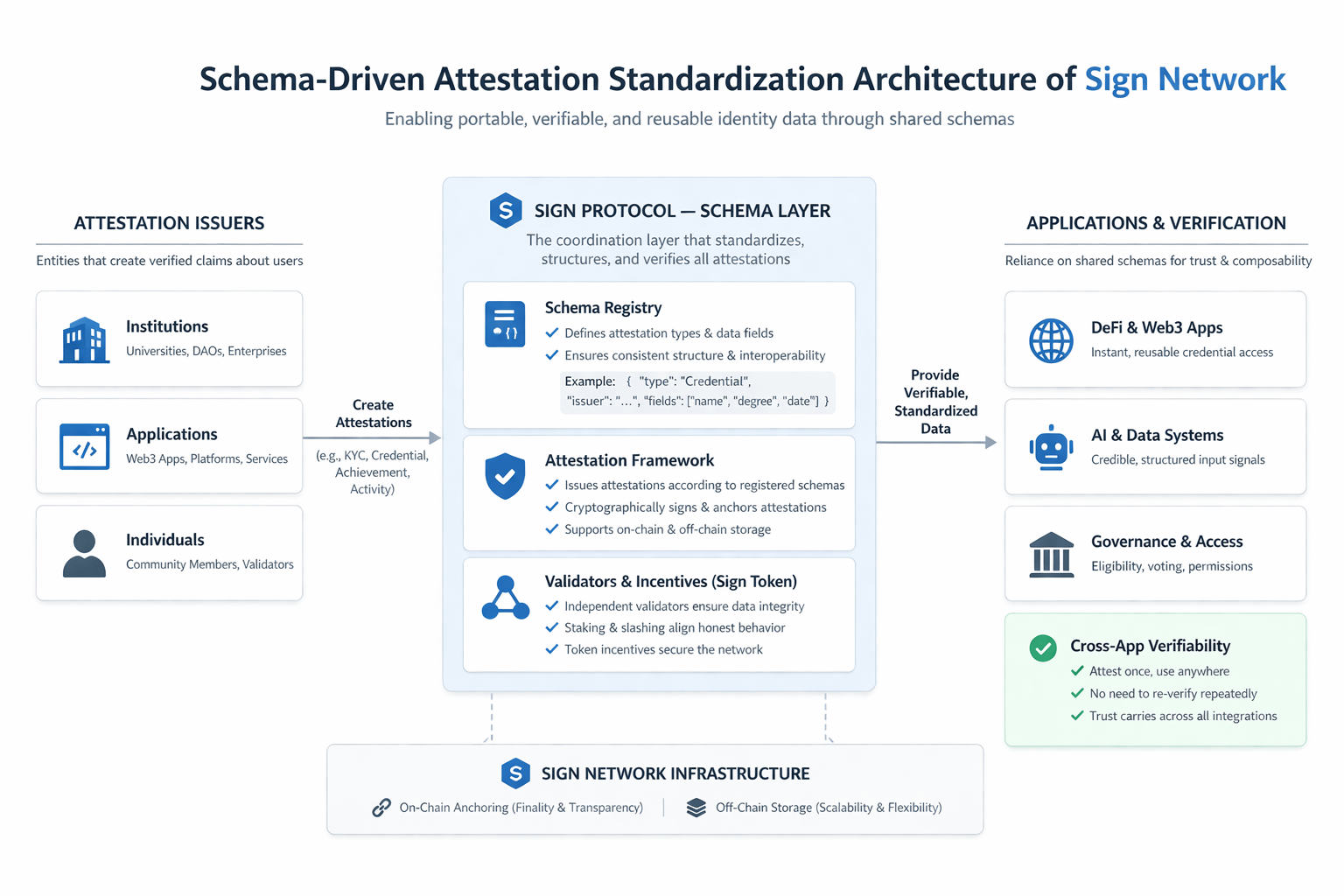

What makes this scalable isn’t just the existence of attestations, but the structure behind them. Shared schemas standardize how data is issued and interpreted. Without that standardization, verification remains fragmented. With it, trust begins to compound across systems.
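To make the role of shared schemas concrete, here is a minimal Python sketch. The `Schema` class, the `kyc-passed-v1` identifier, and its field names are hypothetical illustrations, not Sign Protocol’s actual schema format; the point is only that one agreed definition lets any application check and interpret data issued by another.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Schema:
    """Hypothetical shared schema: an identifier plus required field names and types."""
    schema_id: str
    fields: dict[str, type]

    def validate(self, data: dict[str, Any]) -> bool:
        # Exactly the declared fields must be present: no missing, no extras.
        if set(data) != set(self.fields):
            return False
        # Each field must carry the declared type.
        return all(isinstance(data[name], kind) for name, kind in self.fields.items())


# Any application that agrees on this definition can check and read the same data.
KYC_PASSED = Schema("kyc-passed-v1", {"subject": str, "country": str, "level": int})

print(KYC_PASSED.validate({"subject": "0xabc", "country": "KE", "level": 2}))  # True
print(KYC_PASSED.validate({"subject": "0xabc", "level": "2"}))  # False: missing field
```

Without the shared definition, each application would need its own parser and its own guess about what `level` means; with it, validation is the same check everywhere.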

That’s where the design shifts: from infrastructure to coordination.

From a system perspective, the mechanics are simple, but their implications are not.

Entities issue attestations based on defined schemas. These attestations can be anchored on-chain or maintained through scalable off-chain infrastructure, depending on the context. Verification mechanisms ensure integrity, while incentive layers, potentially involving @SignOfficial, encourage honest participation.
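The issue-and-verify loop can be sketched in a few lines, under loud assumptions: the functions `issue`, `verify`, and `digest` are hypothetical, and HMAC from Python’s standard library stands in for the issuer’s public-key signature that a real deployment would use (HMAC needs a shared secret, so unlike a public-key signature it is not publicly verifiable). The `anchored_digest` argument models storing only a hash on-chain while the full payload lives off-chain.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    schema_id: str
    issuer: str
    data: dict
    signature: str


def digest(schema_id: str, issuer: str, data: dict) -> bytes:
    # Canonical JSON (sorted keys, fixed separators) so every verifier hashes the same bytes.
    payload = json.dumps(
        {"schema": schema_id, "issuer": issuer, "data": data},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).digest()


def issue(issuer: str, key: bytes, schema_id: str, data: dict) -> Attestation:
    # Assumption: HMAC stands in for the issuer's public-key signature.
    sig = hmac.new(key, digest(schema_id, issuer, data), hashlib.sha256).hexdigest()
    return Attestation(schema_id, issuer, data, sig)


def verify(att: Attestation, key: bytes, anchored_digest: Optional[bytes] = None) -> bool:
    d = digest(att.schema_id, att.issuer, att.data)
    expected = hmac.new(key, d, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, att.signature):
        return False  # payload altered or signed with a different key
    # If a digest was anchored (e.g. on-chain), the off-chain payload must match it too.
    return anchored_digest is None or hmac.compare_digest(d, anchored_digest)


key = b"issuer-secret"
att = issue("0xIssuer", key, "kyc-passed-v1", {"subject": "0xabc", "level": 2})
anchor = digest(att.schema_id, att.issuer, att.data)  # what an on-chain record might store
print(verify(att, key, anchor))  # True

tampered = Attestation(att.schema_id, att.issuer, {"subject": "0xabc", "level": 3}, att.signature)
print(verify(tampered, key, anchor))  # False
```

Because a verifier needs only the attestation and the anchor, a second application can reuse the same record without asking the user to prove anything again.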

But what matters isn’t the mechanism. It’s what changes because of it.

In traditional systems, every platform rebuilds trust independently. Each interaction starts close to zero. In an attestation-based model, trust becomes portable. It carries forward.

It resembles financial systems in a quiet way. When you build a transaction history, that history informs future access, credit, permissions, opportunity. You don’t repeatedly prove your reliability from scratch.

$SIGN Protocol attestations introduce that kind of continuity into digital environments.

This becomes even more relevant when considering AI systems.

AI increasingly depends on data: inputs, feedback loops, behavioral signals. But one of its persistent challenges is verification. How does a system distinguish authentic input from manipulated noise?

Attestations offer a subtle role here.

They act as verifiable anchors: signals tied to known sources, validated actions, or credible entities. Instead of treating all data equally, AI systems could weigh inputs based on their attested credibility. Over time, this creates a form of memory that is not just persistent, but verifiable.

Not intelligence itself, but a foundation for more reliable intelligence.

In Sign governance systems, the implications become even more concrete.

Attestations don’t just represent identity. They quietly define eligibility: who can participate, who can access resources, who gets a vote, and under what conditions.

Instead of static roles or easily manipulated criteria, governance can begin to rely on accumulated, verifiable history. Participation becomes less about claiming authority and more about demonstrating it over time.
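Eligibility based on accumulated history can be reduced to a query over attestations. The sketch below assumes a hypothetical rule: a subject may vote once at least `min_issuers` distinct trusted issuers have attested to their contributions under a `contribution-v1` schema, ignoring revoked records. The `Record` type, schema name, and threshold are illustrative; a real governance system would choose its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    subject: str
    schema_id: str
    issuer: str
    revoked: bool = False


def eligible_to_vote(
    subject: str,
    records: list[Record],
    trusted_issuers: set[str],
    schema_id: str = "contribution-v1",
    min_issuers: int = 2,
) -> bool:
    # Collect distinct trusted issuers that attested to this subject under the schema.
    issuers = {
        r.issuer
        for r in records
        if r.subject == subject
        and r.schema_id == schema_id
        and r.issuer in trusted_issuers
        and not r.revoked
    }
    return len(issuers) >= min_issuers


trusted = {"dao-a", "dao-b", "dao-c"}
records = [
    Record("alice", "contribution-v1", "dao-a"),
    Record("alice", "contribution-v1", "dao-b"),
    Record("bob", "contribution-v1", "dao-a"),
    Record("bob", "contribution-v1", "dao-a"),  # repeat from the same issuer
    Record("carol", "contribution-v1", "dao-a"),
    Record("carol", "contribution-v1", "dao-c", revoked=True),
]
print(eligible_to_vote("alice", records, trusted))  # True
print(eligible_to_vote("bob", records, trusted))  # False
print(eligible_to_vote("carol", records, trusted))  # False
```

Counting distinct issuers rather than raw attestations means a single issuer cannot manufacture eligibility by attesting repeatedly.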

That shifts governance from assumption to evidence.

Zooming out, this aligns with broader structural changes beyond crypto.

Trust is fragmenting. Digital interaction is increasing. Centralized institutions are no longer universally accepted as default sources of credibility. In many regions, especially emerging markets, formal identity systems don’t fully capture economic or social behavior.

Yet digital systems continue to expand.

In that environment, trust needs to become more flexible but not weaker. Systems that allow credibility to build incrementally, through verifiable and reusable signals, begin to feel less like innovation and more like necessity.

But only if they align with behavior.

And that’s where most systems fail: not technically, but socially.

There’s also a persistent illusion in the market.

Attention is often mistaken for usage.

A protocol can generate discussion, attract capital, and build narrative momentum. But that doesn’t mean it’s being used in a way that creates dependency. Real usage is quieter. It shows up as repetition, integration, and necessity.

Markets tend to price expectations, not actual utility.

Attestations don’t naturally create visible momentum. They operate in the background, which makes them harder to promote but potentially more durable if they reach a meaningful depth of integration.

The real challenge, though, is adoption.

For a system like this to work, identity cannot remain optional. It must be embedded directly into workflows where its absence creates friction. Developers need to integrate attestations not as an added feature, but as a simplification, something that removes repeated verification and improves coordination.

Otherwise, the system faces a threshold problem.

If users interact with attestations only occasionally, they don’t accumulate enough history to matter. Without repetition, there is no continuity. Without continuity, there is no trust layer.

And without that, the system remains conceptually sound but practically invisible.

At a more philosophical level, this all comes back to something I keep noticing:

The tension between signal and noise.

Technology has made it easier to produce information, but not easier to trust it. Identity systems risk becoming another layer of abstraction: complex, well-designed, but detached from how people actually make decisions.

Attestations attempt to narrow that gap.

They don’t eliminate noise, but they introduce verifiable signal.

Whether that signal becomes meaningful depends less on the system itself and more on how consistently it is used.

I’ve become more careful about what I consider inevitable.

Identity feels fundamental. Attestations feel logically sound. But necessity isn’t determined by design; it’s determined by behavior.

At first, this felt like another promising abstraction.

But over time, I’ve started to see it differently.

Because the difference between an idea that sounds necessary and infrastructure that becomes necessary is repetition.

And repetition only happens when systems stop asking for attention and start becoming part of how trust naturally flows.