I’ve been staring at the RWA narrative for a while now, and honestly, most of the discourse feels like people discovered the word “tokenization” and decided that’s where the story begins and ends. You put a real-world asset onchain, mint a token, and suddenly everyone acts like the job is done.

☝🏻 But if you’ve ever run a program that actually distributes capital, whether it’s a grant, a subsidy, or an investment, you know that’s where the real headache starts. What happens after the token exists? Who gets it, when, based on what verified conditions, and how do you prove to an auditor that you didn’t mess it up?

That’s where TokenTable from Sign clicked for me. I sat down with their docs expecting another vesting dashboard, and instead found something that reframes the whole problem.

TokenTable isn’t about creating tokens. It’s about controlling how they move after creation in a way that’s deterministic, inspection-ready, and tied to verifiable evidence. The core output is something they call an allocation manifest: a structured record that defines who receives what and when, generated before any execution happens.

💬 Think of it as the flight plan that gets filed before the plane takes off. Vesting schedules execute deterministically against those manifests. Outcomes are auditable before they happen, not reconstructed after the fact with spreadsheets and hope.
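To make the flight-plan idea concrete for myself, I sketched it in a few lines of Python. This is my own toy model, not TokenTable’s actual schema or API: the `Allocation` fields, the linear vesting rule with a cliff, and the `manifest_digest` helper are all assumptions chosen for illustration. The point is only that once the manifest is fixed, the amount unlocked at any timestamp is a pure function of the manifest and the clock.

```python
from dataclasses import dataclass, asdict
import hashlib
import json


@dataclass(frozen=True)
class Allocation:
    """One hypothetical manifest entry (field names are illustrative)."""
    recipient: str
    total: int      # amount in smallest token units
    start: int      # vesting start, unix seconds
    cliff: int      # seconds after start before anything unlocks
    duration: int   # seconds over which `total` vests linearly


def vested(a: Allocation, now: int) -> int:
    """Amount unlocked at `now`; depends only on the entry and the timestamp."""
    if now < a.start + a.cliff:
        return 0
    if now >= a.start + a.duration:
        return a.total
    # Integer arithmetic keeps the result reproducible across machines.
    return a.total * (now - a.start) // a.duration


def manifest_digest(allocations) -> str:
    """SHA-256 over a canonical JSON encoding, independent of input order."""
    ordered = sorted(allocations, key=lambda a: a.recipient)
    payload = json.dumps(
        [asdict(a) for a in ordered], sort_keys=True, separators=(",", ":")
    )
    return hashlib.sha256(payload.encode()).hexdigest()
```

Anyone holding the same manifest can recompute both the digest and every vesting outcome ahead of time, which is what “auditable before they happen” means in this sketch.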

The part that most analyses skip is the inspection-ready reporting. Most tools treat audit trails as an afterthought: you run the distribution, then later you scramble to piece together what happened. TokenTable generates the audit package as a structural output of the distribution itself. Every disbursement confirms that it followed the rules in effect at the time, that eligibility was verified beforehand, and that the outcome matches the approved manifest. For anyone who’s ever had to explain to a regulator why funds moved the way they did, that distinction is massive. It means the execution record *is* the audit trail. No reconstruction. No “we’ll get back to you with those logs.”
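Here is roughly how I picture “the execution record is the audit trail” working. Again, this is a sketch under my own assumptions, not how TokenTable or Sign Protocol actually store anything: `ExecutionRecord`, `append_record`, and `verify_log` are hypothetical names, and I’m using a plain hash chain as a stand-in for onchain anchoring. Each record carries the rule version, a reference to the eligibility check, and the manifest digest it executed against, plus the hash of the record before it.

```python
from dataclasses import dataclass, asdict
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record in a log


@dataclass(frozen=True)
class ExecutionRecord:
    """Hypothetical per-disbursement record; field names are illustrative."""
    recipient: str
    amount: int
    rules_version: str      # which rule set was in effect
    eligibility_ref: str    # pointer to the eligibility evidence
    manifest_digest: str    # digest of the approved manifest
    prev_hash: str          # hash of the previous record in the log

    def hash(self) -> str:
        blob = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(blob).hexdigest()


def append_record(log: list, **fields) -> ExecutionRecord:
    """Create a record linked to the current tail of the log and append it."""
    prev = log[-1].hash() if log else GENESIS
    record = ExecutionRecord(prev_hash=prev, **fields)
    log.append(record)
    return record


def verify_log(log: list) -> bool:
    """Return True only if every record links to the hash of its predecessor."""
    prev = GENESIS
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record.hash()
    return True
```

In this toy version, editing any earlier record changes its hash and breaks the link from the next one, so an auditor can check the log itself instead of asking someone to reconstruct it.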

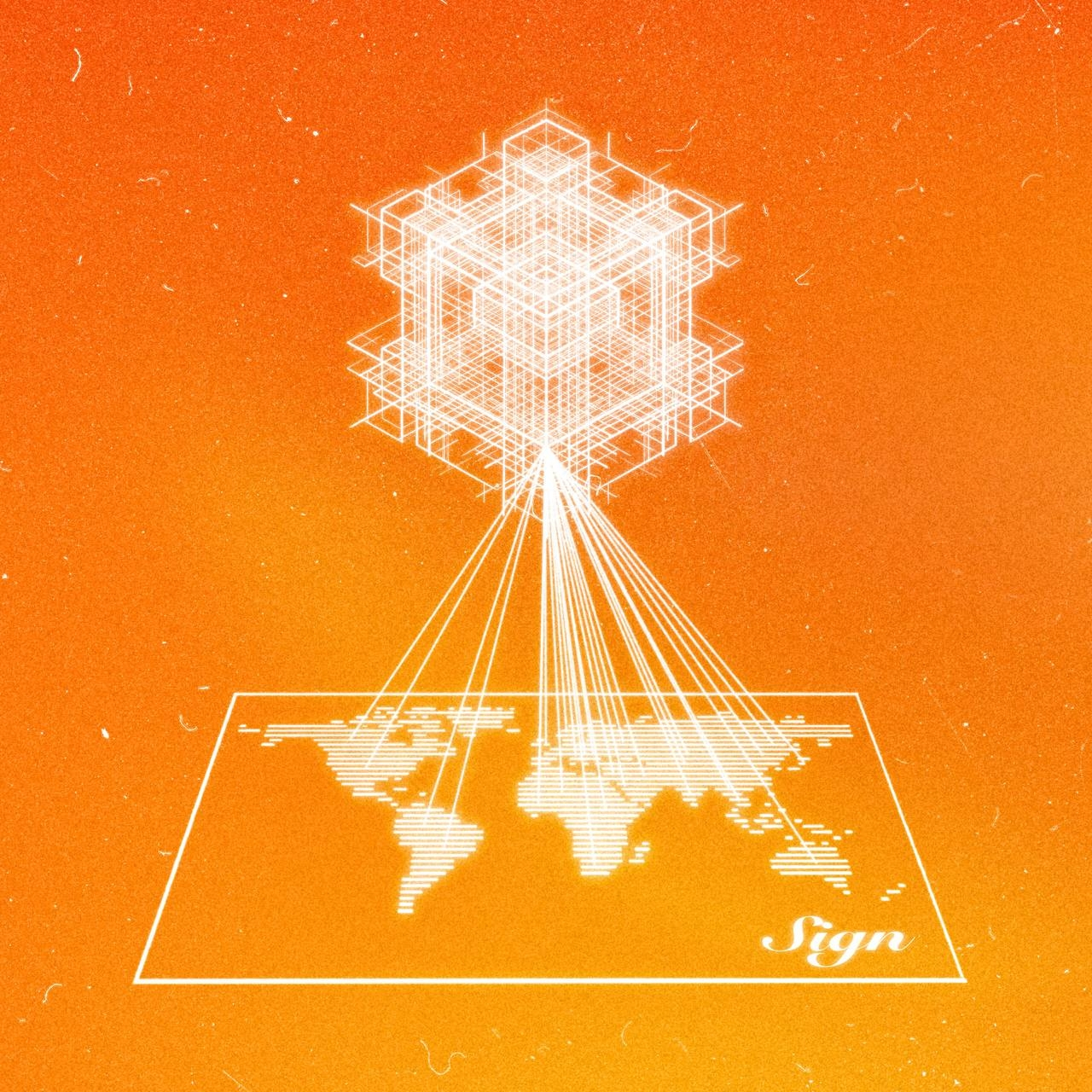

And here’s where the @SignOfficial ecosystem starts to compound. TokenTable doesn’t live in isolation. Every execution event produces a Sign Protocol attestation anchored onchain, queryable through SignScan. So a government running a subsidy program, an investment manager handling regulated vesting, and a foundation distributing grants are all generating interoperable, standardized evidence on the same infrastructure.

That’s not just convenient; it makes cross-agency reconciliation and cross-program auditing technically feasible in a way that current systems absolutely do not support.

To put a number on it: the World Bank estimates government-to-person transfers exceed $1 trillion annually, with a significant chunk leaking through targeting errors: duplicates, unverified recipients, and funds released without eligibility checks. TokenTable structurally addresses each of those failure modes.

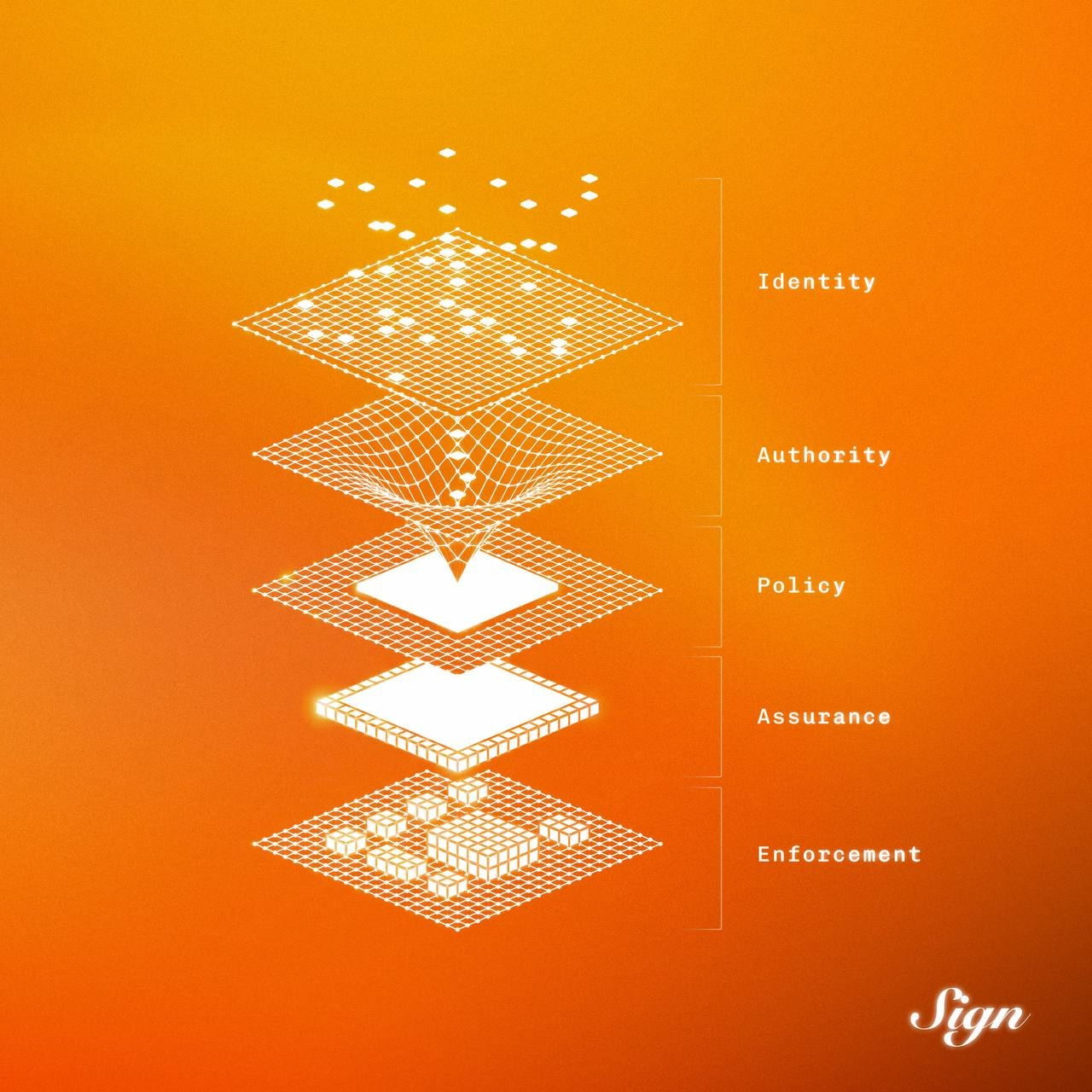

Eligibility verification produces a Sign Protocol attestation before any distribution executes. The allocation manifest is immutable once approved. Every execution event is queryable evidence. The technical solution maps directly onto the operational failure points. That’s not a “nice to have.” That’s the difference between funds reaching the right person or disappearing into a black hole.
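The mapping from failure mode to check is simple enough to write down. The sketch below is my own illustration, not TokenTable’s implementation: `distribute`, `DistributionError`, and the in-memory sets standing in for attestations and payment history are all hypothetical. A read-only mapping plays the role of the approved manifest.

```python
from types import MappingProxyType
from typing import Mapping, Set


class DistributionError(Exception):
    """Raised when a payout would violate one of the pre-execution checks."""


def distribute(
    manifest: Mapping[str, int],   # approved recipient -> amount
    attested: Set[str],            # recipients with eligibility evidence
    paid: Set[str],                # recipients already paid
    recipient: str,
    amount: int,
) -> None:
    if recipient not in manifest:
        raise DistributionError("recipient is not in the approved manifest")
    if recipient not in attested:
        raise DistributionError("no eligibility attestation on record")
    if recipient in paid:
        raise DistributionError("duplicate payout")
    if amount != manifest[recipient]:
        raise DistributionError("amount does not match the manifest")
    paid.add(recipient)  # record the payout only after every check passes


# A read-only view: attempts to modify the approved manifest raise TypeError.
approved = MappingProxyType({"alice": 100, "bob": 50})
```

Each guard lines up with one of the leaks above: unverified recipients, duplicates, and funds released without an eligibility check. None of them can be skipped, because the payout is only recorded after all four pass.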

What I find interesting is how Sign’s three products (Sign Protocol, TokenTable, and EthSign) are designed to reinforce each other. TokenTable produces evidence, EthSign ties signed agreements to that evidence, and Sign Protocol makes everything queryable across chains and institutional contexts. It’s not three separate systems loosely connected by APIs; it’s different surfaces of the same underlying evidence layer. That integration is what separates them from vesting-only protocols that solve the timing but skip the auditability.

🙌 Now, I’ll be honest: this isn’t a simple adoption story. Developer mindshare in the vesting space has already gravitated toward simpler tools. Getting a government treasury team, a national identity authority, and an external auditor to standardize on Sign’s attestation schemas requires the kind of cross-institutional alignment that has delayed far simpler GovTech initiatives for years. The architecture being coherent doesn’t guarantee institutional adoption on any predictable timeline.

But here’s why I’m watching it anyway. @Sign is building in the right part of the stack. Distribution infrastructure that produces inspection-ready evidence as a structural output, not a bolt-on, is exactly what regulated institutional programs need and currently can’t find. The market exists. The question is whether Sign can navigate the institutional adoption cycle fast enough to capture it before the window closes.

I don’t know how that plays out. But I do know that most projects are fighting over the same retail liquidity, and a few are quietly building the kind of infrastructure that governments, investment managers, and grant administrators actually need to function. TokenTable is one of those.