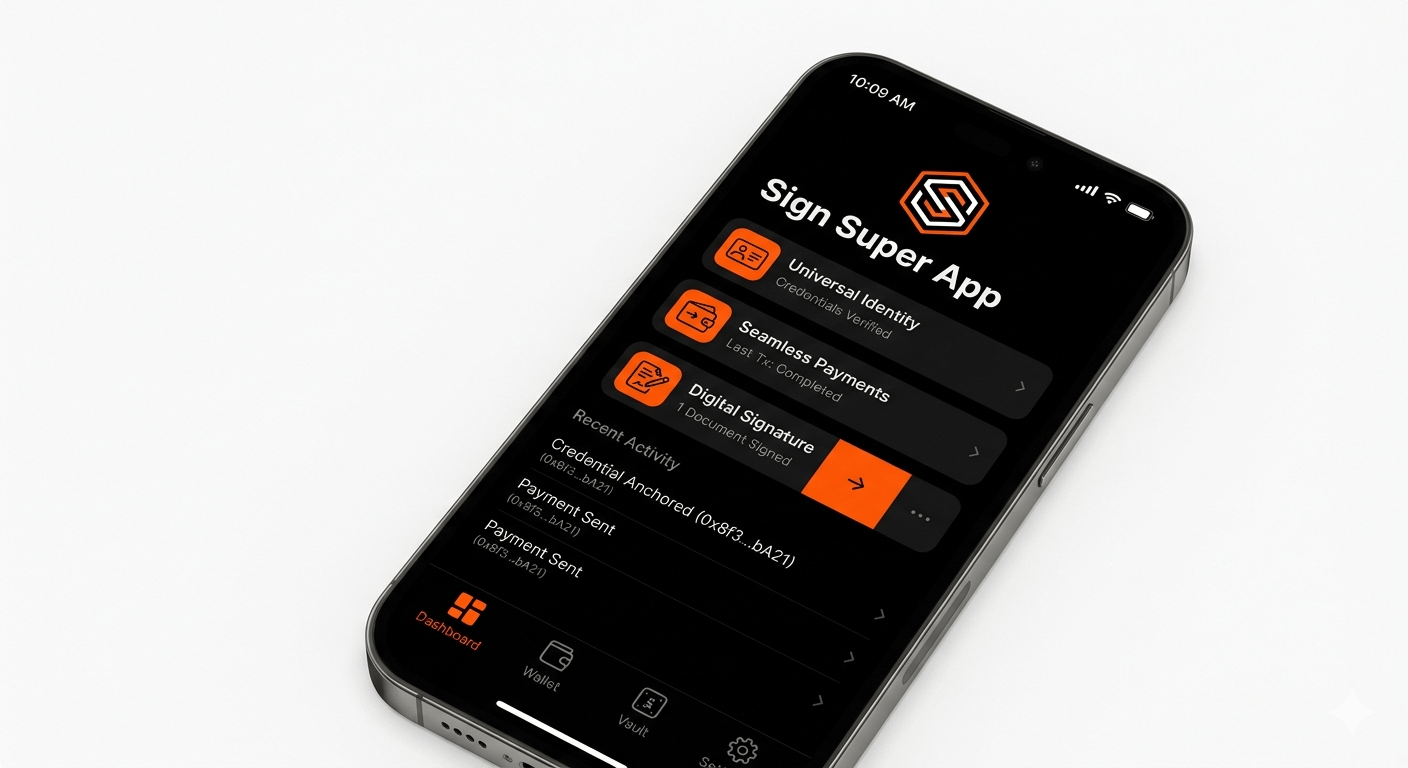

Last night, just hours after a quiet snapshot window closed for a credential distribution campaign, I found myself deep inside the documentation of @SignOfficial, replaying a simulation that didn’t quite behave the way the vision promised. The idea itself still feels inevitable to me: a unified super app where identity, payments, signatures, and distribution collapse into one seamless interface. It reads like the endgame of Web3 infrastructure, something we have been circling for years but never quite reaching. And yet the deeper I went, the more that elegance started to show stress fractures at the execution layer.

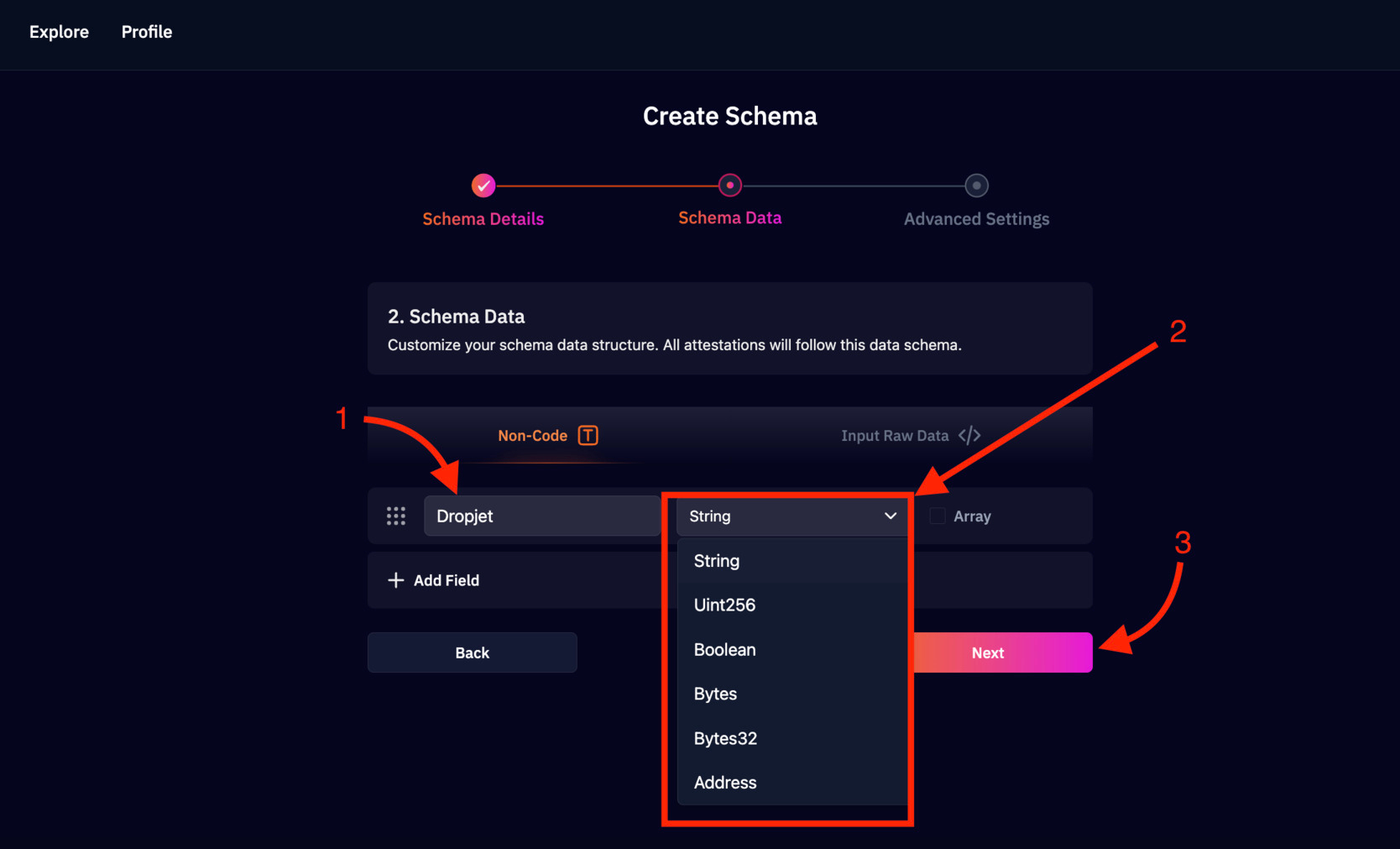

I tracked a simple credential anchoring flow tied to a test contract. Nothing complex, just a two-megabyte credential pushed through an external storage layer and then hashed on-chain. The numbers were small in isolation but revealing in context: around forty cents to pin externally and another thirty cents in gas, even under relaxed testnet conditions, bringing the total to roughly seventy cents, creeping toward a dollar, for a single verifiable record. That’s manageable once, maybe even a hundred times, but when I mentally scaled it across thousands of users, dynamic credentials, and multi-chain distributions, the structure started to feel heavy. What stayed with me wasn’t just the cost; it was the repetition. Every update meant a new hash, a new anchor, a new payment. Nothing about that loop felt native to the fluid nature of identity or enterprise data.
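To make that repetition concrete, here is a rough back-of-envelope model. It is only a sketch under my own assumptions: it reuses the forty-cent pin and thirty-cent gas figures from the testnet run above, assumes every credential version is pinned once and its hash anchored once per chain, and the function name `anchoring_cost_cents` is hypothetical, not part of any Sign Protocol tooling. Costs are kept in integer cents to avoid floating-point drift.

```python
# Assumed per-version costs, taken from the single testnet run described above.
PIN_COST_CENTS = 40   # external storage pin for one ~2 MB credential version
GAS_COST_CENTS = 30   # gas for anchoring that version's hash on one chain


def anchoring_cost_cents(users: int, updates_per_user: int, chains: int = 1) -> int:
    """Hypothetical cost model: each issuance or update re-pins and re-anchors.

    Assumes the payload is pinned once per version, while the hash is
    anchored separately on every chain the credential is distributed to.
    """
    versions = users * (1 + updates_per_user)        # initial issuance + updates
    pin_total = versions * PIN_COST_CENTS            # one pin per version
    gas_total = versions * chains * GAS_COST_CENTS   # one anchor per version per chain
    return pin_total + gas_total


if __name__ == "__main__":
    for users, updates, chains in [(1, 0, 1), (1_000, 4, 1), (1_000, 4, 3)]:
        cents = anchoring_cost_cents(users, updates, chains)
        print(f"{users} users, {updates} updates, {chains} chain(s): ${cents / 100:,.2f}")
```

Under these assumptions a single record costs $0.70, while a thousand users with four updates each lands at $3,500.00 on one chain and $6,500.00 across three. Real gas and pinning prices will vary, but the shape of the curve is the point: cost scales with versions, not with users alone.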

At one point during the simulation, I hit a pause I couldn’t ignore. A transaction didn’t fail and didn’t revert; it simply lingered. The indexing layer hadn’t caught up yet, and for a brief moment the system didn’t fully recognize its own state. It was only a few seconds, but it created a subtle dissonance. The super app vision assumes immediacy, a kind of real-time awareness where AI agents can read, decide, and act instantly, yet the underlying system still behaves with asynchronous hesitation. That gap, even when small, introduces a kind of cognitive friction that compounds at scale.
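In practice, anything built on top, including an AI agent, has to tolerate that lag explicitly rather than assume immediacy. A common pattern is to poll the indexer with exponential backoff and a hard deadline. The sketch below is my own illustration, not Sign Protocol’s API: `wait_for_indexed`, `FakeIndexer`, and `get_attestation` are hypothetical names, and the fake indexer simply pretends to lag a few polls behind the chain.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_for_indexed(lookup: Callable[[], Optional[T]],
                     timeout_s: float = 10.0,
                     initial_delay_s: float = 0.5,
                     max_delay_s: float = 4.0) -> T:
    """Poll `lookup` until it returns a value, backing off exponentially.

    Raises TimeoutError if the next wait would pass the deadline, i.e. the
    record may be final on-chain but the indexer still hasn't surfaced it.
    """
    deadline = time.monotonic() + timeout_s
    delay = initial_delay_s
    while True:
        result = lookup()
        if result is not None:
            return result  # the indexer has caught up with the chain
        if time.monotonic() + delay > deadline:
            raise TimeoutError("record not yet visible in the indexer")
        time.sleep(delay)
        delay = min(delay * 2, max_delay_s)  # double the wait, up to a cap


class FakeIndexer:
    """Hypothetical stand-in for an indexing service that lags behind the chain."""

    def __init__(self, lag_polls: int, record: dict):
        self.lag_polls = lag_polls  # how many polls return nothing before catching up
        self.record = record
        self.polls = 0

    def get_attestation(self) -> Optional[dict]:
        self.polls += 1
        return self.record if self.polls > self.lag_polls else None


if __name__ == "__main__":
    indexer = FakeIndexer(lag_polls=2, record={"id": "example-attestation"})
    found = wait_for_indexed(indexer.get_attestation, timeout_s=1.0, initial_delay_s=0.05)
    print(found, "after", indexer.polls, "polls")
```

The design choice worth noticing is that the caller, not the protocol, decides how long "eventually consistent" is allowed to take. That is exactly the kind of assumption a seamless super app would hide.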

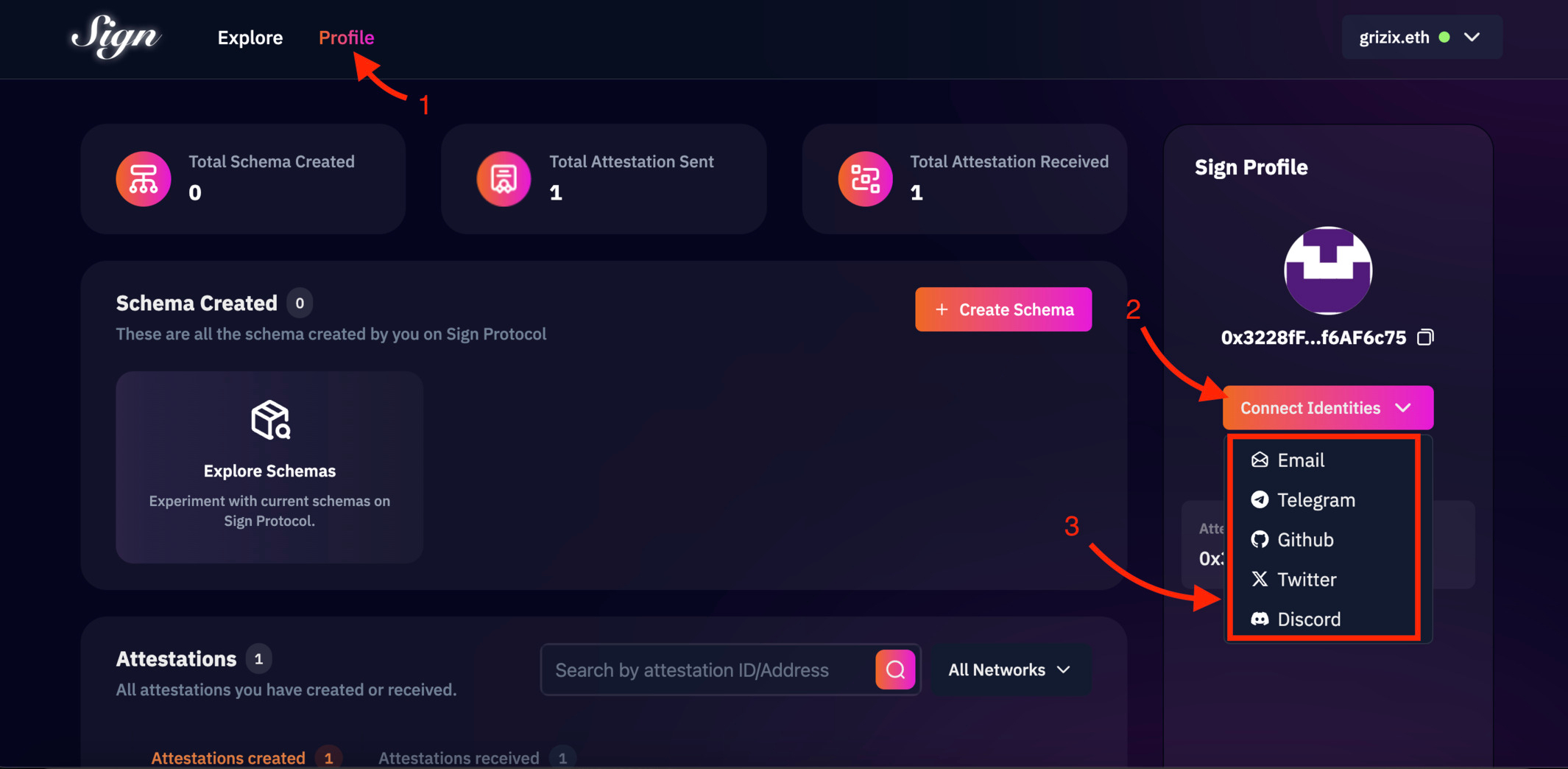

As I kept moving through the architecture, what became clear to me is that this system doesn’t really operate in layers the way we often describe it. The economic, technical, and identity components don’t stack neatly; they loop into each other constantly. The economic side, with a significant portion of token supply reserved to incentivize adoption, clearly aims to bootstrap scale, but every act of usage feeds back into cost pressure. The technical design, splitting data between on-chain anchors and off-chain storage, is logically sound and widely accepted, yet the retrieval layer introduces latency that feels out of sync with the expectations of AI-driven systems. The governance and identity layer is arguably the most elegant part, with programmable attestations removing human bias and automating verification, but identity itself is not static. Credentials expire, reputations shift, compliance rules evolve, and each of those changes pushes new data through the same cost and indexing loop again.
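The on-chain/off-chain split, and why every credential change loops back through it, can be modeled in a few lines. This is a toy sketch under stated assumptions: an in-memory dict stands in for external storage, a list stands in for anchored hashes, and SHA-256 from Python’s `hashlib` stands in for whatever digest the real protocol uses (EVM chains typically use keccak-256, which is not the same as `hashlib.sha3_256`). The `AnchoredStore` class is hypothetical.

```python
import hashlib
import json


class AnchoredStore:
    """Toy model: full payloads live off-chain, only their digests are anchored."""

    def __init__(self) -> None:
        self.off_chain: dict[str, bytes] = {}  # stands in for an external storage layer
        self.on_chain: list[str] = []          # stands in for hashes anchored on-chain

    @staticmethod
    def _digest(payload: bytes) -> str:
        return hashlib.sha256(payload).hexdigest()

    def anchor(self, credential: dict) -> str:
        # Canonical serialization (sorted keys) so the same data hashes the same way.
        payload = json.dumps(credential, sort_keys=True).encode()
        digest = self._digest(payload)
        self.off_chain[digest] = payload  # pin the full document externally
        self.on_chain.append(digest)      # every new version means one more anchor
        return digest

    def verify(self, digest: str) -> bool:
        # Retrieve the payload off-chain, then check it against the on-chain anchor.
        payload = self.off_chain.get(digest)
        return (payload is not None
                and digest in self.on_chain
                and self._digest(payload) == digest)


if __name__ == "__main__":
    store = AnchoredStore()
    v1 = store.anchor({"holder": "alice", "status": "active"})
    v2 = store.anchor({"holder": "alice", "status": "expired"})  # expiry = new anchor
    print(v1 != v2, len(store.on_chain), store.verify(v1))
```

Even in this toy, an expired credential is not an edit; it is a second payload, a second digest, and a second anchor. That is the feedback loop between the identity layer and the cost layer described above.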

When I briefly compared this to systems like Fetch.ai or Bittensor, the contrast became sharper in my mind. Those systems feel more focused, almost disciplined in their optimization targets, whether it’s agent coordination or distributed intelligence. What Sign Protocol is attempting feels broader, almost like compressing an entire digital economy into a single interface. That ambition is what makes it compelling, but it also magnifies every inefficiency underneath.

The honest part I keep returning to is that the application layer already feels like the future. AI-assisted compliance, automated distribution, seamless user experiences: it all reads like something ready to deploy at scale. But the infrastructure beneath it still feels like it’s negotiating with older constraints: fragmented storage, inconsistent indexing, and latency that doesn’t fully disappear. It creates this strange sensation of a highly advanced system resting on a foundation that is not fully synchronized with its own ambitions.

And the question that keeps sitting with me is not whether this can work, but whether it can work invisibly. If Sign Protocol succeeds in abstracting all of this away, if the super app truly becomes frictionless, then most builders will never see the complexity underneath. They’ll just trust that it works. But what happens when that trust is placed on a system where cost, latency, and state consistency are still variable? I keep wondering whether the next generation of builders will be empowered by this abstraction or quietly constrained by it, building on top of assumptions that only hold true most of the time.