I didn’t expect a simple architectural detail to change the way I was thinking about verifiable data systems, but that’s exactly what happened while I was going through the Sign Protocol documentation from @SignOfficial.

At first glance, “hybrid storage” sounded like another variation of the usual Web3 privacy discussion. But the deeper I followed the design, the more I realized it’s not just about storing data differently; it’s about splitting what a claim actually is into two verifiable layers that behave independently, yet remain cryptographically tied together.

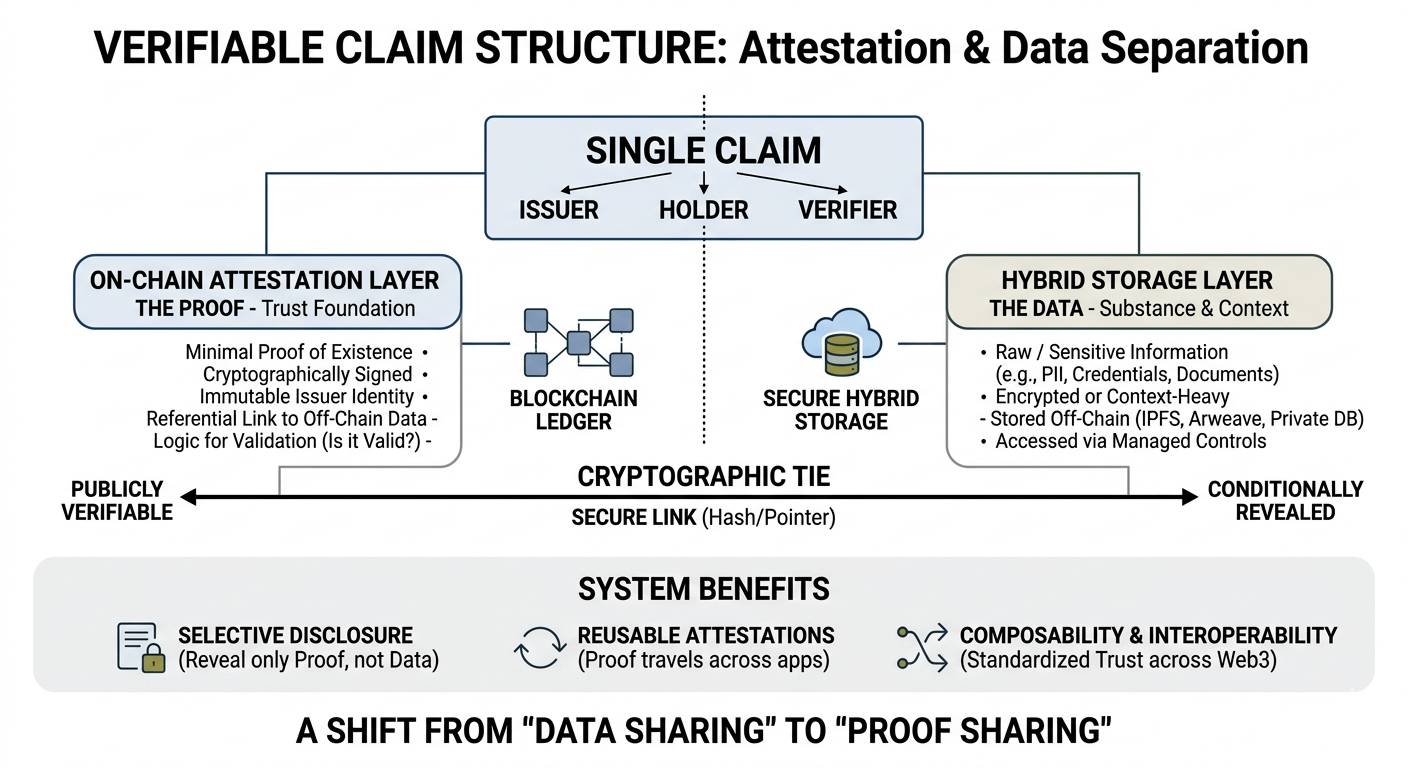

What stood out to me is this idea that a single claim is no longer a single object. It’s divided. One layer lives on-chain as a structured attestation: minimal, readable, and verifiable. It carries the proof of existence, issuer identity, and the logic needed to validate it. The second layer holds the actual substance of the claim, often sensitive or context-heavy, and is kept off-chain or encrypted, only revealed under controlled conditions.
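To make the split concrete for myself, I wrote a minimal sketch of how the two layers could stay tied together. This is my own illustration, not Sign Protocol's actual API: the names `OnChainAttestation`, `attest`, and `verify`, the `kyc-v1` schema ID, and the choice of a SHA-256 hash over canonical JSON are all assumptions. The idea is simply that the public record keeps a fingerprint of the payload, while the payload itself lives somewhere else.

```python
import hashlib
import json
from dataclasses import dataclass


def digest(payload: dict) -> str:
    """Hash a payload deterministically (sorted keys, compact separators)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class OnChainAttestation:
    """Hypothetical public layer: no raw claim data, only references."""
    schema_id: str   # which schema the claim follows (assumed field)
    issuer: str      # who issued the claim (assumed field)
    data_hash: str   # fingerprint binding this record to the off-chain payload


def attest(schema_id: str, issuer: str, payload: dict) -> OnChainAttestation:
    """Build the public record; the payload itself is stored elsewhere."""
    return OnChainAttestation(schema_id, issuer, digest(payload))


def verify(attestation: OnChainAttestation, payload: dict) -> bool:
    """Check that a revealed payload matches the hash in the public record."""
    return attestation.data_hash == digest(payload)


# The sensitive payload stays off-chain (or encrypted).
private_payload = {"name": "Alice", "kyc_level": 2}
record = attest("kyc-v1", "0xIssuerExample", private_payload)

print(verify(record, private_payload))
print(verify(record, {"name": "Alice", "kyc_level": 3}))
```

Anyone holding the public record can confirm that a revealed payload is the one originally attested, but cannot reconstruct the payload from the record alone. A real deployment would also need an issuer signature and, for small or guessable payloads, a salt or encryption, which this sketch leaves out.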

While reading this, I kept pausing, because it feels like Sign is not trying to eliminate privacy trade-offs; it’s reorganizing them.

In my view, this separation is where the system becomes interesting. The blockchain doesn’t try to store everything anymore. It only stores what is necessary for trust: the proof that something is valid, not the thing itself. That shift sounds subtle, but it changes how I interpret “on-chain truth.” Truth becomes referential rather than fully exposed.

What really caught my attention is how selective disclosure naturally fits into this model. Instead of repeatedly sharing raw personal or institutional data, a user can present a verifiable signal derived from an attestation. So the same underlying claim can support multiple contexts without being re-exposed each time. That changes the emotional weight of data sharing: it feels less like broadcasting information and more like reusing proof.
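Here is a small sketch of one way selective disclosure can work, using salted hash commitments per field. I'm not claiming this is the mechanism Sign Protocol uses; production systems often rely on Merkle trees or zero-knowledge proofs instead. The function names `issue`, `disclose`, and `check` and the example claim fields are hypothetical, chosen only to illustrate the idea of revealing one field while the others stay hidden.

```python
import hashlib
import json
import secrets


def commit(field: str, value: str, salt: str) -> str:
    """Salted commitment to one field; the salt blocks guessing short values."""
    encoded = json.dumps([salt, field, value]).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def issue(claims: dict[str, str]) -> tuple[dict[str, str], dict[str, str]]:
    """Return (public commitments, private salts) for a set of claims."""
    salts = {field: secrets.token_hex(16) for field in claims}
    commitments = {f: commit(f, v, salts[f]) for f, v in claims.items()}
    return commitments, salts


def disclose(claims: dict[str, str], salts: dict[str, str], field: str) -> dict[str, str]:
    """The holder reveals exactly one field plus the salt needed to check it."""
    return {"field": field, "value": claims[field], "salt": salts[field]}


def check(commitments: dict[str, str], disclosure: dict[str, str]) -> bool:
    """The verifier recomputes the commitment for the revealed field only."""
    expected = commitments.get(disclosure["field"])
    actual = commit(disclosure["field"], disclosure["value"], disclosure["salt"])
    return expected == actual


# The issuer publishes commitments; the holder keeps the claims and salts.
claims = {"country": "DE", "over_18": "true"}
commitments, salts = issue(claims)

# The holder proves "over_18" without revealing "country".
proof = disclose(claims, salts, "over_18")
print(check(commitments, proof))
```

The same set of commitments can back many different disclosures, which is what I mean by reusing proof rather than re-sharing the raw data each time.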

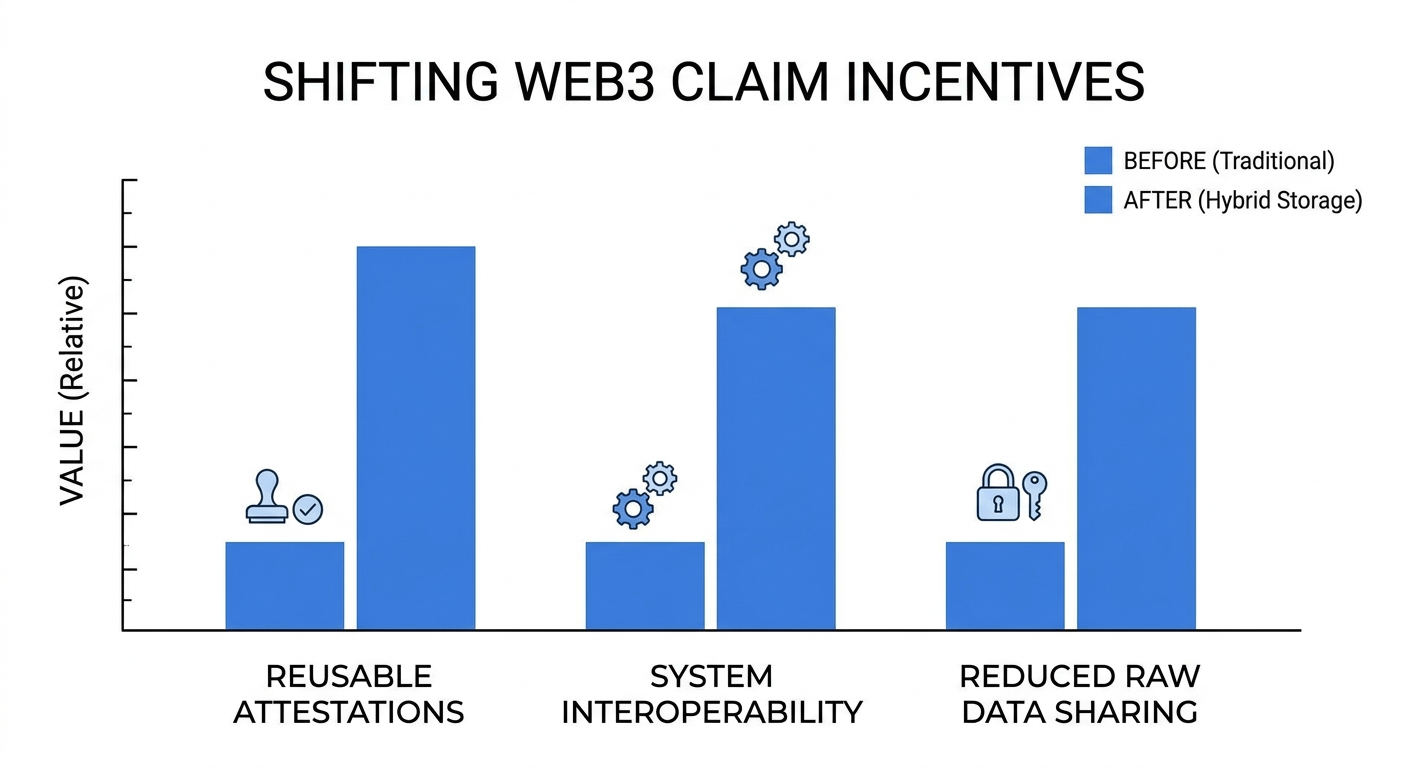

As I thought more about it, I started seeing a shift in incentives too. Applications don’t need to continuously collect sensitive datasets. Users don’t need to constantly reveal more than necessary. And trust starts to move away from repeated verification toward reusable attestations that can travel across systems. This is not just efficiency; it’s a different assumption about how trust accumulates in digital environments.

Another detail I found important is composability. If attestations become standardized, they are no longer locked inside one application’s logic. They can be interpreted elsewhere, layered into other systems, or combined with different proofs. That makes the hybrid structure more than just storage design; it becomes a potential coordination layer for identity and reputation across Web3.

My takeaway so far is that Sign Protocol is quietly reframing how we think about the boundary between transparency and privacy. It doesn’t force a choice between revealing everything or revealing nothing. Instead, it creates a structure where proof and data can exist separately but still remain mathematically connected.

And that makes me wonder whether future digital identity systems will rely less on “data sharing” and more on “proof sharing,” where what moves across networks is not information itself, but verified fragments of truth.

I’m still sitting with this idea, but I keep coming back to the same question: if claims are now split into verifiable layers, are we slowly moving toward a world where trust is no longer stored in data but in proofs that outlive the data itself?

Curious how others are interpreting this separation between attestation and underlying data.