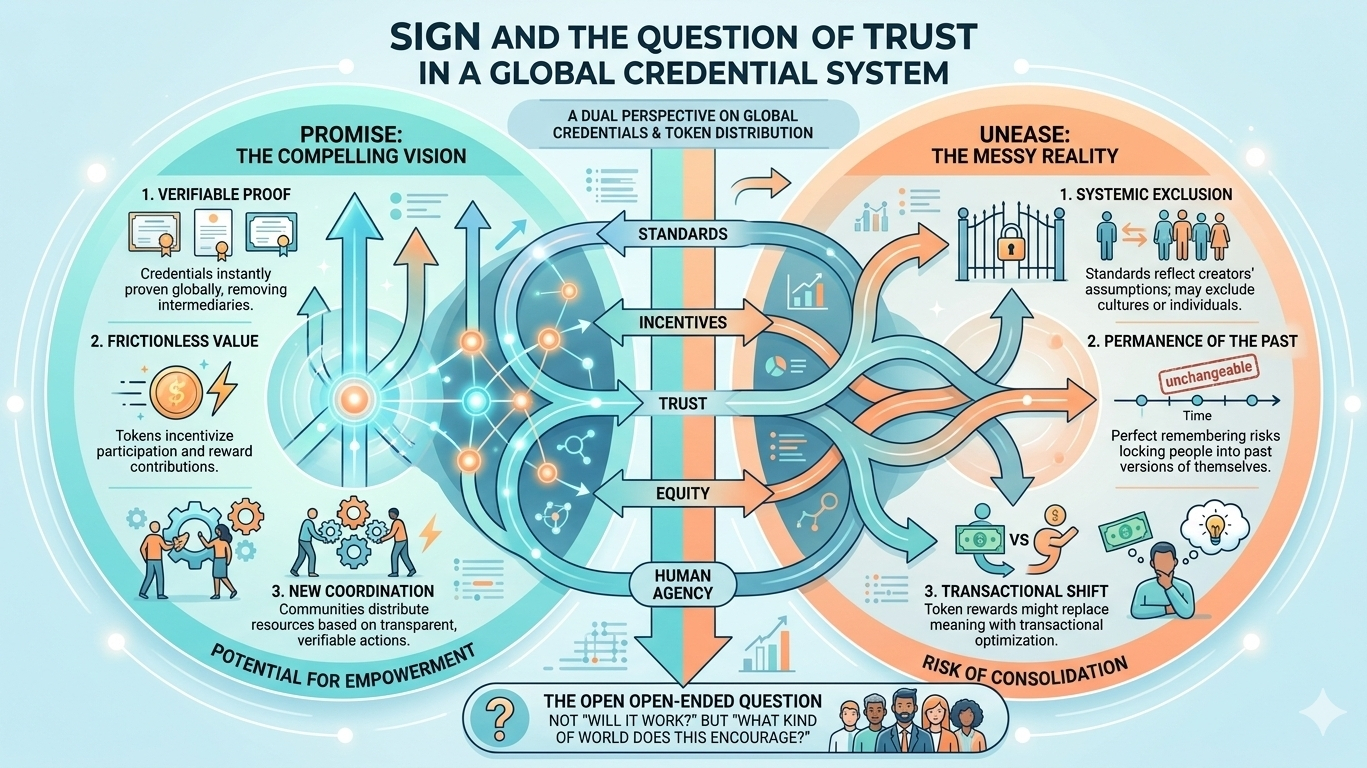

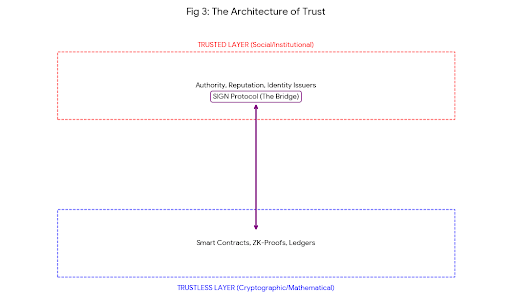

I keep coming back to this idea that we’re trying to build trust into systems that, by design, don’t trust anyone. That tension sits right at the center of something like SIGN—this notion of a global infrastructure for verifying credentials and distributing tokens. On paper, it sounds clean, almost inevitable. Of course we’d want a shared layer where credentials can be proven and value can move without friction. But the more I sit with it, the more complicated it feels.

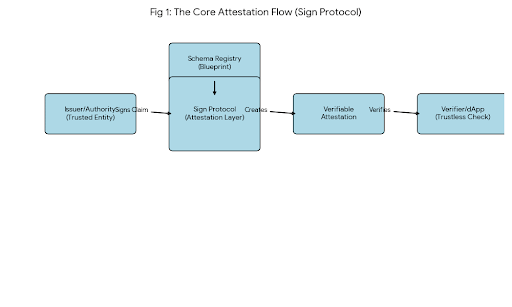

I think about how much of modern life quietly depends on credentials. Degrees, licenses, IDs, memberships—little fragments of proof that say, “yes, this person is allowed to be here, to do this, to access that.” Most of these are locked inside institutions that don’t talk to each other very well. You graduate from a university, and suddenly your achievement lives in a database you don’t control. You get certified somewhere, and proving it elsewhere becomes a small bureaucratic journey. So when I hear about a global system that could standardize and verify all of this, part of me immediately sees the appeal. It feels like fixing something that’s been broken for a long time.

But then there’s the other part of me that hesitates.

Because “global infrastructure” is one of those phrases that sounds neutral until you really unpack it. Who defines the standards? Who gets to issue credentials that actually matter? And maybe more importantly, who gets excluded, even unintentionally? Systems like this tend to reflect the assumptions of the people who build them, and those assumptions don’t always translate well across cultures, economies, or even individual circumstances.

I also find myself wondering about permanence. If credentials become cryptographically verifiable and widely accessible, what does that do to the idea of change? People evolve. They learn, unlearn, reinvent themselves. A system that perfectly remembers everything you’ve ever proven about yourself could be empowering—or it could quietly lock you into past versions of who you were. I’m not sure we’ve fully thought through that tradeoff.

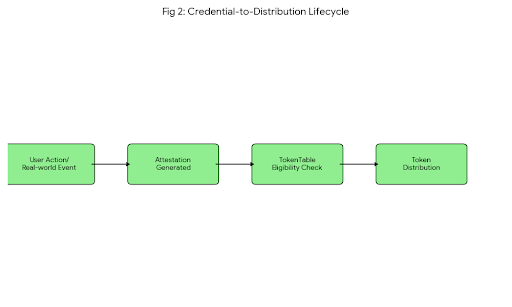

And then there’s the token distribution side of it, which feels like a different kind of complexity layered on top. Tokens are often framed as incentives, as a way to reward participation or contribution. In theory, that aligns interests nicely. But incentives shape behavior in ways that aren’t always obvious. If you start attaching tokens to credentials or actions, you risk turning genuine participation into something transactional. People might begin optimizing for rewards rather than meaning, which subtly changes the character of the entire system.

At the same time, I can’t ignore how powerful the combination could be. Verified credentials plus programmable incentives could unlock entirely new forms of coordination. Imagine being able to prove your skills instantly, anywhere in the world, without relying on intermediaries that may or may not recognize your background. Imagine communities distributing resources based on transparent, verifiable contributions rather than opaque decision-making. There’s something genuinely compelling about that vision.

Still, I keep circling back to trust—not just the technical kind, but the human kind. It’s one thing to verify that a credential is authentic. It’s another to decide whether that credential actually means something. A degree, a certificate, a badge—they’re all proxies for competence or experience, but they’re imperfect ones. If SIGN or anything like it becomes widely adopted, it won’t just be verifying credentials; it will be shaping what counts as a credential in the first place. That’s a subtle but significant kind of influence.

I also can’t shake the feeling that we might be overestimating how much infrastructure alone can solve. There’s a tendency, especially in tech, to believe that better systems lead to better outcomes. Sometimes they do. But sometimes they just make existing dynamics more efficient. If there are inequalities in who gets access to education, to opportunities, to recognition, a global verification system might simply make those inequalities more visible—and possibly more entrenched.

And yet, despite all of this skepticism, I don’t feel dismissive. If anything, I feel cautiously curious. There’s a part of me that wants to see how these ideas evolve in practice, not just in theory. Because systems like SIGN aren’t built all at once; they emerge through iterations, through mistakes, through unexpected uses that no one planned for.

Maybe that’s the real story here. Not whether a global infrastructure for credential verification and token distribution is a good idea in the abstract, but how it behaves once it meets the messy reality of human life. How people adapt it, resist it, reshape it. Whether it ends up empowering individuals or quietly consolidating control in new ways.

I guess what I’m left with is a kind of open-ended question. Not “will this work?” but “what kind of world does this encourage?” And I don’t think there’s a simple answer to that. It depends on choices that haven’t been made yet, by people we don’t know, in contexts we can’t fully predict.

So I keep thinking about it, turning it over in my mind, noticing both the promise and the unease. And maybe that’s the right place to be for now—not fully convinced, not fully skeptical, just paying attention to how something like this unfolds.