one idea i keep circling back to is how most systems still treat privacy and control like they cancel each other out.

you can usually tell which side a system picked within minutes.

either it leans so far into privacy that institutions lose visibility when something actually needs to be verified, or it leans so heavily into control that verification itself starts to feel indistinguishable from surveillance.

and that tension doesn’t stay theoretical for long.

it shows up where it matters most — identity systems, financial rails, public distribution programs — anywhere sensitive data and real accountability intersect.

but i think the real problem isn’t that systems pick the wrong side.

it’s that they frame the problem incorrectly to begin with.

privacy was never meant to mean invisibility.

and control was never meant to require omniscience.

that misunderstanding is where most architectures quietly break.

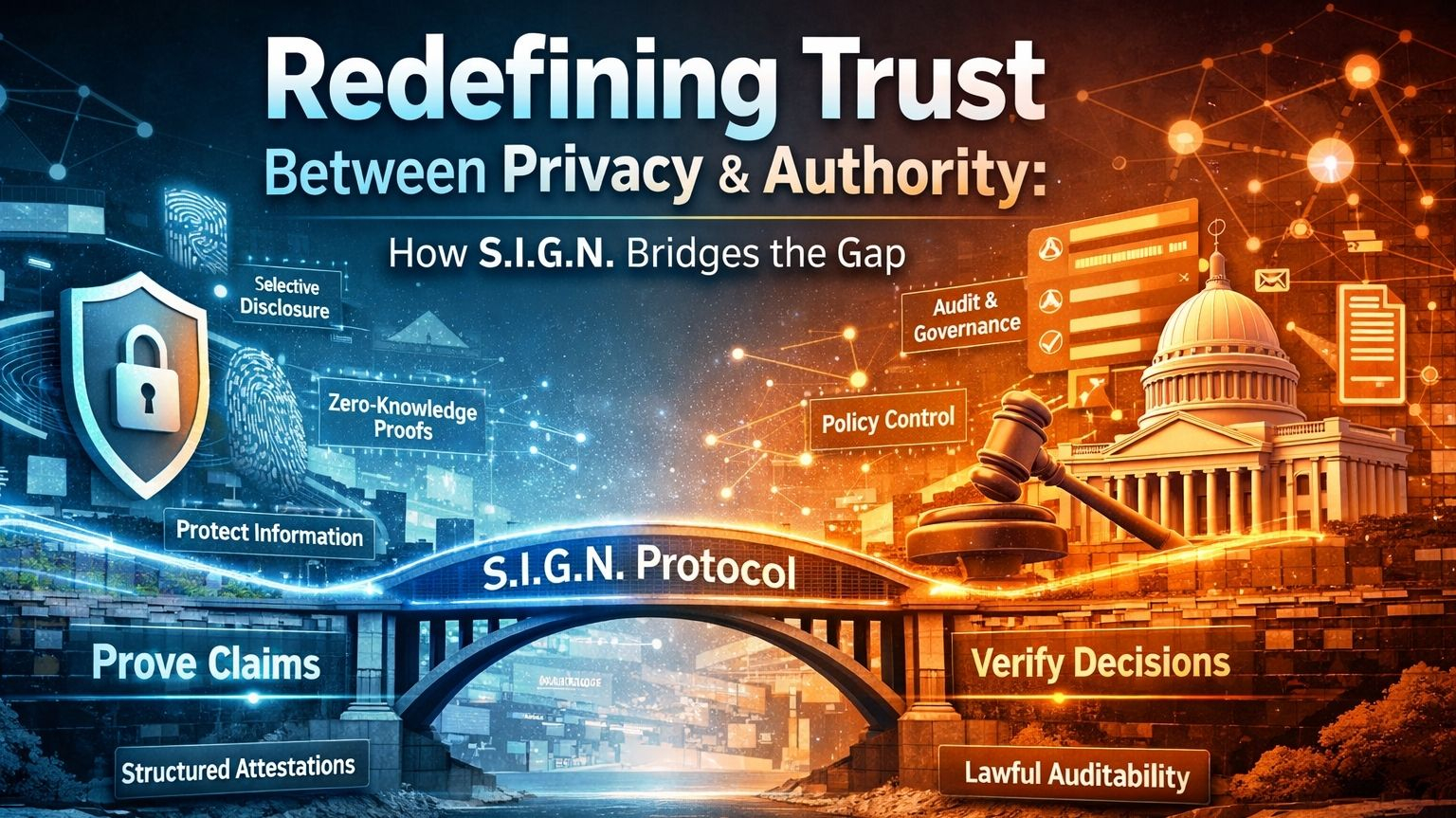

what’s interesting about S.I.G.N. is that it doesn’t try to resolve this tension by choosing a side.

it reframes the entire model.

the shift isn’t from privacy to control.

it’s from data access to claim verification.

and that’s a much narrower, more disciplined way to think about trust.

because once verification is separated from raw data access, the system no longer depends on constant visibility to function.

it depends on structured proofs.

in practical terms, this means you’re no longer exposing entire datasets just to answer simple questions.

you’re proving specific claims — and only those claims.

eligibility without full identity disclosure.

compliance without exposing transaction history.

authorization without revealing unnecessary context.
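
to make "proving one claim and only that claim" concrete, here is a minimal sketch using salted hash commitments. this is a generic selective-disclosure technique, not S.I.G.N.'s actual mechanism, and every name in it (`ISSUER_KEY`, `issue`, `verify_claim`, the sample attributes) is hypothetical. it also uses a shared HMAC key for brevity, where a real system would most likely use asymmetric signatures so the verifier never holds the issuer's secret.

```python
import hashlib
import hmac
import secrets

# hypothetical issuer secret; shared with the verifier only to keep the sketch short
ISSUER_KEY = secrets.token_bytes(32)


def commit(name: str, value: str, salt: bytes) -> str:
    """hash one attribute together with its random salt"""
    return hashlib.sha256(salt + f"{name}={value}".encode()).hexdigest()


def sign(commitments: list[str]) -> str:
    """authenticate the full list of commitments with the issuer key"""
    return hmac.new(ISSUER_KEY, "".join(commitments).encode(), hashlib.sha256).hexdigest()


def issue(attributes: dict[str, str]):
    """issuer side: commit to every attribute, sign only the commitments"""
    salts = {name: secrets.token_bytes(16) for name in attributes}
    commitments = sorted(commit(n, v, salts[n]) for n, v in attributes.items())
    return salts, commitments, sign(commitments)


def verify_claim(name, value, salt, commitments, signature) -> bool:
    """verifier side: sees one attribute, one salt, and opaque hashes for the rest"""
    if not hmac.compare_digest(sign(commitments), signature):
        return False
    return commit(name, value, salt) in commitments


holder = {"name": "a. example", "birth_year": "1990", "eligible_for_subsidy": "yes"}
salts, commitments, signature = issue(holder)

# the holder discloses a single attribute and its salt, nothing else
ok = verify_claim("eligible_for_subsidy", "yes",
                  salts["eligible_for_subsidy"], commitments, signature)
forged = verify_claim("eligible_for_subsidy", "no",
                      salts["eligible_for_subsidy"], commitments, signature)
```

the verifier learns that the eligibility claim was issued, while the name and birth year stay behind salted hashes it cannot reverse.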

most systems optimize for visibility.

S.I.G.N. optimizes for provability.

and that distinction is doing more work than it seems.

because privacy doesn’t survive in systems that rely on broad access.

it survives in systems where exposure is minimized by design, not by policy.

but this is where most “privacy-preserving” systems quietly fall apart.

they focus so much on hiding data that they weaken accountability.

and in real-world systems, that trade-off doesn’t hold.

because sooner or later, someone needs to answer:

who approved this

under what authority

under which ruleset

and whether that decision still holds today

S.I.G.N. doesn’t treat that as an afterthought.

it builds around it.

instead of forcing raw data visibility, it structures evidence.

attestations become traceable.

approvals become attributable.

rules become referenceable.

not everything is exposed.

but nothing critical becomes unverifiable.
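
one way to picture "structured evidence" is a record that carries answers to those four questions without carrying the underlying data. the sketch below is an assumption about what such a record could look like, not S.I.G.N.'s schema; the field names, `revoked_ids`, and `audit_answer` are all made up for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Attestation:
    attestation_id: str
    claim: str          # what was attested, kept out of the audit view below
    approved_by: str    # who approved this
    authority: str      # under what authority
    ruleset_id: str     # under which ruleset
    valid_from: date
    valid_until: date


# hypothetical revocation list, keyed by attestation id
revoked_ids: set[str] = set()


def still_holds(att: Attestation, today: date) -> bool:
    """an attestation holds if it is inside its validity window and not revoked"""
    return (att.attestation_id not in revoked_ids
            and att.valid_from <= today <= att.valid_until)


def audit_answer(att: Attestation, today: date) -> dict:
    """answer the accountability questions without returning the claim itself"""
    return {
        "who_approved": att.approved_by,
        "under_what_authority": att.authority,
        "under_which_ruleset": att.ruleset_id,
        "still_holds": still_holds(att, today),
    }


att = Attestation(
    attestation_id="att-001",
    claim="household qualifies for subsidy tier 2",
    approved_by="officer-17",
    authority="regional benefits office",
    ruleset_id="subsidy-rules-v3",
    valid_from=date(2025, 1, 1),
    valid_until=date(2025, 12, 31),
)

before = audit_answer(att, date(2025, 6, 1))
revoked_ids.add(att.attestation_id)   # the issuer revokes the attestation
after = audit_answer(att, date(2025, 6, 1))
```

the audit view changes the moment the attestation is revoked, and at no point does the auditor need the claim's contents to see that.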

and that’s the balance most systems fail to achieve.

because sovereign control, in practice, was never about seeing everything.

it was about governing what matters.

who can issue

who can verify

who can revoke

what policies apply

and how systems respond when something breaks

control, in this model, is not visibility.

it’s authority over rules, actors, and outcomes.
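
as a rough sketch of control as authority rather than visibility, the policy below decides who may issue, verify, or revoke, and it never touches the data being attested. the actors, the `POLICY` table, and `is_allowed` are hypothetical, not something taken from S.I.G.N. itself.

```python
from enum import Enum


class Action(Enum):
    ISSUE = "issue"
    VERIFY = "verify"
    REVOKE = "revoke"


# hypothetical governance policy: which actor may perform which action
POLICY: dict[str, set[Action]] = {
    "benefits_agency": {Action.ISSUE, Action.REVOKE},
    "payment_provider": {Action.VERIFY},
    "auditor": {Action.VERIFY},
}


def is_allowed(actor: str, action: Action) -> bool:
    """deny by default: unknown actors and unlisted actions are rejected"""
    return action in POLICY.get(actor, set())


can_issue = is_allowed("benefits_agency", Action.ISSUE)
can_revoke = is_allowed("payment_provider", Action.REVOKE)
unknown = is_allowed("unregistered_party", Action.VERIFY)
```

nothing in this table grants read access to raw records. the authority sits in who is listed and what they are permitted to do.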

and that’s a much more realistic definition of how institutions actually operate.

at the same time, privacy isn’t treated as secrecy.

it’s treated as selective provability.

the ability to prove something is true — without exposing everything behind it.

that’s a subtle shift, but it’s the difference between systems that scale and systems that collapse under their own exposure.

S.I.G.N. tries to sit in that middle layer most systems avoid.

not fully transparent.

not fully opaque.

but context-aware.

public where transparency is required.

private where confidentiality is necessary.

hybrid where reality demands both.

and all of it anchored in proofs that can hold up under scrutiny.

i think that’s the real transition happening here.

verification is becoming narrower.

more precise.

more intentional.

because systems don’t actually fail from lack of data.

they fail when they can’t prove the right thing, at the right time, to the right authority.

my only hesitation is where this balance lands in practice.

because “lawful auditability” always sounds clean in architecture.

but in deployment, it depends on governance quality, operator incentives, and how restraint is actually exercised.

the system can enforce structure.

it can define boundaries.

but it can’t guarantee how power is used within them.

and that part doesn’t live in code.

still, as a direction, this feels closer to institutional reality than most approaches.

S.I.G.N. doesn’t assume privacy means hiding everything.

and it doesn’t assume control requires exposing everything.

it treats trust as something that can be proven — selectively, precisely, and under governance.

and maybe that’s the point.

because at scale, trust isn’t about who can see the most.

it’s about who can prove enough, without revealing too much, when it actually matters.

#Sign #SignDigitalSovereignInfra $SIGN @SignOfficial