Last day: just after the CreatorPad snapshot window closed, I found myself still staring at the chain instead of logging off. It did not feel like the end of a campaign. It felt like I had just watched a system settle into itself.

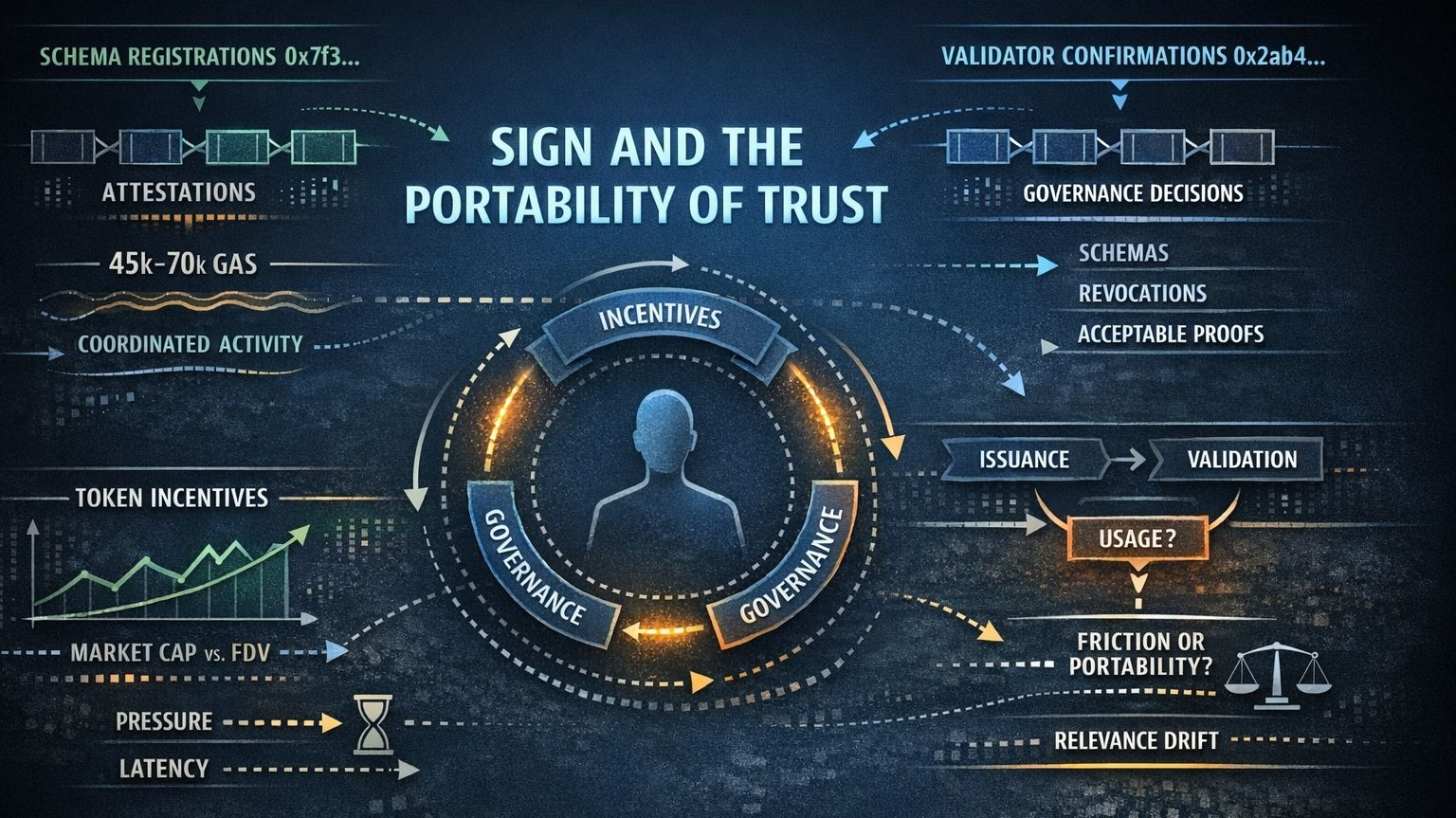

A few attestation calls were still moving through the network in small, disciplined bursts, and what held my attention was not scale or hype, but rhythm. Gas drifted slightly above its usual range, enough to suggest coordinated activity rather than random noise. I kept noticing repeating traces like 0x7f3.. pushing schema registrations and 0x2ab4.. finalizing validator confirmations inside tightly grouped blocks. The average cost per attestation appeared to hover around the 45k–70k gas range, but that was not the real signal. What stood out was consistency. The behavior felt engineered, deliberate, almost like something designed for repeated use rather than temporary attention.

At one point during a simulated credential flow, I hit a pause that stayed with me longer than I expected. The credential had already been issued, but validator confirmation lagged for a few seconds. Nothing failed. Nothing broke. Yet I was sitting in a strange in-between state where the proof technically existed, but could not fully function. That moment felt small on the surface, but conceptually it opened something larger. Systems like this assume a clean progression: issuance, validation, usage. Reality does not always move in that order. In that brief delay, I was not experiencing failure in the code. I was experiencing how timing itself can become a source of doubt inside a trust system. Even minimal latency can distort confidence, not because the logic is wrong, but because perception is fragile.
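To make that in-between state concrete for myself, I sketched it as a tiny state machine. This is only an illustration of the issuance, validation, usage progression described above. The class and method names (`Credential`, `confirm`, `is_usable`) are my own hypothetical choices, not Sign's actual API.

```python
from dataclasses import dataclass
from enum import Enum, auto


class CredentialState(Enum):
    ISSUED = auto()     # the proof exists, validator confirmation is still pending
    CONFIRMED = auto()  # validators have confirmed, the proof can be used


@dataclass
class Credential:
    # Hypothetical model of a credential, not a real Sign data structure.
    subject: str
    state: CredentialState = CredentialState.ISSUED

    def confirm(self) -> None:
        """Simulate validator confirmation arriving after issuance."""
        self.state = CredentialState.CONFIRMED

    def is_usable(self) -> bool:
        """A credential only functions once it has been confirmed."""
        return self.state is CredentialState.CONFIRMED


cred = Credential(subject="alice")
usable_before = cred.is_usable()  # issued, but stuck in the in-between state
cred.confirm()
usable_after = cred.is_usable()   # same credential, now fully functional
```

Nothing in this sketch fails during the gap. The credential is simply issued but not yet usable, which is the window where I felt the doubt.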

The more I traced the mechanics, the less I saw @SignOfficial as a stack of modules and the more I saw it as a loop. The incentives shaping validators are not neutral. They move in a direction, and that direction is influenced by token dynamics that still carry visible pressure, especially when there is a meaningful gap between circulating valuation and fully diluted expectations. That pressure does not stay contained at the market layer. It feeds into how validation is performed, which then shapes what becomes recognized as truth at the technical level. Once that truth is encoded, governance begins forming around it — schemas, revocation rights, acceptable proofs, legitimacy standards. But governance does not remain above the system. It bends back into incentives and starts shaping behavior all over again. Everything conditions everything else. That is why I do not experience Sign as layered architecture. I experience it as recursive design.

I kept mentally contrasting this with systems like Chainlink and Bittensor. Chainlink is fundamentally concerned with importing external truth into the chain. Bittensor is oriented around producing and ranking intelligence. Sign feels different. It operates on another axis. It is not primarily asking what is true or who is the most intelligent. It is asking a quieter and, in some ways, more foundational question: once something has been verified, how far can that verification travel before it begins to lose coherence?

That is where the deeper tension starts to emerge.

Every credential is a snapshot, but reality is never static. A verified identity, an attestation, a proof — each one captures a moment that has already passed. And yet the entire design aims to make that moment portable across contexts, platforms, and time. That portability is powerful, but it also introduces a subtle form of drift. Validity does not automatically preserve relevance. A proof can remain technically correct while gradually losing alignment with the context that once made it meaningful. There is no dramatic exploit in that process. No obvious system failure. Just a quiet widening gap between what is still valid and what is still alive.
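One way I picture that gap is to treat "valid" and "still relevant" as two separate checks. The sketch below is a thought experiment under my own assumptions: the `Attestation` fields, the `is_valid` and `is_fresh` helpers, and the one-year freshness window are all hypothetical and do not come from Sign itself.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass(frozen=True)
class Attestation:
    # Hypothetical fields, chosen only for illustration.
    issued_at: int        # unix timestamp in seconds
    revoked: bool = False


def is_valid(att: Attestation) -> bool:
    """Technically correct: the attestation has not been revoked."""
    return not att.revoked


def is_fresh(att: Attestation, now: int, max_age_seconds: int) -> bool:
    """Still relevant: the snapshot is recent enough for the verifier's context."""
    return now - att.issued_at <= max_age_seconds


att = Attestation(issued_at=0)
now = 400 * SECONDS_PER_DAY        # 400 days after issuance
one_year = 365 * SECONDS_PER_DAY   # an assumed freshness window

still_valid = is_valid(att)                 # never revoked, so still valid
still_fresh = is_fresh(att, now, one_year)  # older than the assumed window
```

In this toy case the attestation passes the validity check and fails the freshness check. No exploit, no failure, only drift.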

Even the market structure around SIGN reflects a version of that same tension. On the surface, the price behavior looks familiar: post-TGE expansion, sharp repricing, fast correction, then partial recovery. That sequence belongs to a pattern the market knows well. But underneath it, the gap between market cap and FDV remains a structural reminder that future supply will eventually pressure the system in ways narrative cannot absorb on its own. Hype may create temporary lift, but only real usage can validate durability. The infrastructure will have to prove itself under demand, under latency, under governance strain, and under the pressure of incentives stretching over time.
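For anyone who wants the arithmetic behind that gap: market cap is price times circulating supply, and FDV is price times total supply. The numbers in this sketch are made up purely to show the calculation and are not SIGN's actual price or supply figures.

```python
def market_cap(price: float, circulating_supply: float) -> float:
    """Value of the tokens that are currently circulating."""
    return price * circulating_supply


def fully_diluted_valuation(price: float, total_supply: float) -> float:
    """Value if every token in the total supply were already circulating."""
    return price * total_supply


# Illustrative placeholder values only, not real SIGN data.
price = 2
circulating_supply = 200
total_supply = 1_000

mc = market_cap(price, circulating_supply)
fdv = fully_diluted_valuation(price, total_supply)

# Share of the fully diluted value that is already in the market.
# The remainder is supply that has yet to unlock.
circulating_share = mc / fdv
```

With these placeholder values only a fifth of the eventual supply is circulating. The lower that share, the more future supply the system still has to absorb.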

What stays with me most is how unflashy the entire experience feels. There is no dramatic wow moment. No spectacle designed to force conviction. Instead, it produces a quieter kind of friction — the kind that keeps returning in thought long after the interface is gone. I keep coming back to the same question: are we actually removing friction, or are we simply relocating it into places that are less visible and therefore easier to ignore? The more I sit with Sign, the less I see it as a system that eliminates complexity. What I see instead is a system that compresses complexity, organizes it, and makes it transferable.

And beyond all the architecture, incentives, proofs, and governance loops, there is still a human being at the center of it. Not a validator. Not a schema designer. Just a person trying to prove something about themselves inside a system that prefers stable, reusable representations. That is the part I cannot stop thinking about. Does making trust portable actually empower the individual, or does it slowly translate them into fixed forms that fail to evolve as quickly as real life does?

That is the tension I keep sitting with.

And to me, that tension is exactly what makes Sign worth taking seriously.

#SignDigitalSovereignInfra @SignOfficial $SIGN