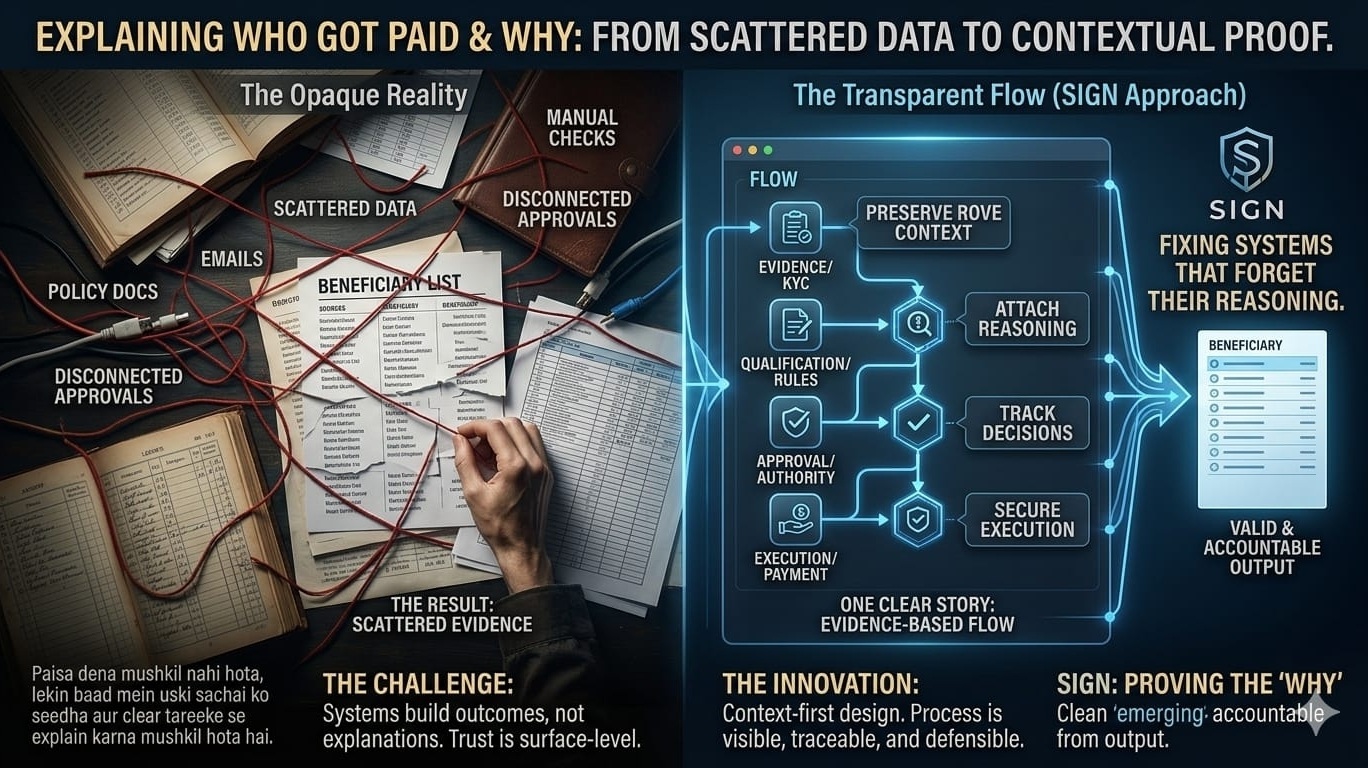

There’s something I’ve noticed over time, and it’s not dramatic or headline-worthy, but it keeps showing up in different forms. A payment goes out, a list gets finalized, and everything looks clean. On paper, it all feels complete. But the moment someone asks a simple question — why this person, based on what rule, approved by whom — things start to fall apart a little. Not because the answer doesn’t exist, but because it’s scattered. A bit of it lives in a spreadsheet, some in emails, some in a policy doc, maybe a KYC check from somewhere else. You can eventually piece it together, but it’s never really one clear story.

And I think that’s where the real issue begins. Most systems today are built to produce outcomes, not explanations. They’re good at getting things done, but not at showing how those things actually happened. A beneficiary list, for example, looks very final. It feels official. But if you look closer, it usually only reflects the result, not the journey behind it. Someone was marked eligible, someone else wasn’t, someone approved it, maybe someone made a small change along the way — but all of that thinking, all of those decisions, don’t really stay attached to the final list. They fade into the process that created it.

That’s what makes this problem deeper than just inefficiency. It’s not only about systems being messy or manual. It’s about the fact that once the outcome is produced, the reasoning behind it becomes fragile. And when that happens, trust becomes a bit surface-level. You’re expected to accept what happened simply because it exists, not because it can be clearly explained later.

This is the part where Sign started to feel different to me. Not because it’s trying to make things faster or more automated — a lot of projects already do that — but because it seems to focus on something more subtle. The idea that the process itself should remain visible, even after it’s finished. That instead of just storing who received what, the system should also preserve why they qualified, which rules were applied, who approved it, and what evidence supported that decision at the time.

And that shift feels small at first, but it changes everything.

Because automation alone doesn’t really solve this. In some ways, it can even make things worse. When a system generates a clean, polished output, it can look more trustworthy than it actually is. The logic underneath can still be unclear, but now it’s hidden behind something that looks even more official. What Sign seems to be doing instead is separating the idea of proving something from the act of executing it. One layer focuses on evidence, claims, and approvals. The other handles distribution, allocations, and execution. That separation feels intentional, like it’s trying to make sure actions don’t exist without context.

And when you start thinking in terms of flows instead of lists, things become harder to hide. A list is static — it’s just there, finished. But a flow forces you to think step by step. First there’s evidence, then qualification, then approval, then execution. Each part connects to the next. And if something goes wrong, you can trace it back more clearly. You can ask better questions. Who approved this? Under which version of the rules? Was it automatic, or did someone intervene? Those questions don’t disappear into the system anymore.
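To make the list-versus-flow difference a bit more concrete, here is a minimal sketch in Python. It is not Sign's actual API or data model; every name in it (`Step`, `PaymentFlow`, `FLOW_ORDER`, the actors, and the rule version string) is a hypothetical I made up for illustration. It only shows the shape of the idea: each step has to arrive in order, and the finished record can still answer who approved, under which rule version, and whether a person intervened.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical step order, taken from the flow described above.
FLOW_ORDER = ["evidence", "qualification", "approval", "execution"]


@dataclass(frozen=True)
class Step:
    kind: str                           # one of FLOW_ORDER
    actor: str                          # who or what performed the step
    detail: str                         # short human-readable note
    rule_version: Optional[str] = None  # which version of the rules applied, if any
    automatic: bool = True              # False when a person intervened


@dataclass
class PaymentFlow:
    beneficiary: str
    steps: List[Step] = field(default_factory=list)

    def add(self, step: Step) -> None:
        """Append a step, refusing anything that skips ahead in the flow."""
        if len(self.steps) >= len(FLOW_ORDER):
            raise ValueError("flow is already complete")
        expected = FLOW_ORDER[len(self.steps)]
        if step.kind != expected:
            raise ValueError(f"expected {expected!r}, got {step.kind!r}")
        self.steps.append(step)

    def explain(self) -> dict:
        """Answer the questions a bare list cannot answer."""
        by_kind = {s.kind: s for s in self.steps}
        approval = by_kind.get("approval")
        qualification = by_kind.get("qualification")
        return {
            "approved_by": approval.actor if approval else None,
            "rule_version": qualification.rule_version if qualification else None,
            "manual_intervention": any(not s.automatic for s in self.steps),
        }


# Illustrative data only; none of these actors or values come from a real system.
flow = PaymentFlow("beneficiary-001")
flow.add(Step("evidence", "kyc-service", "identity check passed"))
flow.add(Step("qualification", "rules-engine", "meets eligibility rule", rule_version="v2"))
flow.add(Step("approval", "officer-a", "approved after review", automatic=False))
flow.add(Step("execution", "payout-service", "payment sent"))

print(flow.explain())
```

The point of the ordering check in `add` is that an execution step cannot exist without the evidence, qualification, and approval that came before it, which is the "actions don't exist without context" idea in miniature.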

I think that’s where the real weight of this idea sits. The problem with opaque systems isn’t just technical; it’s structural. When the reasoning behind decisions isn’t preserved properly, it creates space for things that are hard to detect later: small biases, quiet exceptions, simple mistakes, or even intentional manipulation. And when issues come up, it becomes difficult to say what actually went wrong. Was it the data? The policy? The execution? Or just a broken handoff somewhere in between?

A system that keeps the full chain intact doesn’t eliminate those risks, but it changes how they behave. It makes them more visible. Less deniable. Harder to smooth over after the fact.

At the same time, I don’t think any system can fully solve this on its own. There’s always a gap between what technology allows and what people choose to do with it. A system can preserve evidence, structure decisions, and make everything traceable, but it can’t force transparency. That part still depends on the people running it, the rules they follow, and how much accountability they’re willing to accept.

So when I think about what Sign is really trying to address, it doesn’t feel like it’s just fixing messy lists. It feels like it’s trying to fix something more fundamental — the way systems forget their own reasoning. The way decisions turn into outcomes, and then lose the story that made them valid in the first place.

And once that story is gone, everything else becomes a bit performative. The list looks correct, the payment went through, the report checks out — but underneath it, the logic is thin or fragmented. You can’t easily reconstruct it. You can’t confidently defend it.

That’s why this direction stands out to me. Not because it sounds advanced, but because it focuses on something most people overlook. Sending money is usually the easy part. Being able to prove, later on, that it was sent for the right reason, under the right conditions, with the right authority — that’s where things get complicated.

And maybe that is the real issue. Giving out money isn’t hard, but explaining the truth of it later, plainly and clearly, is. And that gap, more than anything else, is what feels worth paying attention to.

#SignDigitalSovereignInfra @SignOfficial $SIGN