The Quiet Architecture of Explainability

@SignOfficial I first noticed the problem not in a whitepaper but in a public meeting. A city official was presenting an automated decision on housing eligibility. The resident across the table asked a simple question: why. The official opened a laptop, scrolled through something, and said the system had flagged the application. No mechanism. No sequence. Just an outcome wearing the mask of explanation.

That moment keeps returning when I think about SIGN Token. The assumption most observers carry is that blockchain-based credentialing solves a transparency problem by making records immutable and public. But immutability is not the same as legibility. A record can exist on-chain and still be meaningless to anyone without the tools, context, or authority to interpret it correctly.

SIGN Token's architecture sits inside a broader class of infrastructure sometimes called attestation layers. What appears to be happening is that cryptographic signatures are attached to documents, decisions, or credentials, giving them verifiable origin. What is actually happening underneath is more structural: each attestation carries metadata that can be filtered, scoped, and surfaced differently depending on who is requesting it and under what authority. This is not a simple signature. It is a permissioned visibility system dressed in verification language.

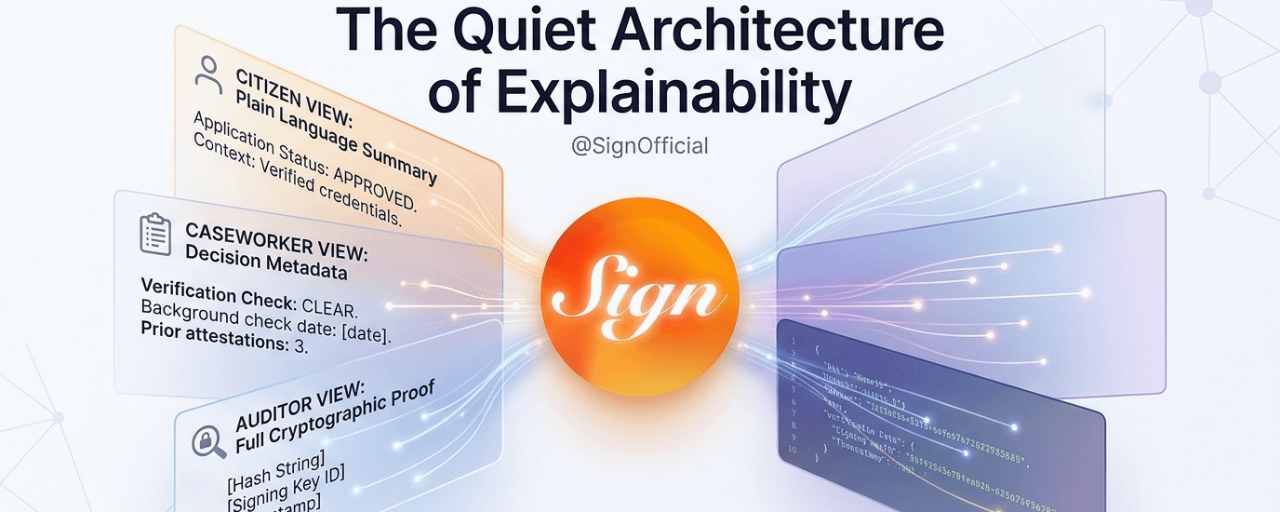
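To make that distinction concrete, here is a minimal Python sketch of an attestation whose scoping metadata sits inside the signed body. Because the metadata is signed, the audience rules cannot be quietly edited after issuance. This is an illustration under stated assumptions, not SIGN's actual data model. The field names (`payload`, `metadata`, `visible_to`) are hypothetical. HMAC-SHA256 from the standard library stands in for a public-key signature; a real attestation layer would use asymmetric signatures so that anyone can verify a record without holding the issuer's secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    payload: dict    # the claim itself, e.g. a document status
    metadata: dict   # scoping data (hypothetical): issuer, which roles may see it
    signature: str   # hex MAC covering payload AND metadata


def canonical_bytes(payload: dict, metadata: dict) -> bytes:
    # Sorted keys and fixed separators: identical content always serializes identically.
    body = {"payload": payload, "metadata": metadata}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(payload: dict, metadata: dict, key: bytes) -> Attestation:
    # HMAC-SHA256 stands in for a public-key signature in this sketch.
    mac = hmac.new(key, canonical_bytes(payload, metadata), hashlib.sha256).hexdigest()
    return Attestation(payload, metadata, mac)


def verify(att: Attestation, key: bytes) -> bool:
    expected = hmac.new(key, canonical_bytes(att.payload, att.metadata), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, att.signature)


key = b"demo-issuer-key"  # hypothetical shared secret
att = sign({"document": "passport", "status": "verified"},
           {"issuer": "city-registry", "visible_to": ["caseworker", "auditor"]},
           key)
print(verify(att, key))  # True

# Widening the audience tag after signing breaks verification.
widened = Attestation(att.payload,
                      {**att.metadata, "visible_to": ["public"]},
                      att.signature)
print(verify(widened, key))  # False
```

The design point is that "who may see this" is part of what the issuer vouches for. The visibility rules are not a setting layered on afterwards.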

That design enables something important for public-sector use. A citizen asking why their benefit application was denied does not need the same explanation as a compliance auditor reviewing whether the denial followed proper protocol. A caseworker processing a new file does not need the cryptographic proof of a prior attestation; they need the human-readable summary of what it means for this case. One underlying record. Three different surfaces. The architecture has to carry all three simultaneously, or it collapses into either over-disclosure or opacity.

Ethereum's base layer handles roughly 12 to 15 transactions per second. That capacity is shared by every application on the network, so attestation-heavy applications that push against it start queuing. Layer 2 rollups push the ceiling to several thousand operations per second, but they separate fast provisional confirmation from final settlement on the base layer. That gap matters when a caseworker needs a real-time status check. That operational tension is not a failure of the token design. It is a structural reminder that explanation is a live process, not a retrieval event.
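A quick calculation shows why those throughput figures matter. The sketch below uses the 400,000-credential volume this article cites for municipal systems. It makes two simplifying assumptions: each attestation is one transaction, and the application has the full throughput to itself. The 2,000 operations per second figure is a placeholder for "several thousand", not a measured layer 2 rate.

```python
SECONDS_PER_HOUR = 3600


def drain_hours(pending: int, tps: float) -> float:
    """Hours to clear a backlog, assuming one transaction per attestation
    and the full throughput available to this one application."""
    if tps <= 0:
        raise ValueError("throughput must be positive")
    return pending / tps / SECONDS_PER_HOUR


BACKLOG = 400_000  # credential volume cited in this article

for label, tps in [("base layer, 12 tps", 12),
                   ("base layer, 15 tps", 15),
                   ("layer 2, 2,000 tps (assumed)", 2_000)]:
    print(f"{label}: {drain_hours(BACKLOG, tps):.2f} h")

# base layer, 12 tps: 9.26 h
# base layer, 15 tps: 7.41 h
# layer 2, 2,000 tps (assumed): 0.06 h
```

Even with the whole base layer to itself, a backlog of that size takes most of a working day to clear. In practice the application competes with all other traffic, so real figures would be worse. The assumed layer 2 rate clears the backlog in a few minutes, which is why the open question shifts from throughput to finality.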

Consider what happens when a caseworker queries an attestation. At the surface, they see a status indicator, perhaps a green confirmation that an identity document has been verified. What the system is actually doing is filtering a credential bundle against the caseworker's role permissions, then translating a machine-readable assertion into plain language using a pre-configured presentation layer. The caseworker never sees the underlying hash. They should not. But the auditor absolutely needs it, alongside the timestamp, the signing key, and the chain of custody that preceded it.
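The two surfaces in that example can be sketched as a permission filter followed by a translation step. Everything here is hypothetical, including the role names, the field names, the `identity.verified` assertion code, and the placeholder hash and timestamp. The sketch shows the pattern of projecting one record through role rules; it does not reproduce SIGN's own presentation API.

```python
from dataclasses import dataclass, field


@dataclass
class Credential:
    claim: str                      # machine-readable assertion
    record_hash: str
    timestamp: str
    signing_key: str
    custody: list = field(default_factory=list)  # prior handlers, oldest first


# Hypothetical role permissions: which fields each role may see.
ROLE_FIELDS = {
    "caseworker": {"claim"},
    "auditor": {"claim", "record_hash", "timestamp", "signing_key", "custody"},
}

# Roles that receive plain language instead of the raw assertion.
TRANSLATED_ROLES = {"caseworker"}

# Hypothetical presentation layer: machine assertion -> plain-language wording.
PLAIN_LANGUAGE = {
    "identity.verified": "Identity document verified",
    "identity.unverified": "Identity document could not be verified",
}


def view(cred: Credential, role: str) -> dict:
    allowed = ROLE_FIELDS.get(role)
    if allowed is None:
        # Default-deny: an unknown role sees nothing rather than everything.
        raise PermissionError(f"no view defined for role {role!r}")
    out = {name: getattr(cred, name) for name in sorted(allowed)}
    if role in TRANSLATED_ROLES:
        out["summary"] = PLAIN_LANGUAGE[out.pop("claim")]
    return out


cred = Credential(
    claim="identity.verified",
    record_hash="9f2c41d0",              # placeholder, not a real digest
    timestamp="2024-05-02T10:14:00Z",    # placeholder
    signing_key="registry-key-01",
    custody=["intake-desk", "registry"],
)
print(view(cred, "caseworker"))  # {'summary': 'Identity document verified'}
print(view(cred, "auditor"))
```

The caseworker gets one translated line. The auditor gets the hash, timestamp, signing key, and custody chain from the same object. Both views come from one record, so neither can drift from it.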

This layering introduces a real coordination challenge. If the presentation rules (what each role sees and how it is worded) are not governed carefully, the same underlying truth generates contradictory explanations. That risk grows with scale. Attestation networks in production today are handling upward of 400,000 credentials across municipal systems, and at that volume inconsistent presentation logic produces institutional confusion rather than clarity.
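One way to govern presentation rules is to keep them in a single table and check them against the canonical meaning of each status before they ship. The sketch below makes one hypothetical assumption: each template declares which outcome its wording implies. The check then flags any role whose wording is missing or contradicts the record. The role names, status codes, and wording are illustrative only.

```python
# Canonical outcome per status code: the single source of truth.
CANONICAL = {"approved": True, "denied": False, "pending": None}

# Hypothetical presentation rules: (role, status) -> (wording, outcome the wording implies).
TEMPLATES = {
    ("citizen", "approved"): ("Your application was approved.", True),
    ("citizen", "denied"): ("Your application was not approved.", False),
    ("caseworker", "approved"): ("Eligible: proceed to payment setup.", True),
    ("caseworker", "denied"): ("Ineligible: notify the applicant.", False),
}


def find_inconsistencies(templates: dict, canonical: dict, roles: list) -> list:
    """Return every (role, status) pair whose wording is missing or contradicts the record."""
    problems = []
    for role in roles:
        for status, outcome in canonical.items():
            rule = templates.get((role, status))
            if rule is None:
                problems.append(f"{role}/{status}: no wording defined")
            elif rule[1] != outcome:
                problems.append(f"{role}/{status}: wording implies {rule[1]}, record says {outcome}")
    return problems


print(find_inconsistencies(TEMPLATES, CANONICAL, ["citizen", "caseworker"]))
# ['citizen/pending: no wording defined', 'caseworker/pending: no wording defined']
```

Used as a deployment gate, a check like this lets reviewers catch contradictory explanations before release, instead of citizens discovering them afterwards. It cannot judge whether wording is clear, only whether it agrees with the record.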

There is also the regulatory dimension. Some jurisdictions regulate automated decisions, such as the EU under GDPR Article 22 (read together with the rights in Articles 13 to 15 to meaningful information about the logic involved), along with emerging equivalents in Southeast Asia and the Gulf. In these jurisdictions, the explanation has to meet a legal standard, not just a technical one. SIGN Token's infrastructure can anchor the data. But the interpretive layer between the attestation and the citizen-facing explanation is where most governance failures occur. A number on-chain does not constitute a reason. It constitutes evidence for a reason. Someone, or some rule system, has to perform that translation.
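The translation from evidence to reason can itself be made explicit. In this sketch, attested values serve as the evidence. Every reason a citizen receives names the rule that produced it, so a reviewer can trace the explanation back to both the policy and the record. The rule IDs, fact names, and income threshold are hypothetical. Whether output like this meets any particular legal standard is a separate question that the code does not settle.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str                   # citable identifier, e.g. a policy clause
    fact: str                      # attested fact the rule reads
    test: Callable[[Any], bool]    # passes when the fact satisfies the rule
    failure_reason: str            # plain-language reason shown when it fails


def explain(evidence: dict, rules: list) -> list:
    """Translate attested facts into reasons; an empty list means no rule failed."""
    reasons = []
    for rule in rules:
        if rule.fact not in evidence:
            reasons.append(f"[{rule.rule_id}] Missing evidence: {rule.fact}")
        elif not rule.test(evidence[rule.fact]):
            reasons.append(f"[{rule.rule_id}] {rule.failure_reason}")
    return reasons


# Hypothetical eligibility rules and threshold, for illustration only.
RULES = [
    Rule("H-1", "monthly_income", lambda v: v <= 2500,
         "Reported monthly income exceeds the 2,500 limit."),
    Rule("H-2", "residency_verified", lambda v: v is True,
         "Residency could not be verified."),
]

evidence = {"monthly_income": 3100, "residency_verified": True}  # values read from attestations
print(explain(evidence, RULES))
# ['[H-1] Reported monthly income exceeds the 2,500 limit.']
```

Missing evidence is reported as its own reason rather than treated as a failed test. This keeps "we could not check" distinct from "you did not qualify".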

What $SIGN Token actually represents, when placed in this context, is less a transparency tool and more a coordination substrate. It creates a shared, tamper-resistant record that multiple institutional actors can reference simultaneously while receiving different levels of detail. That is architecturally valuable in ways that most discussions about blockchain credentialing miss entirely. The headline is verification. The structural contribution is role-appropriate coherence across a system that would otherwise fragment into disconnected, unreconcilable versions of the same event.

The deeper implication reaches beyond any single token or protocol. Public-sector automation is accelerating, and some welfare systems now process more than 60,000 decisions per month. The infrastructure underneath those decisions therefore has to carry explainability as a first-class property, not an afterthought. SIGN Token, designed carefully, points toward what that infrastructure looks like.

Not a mirror. A filter. A structured, permissioned lens through which the same reality becomes legible to everyone who needs to see it, in exactly the form they can actually use. #SignDigitalSovereignInfra