Why the Quality Gate Is the Real Risk

i spent eighteen months doing user acquisition for a mid-size mobile studio, and honestly, the moment i read through what Pixels is building with its publishing model, i had to put the document down and recalculate everything i thought i knew about game economics 😂

because i know exactly what forty-dollar user acquisition costs feel like in practice. i know what it means to spend that forty dollars acquiring a player who churns in week two and generates nothing. i know the math that makes most mobile games fundamentally unprofitable at scale unless you get extraordinarily lucky with a small group of high spenders.
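to make that math concrete, here is a minimal python sketch of one acquisition cohort. every number in it is hypothetical (1,000 installs at the forty-dollar CPI, a handful of small spenders, a few whales), and `cohort_profit` and `whales_to_break_even` are illustrative helpers, not anyone's real tooling.

```python
import math

# Illustrative numbers only; they are not from any real studio's data.
CPI = 40.0          # cost per install: the forty-dollar figure from the post
INSTALLS = 1_000    # size of the acquired cohort


def cohort_profit(installs: int, cpi: float, payers: list[tuple[int, float]]) -> float:
    """Revenue from paying players minus the cost of acquiring the whole cohort.

    `payers` is a list of (player_count, lifetime_revenue_per_player) tuples.
    Everyone not listed is assumed to churn early and spend nothing.
    """
    revenue = sum(count * ltv for count, ltv in payers)
    return revenue - installs * cpi


def whales_to_break_even(installs: int, cpi: float, base_revenue: float, whale_ltv: float) -> int:
    """How many high spenders are needed on top of base_revenue to cover acquisition."""
    gap = installs * cpi - base_revenue
    return max(0, math.ceil(gap / whale_ltv))


small_spenders = [(45, 20.0)]  # 45 players spend $20 each; nobody else spends anything
print(cohort_profit(INSTALLS, CPI, small_spenders))                  # -39100.0
print(cohort_profit(INSTALLS, CPI, small_spenders + [(5, 2000.0)]))  # -29100.0
print(whales_to_break_even(INSTALLS, CPI, 900.0, 2000.0))            # 20
```

in this made-up cohort, 20 of the 1,000 installs (2%) each have to spend $2,000 before the cohort pays back, which is the dependence on a small group of high spenders described above.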

Pixels' publishing flywheel flips that math. and the mechanism that flips it is not the token and not the staking model. it is data portability.

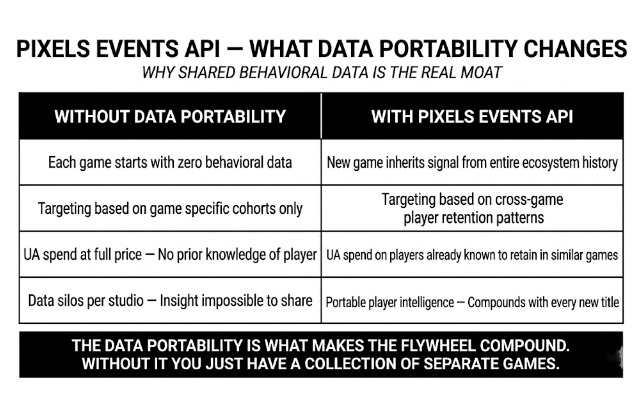

here is how it actually works. every player action inside any Pixels-integrated game gets logged through the Events API. that data feeds LTV curves, fraud scores, churn vectors and session depth patterns, and the models built on it retrain nightly. here is the part that changes the economics completely: that data is not siloed per game. it is shared across the entire protocol. so when a new studio integrates tomorrow, it does not start from zero behavioral signal. it inherits everything that came before it: every cohort pattern, every retention curve, every churn indicator that prior games already paid to discover.
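the post does not say how the Events API data is actually modeled, so this is only a sketch of the general idea of inherited signal, using a standard beta-binomial pooling trick rather than anything Pixels has documented. `Cohort`, `pooled_prior`, the `strength` parameter and all the numbers are hypothetical. the point: a new game with 20 players gets a retention estimate anchored to the network's history instead of its own tiny, noisy sample.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    game: str
    players: int
    retained_week2: int


def pooled_prior(cohorts: list[Cohort], strength: float = 100.0) -> tuple[float, float]:
    """Beta(alpha, beta) prior on week-2 retention built from every prior game.

    `strength` is how many "pseudo-players" the shared history is worth, so a
    new game's own data can still move the estimate once it has enough players.
    """
    players = sum(c.players for c in cohorts)
    retained = sum(c.retained_week2 for c in cohorts)
    rate = retained / players
    return rate * strength, (1.0 - rate) * strength


def posterior_retention(prior: tuple[float, float], players: int, retained: int) -> float:
    """Posterior mean week-2 retention for a new game after its first players."""
    alpha, beta = prior
    return (alpha + retained) / (alpha + beta + players)


# Hypothetical history from two games already in the network.
history = [Cohort("game_a", 10_000, 3_000), Cohort("game_b", 5_000, 1_500)]
prior = pooled_prior(history)

# A brand-new game has seen only 20 players, 10 of whom came back in week 2.
print(round(10 / 20, 3))                             # 0.5   (its own data alone)
print(round(posterior_retention(prior, 20, 10), 3))  # 0.333 (with inherited signal)
```

the `strength` knob is the design choice that matters: set it high and a new game barely moves off the network average, set it low and the inherited history stops helping.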

the second game in the network is categorically different from the first. the tenth is different again. the compounding is real, and it is structural, not speculative.

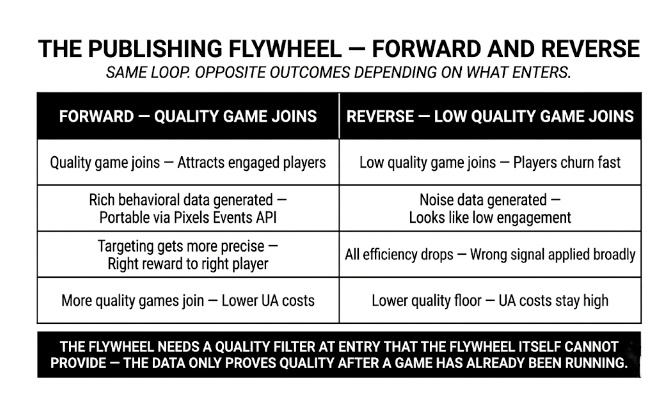

what bugs me: the loop only runs cleanly in one direction. and the direction depends entirely on what enters the system. a good game joining the network generates rich behavioral signal. engaged players. meaningful retention data. LTV curves that actually predict future behavior. that signal sharpens the targeting for every other game in the protocol.

a low-quality game joining generates something else entirely. players who churn in week one because of bad game design produce behavioral data that looks like low engagement. the model cannot always distinguish between a player who churned because of poor fit and a player who churned because the game was badly built. that noise enters the shared dataset. it degrades the signal quality for studios that did nothing wrong. and nobody has described a quality filter at entry that is strong enough to prevent this.
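a sketch of the contamination problem, with the same caveat: these are hypothetical cohorts and a deliberately naive pooled metric, not the protocol's real model. the pool only records whether a player came back, not why they left, so one badly built game drags the shared baseline down for every studio in it.

```python
def pooled_retention(cohorts: list[tuple[int, int]]) -> float:
    """Share of players retained at week 2 across every game in the pool.

    Each cohort is (players, retained). The pool only sees the churn outcome,
    not *why* a player left, so churn caused by a badly built game looks the
    same as churn caused by poor player fit.
    """
    players = sum(p for p, _ in cohorts)
    retained = sum(r for _, r in cohorts)
    return retained / players


good_games = [(10_000, 3_000), (5_000, 1_500)]  # hypothetical, 30% week-2 retention each
bad_game = [(15_000, 750)]                      # hypothetical, 5% week-2 retention

print(pooled_retention(good_games))             # 0.3
print(pooled_retention(good_games + bad_game))  # 0.175
```

the two good games did not change at all, yet the shared week-2 baseline they are measured against drops from 30% to 17.5%.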

The tokenomics angle nobody discusses:

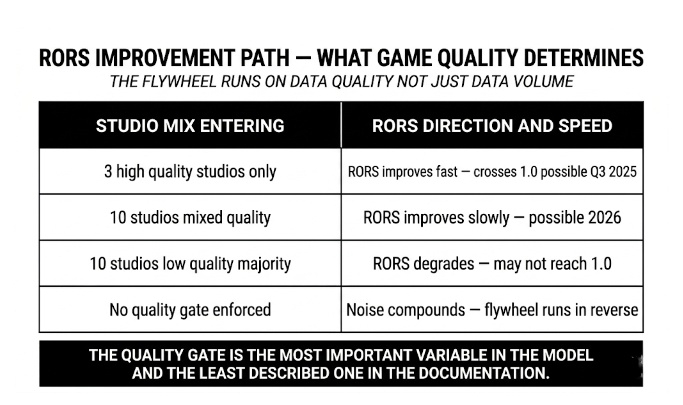

the staking model is supposed to function as this quality filter. games compete for $PIXEL ecosystem emissions based on community staking votes. in theory, stakers allocate toward games with strong RORS performance, which creates a selection pressure that keeps low-quality titles from capturing meaningful emission share. in practice, Phase 2 dynamic pools are not live yet, and Phase 3 open pools require RORS above 1, which takes months of operational data to demonstrate. the quality filter the flywheel depends on is being built in phases while the flywheel is already running. there is a window where the selection mechanism is still incomplete and the data loop is live at the same time. nobody is modeling what happens inside that window.
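the documentation as described here gives the ingredients (stake-weighted competition for emissions, a RORS-above-1 bar for Phase 3 open pools) but not the formula. this sketch assumes the simplest possible version, a pure proportional split by stake with an optional RORS cutoff; `Game`, `allocate_emissions` and all the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Game:
    name: str
    staked: float  # $PIXEL staked toward this game by the community
    rors: float    # the game's RORS (hypothetical values below)


def allocate_emissions(games: list[Game], budget: float,
                       min_rors: Optional[float] = None) -> dict[str, float]:
    """Split an emission budget across games in proportion to stake.

    If `min_rors` is set (a stand-in for a Phase 3-style "RORS above 1" rule),
    games at or below that threshold are dropped before the split.
    """
    eligible = [g for g in games if min_rors is None or g.rors > min_rors]
    total_stake = sum(g.staked for g in eligible)
    if total_stake == 0:
        return {}
    return {g.name: budget * g.staked / total_stake for g in eligible}


games = [Game("game_a", staked=600.0, rors=1.2), Game("game_b", staked=400.0, rors=0.6)]
print(allocate_emissions(games, 1_000.0))                # {'game_a': 600.0, 'game_b': 400.0}
print(allocate_emissions(games, 1_000.0, min_rors=1.0))  # {'game_a': 1000.0}
```

without the cutoff, a game returning 0.6 on its rewards still captures 40% of emissions purely on stake. that gap between "stake decides" and "RORS decides" is the window the paragraph above is worried about.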

My concern though: RORS is sitting at approximately 0.8 right now. that means every reward token distributed is still generating a net economic loss at the protocol level. the flywheel narrative assumes RORS crosses 1.0 as the data loop matures and targeting improves. that is the correct direction of travel. but crossing 1.0 requires the data to improve fast enough to outpace the emission budget being deployed, and that race has a variable the documentation does not quantify: how many low-quality games can enter the system before the noise they generate slows RORS improvement more than their added studio count accelerates it? nobody has published that number. it may not exist yet as a calculated figure.
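a toy version of that race, assuming RORS means return on reward spend (value returned per reward token distributed), so 0.8 is a net loss of about 0.2 per token. the linear model, the per-game gain and drag coefficients and the game counts are all invented for illustration; nothing here is a Pixels figure.

```python
from typing import Optional


def net_per_reward_token(rors: float) -> float:
    """Net value per reward token, assuming RORS = value returned / rewards spent."""
    return rors - 1.0


def months_to_cross_one(rors: float, good_games: int, bad_games: int,
                        gain_per_good: float, drag_per_bad: float,
                        max_months: int = 120) -> Optional[int]:
    """Toy race: each month RORS moves by gain_per_good*good - drag_per_bad*bad.

    Returns the first month RORS exceeds 1.0, or None if it never does
    within max_months. All coefficients are hypothetical.
    """
    monthly_change = gain_per_good * good_games - drag_per_bad * bad_games
    for month in range(1, max_months + 1):
        rors += monthly_change
        if rors > 1.0:
            return month
    return None


print(round(net_per_reward_token(0.8), 2))            # -0.2
print(months_to_cross_one(0.8, 10, 0, 0.003, 0.004))  # 7
print(months_to_cross_one(0.8, 10, 4, 0.003, 0.004))  # 15
print(months_to_cross_one(0.8, 10, 8, 0.003, 0.004))  # None
```

in this toy model the loop stalls once `drag_per_bad * bad_games` reaches `gain_per_good * good_games`; with these made-up coefficients that happens somewhere between 7 and 8 low-quality games per 10 good ones. the real coefficients are exactly the number the post says nobody has published.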

what they get right: the core insight behind the flywheel is correct, and it is more sophisticated than anything else operating in Web3 gaming right now. most protocols treat each game as a separate economic unit. Pixels treats the player as the unit and the game as the acquisition channel. that reframe changes everything about how UA efficiency compounds. the behavioral data portability is not a marketing claim. it is a genuine structural advantage that gets harder to replicate with every month that passes and every game that contributes signal to the shared model. a studio trying to compete with this from scratch in two years would need to recreate not just the infrastructure but the entire dataset history. that's a real moat.

what worries me: the documentation describes the forward flywheel with precision, but the quality gate problem that can run it in reverse gets one sentence. the whitepaper mentions that better games attract more stakers and that games compete to demonstrate strong economics. but demonstrating strong economics takes months of live data, and during those months a low-quality game is contributing noise to the shared dataset. the asymmetry between how precisely the upside is described and how vaguely the downside is described is itself a data point worth paying attention to.

honestly, i don't know whether the publishing flywheel compounds into the self-sustaining growth engine the documentation describes, or whether it turns out to be a system that is only as strong as the quality of the games it lets in, with nobody having said clearly who decides that or how.

what's your take: genuinely compounding infrastructure, or a flywheel that depends on a quality gate that doesn't fully exist yet??

🤔