Honestly... I didn't expect to pay this specific kind of attention while reading how Binance Ai Pro describes its strategy workflow.

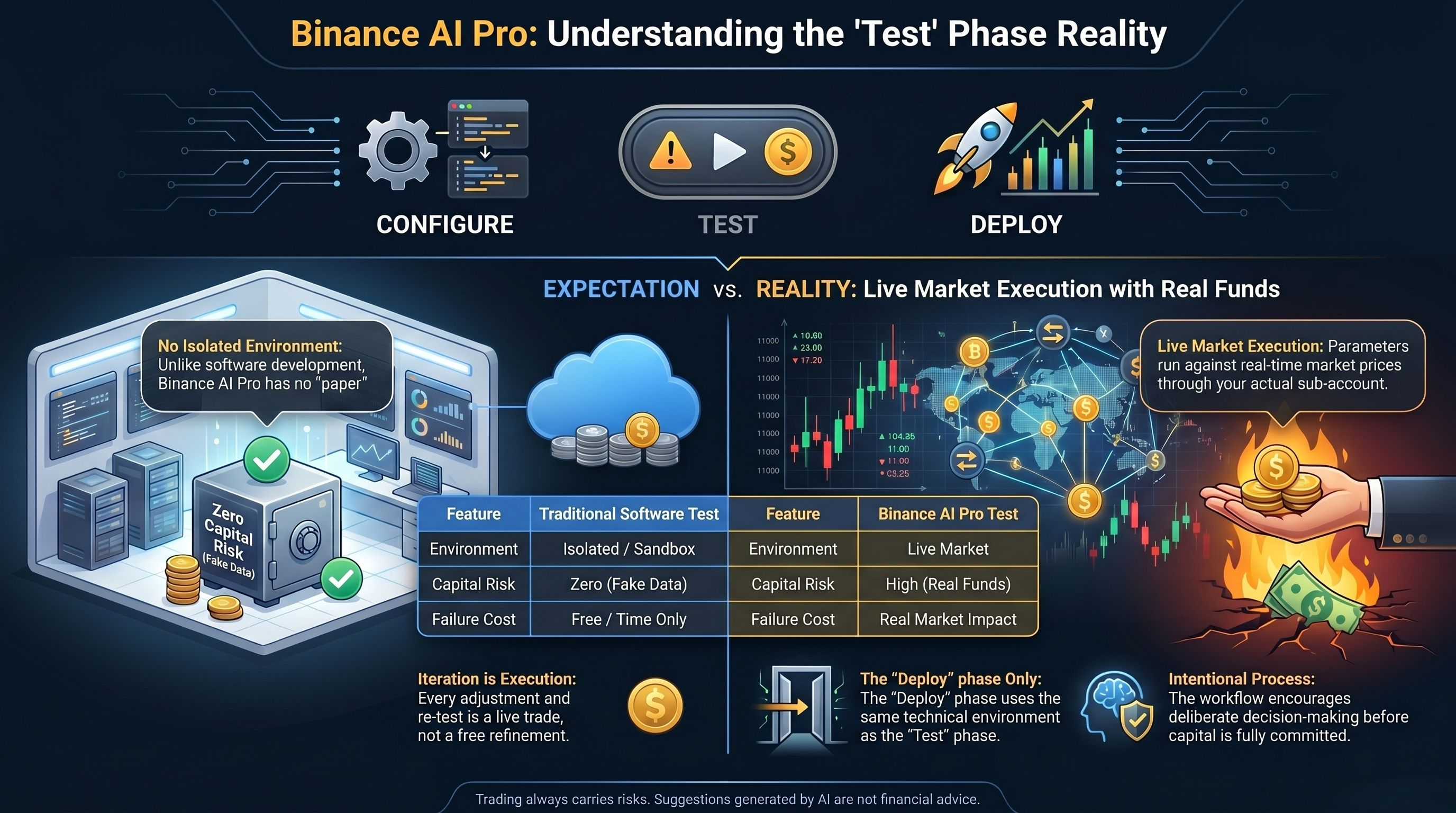

Not skepticism. not alarm. something closer to the feeling you get when a feature described as configure, test, and deploy turns out to compress two very different things into a single phrase, and the word you assumed carried the most protection turns out to carry the least.

because there's a pattern in how agentic platforms describe their strategy pipelines that this space accepts without examining what testing actually means at the execution layer. the pitch frames the workflow as sequential and safe. configure your parameters first. test them before anything goes live. deploy only when you are confident. the sequence sounds like a development environment with a staging layer between thinking and doing.

but testing in a no-code productivity tool is not the same as testing in an automated trading system. when you test a workflow in a productivity tool, the worst outcome is a draft email you did not mean to send. when you test a trading strategy in Binance Ai Pro, the test runs against real market conditions, in real time, through the same sub-account that holds your real funds.

and to be clear, the product they are describing is real. Binance Ai Pro enables users to configure, test, and deploy their own trading parameters using third-party LLM tools and AI Skills to submit and manage trade orders. the workflow is genuine and the capability is significant.

so yeah... the testing step is real.

but a testing step has never been the hard part of strategy deployment.

the hard part is environment isolation. and this is where the assumption built into the word "test" becomes impossible to ignore, once you examine what that test is actually running against.

because here's what I keep coming back to. in software development, a test environment is isolated from production. a bug discovered in testing does not affect live users. the whole point of a staging layer is that failure in it is cheap. you find the problem before the problem finds your users. the word "test" carries that assumption so strongly that most people import it automatically whenever they encounter it in a product context.

but Binance Ai Pro does not have a paper trading mode or a simulated environment for strategy validation. when the documentation describes testing your parameters, it means running those parameters through the system against the actual market, using the actual sub-account balance, at actual prices. a strategy that behaves unexpectedly during testing does not fail in a sandbox. it fails in the position.

the test and the deployment share the same environment. what happens in testing is not contained.
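
to make the isolation point concrete, here is a minimal Python sketch. everything in it is hypothetical: `Account`, `run_strategy`, `staged_test`, and `shared_test` are illustrative names, not Binance Ai Pro's API, and the model tracks nothing but a single balance. it contrasts a staging setup, where the test runs against a copy of the balance, with the setup described above, where the "test" runs against the sub-account itself.

```python
from dataclasses import dataclass, replace


@dataclass
class Account:
    """Hypothetical sub-account; only the quote balance is modeled."""
    balance: float


def run_strategy(account: Account, trade_pnls: list[float]) -> Account:
    """Apply each trade's profit or loss to the given account, in place."""
    for pnl in trade_pnls:
        account.balance += pnl
    return account


def staged_test(real: Account, trade_pnls: list[float]) -> Account:
    """Isolated test: run against a copy, so the real account is untouched."""
    sandbox = replace(real)  # dataclasses.replace returns a new instance
    return run_strategy(sandbox, trade_pnls)


def shared_test(real: Account, trade_pnls: list[float]) -> Account:
    """Shared environment: the 'test' runs against the real account itself."""
    return run_strategy(real, trade_pnls)


if __name__ == "__main__":
    bad_run = [-30.0, -20.0]  # a strategy that misbehaves during testing

    real = Account(1000.0)
    staged_test(real, bad_run)
    print(real.balance)  # 1000.0: the failure stayed in the sandbox

    real = Account(1000.0)
    shared_test(real, bad_run)
    print(real.balance)  # 950.0: the failure landed in the position
```

the misbehaving strategy is identical in both cases. the only thing that decides whether its losses are cheap is where it runs.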

then comes the iteration question. because of course.

and here's where it gets harder to look away. the natural response to a test result that does not match expectations is to adjust the parameters and run again. in a true test environment, iteration is free. each adjustment is a refinement with no cost other than time. in Binance Ai Pro, each iteration of a strategy adjustment runs through the live market. a user who runs three versions of a parameter configuration to find the one that behaves correctly has not run three tests. they have run three live strategy executions, each of which interacted with their sub-account balance in whatever way the market dictated at that moment.

the cost of iteration is not the cost of thinking. it is the cost of executing.
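
the iteration cost can be put in rough numbers. this is a hedged sketch with made-up inputs: `live_iteration_cost` is a hypothetical helper, the PnL figures are invented, and the 10 bps fee rate is a placeholder, not Binance's fee schedule. it assumes each run opens and closes one position, so fees are paid on both sides.

```python
def live_iteration_cost(run_pnls: list[float], notional: float, fee_bps: int) -> float:
    """Net change to a real balance after several 'test' runs executed live.

    Hypothetical model: each run opens and closes one position of `notional`
    (a round trip, so fees are paid twice) and realizes the given profit or loss.
    """
    fees_per_run = 2 * notional * fee_bps / 10_000
    return sum(pnl - fees_per_run for pnl in run_pnls)


if __name__ == "__main__":
    # Three parameter versions tried in a row; the PnL figures are made up.
    runs = [-25.0, -10.0, 5.0]
    print(live_iteration_cost(runs, notional=500.0, fee_bps=10))  # -33.0
```

in an isolated environment the same three runs would have cost the real balance nothing. here, even the run that "worked" paid fees on the way in and out.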

there's also a deeper tension nobody names directly.

the configure, test, deploy framework implies a moment when testing ends and deployment begins. but in a system where both phases share the same environment, that boundary is defined only by the user's intention, not by any technical distinction in how the system processes the instruction. the user who decides they are done testing and are now deploying has not crossed into a different execution environment. they have simply updated their own framing of what the same system is doing.

the transition from testing to live is a mental category, not a system state.
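
here is a minimal sketch of that difference, assuming nothing about how Binance Ai Pro is actually built: `Phase`, `LabelOnlyRouter`, and `StatefulRouter` are hypothetical names. in the first router the phase is a label the caller attaches and the routing code never reads, which is the situation described above. in the second, the phase is a system state that changes where the order goes.

```python
from enum import Enum


class Phase(Enum):
    TEST = "test"
    LIVE = "live"


class LabelOnlyRouter:
    """Phase is recorded by the caller but never consulted: every order goes live."""

    def __init__(self) -> None:
        self.live_orders: list[str] = []

    def submit(self, order: str, phase: Phase) -> str:
        # `phase` is accepted but ignored; routing is identical for TEST and LIVE.
        self.live_orders.append(order)
        return "live"


class StatefulRouter:
    """Phase is a system state: TEST orders are diverted to a simulator."""

    def __init__(self) -> None:
        self.live_orders: list[str] = []
        self.simulated_orders: list[str] = []

    def submit(self, order: str, phase: Phase) -> str:
        if phase is Phase.LIVE:
            self.live_orders.append(order)
            return "live"
        self.simulated_orders.append(order)
        return "simulated"


if __name__ == "__main__":
    print(LabelOnlyRouter().submit("order-1", Phase.TEST))  # live
    print(StatefulRouter().submit("order-1", Phase.TEST))   # simulated
```

the point is not that the second design is what any platform ships, only that "test" becomes a guarantee when code enforces it, and stays a framing when it does not.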

still... I'll say this.

the decision to offer a structured workflow of configure, test, and deploy rather than a single activation button reflects a genuine commitment to giving users a sense of process and intentionality before capital is put to work. a system that prompts users to think in stages is more respectful of deliberate decision-making than one that moves from setup to execution without any intermediate moment of review. the workflow exists and the intention behind it is real.

the question is whether users moving through the configure, test, deploy sequence understand that the testing phase is not a protected environment where mistakes are free, or whether they are carrying an assumption from other software contexts into one where that assumption does not hold.

and in this space, the difference between those two understandings matters most not when the test is running cleanly, but when the first unexpected result arrives and the user has to decide whether what just happened was a test outcome or a live one.

Trading always carries risks. Suggestions generated by AI are not financial advice. Past performance does not reflect future results. Please check the availability of the product in your region.
