I keep coming back to this quiet tension that shows up once you start building something real.

There’s a point every builder hits where the trade-offs stop being theoretical.

You’re shipping something that touches real value. Suddenly compliance isn’t optional. You need logs, auditability, KYC in some cases, and clear visibility into what’s happening and why.

But at the same time, users don’t want to feel like they’re under a microscope. And your team doesn’t want to spend half their time dealing with exceptions, reviews, and edge cases.

That’s where things get messy.

Most systems weren’t designed with privacy in mind. So it gets added later, piecemeal. A bit of encryption here, some zero-knowledge there, maybe a workaround when something feels too exposed. It holds together… until scale hits or something slips.

Then costs go up. Complexity increases. Trust quietly drops.

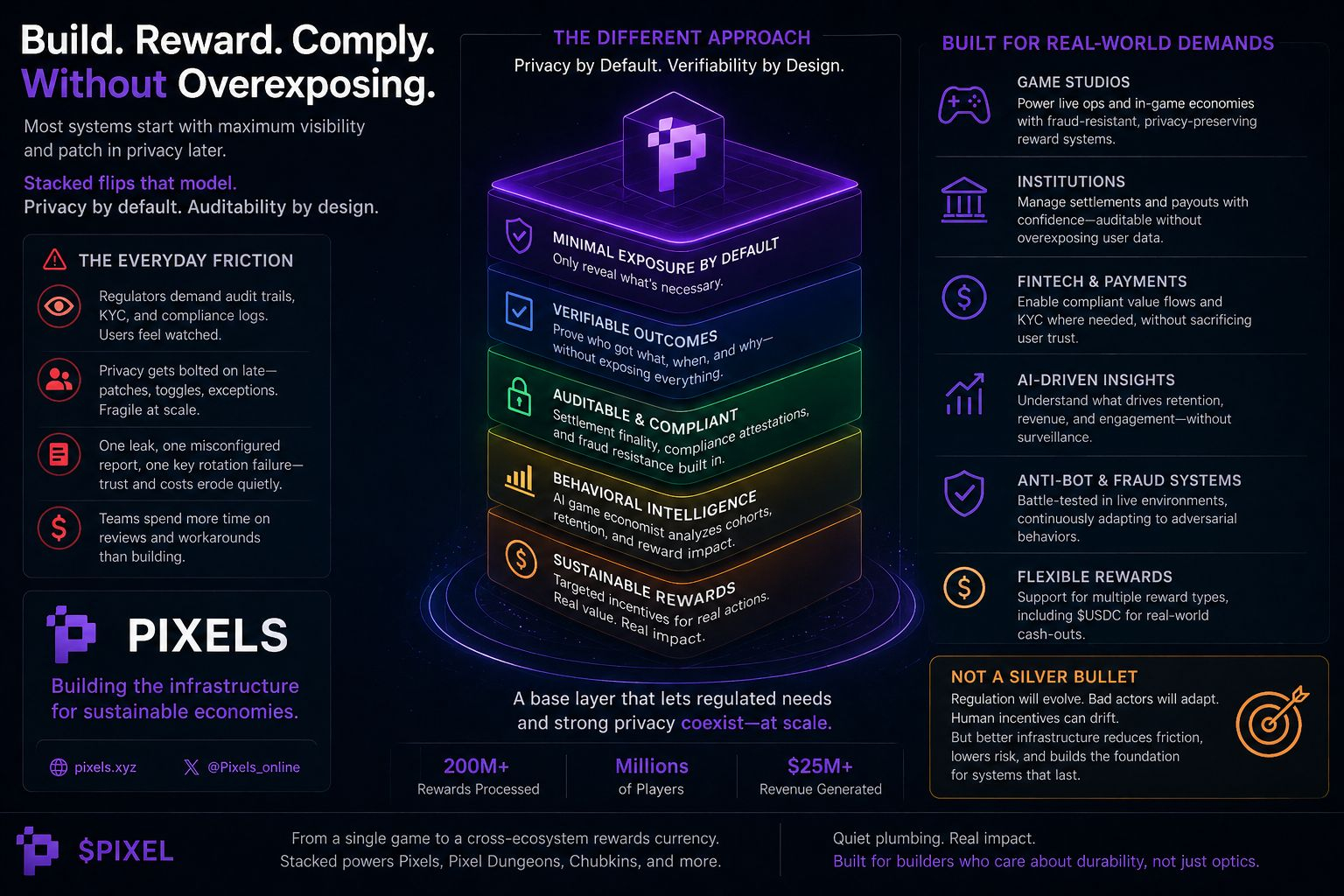

The core issue is simple: privacy is treated like a feature instead of a default.

And features can be bypassed, forgotten, or misconfigured.

What would actually change things is infrastructure that assumes minimal exposure from day one. Infrastructure where you can still verify outcomes (rewards, settlements, behavior) without broadcasting everything behind them.
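To make "verify outcomes without broadcasting everything" a little more concrete, here is a minimal sketch of one well-known technique that fits the idea: a Merkle tree over reward records. The operator publishes only the root hash, and anyone holding a single record plus a short proof can check that it was included. This is an illustration under my own assumptions, not a description of how any particular platform works. The record format and the helper names (`build_levels`, `prove`, `verify`) are hypothetical, the record list is assumed to be non-empty, and the odd-node duplication rule is a simplification rather than a production-grade design.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def leaf_hash(record: bytes) -> bytes:
    # Prefix leaves and inner nodes differently so one cannot pose as the other.
    return _h(b"\x00" + record)

def node_hash(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)

def build_levels(records):
    """Return every level of the tree, leaves first, root last (non-empty input assumed)."""
    level = [leaf_hash(r) for r in records]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # simplification: duplicate the last node
        level = [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def prove(levels, index):
    """Collect the sibling hashes needed to recompute the root from one leaf."""
    proof = []
    for level in levels[:-1]:
        padded = level + [level[-1]] if len(level) % 2 == 1 else level
        sibling = index ^ 1
        proof.append((padded[sibling], sibling > index))  # (hash, sibling_is_right)
        index //= 2
    return proof

def verify(root, record, proof):
    """Check one record against the published root without seeing any other record."""
    acc = leaf_hash(record)
    for sibling, sibling_is_right in proof:
        acc = node_hash(acc, sibling) if sibling_is_right else node_hash(sibling, acc)
    return acc == root

# Hypothetical reward records; each carries a random-looking nonce so that
# low-entropy records cannot be guessed from their hashes.
records = [
    b"player=a1|reward=10|nonce=7f3c",
    b"player=b2|reward=25|nonce=19ad",
    b"player=c3|reward=5|nonce=e402",
    b"player=d4|reward=40|nonce=88b1",
    b"player=e5|reward=15|nonce=c0de",
]
levels = build_levels(records)
root = levels[-1][0]          # the only value that needs to be public
proof = prove(levels, 4)
print(verify(root, records[4], proof))
```

The point of the design is where the default sits: the public artifact is a single hash, and each disclosure is a deliberate act involving one record and a handful of sibling hashes.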

Not flashy. Not something you market heavily. Just something that reduces friction over time.

That kind of setup won’t solve everything. Regulation will keep evolving. Bad actors will keep adapting. But it could remove a lot of the daily drag that teams deal with right now.

And for builders who’ve already felt how fragile the current approach can be, that might be enough reason to care.

I have been sitting with this recurring friction that shows up no matter which side of the table you're on. You're a builder trying to ship something that actually works in the real world: perhaps a game studio pushing new titles, a fintech team handling user flows, or an institution managing settlements and data obligations. Regulators want clear audit trails, KYC documentation, behavioral logs for compliance, and proof that nothing suspicious is slipping through. Meanwhile, users grow quietly resentful as every click, reward claim, or in-game action leaves a trail that feels overly exposed. Your own ops people mutter about the overhead too. The result is usually the same: privacy gets added late, as patches or negotiated exceptions. A zero-knowledge layer here, a consent toggle there, or special clauses that regulators accept but never fully trust. It never feels clean. Systems hold until they don't.

The failure might be a forgotten key rotation, a report that reveals more than intended, or clever users probing the edges. Costs pile up from repeated legal reviews, rework, and the slow bleed of trust when people sense their activity isn't truly contained.

Most fixes I've watched over time treat privacy like an optional module or something you negotiate case by case. That approach rarely survives scale. Human error creeps in, regulatory winds shift, and suddenly the exceptions become the weak points. When the default assumption is full visibility for compliance, you breed workarounds: people routing around the system, operators adding more band-aids, and everyone paying in friction and eroded confidence. It feels incomplete because the foundation was built for transparency first, with privacy as an awkward retrofit.

What lingers in my mind is whether a base layer could be structured differently. Not with privacy as the exception you fight for, but as part of the default plumbing: something that lets regulated requirements (settlement finality, compliance attestations, verifiable behavioral auditability) coexist with strong protections that limit unnecessary leakage of personal details. I'm not picturing grand promises here, just the boring, operational reality of infrastructure that has already been stress-tested against real adversarial conditions at volume.

This brings me to how something like the Stacked ecosystem from Pixels sits in that space. From what I've observed, it's not positioned as a flashy consumer app but more as battle-tested infrastructure that has processed hundreds of millions of rewards across millions of players while contributing meaningfully to revenue. It focuses on delivering targeted rewards for genuine engagement (actions that matter inside games) while incorporating fraud-resistant mechanisms and anti-bot systems honed through years of live operation. The AI-driven analysis looks at cohorts, retention patterns, and behavioral signals to suggest where rewards might make sense, all without turning every user move into a broadcast event.

In practice, this kind of setup could speak to the compliance side by providing measurable, auditable loops: you can verify that rewards are tied to real activity without needing to expose every granular detail of a player's full history. Settlements and reward distributions become more contained. For institutions or studios operating under regulatory scrutiny, the ability to attest to fair distribution and fraud prevention at scale might reduce some of the constant back-and-forth that drives up legal and operational costs. Human behavior plays into it too: when users feel their legitimate play isn't being over-monitored or commoditized beyond what's necessary, the quiet resentment that fuels workarounds might ease. The builders I've seen are tired of retrofitting privacy into systems that were never designed with containment in mind; they might quietly adopt layers that make daily compliance feel less brittle.

Of course, I'm skeptical by habit. Law and human nature have a way of complicating even well-intentioned designs. Regulatory expectations can tighten unpredictably, and any system handling real value attracts sophisticated actors testing the edges. $PIXEL itself is evolving within this, shifting toward a staking-focused role while Stacked expands as a broader rewards layer that can support multiple games and reward types, including moves toward more stable options like USDC for cash-outs. That infrastructure focus, redirecting value directly to players in measurable ways rather than leaking it to intermediaries, feels more grounded than many past attempts at sustainable economies.

Still, nothing here is certain. The approach might lower long-term friction for operators who value durability over short-term optics, especially those exhausted by constant exception management. It could fit studios or institutions that need to balance genuine user incentives with auditable compliance without turning every interaction into a surveillance point. What might make it work is steady, real-world integration where the plumbing proves reliable under pressure, and adoption grows enough to create network effects without forcing visibility as the price of participation. It would likely fail if incentives drift back toward maximum data exposure for "safety," or if the ecosystem stays too narrow to force meaningful pressure on how regulated flows are handled day to day. Or if the behavioral analysis, no matter how sophisticated, can't keep pace with evolving adversarial tactics or shifting legal demands.

At the end of the day, the users and builders who might actually lean on this are the pragmatic ones: those who have watched systems fail under their own weight and are looking for quieter, more contained foundations rather than louder features. Trust builds slowly in these areas, through consistent operation rather than declarations. Whether Stacked and the broader Pixels approach can carve out that space remains to be seen, but the underlying friction it addresses feels real enough to watch closely.

You need to prove things. Who got what. When. Why. That nothing’s being gamed.

At first, it feels manageable. Then slowly, the friction creeps in.

Users start noticing how much is being tracked. Teams spend more time reviewing logs than improving the product. Privacy gets added in small patches: quick fixes, special cases, things that make the system “feel” safer without really changing how it’s built.

And everything becomes a bit fragile.

Not in a dramatic way. Just in that constant, low-level tension where you know one mistake could expose more than it should.

What I can’t shake is the idea that this is backwards.

We start with maximum visibility, then try to claw back privacy.

But maybe it should be the opposite.

Start with containment. Only reveal what’s necessary. Build systems that can prove outcomes without exposing every detail behind them.
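As a rough illustration of "only reveal what’s necessary", here is a small sketch of selective disclosure using salted hash commitments, one per field. The operator commits to every field of a record up front, and later opens only the fields an auditor actually needs; the rest stay hidden behind their hashes. Everything here is an assumption for the sake of the example: the field names, the record shape, and the helpers (`commit_record`, `disclose`, `verify_disclosure`) are hypothetical, and a real system would need to think about key management, schema leakage, and much more.

```python
import hashlib
import hmac
import json
import secrets

def commit_field(name, value, salt: bytes) -> str:
    # The random salt prevents guessing small values (like reward amounts) from the hash.
    payload = json.dumps([name, value]).encode()
    return hashlib.sha256(salt + payload).hexdigest()

def commit_record(record: dict):
    """Return (public commitments, private salts) for every field in the record."""
    salts = {name: secrets.token_bytes(16) for name in record}
    commitments = {name: commit_field(name, value, salts[name])
                   for name, value in record.items()}
    return commitments, salts

def disclose(record: dict, salts: dict, fields):
    """Open only the requested fields: each gets its value and its salt."""
    return {name: (record[name], salts[name].hex()) for name in fields}

def verify_disclosure(commitments: dict, disclosure: dict) -> bool:
    """Check that every opened field matches the commitment published earlier."""
    for name, (value, salt_hex) in disclosure.items():
        if name not in commitments:
            return False
        expected = commit_field(name, value, bytes.fromhex(salt_hex))
        if not hmac.compare_digest(expected, commitments[name]):
            return False
    return True

# Hypothetical reward claim; only the commitments would be stored publicly.
record = {
    "player_id": "p-123",
    "reward": 50,
    "country": "DE",
    "claimed_at": "2024-01-01T00:00:00Z",
}
commitments, salts = commit_record(record)

# An auditor asks about the payout, not the person.
opened = disclose(record, salts, ["reward", "claimed_at"])
print(verify_disclosure(commitments, opened))
```

The auditor learns the amount and the timestamp and can trust they were fixed at commit time, while `player_id` and `country` are never sent at all.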

No big announcements. No hype. Just infrastructure that quietly reduces the number of things that can go wrong.

It won’t be perfect. Nothing is. But it might feel calmer. More stable. Less like you’re constantly balancing between compliance and trust.

And for people who’ve been in the trenches long enough, that kind of shift matters more than any feature list.