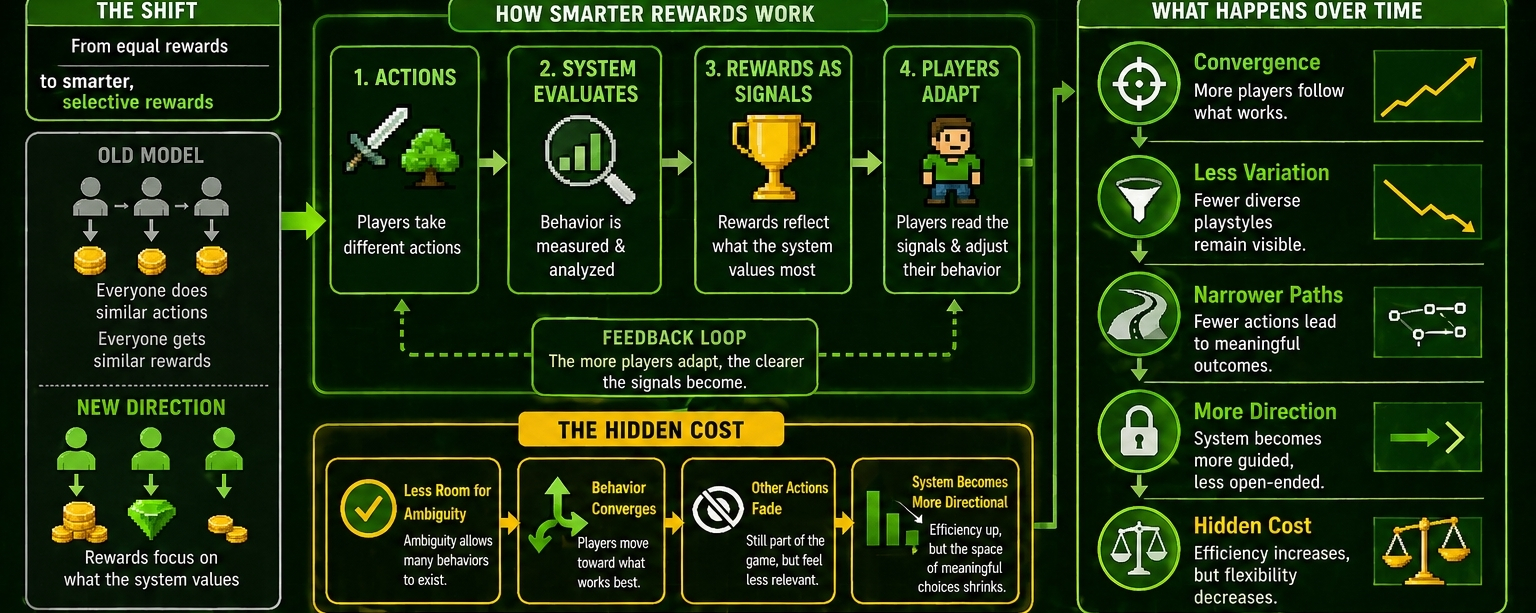

I was thinking about rewards in these systems, and how making them “smarter” tends to sound like a clear improvement.

Better targeting, better alignment, less waste. Instead of rewarding everything equally, the system starts focusing on what actually matters. On paper, that’s exactly what these economies need.

@Pixels seems to be moving in that direction.

But when I look at it, I feel like that shift comes with something less obvious attached to it.

That’s the part I keep coming back to. $PIXEL

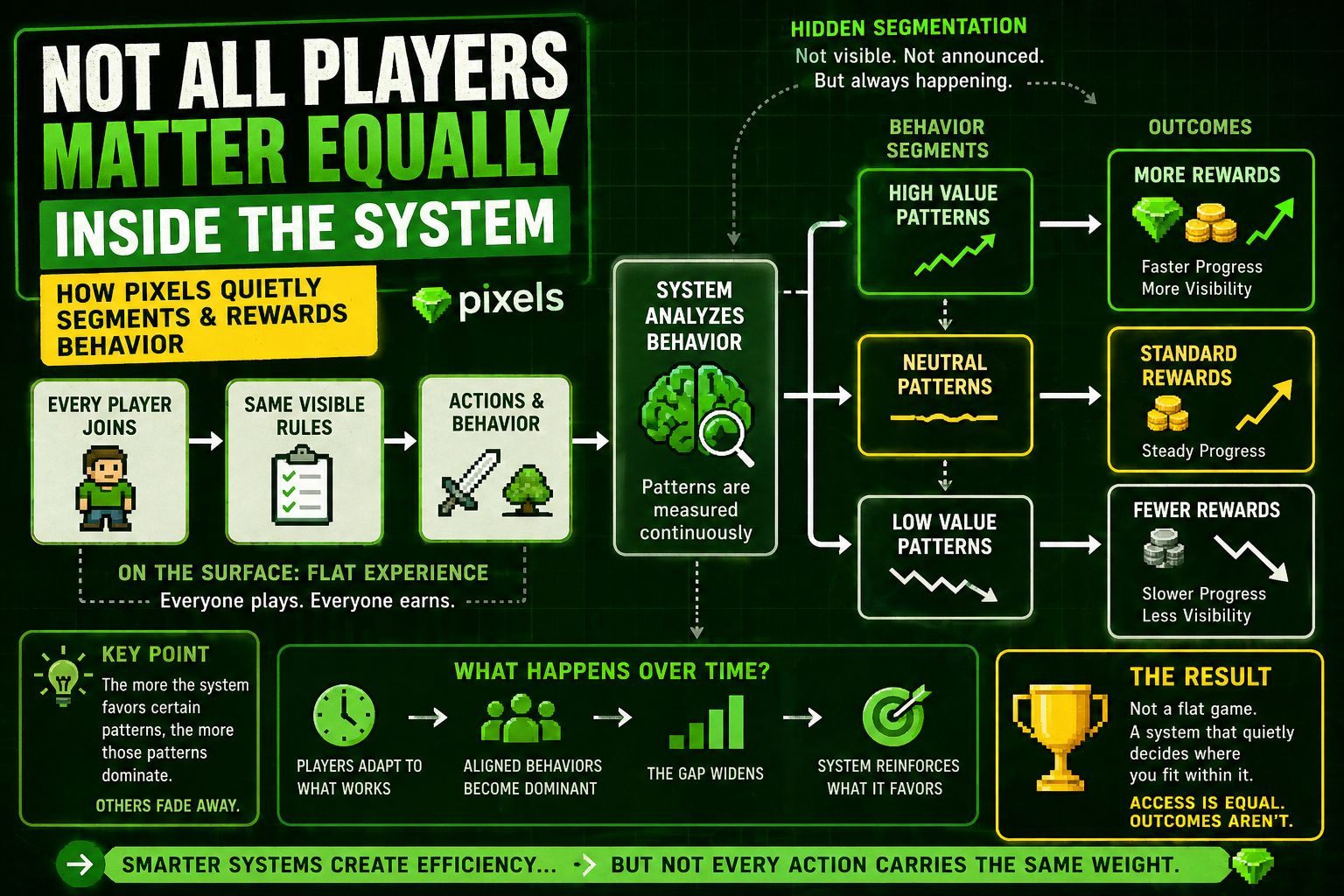

Because once rewards become more selective, they stop being just outputs. They become signals about what the system values. Not in a general sense, but in a very specific, evolving way. And over time, players start reading those signals.

Not perfectly, but enough to adjust.

At first, it might feel like progress. Less randomness, more intention behind outcomes. You do something, and it feels like the system recognizes it more precisely. That kind of feedback loop can make the experience feel more refined.

But there’s another side to it.

Because the more precise the rewards become, the less room there is for ambiguity. And ambiguity, even if it feels inefficient, is what allows a wider range of behaviors to exist.

When that space shrinks, so does variation.

Certain actions become clearly aligned with value. Others start to feel less relevant, even if they’re still part of the game. And once that pattern becomes visible, behavior begins to converge.

Not suddenly, but steadily.

Players move toward what works. They always do. And when rewards are sharper, that movement happens faster, more decisively.

That’s where the hidden cost starts to show.

Because while the system becomes more efficient, it also becomes more directional. Less open-ended, more guided. Not in a restrictive way, but in a way that reduces how many paths actually lead somewhere meaningful.

And over time, that changes the feel of the system.

It’s no longer just about participating. It’s about aligning.

I’m not sure that’s avoidable. Any system that tries to optimize behavior has to make those trade-offs. It has to prioritize some signals over others, even if that means narrowing the field.

But it does raise a question. How much precision is too much?

Because beyond a certain point, smarter rewards don’t just improve the system. They start shaping it more tightly than before.

Pixels seems to be somewhere along that path right now. Not fully optimized, but clearly moving toward a model where behavior is evaluated more carefully.

As I see it, if that continues, the balance between flexibility and efficiency becomes harder to maintain.

I’m not sure where it settles.

But it’s one of those shifts that feels positive at first, until you start noticing what quietly falls away in the process.