I have been watching how these onchain gaming setups actually play out once they grow beyond the early crowd. Not the pitch decks, but the day-to-day friction when real money, real identities, and real rules start colliding. You run a rewards program tied to player behavior (tracking what keeps someone logging in on day 7 versus day 30, spotting when a cohort starts leaking, routing $PIXEL or stable rewards to the right actions), and suddenly you're sitting on detailed behavioral data. That's useful for the game studio running LiveOps. It's also the kind of data that regulators, tax offices, or compliance teams eventually want to see, especially as these systems scale and touch fiat off-ramps or institutional players.

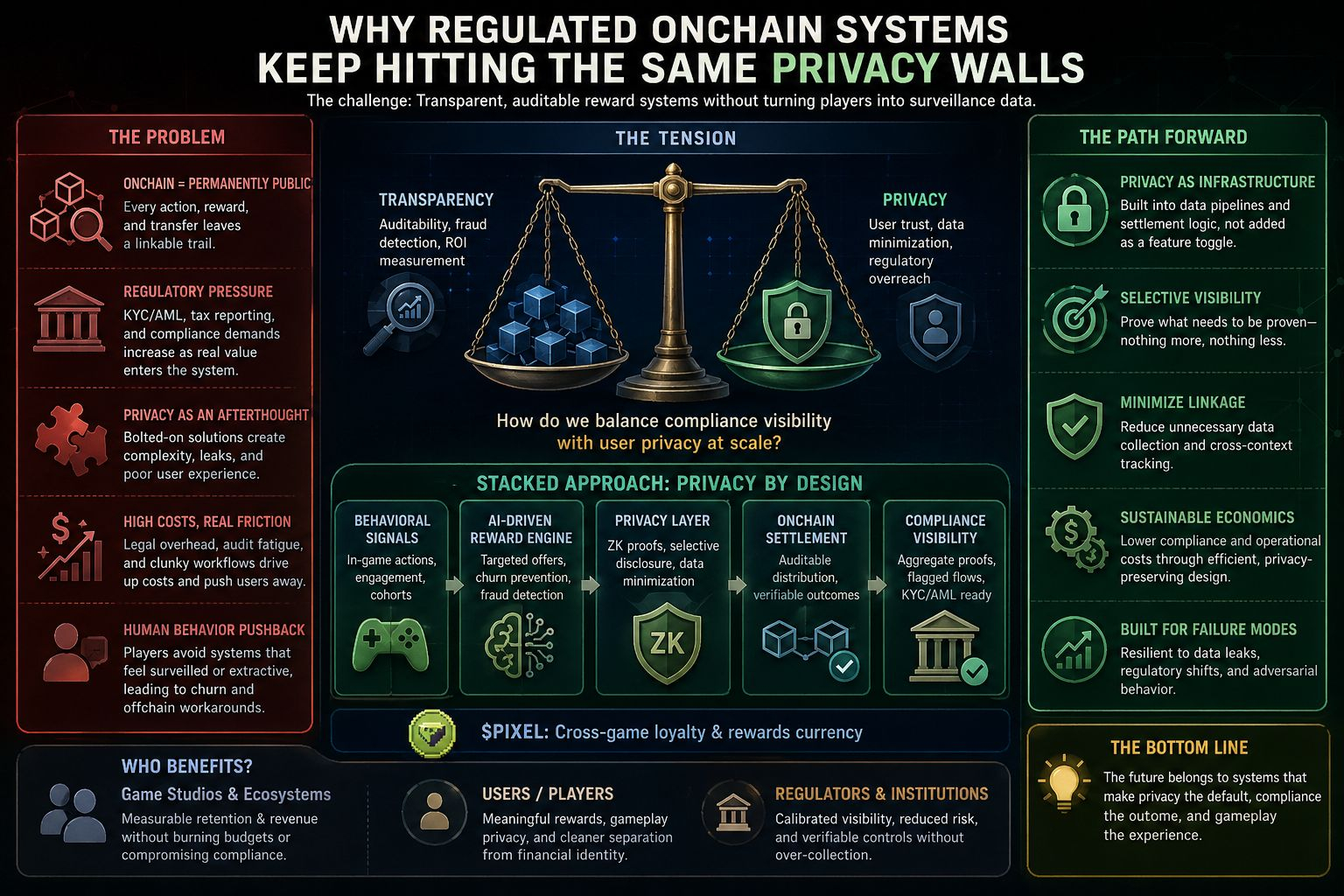

The question that keeps coming up is a practical one: how do you run transparent, auditable reward distribution and settlement in an environment where authorities demand KYC/AML visibility, while not turning every player interaction into a surveillance log that scares off normal users or invites overreach? Most current approaches feel awkward because they bolt privacy on as an exception or an afterthought. You build the core loop first (monitor engagement, let AI suggest targeted rewards via something like Stacked's engine, distribute PIXEL or other value across games), and then try to layer compliance wrappers or selective zero-knowledge proofs on top when pressure mounts. It works until it doesn't. Data leaks happen, or the overhead of managing separate privacy tunnels kills the economics, or users sense the inconsistency and pull back.

Why does the problem exist so persistently?

Because onchain activity, by default, is public in ways that traditional game economies never were. Every transfer, every reward claim, every in-game action that triggers a token movement can leave a permanent, linkable trail. For a system like Stacked, built as battle-tested infrastructure from running hundreds of millions of rewards inside Pixels and now opening to other studios, that transparency is partly the point: it allows measurable ROI on retention campaigns, fraud detection (anti-bot, behavioral signals at scale), and honest settlement. You can actually audit whether the reward budget is leaking to farmers or genuinely lifting LTV. But once regulators look at this as a financial touchpoint, especially with real-money rewards, cash-outs, or loyalty layers that start resembling tokenized incentives, the demand for traceability spikes. Institutions won't touch settlement layers without compliance rails. Builders face mounting legal exposure if they can't demonstrate controls. Users, even privacy-conscious ones, get tired of the mental load of managing multiple wallets, VPNs, or hoping the project doesn't fold under a subpoena.

Most solutions I've seen in practice feel incomplete because they treat privacy as a feature toggle rather than infrastructure. You end up with awkward compromises: some actions stay pseudonymous until a threshold, then KYC kicks in; or you rely on trusted intermediaries that hold the private data offchain, which reintroduces single points of failure and the very opacity the chain was meant to reduce. Costs creep up: not just gas or compute for proofs, but ongoing legal review, audit fatigue, and the human behavior side, where players route around systems that feel extractive or leaky. I've watched similar setups in earlier Web3 experiments where the compliance layer became so heavy that genuine engagement dropped; people reverted to offchain workarounds or simply churned because the experience stopped feeling like play and started feeling like paperwork. To be skeptical about it, a lot of these "privacy modules" look more like regulatory theater than robust design: they satisfy a checkbox today but won't hold when enforcement tightens or when cross-border settlement gets scrutinized.
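To make the "pseudonymous until a threshold, then KYC kicks in" compromise concrete, here is a minimal sketch of what that gate tends to look like. Everything in it is an assumption for illustration: the `PayoutGate` class, the `KYC_THRESHOLD` value, and the per-wallet accounting are hypothetical, not how Stacked or any specific project implements it.

```python
from dataclasses import dataclass, field

# Hypothetical cumulative payout limit, in whatever unit rewards are priced in.
KYC_THRESHOLD = 100.0


@dataclass
class PayoutGate:
    """Toy threshold gate: wallets stay pseudonymous until they cross the limit."""
    verified: set = field(default_factory=set)   # wallets that have completed KYC
    paid: dict = field(default_factory=dict)     # wallet -> cumulative amount paid

    def request(self, wallet: str, amount: float) -> str:
        total = self.paid.get(wallet, 0.0) + amount
        # Block the payout only when it would push an unverified wallet past the limit.
        if total > KYC_THRESHOLD and wallet not in self.verified:
            return "kyc_required"
        self.paid[wallet] = total
        return "paid"


gate = PayoutGate()
first = gate.request("wallet-1", 60.0)    # under the limit, paid pseudonymously
second = gate.request("wallet-1", 60.0)   # would reach 120, so the gate asks for KYC
gate.verified.add("wallet-1")             # player completes verification
third = gate.request("wallet-1", 60.0)    # same request now goes through
```

The awkwardness is visible even in a toy version: the gate counts per wallet, so a player who splits activity across several wallets never trips it, and the moment it does trip, the full history of that wallet becomes linked to an identity in one step.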

This is where thinking about infrastructure like Pixels' Stacked makes me pause and reflect. It's not positioned as a consumer app chasing hype; it's the rewarded LiveOps layer that grew out of real production lessons inside Pixels: figuring out sustainable reward distribution, using AI to analyze cohorts without just blasting generic airdrops, preventing adversarial gaming of the system. $PIXEL acts as the cross-game loyalty and rewards currency, expanding utility as more titles plug in. In a regulated world, the value would come from embedding privacy considerations into how behavioral data flows into reward decisions and settlements, rather than patching it later. Imagine the engine suggesting a targeted reward to reduce churn, but the underlying signals and distribution logic are structured so that compliance can verify aggregate outcomes or specific flagged flows without exposing every granular player habit by default. Not perfect anonymity (that's rarely compatible with settlement and anti-fraud), but privacy by design that minimizes unnecessary linkage.
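One way to picture "aggregate outcomes plus specific flagged flows" is a reporting view that only ever emits cohort-level totals, suppresses cohorts too small to hide an individual, and surfaces single payouts above a review limit. This is a rough sketch under my own assumptions: `RewardEvent`, `compliance_view`, `MIN_COHORT_SIZE`, and `FLAG_THRESHOLD` are hypothetical names and values, and nothing here reflects Stacked's actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical values for illustration; not taken from Stacked or any regulation.
MIN_COHORT_SIZE = 3      # suppress aggregates for cohorts smaller than this
FLAG_THRESHOLD = 500.0   # single payouts at or above this get individual review


@dataclass(frozen=True)
class RewardEvent:
    player_id: str   # pseudonymous identifier
    cohort: str      # e.g. "day7_returners"
    amount: float    # reward value in some unit


def compliance_view(events):
    """Return (aggregates, flagged) without listing every player's activity."""
    players = defaultdict(set)
    totals = defaultdict(float)
    flagged = []
    for e in events:
        players[e.cohort].add(e.player_id)
        totals[e.cohort] += e.amount
        if e.amount >= FLAG_THRESHOLD:
            flagged.append(e)
    # Only cohorts with enough distinct players appear in the aggregate report.
    aggregates = {
        cohort: {"players": len(ids), "total": totals[cohort]}
        for cohort, ids in players.items()
        if len(ids) >= MIN_COHORT_SIZE
    }
    return aggregates, flagged


events = [
    RewardEvent("p1", "day7_returners", 10.0),
    RewardEvent("p2", "day7_returners", 20.0),
    RewardEvent("p3", "day7_returners", 5.0),
    RewardEvent("p4", "day30_whales", 600.0),
]
aggregates, flagged = compliance_view(events)
```

With this input, the day-7 cohort shows up only as a player count and a total, the one-player cohort is suppressed from the aggregate view, and the single large payout is surfaced on its own. Small-cohort suppression is a blunt tool rather than a cryptographic guarantee, but it shows the shape of the idea: the default output is coarse, and granular visibility is the exception that has to be triggered.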

The friction shows up in human behavior too. Players want to engage because the rewards feel meaningful (real cash, crypto, or value tied to actual progress, not spam farming), but they also want plausible separation between their gaming life and broader financial identity, especially in jurisdictions where tax reporting on every micro-reward becomes a nightmare or where data privacy laws clash with AML demands. Builders and institutions face parallel headaches: high compliance costs can kill the unit economics of running targeted LiveOps at scale, yet ignoring them risks shutdowns or frozen assets. Regulators, for their part, aren't wrong to worry about illicit flows in reward systems that touch real value; the question is whether the architecture forces over-collection of data or allows calibrated visibility.

I'm not sure any single project has fully cracked this yet. It's conditional on how law evolves, how cheap and usable advanced cryptographic tools become for selective disclosure, and whether teams prioritize long-term robustness over short-term growth metrics. Taking Stacked-like infrastructure seriously means asking: does the reward engine reduce overall surveillance load by making distributions more precise and fraud-resistant upfront, or does it amplify data collection in the name of optimization? The former feels more sustainable; the latter risks the same failures we've seen when systems optimize too hard for engagement without accounting for external pressures.

Grounded takeaway: the people who'd actually lean on something like this are probably mid-sized game studios and ecosystems trying to move beyond pure speculation into repeatable economics. These are the teams that need measurable retention and revenue lifts without burning ad budgets or inflating the token, while staying on the right side of evolving rules around digital assets and consumer data. It might work if privacy is baked into the data pipelines and settlement logic from the start, keeping costs manageable and user experience intact, so that compliance becomes a byproduct rather than a constant tax on operations. It would likely fail if it stays too opaque on one end or too exposed on the other, or if the economics don't hold once real regulatory scrutiny hits the reward flows. In the end, trust comes from seeing the system handle the failure modes we've seen before (leaky data, regulatory surprises, user churn) without collapsing. That's the quiet test, not the launch hype.