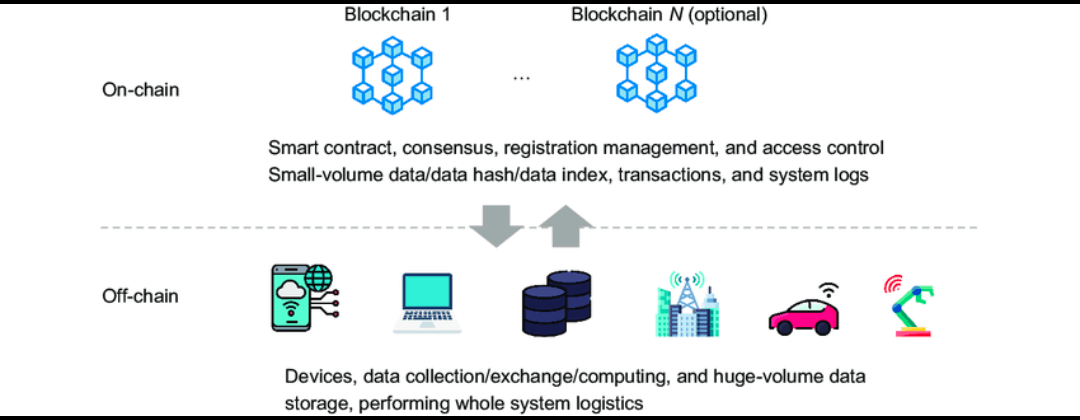

Most “AI stacks” in crypto are still the same two components taped together: a blockchain that stores proofs, and an AI system that lives off-chain and produces confident answers. It looks coherent in a diagram. It falls apart the moment you ask three basic questions:

Where does the intelligence actually live?

What parts of it can be verified without trusting a server?

And when something happens in the real world, how do you prove why it happened?

That gap is why Vanar’s Neutron + Kayon + Axon direction is worth paying attention to in 2026. Not because it’s loud, and not because it’s dressed up as “AI-native.” But because the design is aimed at a problem most teams already feel every day: meaning evaporates as data moves.

Files get uploaded, copied, versioned, and scattered. Decisions get made on partial context. Later, when someone asks why a call was made, you’re digging through folders, chats, dashboards, and half-remembered logic. The system technically worked — but the reasoning is gone.

Vanar’s bet is that a network can carry more than state and value. It can carry structured meaning, and it can carry the trail of how that meaning turns into decisions and then into actions. That loop starts with Neutron.

Neutron: Memory That’s Meant to Be Used

Neutron isn’t framed as ordinary storage. The pitch isn’t “we put files on-chain.” It’s “we transform files into something smaller, structured, and usable.”

Vanar calls these outputs Seeds. The label doesn’t matter; the intent does. A Seed is meant to be compact, searchable, and usable as an input for logic. That’s a real shift from how most chains treat documents, where you usually get a hash and a pointer. A hash proves a file hasn’t changed. It doesn’t help you work with it. It doesn’t let you ask questions. It doesn’t automate anything.
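To make the hash-versus-Seed contrast concrete, here is a toy sketch. The `Seed` shape, its field names, and the `make_seed` transform (a trivial `key: value` line parser) are hypothetical illustrations, not Neutron’s actual format or compression process.

```python
# Hypothetical sketch: a toy "Seed" next to a plain hash. Not Neutron's format.
import hashlib
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Seed:
    source_sha256: str                          # links the Seed to the exact input bytes
    fields: dict = field(default_factory=dict)  # extracted, queryable facts


def make_seed(raw: bytes) -> Seed:
    # Toy transform: keep only "key: value" lines and normalise the keys.
    facts = {}
    for line in raw.decode("utf-8").splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            facts[key.strip().lower()] = value.strip()
    return Seed(hashlib.sha256(raw).hexdigest(), facts)


doc = b"Invoice: 1042\nVendor: Acme Ltd\nAmount: 1200 EUR\nNotes follow below"
digest = hashlib.sha256(doc).hexdigest()
seed = make_seed(doc)

# A hash answers one question: are these the same bytes?
print(digest == seed.source_sha256)  # True
# A Seed can also answer questions about the content and feed later logic.
print(seed.fields["vendor"])         # Acme Ltd
print(seed.fields["amount"])         # 1200 EUR
```

The hash still matters here: it is what lets the structured view be tied back to one specific input.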

The obvious objection is about compression: anyone can shrink a file if they don’t care what gets lost. The real question is integrity. If Seeds are meant to matter, the verification path matters more than the size.

Can an independent party later prove that a Seed corresponds to a specific input under a defined process?

Can outputs be traced back to evidence without trusting a hosted service?

If verification depends on trusting an API, Neutron is useful infrastructure — but it’s not a trust layer. Vanar seems to be aiming higher than that.
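One way to answer yes to both questions, sketched under loud assumptions: the transform is deterministic and published under a version id, and what gets anchored is a commitment binding the input hash, that version id, and the Seed hash. Everything here (`PROCESS_ID`, `derive_seed`, `commit`, `verify`, and the word-list “Seed”) is hypothetical; it shows the shape of the check, not how Neutron actually works.

```python
# Hypothetical sketch: verifying an input-to-Seed relationship without a hosted service.
import hashlib
import json

PROCESS_ID = "toy-wordset-v1"  # hypothetical id for a published, versioned transform


def derive_seed(raw: bytes) -> bytes:
    """Deterministic toy transform: the sorted set of lower-cased words, as canonical JSON."""
    words = sorted({w.lower() for w in raw.decode("utf-8").split()})
    return json.dumps(words, separators=(",", ":")).encode("utf-8")


def commit(raw: bytes, seed: bytes, process_id: str = PROCESS_ID) -> str:
    """Bind input hash, process id, and Seed hash into one publishable digest."""
    h = hashlib.sha256()
    h.update(hashlib.sha256(raw).digest())   # fixed 32 bytes
    h.update(process_id.encode("utf-8"))     # variable length, framed by the fixed parts
    h.update(hashlib.sha256(seed).digest())  # fixed 32 bytes
    return h.hexdigest()


def verify(raw: bytes, seed: bytes, published: str) -> bool:
    """Anyone holding the input can re-run the transform and recompute the commitment."""
    return derive_seed(raw) == seed and commit(raw, seed) == published


raw = b"Shipment approved by QA on Friday"
seed = derive_seed(raw)
published = commit(raw, seed)  # this digest is what would be anchored

print(verify(raw, seed, published))         # True
print(verify(raw + b"!", seed, published))  # False: input changed
print(verify(raw, b'["qa"]', published))    # False: Seed swapped
```

The design choice doing the work is determinism: if the transform cannot be re-run to the same bytes, an outside verifier has to fall back to trusting whoever ran it.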

Where Neutron becomes genuinely interesting is the claim that AI isn’t just bolted on top but embedded in the validator layer itself. That forces real engineering tradeoffs. AI systems are probabilistic, and even their numeric outputs are often not bit-for-bit reproducible across hardware; consensus requires every honest validator to compute exactly the same result. You don’t get to be “mostly right” inside validation.

So if intelligence actually runs in the validator environment, it must be tightly constrained, or it must emit proofs and receipts that don’t destabilize consensus. Either path is hard. Both push Vanar out of the “we integrated a model” category and into something more serious.
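A toy illustration of the “tightly constrained” path: three hypothetical validators run the same scoring step and get floats that differ in the last few digits, so their raw outputs never match byte for byte. Canonicalizing to a decision plus a coarse fixed-point score restores agreement. The numbers, `canonical`, and `agree` are invented for illustration; nothing here describes how Vanar’s validators actually work.

```python
# Hypothetical sketch: why raw model outputs cannot go straight into consensus.
import hashlib


def raw_output(validator_noise: float) -> float:
    # Stand-in for inference: same input, tiny hardware-dependent float drift.
    return 0.7312 + validator_noise


def canonical(score: float, threshold: float = 0.5) -> bytes:
    # Constrain the result first: a decision plus a coarse fixed-point score.
    decision = "approve" if score >= threshold else "reject"
    return f"{decision}:{round(score * 100)}".encode("utf-8")


def agree(outputs: list) -> bool:
    # Consensus-style check: every validator must produce byte-identical results.
    return len({hashlib.sha256(o).hexdigest() for o in outputs}) == 1


noises = [0.0, 1e-12, -3e-13]
raw = [repr(raw_output(n)).encode("utf-8") for n in noises]
constrained = [canonical(raw_output(n)) for n in noises]

print(agree(raw))          # False
print(agree(constrained))  # True
```

Rounding is not a complete fix: a score sitting right on a rounding or threshold boundary can still split validators, which is one reason the other path (agreeing on a signed, hashed receipt rather than re-executing the model) exists.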

Still, memory alone doesn’t solve the problem.

Kayon: Reasoning You Can Defend

You can store a perfect record and still end up with a system no one trusts. Interpretation is the bottleneck. That’s where Kayon sits — and it’s the layer that will determine whether this stack holds weight in 2026.

Plenty of AI systems can answer questions now. What’s rare is a system that answers a question and leaves behind something you can rely on later. In real operations, “the model said so” isn’t an explanation. You need to know:

What data was used

What was ignored

What assumptions were made

What tools were called

If Kayon produces auditable reasoning artifacts — signed outputs that point back to specific Seeds — it becomes more than an assistant. It becomes an accountability layer.
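As a sketch of what such a receipt could contain, under assumptions: the four questions above become explicit fields, Seeds are referenced by id, and the whole record is signed over a canonical JSON encoding. The `Receipt` shape, the `seed:` ids, and the use of HMAC (a standard-library stand-in for a real public-key signature scheme) are all hypothetical, not Kayon’s actual format.

```python
# Hypothetical sketch of a signed reasoning receipt. Not Kayon's format.
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass
class Receipt:
    question: str
    seeds_used: list     # Seed ids the answer relied on
    seeds_ignored: list  # Seed ids considered but excluded
    assumptions: list
    tools_called: list
    conclusion: str


def canonical_bytes(r: Receipt) -> bytes:
    return json.dumps(asdict(r), sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(r: Receipt, key: bytes) -> str:
    # HMAC is a stdlib stand-in; a real system would use public-key signatures
    # so third parties can verify without holding the secret key.
    return hmac.new(key, canonical_bytes(r), hashlib.sha256).hexdigest()


def check(r: Receipt, sig: str, key: bytes, known_seeds: set) -> bool:
    # Valid only if the record is untampered AND every cited Seed exists.
    sig_ok = hmac.compare_digest(sign(r, key), sig)
    refs_ok = (set(r.seeds_used) | set(r.seeds_ignored)) <= known_seeds
    return sig_ok and refs_ok


key = b"demo-key"
known = {"seed:a1", "seed:b2", "seed:c3"}
r = Receipt("Is invoice 1042 payable?", ["seed:a1", "seed:b2"], ["seed:c3"],
            ["amounts are in EUR"], ["fx_lookup"], "payable")
sig = sign(r, key)
print(check(r, sig, key, known))  # True
r.conclusion = "not payable"      # tamper after signing
print(check(r, sig, key, known))  # False
```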

This is especially important in Kayon’s compliance framing. Anyone can sprinkle “compliance” into a product page. The hard version is designing compliance as explicit rules, versioned checks, and inspectable logic — with AI helping interpret messy reality rather than acting as the enforcement engine.

The strong version of Kayon maps reality into clearly defined rule objects and leaves a trail you can audit. The weak version produces confident paragraphs about compliance. Those two look similar in a demo. They are not similar in production.
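A minimal sketch of the strong version, assuming compliance logic is expressed as versioned rule objects whose predicates are ordinary deterministic code. The rule ids, the 10,000 limit, and `evaluate` are hypothetical examples, not Kayon’s rule format; the point is the split between interpretation (a model may extract the facts) and enforcement (only the rules decide).

```python
# Hypothetical sketch: compliance as versioned, inspectable rule objects.
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    version: int
    description: str
    predicate: Callable[[dict], bool]


RULES = [
    Rule("KYC-1", 3, "counterparty identity verified",
         lambda f: f.get("kyc_verified") is True),
    Rule("LIMIT-2", 1, "amount at or below 10,000",
         lambda f: f.get("amount", float("inf")) <= 10_000),  # missing amount fails
]


def evaluate(facts: dict, rules: list) -> tuple:
    """Deterministic enforcement: every rule leaves a trail entry, pass or fail."""
    trail = [
        {"rule": r.rule_id, "version": r.version, "passed": bool(r.predicate(facts))}
        for r in rules
    ]
    return all(t["passed"] for t in trail), trail


# Facts may be extracted by a model from messy documents (interpretation),
# but the pass/fail decision comes only from the versioned rules (enforcement).
facts = {"kyc_verified": True, "amount": 12_500, "source_seed": "seed:a1"}
ok, trail = evaluate(facts, RULES)
print(ok)        # False
print(trail[1])  # {'rule': 'LIMIT-2', 'version': 1, 'passed': False}
```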

Axon: Where Insight Either Becomes Impact — or Breaks

Axon is the layer that decides whether the entire stack becomes real, because reasoning that never leaves a chat window is still just analysis.

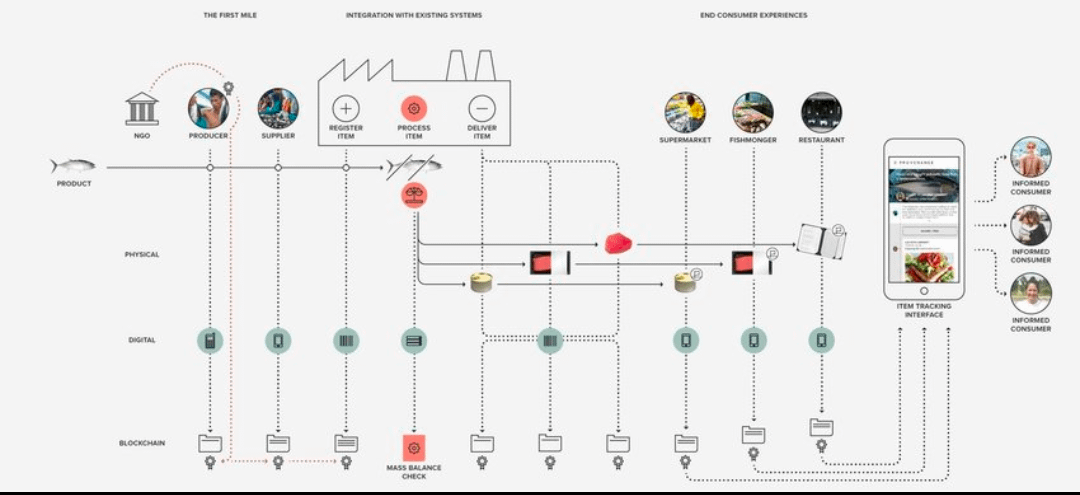

Axon is Vanar’s attempt to turn Neutron’s memory and Kayon’s reasoning into execution: workflows, triggers, and orchestrated actions that actually do things — without losing provenance.

This is also where AI systems become dangerous if they aren’t designed with restraint. “Agentic” execution sounds fine until you remember that real actions need guardrails: permissions, allowlists, approvals, retries, and failure handling. And above all, a way to prove why something happened.

If Axon can’t bind every action back to a reasoning artifact and the Seeds that reasoning relied on, you’re back in the old world: automation that works until it doesn’t, and then no one can explain what went wrong.
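A minimal sketch of those guardrails, assuming actions arrive as structured requests that cite a receipt id. The allowlist, approval threshold, retry count, and `execute` signature are hypothetical, not Axon’s interface. Every path, including refusals, appends a log entry that ties the action to the receipt it claimed to follow from.

```python
# Hypothetical sketch of guarded execution. Not Axon's API.
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    amount: float
    receipt_id: str  # the reasoning artifact this action claims to follow from


ALLOWLIST = {"pay_invoice", "notify_team"}  # hypothetical permitted action types
APPROVAL_THRESHOLD = 5_000                  # hypothetical: above this, a human approves
MAX_ATTEMPTS = 3


def execute(action, valid_receipts, approved, run, log):
    """Run `action` only if every guardrail passes; record why, either way."""
    entry = (action.name, action.receipt_id)
    if action.name not in ALLOWLIST:
        log.append(entry + ("blocked: not allowlisted",))
        return False
    if action.receipt_id not in valid_receipts:
        log.append(entry + ("blocked: no valid receipt",))
        return False
    if action.amount > APPROVAL_THRESHOLD and not approved:
        log.append(entry + ("held: awaiting approval",))
        return False
    for attempt in range(1, MAX_ATTEMPTS + 1):
        try:
            run(action)
            log.append(entry + (f"done on attempt {attempt}",))
            return True
        except RuntimeError as err:  # this sketch treats RuntimeError as retryable
            log.append(entry + (f"attempt {attempt} failed: {err}",))
    return False


calls = {"n": 0}


def flaky_run(action):
    # Simulated executor that fails once, then succeeds.
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("timeout")


log = []
print(execute(Action("pay_invoice", 1200, "rcpt-7"), {"rcpt-7"}, False, flaky_run, log))  # True
print(execute(Action("wire_all_funds", 10, "rcpt-7"), {"rcpt-7"}, True, flaky_run, log))  # False
for item in log:
    print(item)
```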

A Loop, Not Three Products

The clean way to understand Neutron, Kayon, and Axon is as a closed loop:

Neutron turns messy inputs into structured memory

Kayon turns that memory into reasoning plus receipts

Axon turns those receipts into controlled execution

If the loop is tight, Vanar becomes a practical foundation for applications that don’t lose context over time. If the loop is loose — if outputs collapse into text and actions aren’t provably linked to evidence — it becomes another “AI + chain” story that sounds better than it behaves.
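To make “tight loop” testable rather than rhetorical, here is a toy hash-linked version: Seeds and receipts are stored under their content hashes, a receipt cites Seed hashes, and an action cites a receipt hash, so any after-the-fact edit breaks the trace. The in-memory stores and the `put` and `trace` functions are hypothetical; a real deployment would anchor these hashes somewhere tamper-evident.

```python
# Hypothetical sketch: tracing an action back through its receipt to its Seeds.
import hashlib
import json


def digest(obj) -> str:
    # Content address: hash of a canonical JSON encoding.
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()


seeds = {}     # hypothetical store, keyed by content hash
receipts = {}  # hypothetical store, keyed by content hash


def put(store: dict, obj) -> str:
    key = digest(obj)
    store[key] = obj
    return key


def trace(action: dict) -> list:
    """Walk action -> receipt -> seeds, re-checking every hash link on the way."""
    rid = action["receipt"]
    receipt = receipts.get(rid)
    if receipt is None or digest(receipt) != rid:
        raise ValueError("action is not bound to a valid receipt")
    for sid in receipt["seeds"]:
        if sid not in seeds or digest(seeds[sid]) != sid:
            raise ValueError(f"receipt cites missing or altered seed {sid[:8]}")
    return receipt["seeds"]


s1 = put(seeds, {"vendor": "Acme Ltd", "amount": 1200})      # Neutron: memory
r1 = put(receipts, {"seeds": [s1], "conclusion": "payable"})  # Kayon: reasoning + receipt
action = {"type": "pay_invoice", "receipt": r1}               # Axon: execution
print(trace(action) == [s1])  # True

seeds[s1]["amount"] = 9999    # silently edit the memory after the fact
try:
    trace(action)
except ValueError as err:
    print(err)
```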

One strategic detail that quietly matters is Vanar’s cross-chain posture. This doesn’t read like “everything must move onto Vanar.” It reads like “anchor intelligence and provenance here.” That changes adoption. Teams don’t have to migrate everything. They can use Vanar for memory, reasoning receipts, and workflow provenance while execution stays where it already lives. In practice, that’s often the only adoption path that works.
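Anchoring provenance on one chain while execution stays elsewhere usually comes down to publishing a small commitment. A common technique, shown here as an illustration rather than anything Vanar has documented, is to batch many receipts into a Merkle tree, anchor only the root, and hand out inclusion proofs. The function names, the 0x00/0x01 leaf and node prefixes, and the duplicate-last-node rule are choices made for this sketch.

```python
# Hypothetical sketch: anchor one Merkle root, verify individual receipts elsewhere.
import hashlib


def leaf_hash(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()  # 0x00 prefix marks a leaf


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()  # 0x01 marks an interior node


def root_and_proof(leaves: list, index: int):
    """Merkle root of `leaves` plus the sibling path proving leaves[index] is included."""
    level = [leaf_hash(x) for x in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # this sketch duplicates the last node on odd levels
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))  # (hash, sibling is on the left)
        level = [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(leaf: bytes, proof: list, root: bytes) -> bool:
    node = leaf_hash(leaf)
    for sibling, sibling_is_left in proof:
        node = node_hash(sibling, node) if sibling_is_left else node_hash(node, sibling)
    return node == root


receipts = [b"receipt-1", b"receipt-2", b"receipt-3"]
root, proof = root_and_proof(receipts, 2)
# Only `root` (32 bytes) would be anchored; another chain can later check one receipt.
print(verify_inclusion(b"receipt-3", proof, root))  # True
print(verify_inclusion(b"receipt-X", proof, root))  # False
```

Duplicating the last node keeps the sketch short, but it lets two different leaf lists share a root, so production trees often use a different rule for odd levels.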

What Actually Matters in 2026

If I were judging whether this stack is landing for real, I’d watch for three things that are hard to fake:

Independent verification of Seeds — can outsiders validate input-to-Seed relationships without trusting a service?

Structured reasoning artifacts from Kayon — real receipts that reference data, transforms, and decisions, not persuasive prose

Safe execution in Axon — permissions, provenance, and failure handling that make workflows operable, not just demo-able

Underneath all of this is a tension Vanar has to manage carefully: intelligence is probabilistic, verification is not. The strongest version of this stack draws hard boundaries between what is provable, what is heuristic, what is suggested, and what is executed — so a model’s confidence is never confused with a system’s guarantees.
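A toy version of that boundary, under stated assumptions: every claim carries an explicit assurance label, and a hypothetical policy lets only provable claims gate execution on their own, so a model’s confidence score is never read as a guarantee. The `Assurance` levels, `Claim`, `may_trigger_execution`, and the 0.93 figure are all invented for illustration.

```python
# Hypothetical sketch: keeping model confidence separate from system guarantees.
from dataclasses import dataclass
from enum import Enum


class Assurance(Enum):
    PROVABLE = "provable"    # independently re-checkable (hash match, signature, rule result)
    HEURISTIC = "heuristic"  # deterministic but approximate (scores, thresholds)
    SUGGESTED = "suggested"  # model output: useful, not guaranteed


@dataclass(frozen=True)
class Claim:
    text: str
    level: Assurance


def may_trigger_execution(claims: list) -> bool:
    """Hypothetical policy: only PROVABLE claims can gate an action on their own."""
    return bool(claims) and all(c.level is Assurance.PROVABLE for c in claims)


basis = [
    Claim("invoice Seed matches anchored commitment", Assurance.PROVABLE),
    Claim("vendor looks legitimate (model, 0.93 confidence)", Assurance.SUGGESTED),
]
print(may_trigger_execution(basis))      # False
print(may_trigger_execution(basis[:1]))  # True
```

Suggested claims are not discarded under this policy; they simply have to pass through an approval or a deterministic check before anything is executed.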

That’s why the Neutron + Kayon + Axon idea feels grounded when explained properly. It’s not about sounding futuristic. It’s about solving a very current problem: keeping meaning intact as data moves, and keeping decisions defensible once they turn into actions.

If Vanar delivers that as working infrastructure rather than slideware, the 2026 narrative won’t need hype. The product will speak in receipts, not slogans.