The more I watch robotics, the less I see human-machine alignment as merely a topic for AI debates. It becomes a real-world problem the moment smart machines start doing real tasks near people.

A conversational agent making a mistake is one thing. A robot making a wrong move in a warehouse, a hospital, or a public space is a very different story. That is when the discussion becomes far more serious.

Fabric Foundation takes a problem-oriented approach to this.

As I understand it, the goal should not be only to make machines more capable. As their presence in the physical world grows, they should also become easier to monitor, coordinate, and govern.

The Foundation states its objective as building the governance, economic, and coordination layers that let humans and intelligent machines work together safely and productively.

It also emphasizes that a machine's behavior should be predictable, observable, and accountable rather than hidden inside closed systems.

What stuck with me most, however, is this: safety is not only about machines getting smarter. That is just one side of the coin. What matters most is whether humans can actually see what the machine is doing, who set its limits, and who steps in when something goes wrong.

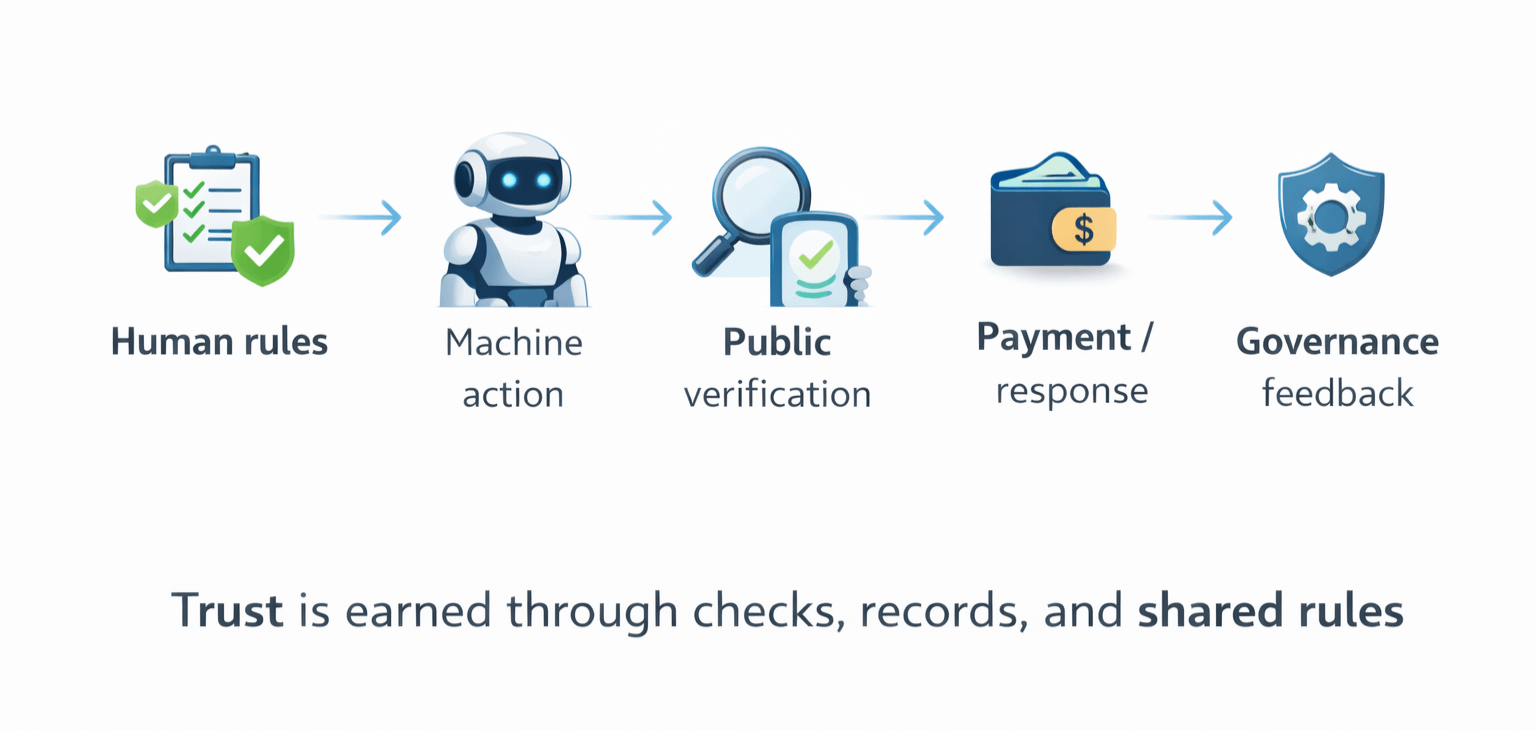

In my view, human-machine coordination is fairly simple at its core: a machine has to operate within rules that humans can understand, verify, and amend when necessary.

It sounds simple, but it gets complicated once automation scales. Overseeing one robot is manageable. Overseeing a whole fleet of robots, along with operators, developers, validators, and users, is a different story entirely. At that point, closed supervision stops being convincing.
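To make "rules humans can understand, verify, and amend" a bit more concrete, here is a minimal Python sketch. It is purely illustrative and not Fabric's implementation: the `Rule` and `RuleBook` classes, the `amend` and `check` methods, and the `max_speed_mps` rule are all hypothetical names chosen for this example. Each limit is a plain, readable value; any action with no matching rule is refused by default; and every amendment is recorded along with who made it.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    name: str     # human-readable label, e.g. "max_speed_mps" (hypothetical)
    limit: float  # boundary set by a human; can be amended later


@dataclass
class RuleBook:
    rules: dict = field(default_factory=dict)    # rule name -> Rule
    history: list = field(default_factory=list)  # audit trail: (name, old, new, by)

    def amend(self, name: str, limit: float, by: str) -> None:
        """Create or change a rule and record who changed it and from what."""
        old = self.rules[name].limit if name in self.rules else None
        self.rules[name] = Rule(name, limit)
        self.history.append((name, old, limit, by))

    def check(self, name: str, value: float) -> bool:
        """Allow a proposed action only if a rule covers it and it stays within the limit."""
        if name not in self.rules:
            return False  # deny by default: no rule, no permission
        return value <= self.rules[name].limit
```

A short usage example: an operator sets a speed limit, a safety officer later tightens it, and the history shows both changes.

```python
book = RuleBook()
book.amend("max_speed_mps", 1.5, by="site_operator")
print(book.check("max_speed_mps", 1.2))  # True
book.amend("max_speed_mps", 1.0, by="safety_officer")
print(book.check("max_speed_mps", 1.2))  # False
print(book.history)
```

With one robot, a shared spreadsheet would do. The amendment history becomes the important part once many operators, developers, and validators can all touch the rules.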

Fabric's answer is open infrastructure rather than private black boxes. Its white paper describes Fabric as a global network for building, governing, owning, and improving general-purpose robots through public ledgers and decentralized coordination.
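One reason a public ledger helps with oversight is that its records are tamper-evident. The minimal Python sketch below shows that general idea with a simple hash chain. It is an assumption-level illustration, not Fabric's ledger or data format: the `append` and `verify` helpers and the record fields are hypothetical. Each entry stores the hash of the previous one, so quietly editing an old record breaks every link after it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the record (with stable key order) together with the previous hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


def append(log: list, record: dict) -> None:
    """Add a record, linking it to the hash of the last entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(log: list) -> bool:
    """Recompute every link; any edited record or broken link returns False."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

For example, after `append(log, {"robot": "r1", "action": "pick"})` and a second entry, `verify(log)` holds; changing `log[0]["record"]["action"]` afterwards makes it fail. A real public ledger adds replication and consensus on top, which is what keeps any single party from rewriting the whole chain.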

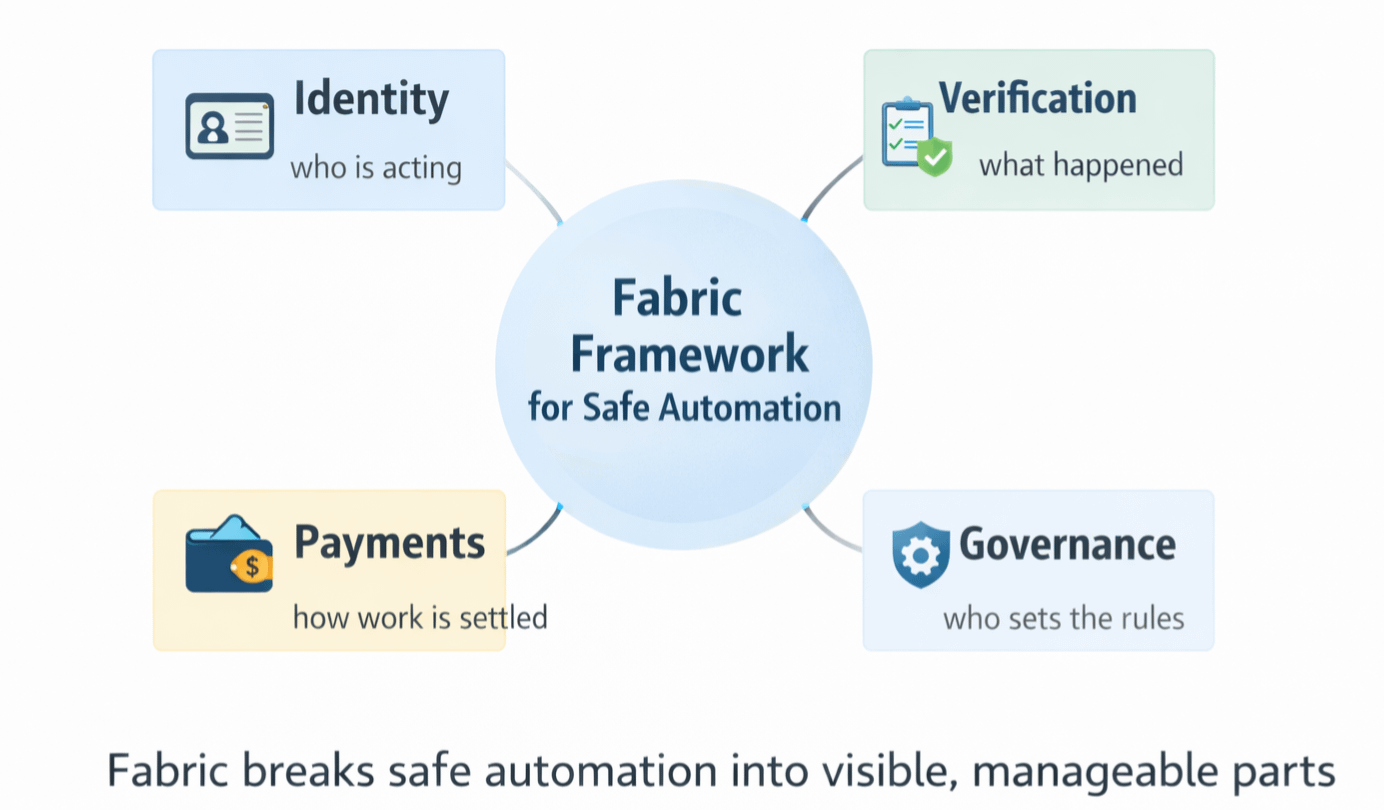

What interests me is that Fabric does not treat safety as an empty slogan. It breaks the problem down into concrete parts. The blueprint covers machine and human identity, decentralized task allocation, accountability, machine-to-machine communication, and location- or human-gated payments.

These components matter because safe automation does not depend on intelligence alone. It also needs visibility, boundaries, evaluation, and a clear way to verify work.
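As a rough picture of what a "location- or human-gated payment" could mean, here is a minimal Python sketch. It is hypothetical throughout and does not reflect how Fabric actually implements payments: `TaskReport`, `release_payment`, the geofence radius, and the amounts are all illustrative assumptions. Payment for a task is released only if the robot's reported position is within a set distance of the job site (computed with the haversine formula) and a human has confirmed the work.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


@dataclass
class TaskReport:
    robot_id: str
    lat: float             # reported latitude in degrees
    lon: float             # reported longitude in degrees
    human_confirmed: bool  # did a person sign off on the work?


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in meters."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def release_payment(report: TaskReport, site_lat: float, site_lon: float,
                    radius_m: float, amount: float) -> float:
    """Pay only when the work happened on site AND a human confirmed it."""
    in_zone = haversine_m(report.lat, report.lon, site_lat, site_lon) <= radius_m
    if in_zone and report.human_confirmed:
        return amount
    return 0.0
```

Requiring both conditions is a design choice for the sketch: location alone can be spoofed by a faulty sensor, and human sign-off alone says nothing about where the work took place.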

This is also where ROBO comes in. Fabric Foundation describes ROBO as the protocol's utility and governance asset. It is the primary means of paying network fees and of handling identity and verification, while staking and governance help coordinate participation in the network.

At present, the token page lists a total supply of 10 billion, and a Foundation post dated February 24, 2026, describes several allocation buckets, including ecosystem and community, investors, team and advisors, and the foundation reserve.

From where I stand, that is the main lesson. Automation at scale is not really about trusting machines more. It is about building systems in which behavior can always be audited, rules can be changed, and accountability stays visible.