I’ve noticed something weird about privacy narratives in crypto. They always sound important when you first hear them. Data protection, user control, zero exposure: it all feels like something the world obviously needs more of.

But then you zoom out a bit.

Most of these systems never actually get used in real workflows. Not because they don’t work, but because nothing around them changes. Institutions keep operating the same way, users don’t adapt, and the “privacy layer” just sits there as an optional feature nobody relies on.

That’s the part I didn’t fully understand before.

Privacy isn’t valuable just because it exists. It only matters when something breaks without it.

That shift in thinking is what made me look at Midnight Network differently.

I don’t think Midnight is trying to win the usual privacy narrative game. It’s not about hiding everything or creating a fully anonymous system.

If anything, it’s doing the opposite.

It’s focusing on controlled disclosure: proving specific things without exposing everything else. That sounds simple, but it changes how you think about privacy entirely.

So the real thesis I keep coming back to is this.

Midnight isn’t a privacy coin in the traditional sense. It’s infrastructure for selective trust, and whether it succeeds depends entirely on whether institutions actually need that level of precision every day.

When I tried to simplify how this works, I stopped thinking in blockchain terms and started thinking in everyday interactions.

Right now, most systems operate on over-sharing. You submit full data, and the system decides what to use. Your identity, your records, your history: it all gets handed over even if only a small part is needed.

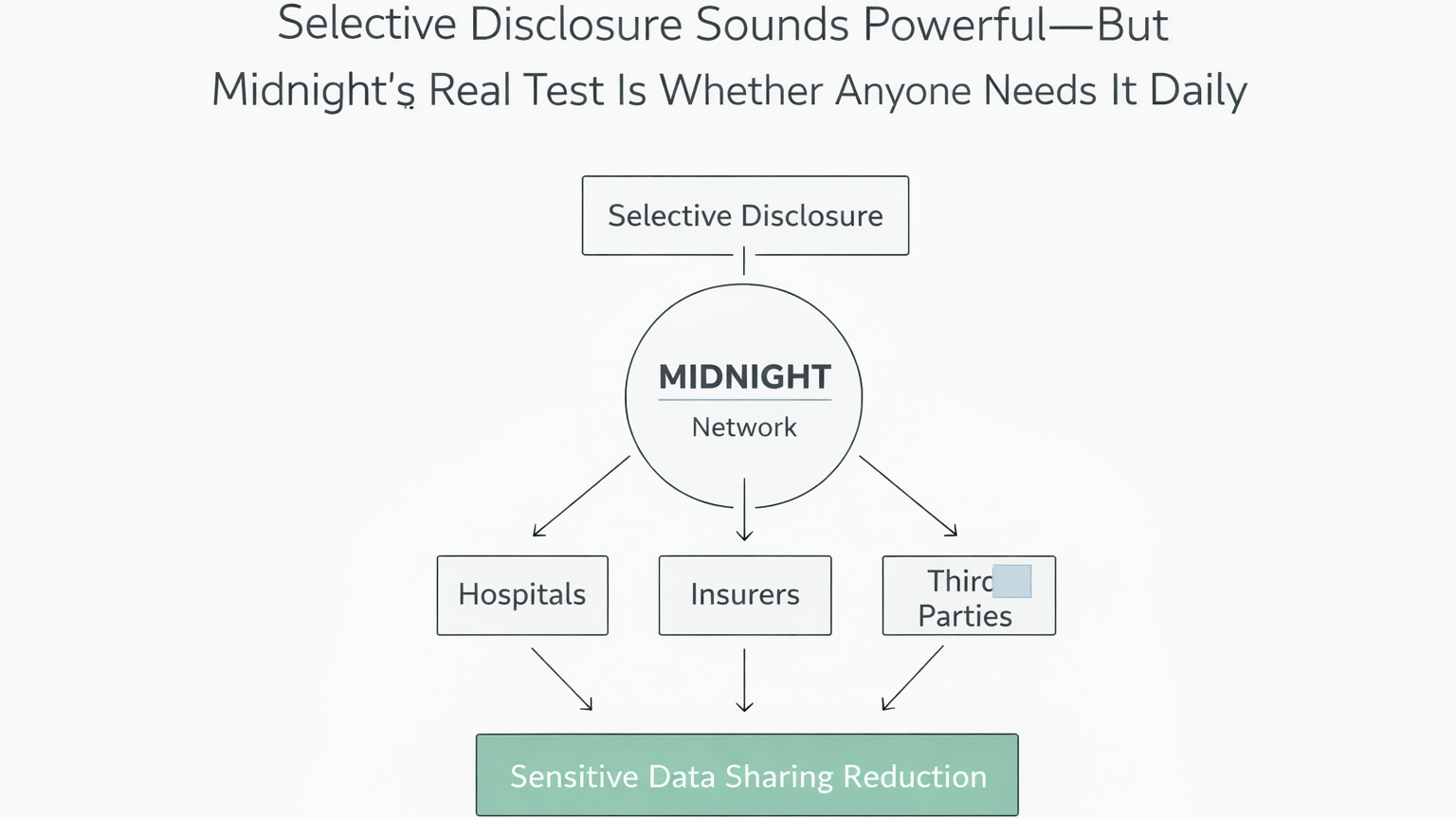

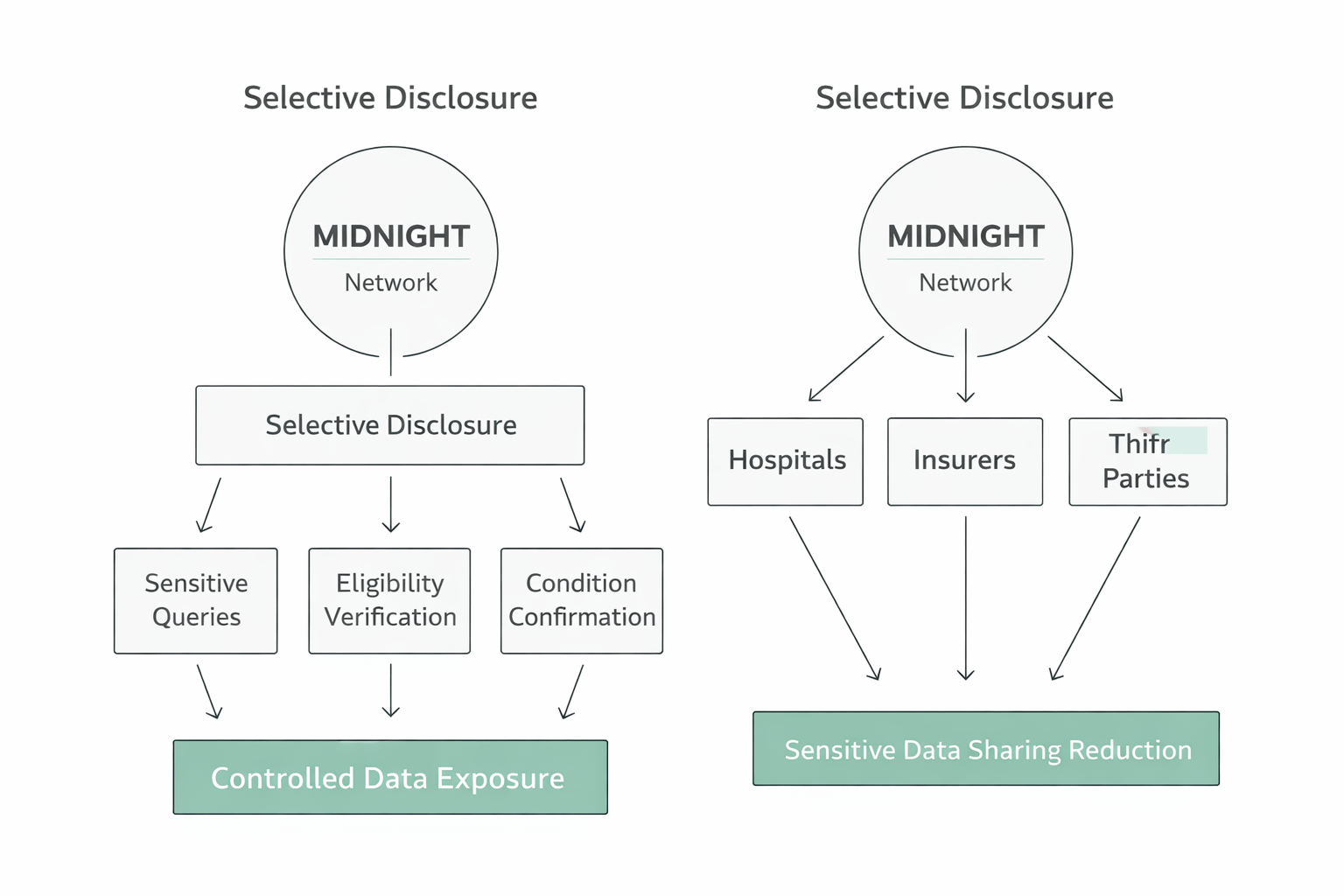

Midnight flips that.

Instead of giving raw data, you generate a proof. You don’t share your medical file; you prove a condition. You don’t reveal your identity; you confirm eligibility.

It’s like walking into a checkpoint and proving you’re over 18 without showing your name or address.

That’s what privacy-preserving smart contracts enable here. They validate truth without exposing the underlying data. The system still functions, but the data surface shrinks dramatically.
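A toy sketch helps make the idea concrete. The Python below is not Midnight’s API and it is not a zero-knowledge proof. It uses a simpler stand-in technique, salted-hash selective disclosure, with an HMAC playing the role of the issuer’s digital signature. Every name in it (`issue`, `present`, `verify`, `ISSUER_KEY`, the sample claims) is hypothetical and only there for illustration.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer key. HMAC is a stand-in for a real digital signature.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(name, value, salt):
    """Salted hash of one claim, so hidden claims cannot be guessed from the digest."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def _sign(digests):
    """Issuer's tag over the list of claim digests (never over the raw claims)."""
    message = json.dumps(digests).encode()
    return hmac.new(ISSUER_KEY, message, hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: commit to each claim separately and sign only the digests."""
    salts = {name: secrets.token_hex(16) for name in claims}
    # Sorting hides which digest belongs to which claim position.
    digests = sorted(_digest(n, v, salts[n]) for n, v in claims.items())
    return {
        "claims": claims,
        "salts": salts,
        "digests": digests,
        "signature": _sign(digests),
    }


def present(credential, name):
    """Holder: reveal one claim and its salt; the rest stay as opaque digests."""
    return {
        "name": name,
        "value": credential["claims"][name],
        "salt": credential["salts"][name],
        "digests": credential["digests"],
        "signature": credential["signature"],
    }


def verify(presentation):
    """Verifier: check the issuer's tag, then check the revealed claim was committed."""
    expected = _sign(presentation["digests"])
    if not hmac.compare_digest(expected, presentation["signature"]):
        return False
    revealed = _digest(
        presentation["name"], presentation["value"], presentation["salt"]
    )
    return revealed in presentation["digests"]


# Usage: the checkpoint sees only the over_18 claim, never the name or address.
credential = issue(
    {"name": "Alice Example", "address": "1 Hypothetical St", "over_18": True}
)
proof = present(credential, "over_18")
print(verify(proof))  # prints True
```

The verifier learns that the issuer vouched for `over_18` and nothing else; the name and address only ever appear as salted digests. A real deployment would use an asymmetric signature so the verifier never holds the issuer’s key, and a zero-knowledge proof could go further by proving a statement such as "age is at least 18" without the issuer precomputing that claim.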

And in theory, that sounds like exactly what sensitive industries need.

Healthcare is where this becomes less theoretical and more uncomfortable to ignore.

Data moves constantly between hospitals, insurers, and third parties. And most of the time, more of it moves than the task requires. Full records get shared for simple checks. Systems rely on access instead of verification.

That creates friction, but also risk.

Patients don’t really control their data. They just exist inside a structure that assumes exposure is required for functionality.

So when I think about Midnight in this context, it’s not about adding privacy on top. It’s about removing unnecessary data flow entirely.

A patient proves eligibility without exposing history. An insurer verifies a claim without storing full records. A hospital confirms a condition without requesting everything else.

That’s a cleaner system.

But it also raises a question that’s harder than it sounds.

Do institutions actually want to change how they operate, even if the alternative is better?

From a market perspective, I don’t think there’s a clear answer yet.

There’s attention around Midnight, but it feels early. Not hype-driven, not ignored either. More like it’s sitting in that “watching closely” phase where people aren’t fully convinced.

That usually means one thing.

The market doesn’t know if this becomes infrastructure or stays a concept.

Price action reflects that kind of uncertainty. Not explosive, not dead. Volume shows interest, but not conviction. Holder distribution tends to expand slowly in this phase, which usually signals discovery rather than speculation.

So the story here isn’t about momentum. It’s about waiting for proof.

Where I think the market is getting this wrong is pretty specific.

People keep evaluating privacy as a feature instead of a workflow.

We’ve already seen that encryption alone doesn’t create adoption. Plenty of systems can hide data. Very few can integrate into existing processes without breaking them.

That’s the real challenge.

Midnight isn’t competing on who has better cryptography. It’s competing on whether its model fits into systems that were never designed for selective disclosure in the first place.

And that’s where things get messy.

Because the reality is, institutions don’t change easily.

Healthcare systems are layered with regulations, legacy infrastructure, and operational habits that have been built over decades. Even if Midnight offers a better model, adoption depends on how easily it fits into those constraints.

That’s why I keep coming back to one question.

Is this actually being used in real workflows, or just tested in controlled environments?

Because that’s the difference between something interesting and something essential.

If I had to define what would make me more confident here, it wouldn’t be announcements.

It would be repetition.

Hospitals using selective proofs daily. Insurance systems relying on them for verification. Developers building tools that assume this infrastructure is already there.

That kind of usage compounds.

On the flip side, if everything stays in pilot programs, if integration proves too complex, or if institutions hesitate to depend on it, then the signal is clear.

It means the idea works, but the system doesn’t.

One thing I keep thinking about is how privacy behaves when it actually matters.

When it’s visible, it’s usually still optional. When it becomes invisible, it’s already essential.

The systems that win are the ones users don’t even think about. They just trust them.

I think Midnight is trying to reach that point.

But getting there requires more than good design. It requires habits to change. And habits are harder to shift than technology.

Healthcare might be the toughest place to prove this. But if it works there, it probably works anywhere.

Right now, I don’t think the outcome is obvious.

There’s a version where Midnight becomes a quiet layer that powers sensitive systems without drawing attention. And there’s another where it stays in that familiar category of “technically impressive, practically unused.”

Both paths are still open.

So I’m not watching narratives anymore. I’m watching behavior.

Because if selective disclosure is only used occasionally, it stays a feature.

If it becomes something systems rely on daily, it turns into infrastructure.

If Midnight succeeds, privacy stops being a feature and becomes invisible infrastructure. If it fails, it remains a concept the market keeps overestimating.

@MidnightNetwork #night $NIGHT