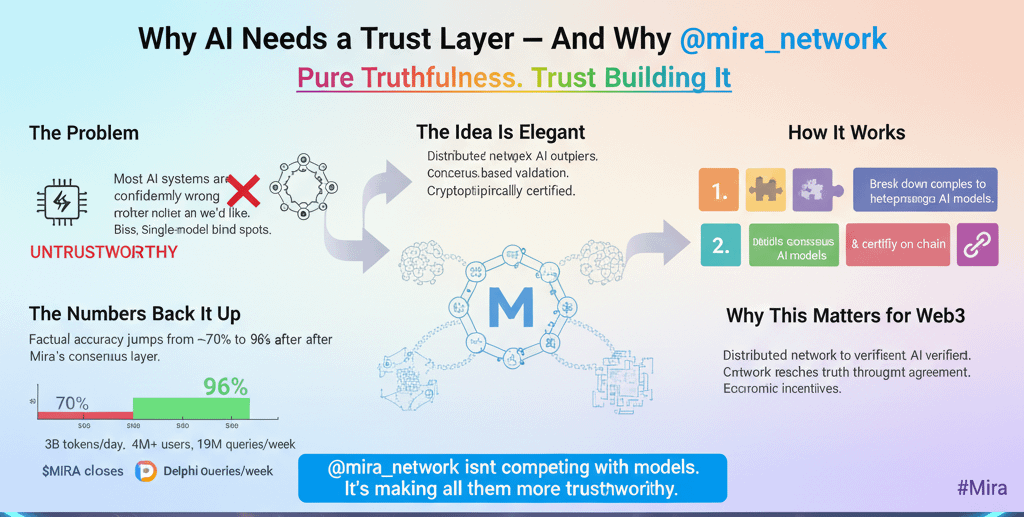

We talk a lot about AI being the future. But here's the part nobody wants to admit: most AI systems today are confidently wrong more often than we'd like to believe.

Hallucinations. Bias. Single-model blind spots. These aren't edge cases — they're structural features of how large language models work. And yet we're rushing to deploy AI in healthcare, finance, legal services, and autonomous decision-making. The gap between AI's potential and AI's reliability is enormous. That's exactly the problem $MIRA was built to close.

The Idea Is Elegant

Instead of trusting one AI model to get it right, @mira_network routes outputs through a distributed network of independent AI verifiers — each running different models — and only certifies a result when consensus is reached. Think of it like a jury system for AI. One juror might be biased. Twelve independent ones are much harder to fool collectively.
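The jury intuition can be put into rough numbers. The sketch below is a back-of-the-envelope model, not Mira's actual math: it assumes every verifier errs independently with the same probability and that a strict majority decides. Real models share training data and failure modes, so the true benefit is smaller than this idealized figure. The function name and the 30% error rate are illustrative assumptions.

```python
from math import comb


def majority_wrong_probability(n_verifiers: int, error_rate: float) -> float:
    """Probability that a strict majority of verifiers is wrong.

    Assumes each verifier errs independently with the same error_rate,
    which is an idealization of real, correlated AI models.
    """
    threshold = n_verifiers // 2 + 1  # smallest strict majority
    return sum(
        comb(n_verifiers, k)
        * error_rate**k
        * (1 - error_rate) ** (n_verifiers - k)
        for k in range(threshold, n_verifiers + 1)
    )


# Illustrative assumption: each verifier is wrong 30% of the time.
single = majority_wrong_probability(1, 0.3)
jury = majority_wrong_probability(12, 0.3)
print(f"single verifier wrong: {single:.3f}")
print(f"majority of 12 wrong:  {jury:.3f}")
```

Under these assumptions, a wrong majority among twelve verifiers is far less likely than a single wrong verifier, which is the whole point of the jury comparison.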

The process works in three steps. First, complex AI outputs are broken down into individual, checkable claims. Second, those claims are distributed across verifier nodes running heterogeneous AI models. Third, consensus is reached and the result is cryptographically certified on-chain. What comes out the other side isn't just an AI answer. It's an AI answer that independent models have checked and that carries a tamper-evident certificate.
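The three steps can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not Mira's protocol: `split_into_claims`, `verify_output`, the two-thirds threshold, and the lambda "verifiers" are all hypothetical, sentence splitting stands in for real claim extraction, and a SHA-256 digest stands in for on-chain certification.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A verifier takes a claim and returns True if it judges the claim correct.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    # Step 1 (placeholder): treat each sentence as one checkable claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict:
    results = []
    for claim in split_into_claims(output):
        # Step 2: every verifier votes on every claim.
        votes = [verifier(claim) for verifier in verifiers]
        # Step 3a: a claim passes if enough verifiers agree it is correct.
        approved = sum(votes) / len(votes) >= threshold
        results.append({"claim": claim, "votes": votes, "approved": approved})

    certified = bool(results) and all(r["approved"] for r in results)
    record = {"results": results, "certified": certified}
    # Step 3b (stand-in for on-chain certification): hash the record so
    # any later change to it would produce a different digest.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return {**record, "digest": hashlib.sha256(payload).hexdigest()}


# Toy verifiers standing in for independent models.
verifiers = [
    lambda claim: "cheese" not in claim,
    lambda claim: "cheese" not in claim,
    lambda claim: True,  # an overly lenient verifier
]
result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.", verifiers
)
for r in result["results"]:
    print(r["claim"], "->", "approved" if r["approved"] else "rejected")
print("certified:", result["certified"])
```

Here the lenient verifier waves the false claim through, but it is outvoted, so the output as a whole is not certified.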

The Numbers Back It Up

This isn't whitepaper theory. By the project's own reported figures, Mira's network currently processes 3 billion tokens per day, serves over 4 million users, and handles 19 million queries per week across real applications like Klok, Learnrite, and Delphi Oracle. Factual accuracy reportedly jumps from roughly 70% with a single model to 96% after passing through Mira's consensus layer. That's not a marginal improvement. It's the difference between a tool you can trust and one you have to babysit.
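It helps to read those accuracy figures as error rates. The short calculation below uses only the two percentages quoted above; the variable names are illustrative.

```python
# Accuracy figures quoted in the post, in whole percentage points.
accuracy_single_model_pct = 70
accuracy_with_consensus_pct = 96

# Convert accuracy to error rate, then compare.
errors_single_model_pct = 100 - accuracy_single_model_pct
errors_with_consensus_pct = 100 - accuracy_with_consensus_pct
reduction_factor = errors_single_model_pct / errors_with_consensus_pct

print(f"errors per 100 answers, single model: {errors_single_model_pct}")
print(f"errors per 100 answers, with consensus: {errors_with_consensus_pct}")
print(f"error reduction factor: {reduction_factor}")  # prints 7.5
```

Put that way, the claimed jump means going from about 30 wrong answers in every 100 to about 4, a 7.5x reduction in errors.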

Why This Matters for Web3

Decentralized AI verification isn't just a technical achievement. It's a philosophical one. The blockchain space was built on the principle that you shouldn't have to trust a central authority — you verify. Mira applies that same logic to AI outputs. No single company decides what's true. No centralized model holds a monopoly on correct answers. The network reaches truth through independent agreement, backed by economic incentives that make dishonesty financially painful for bad actors.

Validators who consistently align with consensus earn rewards. Those who submit manipulated or inaccurate results get slashed. The system is self-enforcing at scale.
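A minimal sketch of that incentive loop might look like the following. Everything here is an assumption made for illustration: the `settle_round` function, the simple-majority rule, and the 1% reward and 10% slash rates are not taken from Mira's documentation. It also uses "disagreed with consensus" as a crude proxy for "submitted a bad result", which a real system would need to handle more carefully.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    reward_rate: float = 0.01,  # hypothetical reward for matching consensus
    slash_rate: float = 0.10,   # hypothetical penalty for deviating
) -> Dict[str, float]:
    """Return updated stakes after one verification round."""
    yes_votes = sum(1 for vote in votes.values() if vote)
    # Simple majority decides; a tie counts as "not verified".
    consensus = yes_votes * 2 > len(votes)

    updated = {}
    for node, stake in stakes.items():
        if votes[node] == consensus:
            updated[node] = stake * (1 + reward_rate)
        else:
            updated[node] = stake * (1 - slash_rate)
    return updated


stakes = {"node_a": 100.0, "node_b": 100.0, "node_c": 100.0}
votes = {"node_a": True, "node_b": True, "node_c": False}
new_stakes = settle_round(stakes, votes)
for node, stake in new_stakes.items():
    print(f"{node}: {stake:.2f}")
```

Because the penalty is larger than the reward, a node that deviates loses far more in one round than an honest node gains, which is what makes sustained manipulation expensive.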

The Bigger Picture

We are moving toward a world where AI agents make autonomous decisions — managing portfolios, diagnosing patients, drafting contracts, executing code. In that world, unverified AI isn't just unreliable. It's dangerous. The infrastructure that makes AI trustworthy at scale is not optional — it's foundational.

@mira_network isn't competing with GPT, Claude, or Llama. It's making all of them more trustworthy. That's a rare position to be in: infrastructure that every AI application eventually needs, regardless of which model wins the capability race.

If you believe AI adoption is inevitable, then verified AI is the next unlock. And right now, $MIRA is one of the few projects building that layer for real.

#Mira @Mira - Trust Layer of AI $MIRA