I came across Fabric the way most new crypto projects cross your path now, condensed into a polished paragraph that feels almost constitutional in tone. “Global open network.” “Verifiable computing.” “Agent-native infrastructure.” The kind of language that sounds carefully assembled, like it’s trying to prove it already belongs to the next decade.

But when I actually read the whitepaper, dated December 2025, the mood shifted. It didn’t read like marketing. It read like someone finally putting a quiet anxiety into words. If autonomous agents are going to start doing real work for people, the hardest part won’t be getting them to act. It will be agreeing on what they actually did, whether it was done correctly, and who takes responsibility when something goes wrong.

That’s the line Fabric keeps circling back to. Not the obvious “robots are coming” narrative. We’re already there. The more uncomfortable point is this: once machines start handling ordinary tasks like inspections, deliveries, cleaning floors, tracking inventory, even security patrols, people won’t be satisfied with a private log sitting on the company’s server as proof that everything went smoothly. If something goes wrong, no one is going to accept “trust us” as documentation.

There will need to be records that don’t belong solely to the robot manufacturer, the operator, the client, or even the regulator. Something neutral. Something both sides can point to when they disagree.

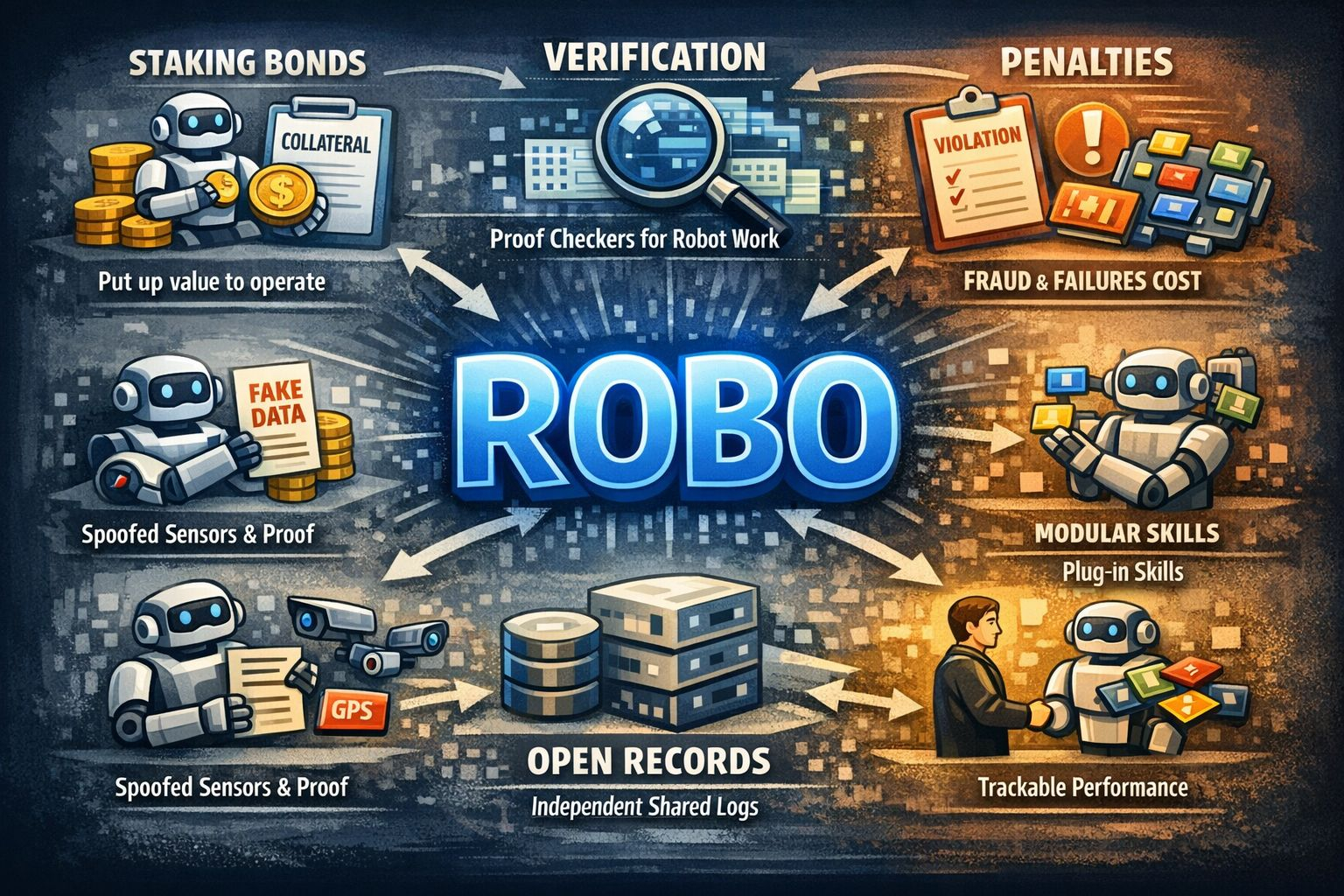

Fabric’s response sounds strange at first, until you picture an actual conflict. It suggests treating a robot less like a gadget and more like a contractor. Before it’s allowed to perform work, it has to put down collateral. In other words, it has skin in the game.

Not a token gesture. A real bond. Actual collateral on the line.

Fabric’s position is straightforward. If you want to register a robot and start earning through the network, you lock value into the protocol. That stake sits there as long as you operate. If the robot does what it’s supposed to do, the bond comes back. If it doesn’t, part of that bond can be slashed. The point isn’t cosmetic. It’s practical.
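To make that lifecycle concrete, here is a minimal sketch of a bond registry: lock on registration, slash on a verified failure, refund on exit. Everything in it (`BondRegistry`, `min_bond`, the slash fraction, the `treasury` sink) is a hypothetical illustration of the idea, not Fabric’s contract or its actual parameters.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Bond:
    """Collateral an operator locks before a robot may take paid work."""
    operator: str
    amount: float


@dataclass
class BondRegistry:
    """Toy bond lifecycle: lock on registration, slash on failure, refund on exit.

    min_bond is a hypothetical parameter, not a value from the whitepaper.
    """
    min_bond: float = 100.0
    bonds: Dict[str, Bond] = field(default_factory=dict)
    treasury: float = 0.0  # where slashed value ends up in this sketch

    def register(self, robot_id: str, operator: str, amount: float) -> None:
        # A minimum bond makes every extra fake identity cost real money.
        if amount < self.min_bond:
            raise ValueError("bond below minimum; robot cannot register")
        if robot_id in self.bonds:
            raise ValueError("robot already registered")
        self.bonds[robot_id] = Bond(operator, amount)

    def slash(self, robot_id: str, fraction: float) -> float:
        """Cut into the bond after a verified failure; return the amount taken."""
        if not 0.0 <= fraction <= 1.0:
            raise ValueError("fraction must be between 0 and 1")
        bond = self.bonds[robot_id]
        penalty = bond.amount * fraction
        bond.amount -= penalty
        self.treasury += penalty
        return penalty

    def withdraw(self, robot_id: str) -> float:
        """Operator stops operating; whatever is left of the bond comes back."""
        return self.bonds.pop(robot_id).amount


reg = BondRegistry()
reg.register("robot-1", "alice", 150.0)
print(reg.slash("robot-1", 0.25))  # 37.5 taken after a failed job
print(reg.withdraw("robot-1"))     # 112.5 returned on exit
```

The minimum bond is what does the anti-spam work: each additional identity has to lock its own collateral, so flooding the network stops being free.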

First, it makes it harder for someone to spin up endless fake identities and flood the system. Second, it forces operators to think twice before cutting corners. Bad behavior stops being a minor inconvenience and starts becoming expensive.

That idea, making misbehavior expensive enough to matter, is where Fabric shifts tone. It stops sounding like a futuristic sketch and starts sounding like it was written by someone who has seen online marketplaces collapse under spam, fraud, and low-quality actors. It reads less like optimism and more like a precaution.

Any network that hands out rewards for “activity” eventually gets gamed. That’s just how incentives work. If you pay for movement, someone will manufacture movement. Fake users. Fake jobs. Fake confirmations. Numbers that look healthy from a distance but fall apart the second you poke them.

In robotics, that kind of manipulation does more than inflate a dashboard. It corrupts reputation. It pollutes performance data. It turns real machines into props in a staged economy where robots get paid to simulate work instead of doing it.

Fabric seems aware of that trap. The whitepaper doesn’t lean on a simple “tasks completed equals rewards” formula. Instead, it leans into something more skeptical. It asks whether the economic relationships around a robot actually look genuine. There’s a graph-based approach behind it, but in plain language it’s checking who you’re interacting with and whether that interaction resembles real demand.

The logic is easy to grasp. If you and a cluster of accounts are mostly transacting with each other, paying one another back and forth, the network should flag that pattern. If your activity lives inside a closed loop, it’s probably not organic. Fabric is basically trying to teach the system to recognize when someone is talking to the wider market and when they’re just talking to themselves.
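A deliberately simplified version of that closed-loop check might look like the sketch below. It assumes a suspicious cluster has already been identified and only measures how much of the cluster’s payment volume stays inside it. The function names and the 0.8 threshold are hypothetical; the whitepaper’s actual graph method is not reproduced here and is presumably more sophisticated.

```python
def internal_share(payments, cluster):
    """Share of a cluster's payment volume whose counterparty is also inside it.

    payments: iterable of (payer, payee, amount) tuples
    cluster:  set of account ids suspected of trading among themselves
    """
    internal = 0.0
    total = 0.0
    for payer, payee, amount in payments:
        payer_in, payee_in = payer in cluster, payee in cluster
        if payer_in or payee_in:       # the cluster is on at least one side
            total += amount
            if payer_in and payee_in:  # the money never left the group
                internal += amount
    return internal / total if total else 0.0


def looks_like_closed_loop(payments, cluster, threshold=0.8):
    """Flag a cluster whose economic activity mostly circulates internally."""
    return internal_share(payments, cluster) >= threshold


ring = [("a", "b", 50), ("b", "c", 50), ("c", "a", 50), ("client", "a", 10)]
print(internal_share(ring, {"a", "b", "c"}))          # 0.9375
print(looks_like_closed_loop(ring, {"a", "b", "c"}))  # True

organic = [("shop-1", "r", 40), ("shop-2", "r", 30), ("r", "parts", 20)]
print(looks_like_closed_loop(organic, {"r"}))         # False
```

The ring pays itself 150 out of 160 units of volume, so it gets flagged; the robot paid by outside shops and paying an outside supplier does not.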

Will that actually hold up? Maybe. It comes down to execution and whether the rules adapt once people start probing for loopholes, which they always do. But the instinct makes sense. If you’re serious about building an economy around robots, you can’t treat it like a social platform where raw engagement is the win. It has to feel more like underwriting risk than chasing clicks.

That same insurance mindset shows up in how Fabric talks about penalties. It doesn’t rely on vague promises about “removing bad actors.” It lays out consequences the way an operating system would. Clear fraud leads to heavy slashing and suspension. Low uptime eats into your rewards and your stake. If performance quality slips under a defined line, your ability to earn can be paused until you fix it. It’s not soft language. It’s deliberate.
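Those three tiers can be sketched as a per-epoch settlement rule. The thresholds and rates below are invented for illustration, and scaling the reward by uptime is one assumed way that low uptime could “eat into” rewards; the whitepaper’s actual formulas may differ.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    PAUSED = "paused"        # earning paused until quality recovers
    SUSPENDED = "suspended"  # removed after proven fraud


# Hypothetical thresholds and rates; Fabric's actual parameters may differ.
FRAUD_SLASH = 0.5     # fraction of stake lost on proven fraud
UPTIME_FLOOR = 0.95   # below this, rewards and stake are both cut
UPTIME_SLASH = 0.02   # fraction of stake lost per low-uptime epoch
QUALITY_FLOOR = 0.7   # below this, earning is paused


@dataclass
class Operator:
    stake: float
    status: Status = Status.ACTIVE


def settle_epoch(op, reward, uptime, quality, fraud_proven):
    """Apply the penalty rules for one epoch; return the reward actually paid."""
    if op.status is Status.SUSPENDED:
        return 0.0
    if fraud_proven:
        op.stake -= op.stake * FRAUD_SLASH
        op.status = Status.SUSPENDED
        return 0.0
    if quality < QUALITY_FLOOR:
        op.status = Status.PAUSED
        return 0.0
    op.status = Status.ACTIVE       # quality is back above the line
    if uptime < UPTIME_FLOOR:
        op.stake -= op.stake * UPTIME_SLASH
        return reward * uptime      # pay only for the time actually online
    return reward


op = Operator(stake=1000.0)
print(settle_epoch(op, 10.0, uptime=0.99, quality=0.9, fraud_proven=False))  # 10.0
print(settle_epoch(op, 10.0, uptime=0.5, quality=0.9, fraud_proven=False))   # 5.0
```

The ordering matters: fraud is checked first so a fraudulent operator cannot dodge suspension by also posting good quality numbers, and a pause lifts automatically once quality recovers.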

The message underneath is pretty direct: if machines are going to be paid for real-world work, reliability can’t be a suggestion. It has to be enforced in a way that actually hurts when someone cuts corners.

Even with all that structure, there’s a stubborn reality underneath it: the physical world doesn’t lend itself to clean verification.

On a screen, evidence feels binary. A program either executed or it didn’t. A transaction either settled or it failed. But when you’re talking about a robot that supposedly cleaned a corridor or inspected a machine, things get fuzzy fast. Sensors can be tricked. Camera footage can be staged. GPS signals can be spoofed. And if someone is motivated enough, they can fabricate “proof” the same way scammers fabricate invoices.

Fabric doesn’t pretend it can eliminate that messiness. Instead, it takes a more pragmatic stance: don’t promise perfect proof. Make it tougher to fake, make deception costly, and build a system where independent parties can step in to check and challenge what’s being claimed. The idea is to turn verification into paid work inside the network, not a courtesy handled behind closed doors by the platform itself.
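One common way to turn verification into paid work is a bonded challenge game, sketched below under stated assumptions: the challenger posts its own bond, a disproved claim costs the operator part of its bond (with a share going to the challenger), and a failed challenge costs the challenger. `Claim`, `challenge`, `resolve`, and both fractions are hypothetical; this is a generic pattern, not Fabric’s documented dispute process.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical parameters, chosen for illustration only.
SLASH_FRACTION = 0.25  # share of the operator's bond lost if a claim is disproved
REWARD_SHARE = 0.5     # share of that slash paid to the successful challenger


@dataclass
class Claim:
    """A robot's claim that it finished a task, backed by its operator's bond."""
    robot_id: str
    operator: str
    operator_bond: float
    challenger: Optional[str] = None
    challenger_bond: float = 0.0


def challenge(claim: Claim, challenger: str, bond: float) -> None:
    """An independent verifier disputes the claim and puts its own bond down."""
    if claim.challenger is not None:
        raise ValueError("claim is already under challenge")
    claim.challenger = challenger
    claim.challenger_bond = bond


def resolve(claim: Claim, claim_was_false: bool) -> Dict[str, float]:
    """Settle a challenge and return payouts.

    How claim_was_false gets decided (sensor cross-checks, spot inspections,
    a vote) is the genuinely hard part, and this sketch leaves it out.
    """
    if claim.challenger is None:
        return {}
    if claim_was_false:
        slashed = claim.operator_bond * SLASH_FRACTION
        claim.operator_bond -= slashed
        return {
            claim.challenger: claim.challenger_bond + slashed * REWARD_SHARE,
            "treasury": slashed * (1 - REWARD_SHARE),
        }
    # A failed challenge hands the challenger's bond to the operator,
    # so disputing honest work is not free.
    return {claim.operator: claim.challenger_bond}


c = Claim("robot-7", "op-A", operator_bond=200.0)
challenge(c, "verifier-1", 20.0)
print(resolve(c, claim_was_false=True))  # {'verifier-1': 45.0, 'treasury': 25.0}
```

Bonding both sides is the design choice worth noticing: it pays people to catch fakes while making frivolous challenges expensive.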

That’s a bold move, and it’s exactly where many systems start to strain. Verification markets have a habit of narrowing over time. The best-equipped participants, the ones with sharper tools and more resources, end up handling most of the oversight. If that circle shrinks too much, you haven’t really created decentralization. You’ve just dressed up a smaller group of gatekeepers in new language.

Another layer Fabric leans into, carefully but noticeably, is the idea of modular “skills.” The way they describe it, robots could load interchangeable skill modules, almost like installing apps. You can see the appeal. App ecosystems exploded because they let thousands of builders extend a single platform without reinventing the base every time.

But a robot isn’t a smartphone. If a phone app crashes, you roll your eyes and delete it. If a robotics skill fails, the consequences can be physical. Damage. Injury. Real costs. The moment you introduce a marketplace for robot capabilities, you inherit a heavy question that doesn’t disappear with good branding: who gets to decide what’s safe enough to deploy?

Fabric says the answer to all of this is “the network,” enforced by records, staking, and penalties. Part of me understands that logic. Another part of me keeps circling back to a tougher reality: when safety is on the line, people rarely wait for slow consensus. They want someone who can step in immediately. Networks are built for deliberation. Accidents move faster than governance. I wouldn’t be surprised if, in its early stages, Fabric ends up leaning more centralized than the word “open” suggests. Not because it wants to, but because letting everything float freely from day one can turn into chaos. The whitepaper seems aware of that tension, which is probably why it talks about gradual decentralization instead of pretending purity can be switched on overnight.

Then there’s the institutional layer, which is easy to overlook but hard to ignore once you think about it. Fabric places a Foundation at the center as a long-term steward, with the token issued through a separate entity beneath it. The legal framing is careful. The token isn’t equity. It doesn’t promise profit rights. That’s standard language in this space. Still, it highlights the balancing act. The project wants the token to be seen as a functional tool that powers bonding, rewards, and penalties. The market, inevitably, will also see it as something to trade. Holding those two narratives in the same hand without letting one distort the other is never simple.

If you read Fabric’s own material closely, you can feel it pulling in two directions at once. It stresses that rewards come from actual work done on the network, not from simply sitting on tokens. At the same time, it explains that protocol revenue may be used to purchase tokens on the open market. That creates a clear economic bridge between real usage and token demand, even if it’s framed carefully as utility rather than profit sharing. You don’t have to be suspicious to see why that gray area attracts attention. It’s exactly the kind of nuance regulators and critics tend to zoom in on.

So when someone asks me what Fabric actually is, I don’t think “robot blockchain” really captures it. That label feels too loose. It’s trying to define rules for how machines earn, how they prove what they did, and how they get punished when they fail. That’s more than infrastructure. It’s an attempt to build an accountability layer for physical work carried out by software and hardware that don’t argue back.

The accountability Fabric has in mind isn’t the kind that lives in marketing statements. It’s the kind that’s built into the rules of participation. If a robot wants to earn, there are conditions attached. If an operator lies or cuts corners, the system is structured so the penalty actually stings. The vision feels less like a casual gig platform and more like a licensed trade. You post collateral. You meet standards. If you mess up, there are consequences.

If it plays out the way they hope, the result could be meaningful. Hiring a robot wouldn’t mean taking a company’s internal logs at face value. Operators could carry a track record that follows them, instead of having their reputation locked inside whatever platform they started on.

If this unravels, it probably won’t be in some dramatic, headline-grabbing way. It will break in the obvious places. Verification might prove easier to manipulate than expected. Disputes could drag on long enough to frustrate everyone involved. Governance might slowly tilt toward a small circle of insiders. The bond system, meant to protect the network, could end up discouraging careful small operators while seasoned bad actors simply factor penalties into their operating costs.

Even with those risks, the central idea is difficult to wave away. Independent receipts for robot work, records that don’t belong to a single company or customer, feel less like a futuristic fantasy and more like a practical demand. It’s the kind of structure people start asking for the moment something goes wrong and no one can agree on what actually happened.

@Fabric Foundation #ROBO $ROBO