#BNBOTCKHAN阿拉法特 #OTCKHAN25 @Mira - Trust Layer of AI $MIRA #MIRA

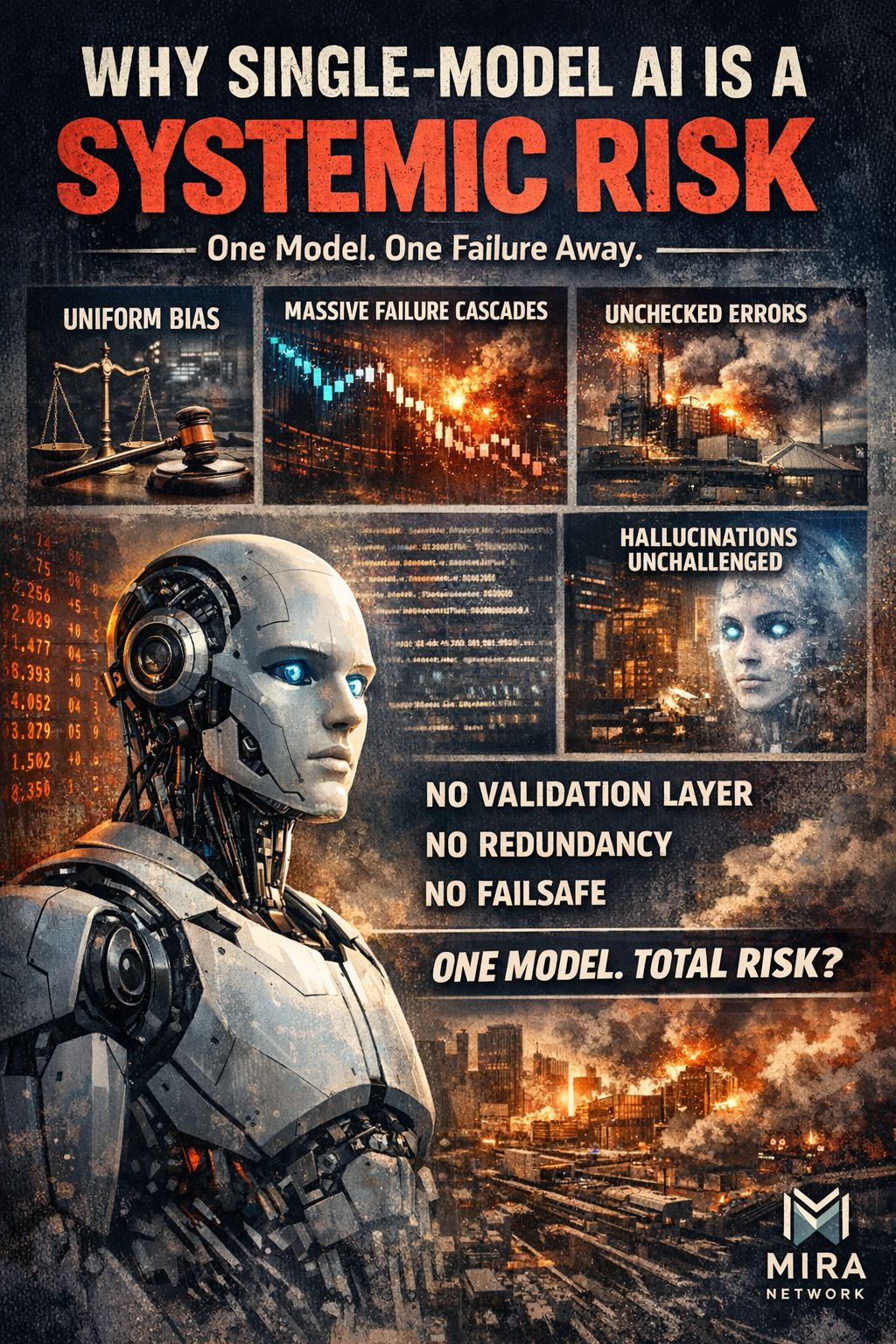

Most production AI today is effectively monoculture intelligence:

One model family

One training pipeline

One bias profile

One hallucination style

One silent failure surface

When that model is wrong, it’s wrong everywhere at once.

That’s not “intelligence at scale” — that’s error propagation at scale.

We already learned this lesson in other domains:

Single database = outage risk

Single server = downtime

Single firewall = breach risk

But AI?

We ship single-model systems into finance, healthcare, legal workflows, ops tooling, and just… hope the model behaves.

Hope is not a reliability strategy.
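To put a rough number on the redundancy lesson above, take a deliberately simplified setup. It is an assumption for illustration, not something measured here: a binary true/false claim, checked by $n = 3$ models that each err independently with probability $p$. One model alone is wrong with probability $p$. A majority vote is wrong only when at least two of the three err:

$$
P_{\text{majority wrong}} = 3p^2(1-p) + p^3
$$

With $p = 0.1$ this gives $3(0.01)(0.9) + 0.001 = 0.028$, versus $0.1$ for the single model. Real models often share training data and failure modes, so their errors are correlated and the real gain is smaller. That is exactly why a monoculture is the worst case: fully correlated checkers add nothing.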

Why This Is Structurally Fragile

A single-model AI has no native mechanism to:

Challenge itself

Detect contradictions

Validate factual claims

Pressure-test outputs

Detect bias in-context

So hallucinations aren’t “bugs” — they’re unopposed outputs.

In human systems, we solve this with:

Peer review

Committees

Adversarial debate

Red teams

Audits

In AI systems, we mostly do:

“The model said it, ship it.”

That’s wild if you think about it.

Multi-Model Consensus Is Not a Feature — It’s Infrastructure

What you’re describing with Mira Network is basically importing distributed systems logic into AI trust:

Single-model AI:

“Trust me, I’m smart.”

Consensus-validated AI:

“Trust us, we independently checked each other.”

That’s a paradigm shift:

| Old Trust Model | New Trust Model |
| --- | --- |
| Model authority | Network agreement |
| Probabilistic fluency | Verified claims |
| Centralized output | Distributed validation |
| Single failure surface | Redundant failure detection |

This is the same leap:

from single servers → cloud redundancy

from single authority → blockchain consensus

from perimeter security → layered defense

AI is just late to this party.
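The consensus idea in this section can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Mira Network's actual protocol: the `Verifier` type, the `consensus_verdict` function, and the `quorum` parameter are hypothetical names, and the verifiers are stand-in stubs rather than real model calls.

```python
from collections import Counter
from typing import Callable, Optional, Sequence

# Hypothetical verifier: takes a claim and returns a verdict label
# such as "true" or "false". In practice each one would wrap a different model.
Verifier = Callable[[str], str]


def consensus_verdict(
    claim: str,
    verifiers: Sequence[Verifier],
    quorum: float = 0.6,  # assumed threshold: share of verifiers that must agree
) -> Optional[str]:
    """Return the verdict backed by at least `quorum` of verifiers, else None."""
    if not verifiers:
        raise ValueError("need at least one verifier")
    # Each verifier judges the claim independently; count identical verdicts.
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    # No sufficiently large agreement means the claim stays unverified.
    return verdict if count / len(verifiers) >= quorum else None


if __name__ == "__main__":
    # Stub verifiers standing in for three independent models.
    stubs = [lambda c: "true", lambda c: "true", lambda c: "false"]
    print(consensus_verdict("Water boils at 100 C at sea level", stubs))
```

Returning `None` when no quorum is reached is a design choice: the caller has to handle "unverified" explicitly instead of receiving the most popular guess.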

Why This Becomes Existential at Scale

Once AI agents start:

Executing trades

Triggering contracts

Moving funds

Making medical triage calls

Acting autonomously

A single hallucination isn’t just a “bad answer.”

It’s a financial event, a legal event, or a safety event.

At that point:

Single-model AI isn’t innovation.

It’s operational risk concentration.

Your framing nails it:

Single-model AI = beta infrastructure

Consensus-validated AI = production infrastructure

That’s the difference between:

“It works in demos”

and

“It can safely run civilization-scale workflows”
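One way to picture the difference is a gate that sits between a model's proposed action and its execution. The sketch below is illustrative only: `Action`, `gated_execute`, and the `is_verified` callback are hypothetical names, and how a claim actually gets verified (for example, by multi-model agreement) is left to the caller.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Action:
    description: str          # e.g. "transfer funds to vendor"
    claims: Tuple[str, ...]   # factual claims the action depends on


def gated_execute(
    action: Action,
    is_verified: Callable[[str], bool],  # hypothetical verification hook
    execute: Callable[[Action], None],   # side-effecting step (trade, transfer, ...)
) -> bool:
    """Run `execute` only if every claim passes `is_verified`.

    Returns True if the action ran, False if it was blocked.
    """
    unverified = [claim for claim in action.claims if not is_verified(claim)]
    if unverified:
        # Fail closed: any unverified claim blocks the action.
        return False
    execute(action)
    return True
```

The gate fails closed, so an unverified claim stops the action rather than letting it through with a warning.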

Optional Tight One-Liners You Can Drop In

If you’re posting this publicly, these hit hard:

“Intelligence without verification becomes liability.”

“One model at global scale isn’t resilience — it’s monoculture risk.”

“AI hallucinations aren’t rare events. They’re unchallenged outputs.”

“Consensus is the missing trust layer of AI.”

“We don’t deploy single servers to run the internet. Why deploy single models to run decision-making?”

Big Picture

You’re not just talking about better AI.

You’re pointing at a missing layer of AI infrastructure:

A trust layer between probabilistic generation and real-world action.

That’s a foundational shift — the same category of upgrade as:

SSL for the web

Consensus for blockchains

Redundancy for cloud computing