Fabric Protocol makes more sense when you stop thinking about it as a crypto asset first and start treating it like an attempt to build an operating layer for robots that multiple parties can actually share. The project is basically saying: robots are going to be built by communities, companies, researchers, and independent operators at the same time, and we need a neutral way to coordinate who contributes what, who gets credit, who gets paid, and what rules constrain what the machines are allowed to do. That is the heart of it. Everything else only matters if that coordination works in the real world.

What I keep coming back to is that Fabric is trying to solve a kind of trust problem that robotics has avoided by staying closed. In the current model, a robot platform is usually one company, one stack, one set of policies, one set of logs, and one legal owner. Fabric is proposing the opposite direction: open participation, shared upgrades, and public accounting of what happened. It sounds clean on paper, but robotics is the place where clean architectures get messy fast. A robot does not just compute. It moves in environments that change, it reads sensors that drift, it makes mistakes that can damage property, and it creates liability the moment it interacts with people. So if Fabric wants to be taken seriously as an infrastructure layer, it has to show that it can handle that mess, not just describe a cleaner future.

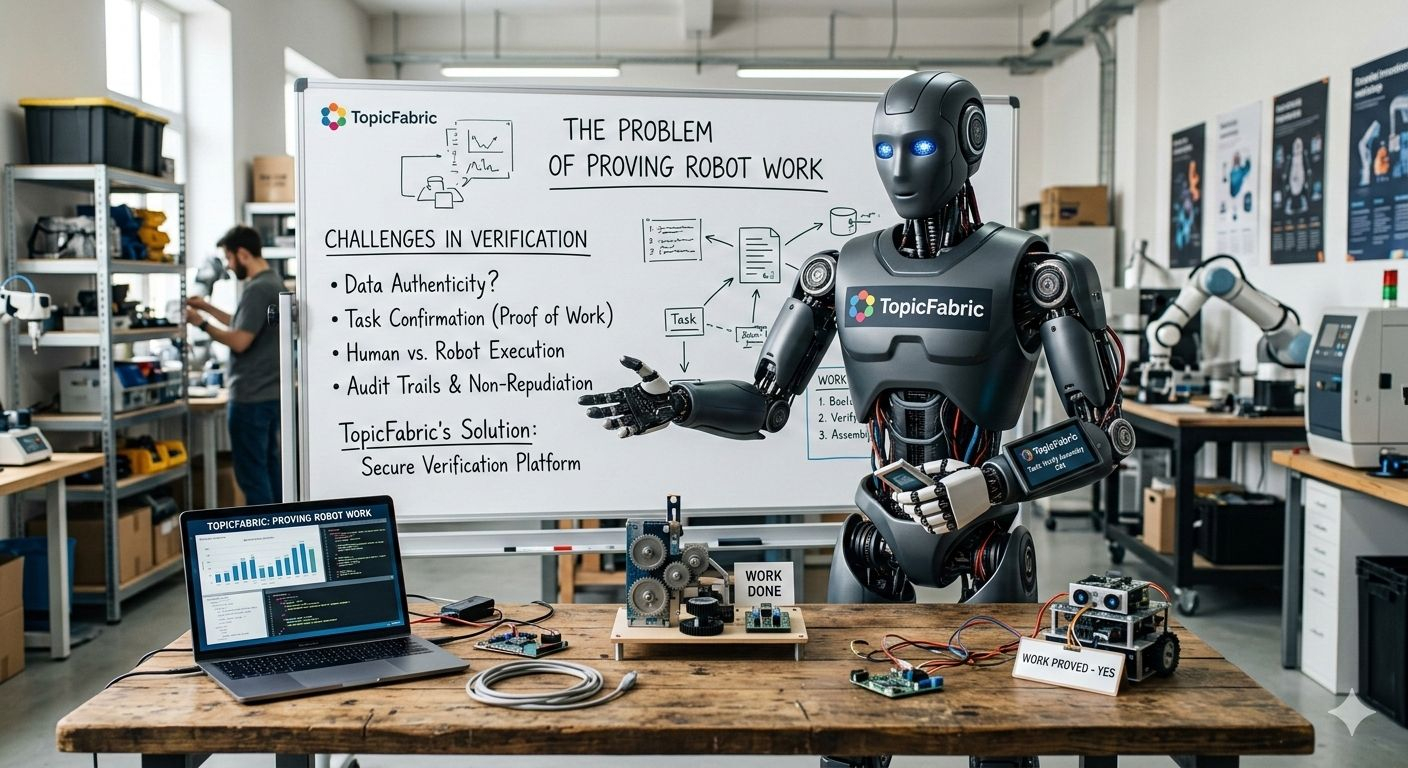

The project’s own description leans heavily on verifiable computing and agent-native infrastructure. The key promise inside those words is that the network can coordinate data, computation, and oversight through a public ledger, and that contributions can be measured in ways that do not rely on trust. That ambition is understandable. In a robot network, you do not want rewards going to whoever shouts the loudest or has the best connections. You want rewards tied to work that can be checked. The question is what checkable means when the “work” is physical.

Uploading a dataset is easy to verify. Providing compute is easy to verify. Recording that a robot accepted a task is easy to verify. But proving a robot actually completed a task correctly and safely is not easy in the same way. That is where these networks either become impressive, or they become a machine that pays people for generating receipts. This is not a minor detail. If the protocol rewards what is easiest to prove, it will slowly drift toward activity that looks productive without necessarily improving robotics in a meaningful way.

To understand what Fabric needs to get right, I think it helps to break the project into three concrete layers.

The first layer is identity. Fabric’s whole idea depends on robots and agents being addressable entities in the network. A wallet for a robot is trivial. The hard part is binding that wallet to a specific device, with a known operator, a known software stack, and some level of tamper resistance.

Without strong identity and attestation, you get the worst of both worlds: the network looks open, but it cannot reliably distinguish a real machine from an impersonation, and it cannot reliably tie behavior to accountability. If Fabric’s identity layer becomes strong, then the network can start to matter for governance, compliance evidence, and audit trails. If identity stays weak, then the ledger becomes a record of claims rather than a record of reality.
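To make the binding problem concrete, here is a minimal sketch of what tying a wallet to a device, operator, and software stack could look like. Everything in it is my own assumption for illustration: the `RobotIdentity` fields, the function names, and the use of a shared-secret HMAC as a stand-in for a hardware-backed signature. None of it comes from Fabric's documentation.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RobotIdentity:
    """Hypothetical identity record; field names are illustrative only."""
    wallet: str          # on-ledger address claimed by the robot
    device_serial: str   # identifier of the physical device
    operator: str        # party accountable for the device
    software_hash: str   # digest of the software stack it runs


def canonical_bytes(identity: RobotIdentity) -> bytes:
    # Sort keys so the same record always serializes to the same bytes.
    return json.dumps(asdict(identity), sort_keys=True).encode("utf-8")


def attest(identity: RobotIdentity, device_key: bytes) -> str:
    # Stand-in for a signature produced inside tamper-resistant hardware.
    return hmac.new(device_key, canonical_bytes(identity), hashlib.sha256).hexdigest()


def verify(identity: RobotIdentity, tag: str, device_key: bytes) -> bool:
    # Constant-time comparison against a freshly computed tag.
    return hmac.compare_digest(attest(identity, device_key), tag)


if __name__ == "__main__":
    key = b"secret-held-by-the-device"
    robot = RobotIdentity("0xabc", "SN-001", "operator-a", "sha256:1111")
    tag = attest(robot, key)
    print(verify(robot, tag, key))  # the unmodified record verifies
    swapped = RobotIdentity("0xabc", "SN-001", "operator-a", "sha256:2222")
    print(verify(swapped, tag, key))  # a changed software stack does not
```

The point of the sketch is the shape of the claim, not the cryptography. A real system would need asymmetric keys that never leave the device, and even then the attestation only says "this key signed this record," not "this robot behaved well."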

The second layer is verification. Fabric is positioning itself around verifiable work. In robotics, verification has to go beyond “did a node submit something” and toward “did the thing actually happen in the physical world, in the way the task required.”

That usually forces uncomfortable design choices. Either you build heavy mechanisms like hardware-backed attestations, redundant sensing, external validators, and dispute processes, or you accept that some tasks cannot be fully verified and you rely on trusted committees or reputational systems. Both directions can work in limited domains, but neither is free.

Trusted committees undermine the open network story. Reputation systems are notoriously gameable unless they are designed with strong penalties and anti-collusion measures. The more general-purpose the robot tasks are, the harder verification becomes.
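One way to picture the "external validators plus dispute process" option is a simple quorum rule. The sketch below is hypothetical: the `Report` type, the `settle` function, the three-report minimum, and the two-thirds threshold are all assumptions I chose for illustration, not anything Fabric specifies.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Report:
    """Hypothetical validator report on whether a task was completed."""
    validator: str
    task_completed: bool


def settle(reports, min_reports=3, threshold=Fraction(2, 3)):
    """Return 'accepted', 'rejected', 'disputed', or 'insufficient'."""
    # Count each validator once; a later report overrides an earlier one.
    latest = {r.validator: r.task_completed for r in reports}
    total = len(latest)
    if total < min_reports:
        return "insufficient"
    yes_share = Fraction(sum(latest.values()), total)
    if yes_share >= threshold:
        return "accepted"
    if 1 - yes_share >= threshold:
        return "rejected"
    # No supermajority either way: hand off to a dispute process.
    return "disputed"


if __name__ == "__main__":
    reports = [
        Report("validator-a", True),
        Report("validator-b", True),
        Report("validator-c", False),
    ]
    print(settle(reports))
```

Notice what this does not solve. If the three validators are controlled by the same party, the quorum is theater, which is exactly why the anti-collusion question matters more than the voting rule itself.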

The third layer is governance and oversight. Fabric talks about coordinating regulation via a public ledger. I interpret that less as “the ledger enforces laws” and more as “the ledger can hold the evidence trails that regulators, operators, and insurers might care about.” That is a reasonable use of a ledger. But it only becomes meaningful if the evidence is trustworthy, and if there is a clear incident response path when things go wrong.

Robotics does not give you the luxury of slow governance. If a task type turns out to be unsafe, if a model is misbehaving, if a particular operator is abusing the system, someone has to react quickly.

If governance is too centralized, that reaction can be fast but feel arbitrary. If governance is too decentralized, the reaction can be principled but too slow to matter. Fabric’s structure, being backed by a foundation, hints that the project expects an early phase where a smaller group can steer and stabilize the network. That can be the right call for a safety-sensitive system, but it also means the project should be judged on transparency and limits, not on slogans about openness.

ROBO sits inside this as the coordination asset: fees, governance, and incentives. Token mechanics are not automatically a red flag here because without incentives you do not get participation from data providers, compute providers, robot operators, and validators. The issue is whether incentives point people toward the right behavior.

If ROBO rewards can be farmed by simulating tasks or producing low-value verifications, then the network will attract the wrong kind of participation. If ROBO rewards require costly proofs and meaningful accountability, then participation will be slower, but the signal will be stronger. This is why I keep circling back to verification. In a robot network, verification design is not a feature. It is the entire product.
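The farming risk can be put in rough numbers. The sketch below uses a toy payoff model that is entirely my assumption: a cheater collects the reward if undetected and loses a posted stake if caught. Fabric's actual reward and penalty rules are not described here, so treat the function names and parameters as hypothetical.

```python
def expected_cheat_profit(reward, stake, detection_prob, proof_cost=0.0):
    """Expected profit from faking one task under the toy model.

    The cheater keeps `reward` with probability (1 - detection_prob),
    loses `stake` with probability detection_prob, and always pays
    `proof_cost` to produce whatever evidence the protocol demands.
    """
    if not 0.0 <= detection_prob <= 1.0:
        raise ValueError("detection_prob must be between 0 and 1")
    return (1.0 - detection_prob) * reward - detection_prob * stake - proof_cost


def min_stake_to_deter(reward, detection_prob):
    """Smallest stake that makes expected cheating profit non-positive."""
    if not 0.0 < detection_prob <= 1.0:
        raise ValueError("detection_prob must be in (0, 1]")
    return reward * (1.0 - detection_prob) / detection_prob


if __name__ == "__main__":
    # With weak verification, the stake needed to deter cheating grows fast.
    for p in (0.5, 0.25, 0.1):
        print(p, min_stake_to_deter(reward=10.0, detection_prob=p))
```

Even in this simplified model the relationship is clear: as the chance of catching a fake result falls, the penalty needed to make cheating unprofitable rises sharply. Weak verification cannot be patched with slightly larger penalties.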

One more thing that is easy to miss: the project’s ambition is “general purpose robots.” That phrase is attractive, but it is also where most systems become vague. General purpose in robotics is not one problem. It is a stack of domain-specific problems stitched together, each with different safety constraints and verification difficulty.

A careful approach would likely start narrow: tasks where the environment is controlled, success criteria are measurable, and proofs are realistic. If Fabric tries to jump too quickly into broad claims, the network will either become shallow or end up relying on centralized gatekeeping to prevent abuse. Neither outcome is fatal, but either would reshape what Fabric actually is.

So here is the honest, human read of Fabric as a project. There is a real thesis underneath it: robotics is moving toward multi-party development, and the missing layer is coordination with accountability.

A public ledger plus verifiable computation can help with accountability and incentives, but only if the system can tie identities to real machines and can verify outcomes in a way that resists gaming. If Fabric proves that it can do that, even in narrow domains, it becomes interesting infrastructure rather than just an idea. If it cannot, the network risks becoming a rewards system that tracks activity without reliably improving robotics.

Right now, the most important evidence is not token distribution mechanics or exchange listings. It is whether Fabric can show real integrations, real robot operators, and real task flows where verification is strict enough that cheating is expensive. If those pieces appear, you will see it in the form of technical interfaces, developer documentation that people actually use, and case studies where the network’s rules held up under pressure. If those pieces do not appear, the story stays conceptual, and the ledger becomes more of a narrative anchor than an operational backbone.

That is the line I would use to evaluate the project: does Fabric build a verification and accountability stack that can survive contact with real robots, real operators, and real incentives, or does it mostly measure what is convenient because the physical world is too hard to certify?