We talk a lot about AI taking over the world. But here's the uncomfortable truth that rarely gets said out loud: we still have no reliable, general-purpose way to know when an AI is telling the truth.

That's not a minor bug. That's a foundational crisis.

Every time you rely on an AI-generated output — for a medical decision, a legal document, a financial analysis — you're trusting a system that can hallucinate facts with complete confidence. No audit trail. No verification. No accountability. Just a black box that sounds convincing.

@mira_network was built to solve exactly this problem, and the approach is genuinely clever.

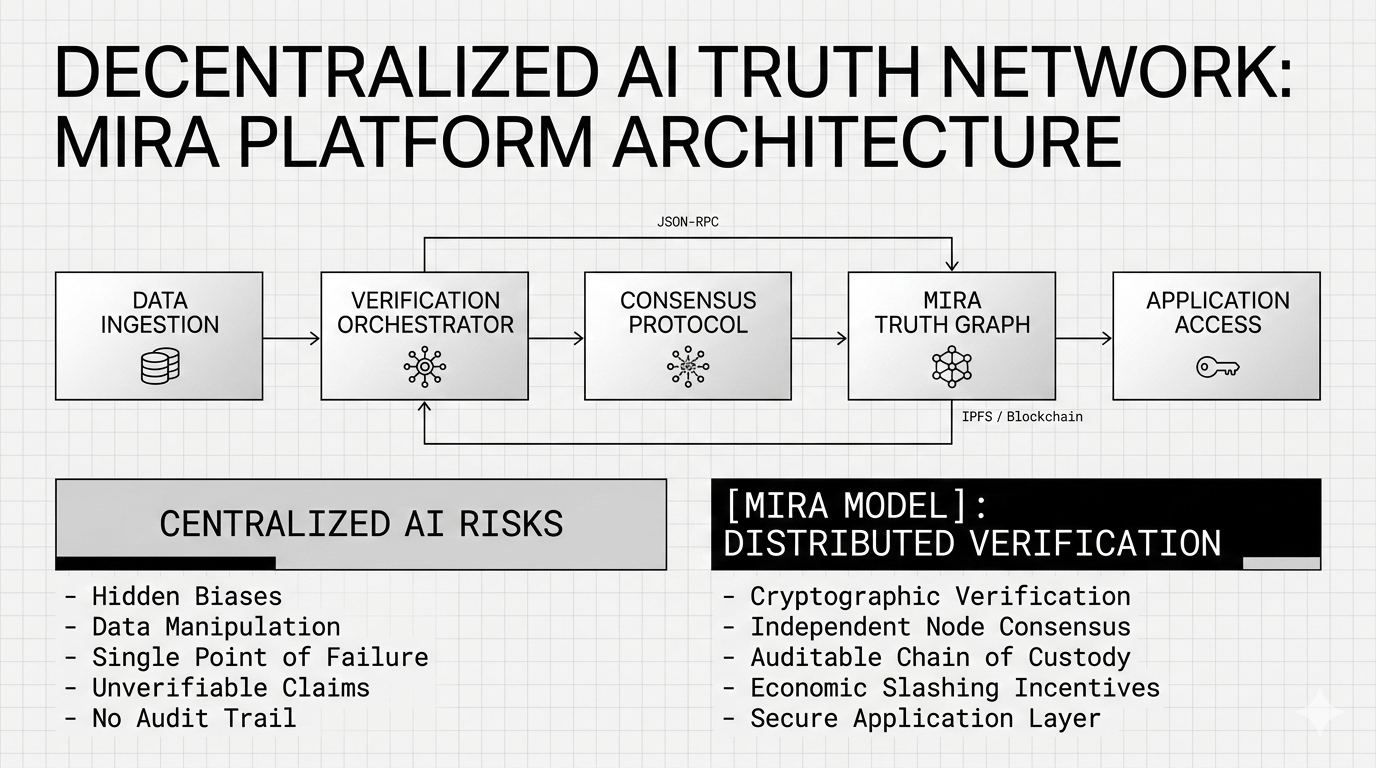

Instead of asking one AI model to verify another AI model (which is like asking a suspect to investigate themselves), Mira routes outputs through a distributed network of independent AI models that reach consensus on the truthfulness of individual claims. Think of it like a jury system — but for machine-generated information. Every response gets broken down into checkable sub-claims. Those claims are independently evaluated. Consensus determines what's verified. The result gets cryptographically certified on-chain.
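To make the jury analogy concrete, here is a minimal sketch of claim-level consensus. It is an illustration only, not Mira's actual implementation: the function names, the sentence-based claim splitting, the two-thirds threshold, and the SHA-256 digest standing in for an on-chain certificate are all assumptions made for this example.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A verifier stands in for one independent model: it takes a claim and votes True or False.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def claim_is_verified(claim: str, verifiers: List[Verifier],
                      threshold: float = 2 / 3) -> bool:
    """A claim passes when at least `threshold` of the verifiers vote True.

    The two-thirds default is an assumption chosen for illustration.
    """
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Evaluate every claim independently and record the consensus result."""
    return {
        claim: claim_is_verified(claim, verifiers, threshold)
        for claim in split_into_claims(response)
    }


def certificate_digest(results: Dict[str, bool]) -> str:
    """Hash the results deterministically.

    Hypothetical stand-in for the value a real system might anchor on-chain.
    """
    payload = json.dumps(results, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()
```

With two careful verifiers and one that approves everything, a false claim gets only one vote out of three and fails, which is the point of not trusting any single model.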

No single point of failure. No centralized gatekeeper. Just math and consensus doing what blockchain does best — creating trust without requiring trust.

The scale they've already reached is hard to ignore. Over 4 million users. 19 million queries processed weekly. 3 billion tokens of AI output (text tokens, not $MIRA) verified every single day. Applications like Klok, Learnrite, Astro, and Creato are already running on Mira's verification rails, and that's before the broader developer ecosystem gets fully activated.

$MIRA is the economic engine behind all of it. Token holders aren't passive spectators. Validators stake $MIRA to participate in the verification network, earning rewards for honest consensus and facing slashing penalties for bad behavior. This isn't just tokenomics for the sake of it. It's an incentive structure in which every validator has stake to lose, so dishonest verification carries a direct cost, and the design intent is a network that gets more secure the more it's used.
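The stake, reward, and slash loop can be sketched in a few lines. This is a toy model under stated assumptions, not Mira's published mechanism: the `Validator` class, the stake-weighted majority rule, the flat reward, the 10% slash fraction, and the choice to treat "voted against consensus" as the slashable behavior are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Validator:
    name: str
    stake: float  # amount of staked tokens at risk


def settle_round(validators: List[Validator], votes: Dict[str, bool],
                 reward: float = 1.0, slash_fraction: float = 0.1) -> bool:
    """Settle one verification round and return the consensus verdict.

    Assumed rules, for illustration only:
    - consensus is the verdict backed by more than half of the total stake
    - validators who matched consensus earn a flat reward
    - validators who did not lose `slash_fraction` of their stake
    """
    total_stake = sum(v.stake for v in validators)
    yes_stake = sum(v.stake for v in validators if votes[v.name])
    consensus = yes_stake * 2 > total_stake

    for v in validators:
        if votes[v.name] == consensus:
            v.stake += reward
        else:
            v.stake -= v.stake * slash_fraction
    return consensus
```

Even in this toy version the incentive is visible: a validator who keeps voting against the majority watches its stake shrink round after round, while honest participants slowly accumulate more.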

The $9M seed round from Bitkraft and Framework Ventures, with participation from Accel, Mechanism Capital, and Folius Ventures, was a serious signal that institutional money sees what retail hasn't fully priced in yet: AI verification infrastructure is not optional. It's the layer that has to exist before autonomous AI can operate in any high-stakes domain. Healthcare, law, finance, education — none of it works safely without it.

The question isn't whether a trust layer for AI will be built. It's who builds it, who controls it, and whether it's open or locked behind corporate walls.

Mira is betting on open. And with $MIRA, so can you.

Do your own research. Understand the risks. But don't ignore the signal.

#Mira #MIRA @Mira - Trust Layer of AI $MIRA