The "Institutional Trust" Narrative

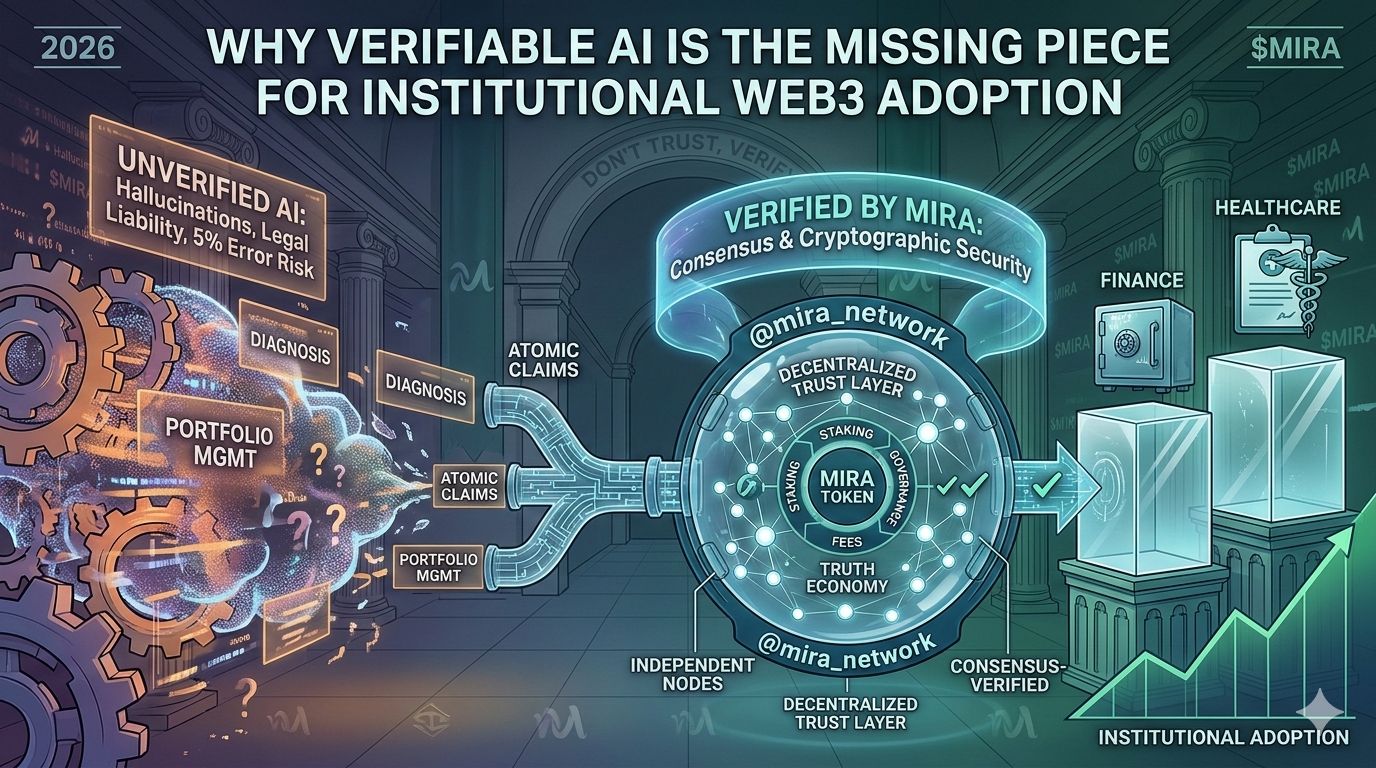

Title: Why Verifiable AI is the Missing Piece for Institutional Web3 Adoption

As we move further into 2026, the conversation around Artificial Intelligence has shifted from "what can it do?" to "how can we trust it?" For high-stakes industries like finance and healthcare, a 5% AI hallucination rate isn't just a minor bug; it's a massive legal and operational liability. This is exactly where @mira_network enters the frame as a critical infrastructure player.

By building a decentralized Trust Layer, Mira is effectively doing for AI what Chainlink did for DeFi data. The network doesn't just ask you to "trust" an output; it requires verification. Through its unique process of breaking down complex AI responses into "atomic claims" and verifying them via a decentralized network of independent nodes, @mira_network ensures that outputs are cryptographically secured and consensus-verified.
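To make the "atomic claims" idea concrete, here is a minimal Python sketch of claim-level consensus. It is an illustration under stated assumptions, not Mira's actual protocol or API: the names `split_into_claims`, `verify_response`, and `Verifier` are hypothetical, the sentence-based splitting is a naive stand-in for real claim extraction, and the two-thirds threshold is an assumed value chosen only for the example.

```python
from typing import Callable, Dict, List

# Hypothetical type: a verifier node takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_response(
    response: str,
    verifiers: List[Verifier],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented value
) -> Dict[str, bool]:
    """Map each claim to True if at least `threshold` of the verifiers accept it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        votes = sum(1 for verifier in verifiers if verifier(claim))
        results[claim] = votes / len(verifiers) >= threshold
    return results


# Toy setup: two careful verifiers and one that approves everything.
known_facts = {"Paris is the capital of France"}


def careful(claim: str) -> bool:
    return claim in known_facts


def careless(claim: str) -> bool:
    return True


report = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful, careful, careless],
)
for claim, accepted in report.items():
    print(f"{'ACCEPTED' if accepted else 'REJECTED'}: {claim}")
```

In this toy run the first claim is accepted by all three verifiers, while the second is rejected because only the careless verifier approves it. The point of checking claims one by one is that a single faulty node cannot push a false statement through on its own.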

The $MIRA token sits at the heart of this "Truth Economy." It’s used for node staking to secure the network, paying API fees for verification services, and driving governance. As autonomous AI agents begin to manage real-world assets (RWAs) and on-chain portfolios, the demand for a "Don’t Trust, Verify" protocol will only grow. In a world of deepfakes and hallucinations, #Mira is providing the transparency the industry desperately needs.