Let me tell you about the most underrated problem in tech right now.

Every week, hundreds of millions of people ask AI something important. They ask it to explain a diagnosis their doctor gave them. They ask it to draft a contract. They ask it to summarize a financial report that will influence a real decision with real consequences. And in almost every one of those cases, the AI answers in the same confident tone whether it's right or wrong.

That's not an edge case. That's the default behavior of every major AI system deployed today.

We built the most persuasive communication tools in human history before we built any way to verify what they're actually saying. And now we're surprised that people can't tell the difference between an AI that's accurate and one that's hallucinating with expert-level fluency.

This is the problem @mira_network decided to actually solve — not theorize about, not write a whitepaper around — actually solve, with working infrastructure processing real queries at real scale right now.

Here's what makes Mira's approach different from everything else claiming to fix AI reliability.

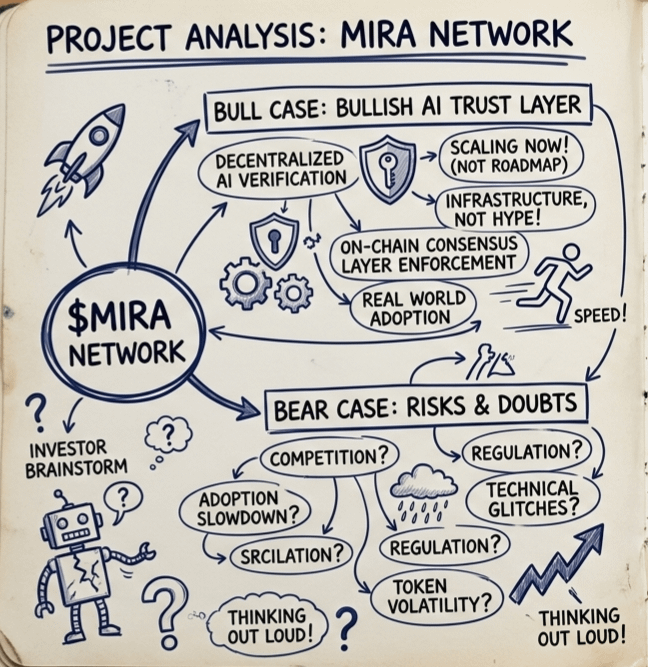

Most "AI verification" proposals fall into one of two traps. Either they use a single, centralized authority to fact-check AI outputs — which just replaces one black box with another — or they rely on human reviewers, which doesn't scale and introduces its own biases. Mira does neither.

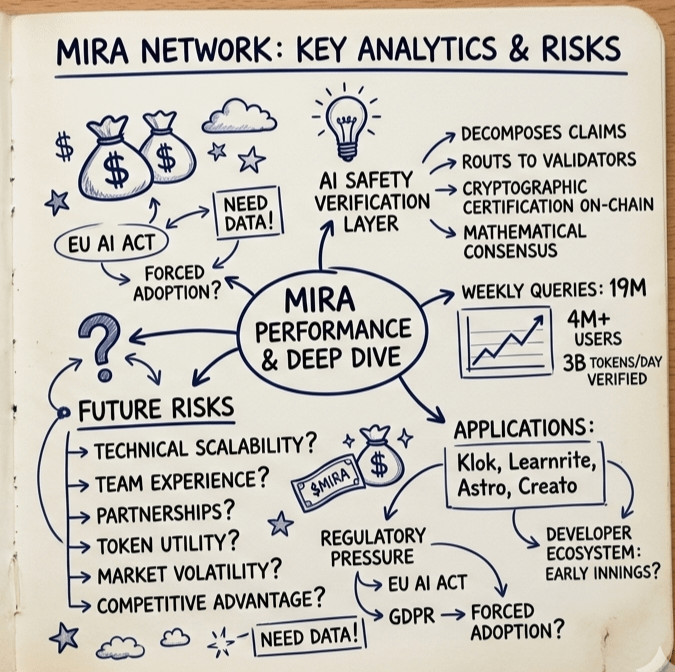

Instead, Mira built a distributed consensus network where independent AI models evaluate each other's outputs simultaneously. Every response gets decomposed into individual verifiable claims. Those claims get routed to multiple independent validator nodes running different models with different architectures and different training data. Consensus is reached by aggregating the validators' verdicts rather than by deferring to any single model. The verified result gets cryptographically certified and written on-chain.
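To make that pipeline concrete, here is a minimal sketch of the idea, not Mira's actual implementation. Everything in it is an assumption for illustration: the three toy validator functions stand in for independent models, the two-thirds threshold is a hypothetical choice, and a SHA-256 digest stands in for the cryptographic certificate that would be written on-chain.

```python
import hashlib
import json
from collections import Counter
from fractions import Fraction

# Hypothetical stand-ins for independent validator models: each takes a
# claim string and returns True (supported) or False (not supported).
def strict_model(claim):
    return "cheese" not in claim.lower()

def cautious_model(claim):
    return "cheese" not in claim.lower() and "always" not in claim.lower()

def lenient_model(claim):
    return True

def verify_claim(claim, validators, threshold=Fraction(2, 3)):
    """Collect one verdict per validator; accept the leading verdict only
    if its share of the votes meets the (assumed) threshold."""
    verdicts = [validate(claim) for validate in validators]
    verdict, votes = Counter(verdicts).most_common(1)[0]
    agreed = Fraction(votes, len(verdicts)) >= threshold
    return {
        "claim": claim,
        "verdict": verdict if agreed else None,  # None means no consensus
        "votes": votes,
        "total": len(verdicts),
    }

def certify(results):
    """Hash the results deterministically. This digest is only a stand-in
    for a real certificate; nothing here touches a blockchain."""
    payload = json.dumps(results, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

# A response already decomposed into individual claims (done by hand here).
validators = [strict_model, cautious_model, lenient_model]
claims = ["Water boils at 100 C at sea level.", "The Moon is made of cheese."]
results = [verify_claim(c, validators) for c in claims]
certificate = certify(results)

for r in results:
    print(r)
print(certificate)
```

The point of the sketch is the shape, not the details: the lenient validator gets outvoted on the second claim, and any change to a verdict changes the digest.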

No single model controls the outcome. No human bottleneck slows the process. No centralized gatekeeper decides what's true. Just distributed consensus doing what it does best — making trust an emergent property of the system rather than a feature you have to take someone's word for.

The numbers already coming out of this network are not small. Over 4 million users. 19 million queries processed every week. 3 billion tokens verified daily. Applications like Klok, Learnrite, Astro, and Creato are already built on top of Mira's verification rails, and the developer ecosystem is still in its early innings.

Now think about where this goes.

Autonomous AI agents are being deployed in healthcare workflows right now. AI is being used to generate legal documents that real people sign. Financial institutions are experimenting with AI-driven analysis that influences capital allocation. In every one of these domains, the cost of an undetected hallucination isn't an annoying chatbot response — it's a misdiagnosis, a flawed contract, a bad trade.

The regulatory pressure alone is going to force enterprises to adopt verification infrastructure. GDPR already restricts solely automated decision-making under Article 22. The EU AI Act is creating mandatory accountability requirements for high-risk AI systems. In the United States, sector-specific regulators in healthcare and finance are watching AI deployments closely. Every one of these pressures points in the same direction: someone needs to be able to prove that an AI output was checked.

Mira is building the infrastructure that makes that proof possible.

$MIRA is the economic layer that holds it all together. Validators stake $MIRA to participate in the consensus network — putting real economic skin in the game for every verification they perform. Honest consensus earns rewards. Dishonest behavior triggers slashing. This isn't a governance token that lives in a multisig wallet somewhere — it's an active incentive mechanism that makes the network more reliable as it grows, because the cost of attacking it scales with the value it secures.
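Here is a rough sketch of how a stake, reward, and slash cycle like that could be settled after one verification round. The numbers are purely hypothetical (a flat reward of 1 token and a 10% slash), and the `Validator` and `settle_round` names are made up for illustration. Mira's real parameters and contract logic are not described in this post.

```python
from dataclasses import dataclass

@dataclass
class Validator:
    name: str
    stake: int  # staked tokens, in whole units for simplicity

def settle_round(validators, votes, consensus, reward=1, slash_percent=10):
    """Reward validators whose vote matched consensus and slash the rest.
    The reward and slash_percent values are illustrative assumptions."""
    for v in validators:
        if votes[v.name] == consensus:
            v.stake += reward
        else:
            # Integer arithmetic keeps the toy balances exact.
            v.stake -= v.stake * slash_percent // 100
    return {v.name: v.stake for v in validators}

nodes = [Validator("a", 100), Validator("b", 100), Validator("c", 100)]
balances = settle_round(
    nodes,
    votes={"a": True, "b": True, "c": False},
    consensus=True,
)
print(balances)
```

Because the slash is proportional to stake while the reward is flat in this toy version, a validator with more at stake loses more for voting against consensus.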

The $9 million seed round from Bitkraft and Framework Ventures, with participation from Accel, Mechanism Capital, and Folius Ventures, wasn't venture capital chasing a narrative. These are funds that do deep technical diligence. They saw a working network, a real use case, and a token model that creates genuine demand as adoption grows.

Here's what I keep coming back to when I think about $MIRA.

Every transformative infrastructure layer in tech history looked boring from the outside while it was being built. TCP/IP wasn't exciting — until the internet ran on it. HTTPS wasn't a headline — until e-commerce required it. The verification layer for AI isn't going to make the front page of a tech blog today. But five years from now, when AI is embedded in every consequential workflow in medicine, law, and finance, the question of who built the trust infrastructure is going to matter enormously.

@mira_network is building that layer. In the open. With working technology. At measurable scale.

That's the kind of bet worth understanding — even if the mainstream hasn't caught up yet.

Do your own research. Assess the risks carefully. But don't let the quiet building fool you into thinking nothing important is happening here.

#Mira #MIRA @Mira - Trust Layer of AI $MIRA