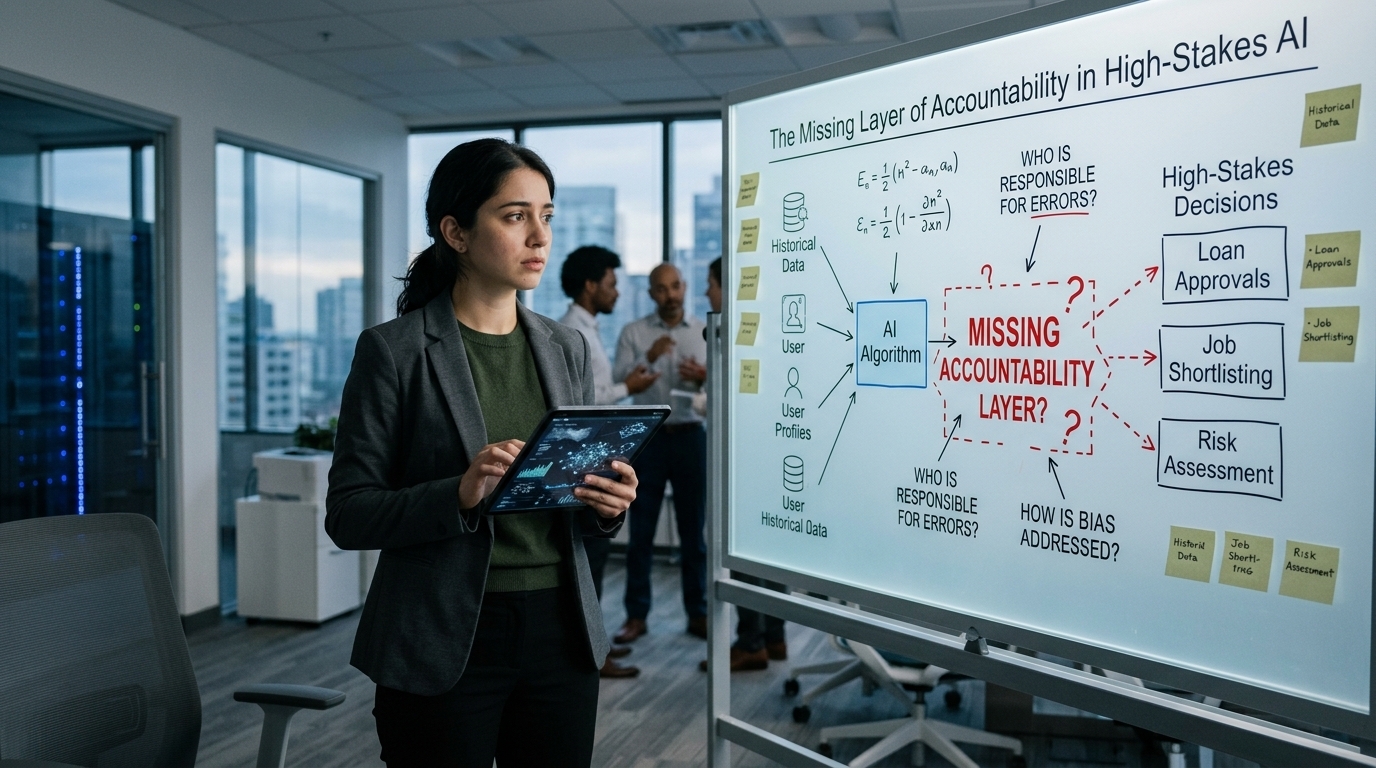

The AI industry has become very good at improving performance metrics. Models are faster, larger, more accurate. But there is one question that still sits in the background, unanswered: when an AI system causes harm, who is responsible?

Not in theory. In practice.

We are talking about responsibility that triggers investigations, regulatory action, financial penalties, and reputational damage. The kind that boards and compliance teams lose sleep over. Right now, there is no clean answer. And that uncertainty, more than model quality, cost, or integration complexity, is what keeps institutions cautious.

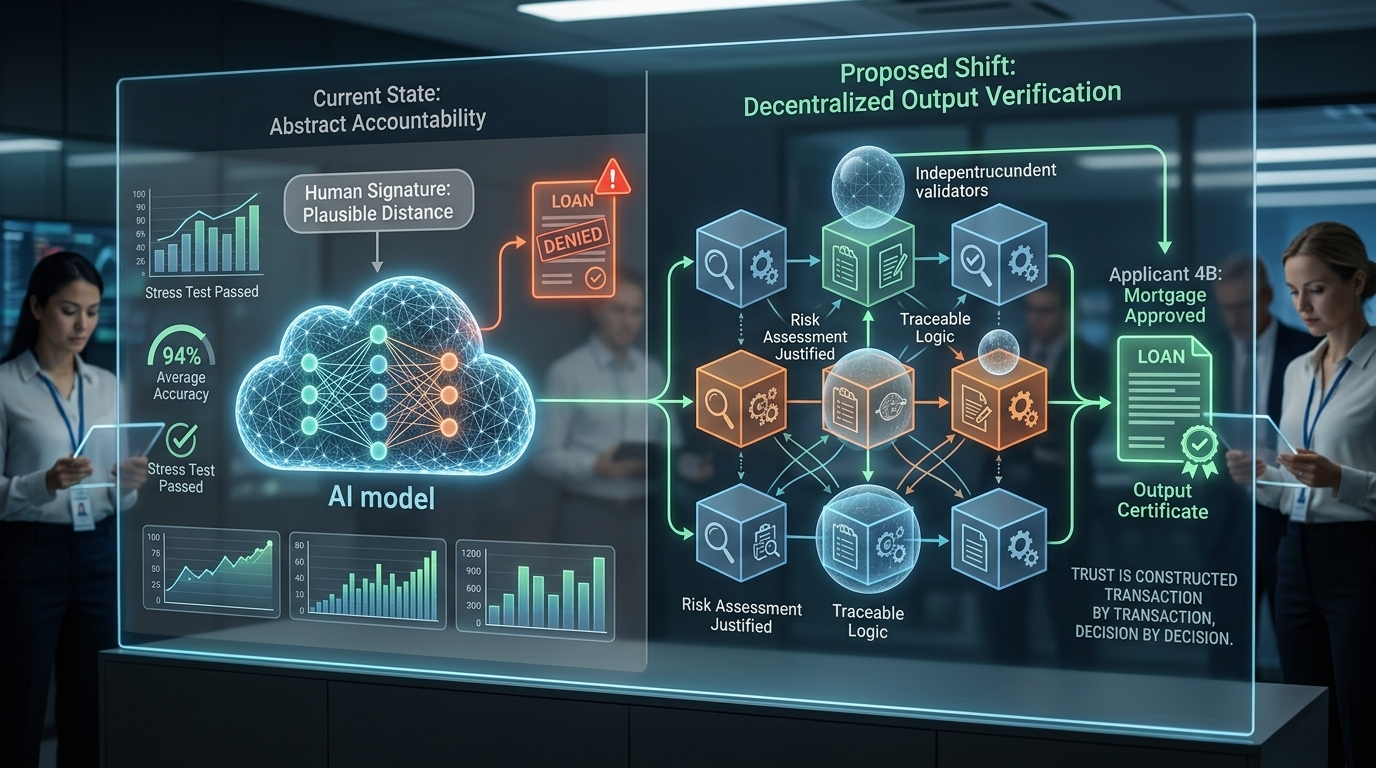

In sectors like credit scoring, insurance underwriting, and risk assessment, AI systems rarely “make” official decisions. They produce recommendations. A human signs off. On paper, the human is responsible.

But reality is more complicated. If an AI model has already filtered, ranked, and evaluated thousands of applications, the human reviewer is often confirming what the system has effectively decided. The organization gains the efficiency of automation while maintaining plausible distance from the outcome.

That grey zone is becoming harder to defend.

Regulators are tightening expectations. The European Union's AI Act, for example, places transparency, record-keeping, and human-oversight obligations on high-risk AI systems, which in practice means explainability, auditability, and traceability. The response from the industry has been predictable: model cards, bias audits, governance committees, explainability dashboards.

These tools are useful. But they do not solve the core problem.

They describe the model. They do not verify the output.

Most discussions about AI reliability focus on averages. A model is 94% accurate. It performs well on benchmarks. It passes stress tests. That sounds reassuring until you are in the 6% of cases where it fails. At a volume of 10,000 applications, to take an illustrative figure, that 6% is 600 wrong outcomes. When that failure affects someone’s mortgage, insurance claim, or freedom, averages lose their comfort.

High-stakes environments do not operate on statistical goodwill. They operate on records.

Auditors review specific decisions. Regulators examine individual cases. Courts assess particular outcomes. In those contexts, it matters less that a system is “generally reliable” and more that a specific output can be traced, reviewed, and justified.

This is where decentralized verification introduces a structural shift.

Instead of assuming a well-trained model will usually be correct, verification infrastructure evaluates outputs individually. Each result can be checked, confirmed, or flagged by independent validators. The emphasis moves from model-level trust to output-level accountability.
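
To make the idea of output-level checking concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration rather than a description of any real verification network: the names `VerificationRecord`, `fingerprint`, and `verify_output`, the two-thirds quorum, and the three toy validator rules are all hypothetical. It shows only the shape of the idea: one specific output is fingerprinted, each independent validator confirms or flags it, and the result is a record tied to that output rather than to the model.

```python
import hashlib
import json
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    CONFIRM = "confirm"
    FLAG = "flag"


@dataclass
class VerificationRecord:
    output_hash: str  # fingerprint of the exact output that was reviewed
    verdicts: dict[str, Verdict] = field(default_factory=dict)

    def status(self, quorum: float = 2 / 3) -> str:
        """Return 'verified', 'flagged', or 'pending' for this one output."""
        if not self.verdicts:
            return "pending"
        confirms = sum(v is Verdict.CONFIRM for v in self.verdicts.values())
        share = confirms / len(self.verdicts)
        return "verified" if share >= quorum else "flagged"


def fingerprint(output: dict) -> str:
    # Canonical JSON, so the same output always hashes to the same value.
    payload = json.dumps(output, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify_output(output: dict, validators: dict) -> VerificationRecord:
    """Ask each independent validator to check one specific output."""
    record = VerificationRecord(output_hash=fingerprint(output))
    for name, check in validators.items():
        record.verdicts[name] = Verdict.CONFIRM if check(output) else Verdict.FLAG
    return record


# Hypothetical validators: each is an independent rule applied to the output.
validators = {
    "range_check": lambda o: 300 <= o["score"] <= 850,
    "policy_check": lambda o: (o["recommendation"] == "approve") == (o["score"] >= 700),
    "id_check": lambda o: o["application_id"].startswith("A-"),
}

decision = {"application_id": "A-1", "score": 710, "recommendation": "approve"}
record = verify_output(decision, validators)
print(record.output_hash[:12], record.status())
```

The design choice worth noticing is that the record is keyed by a hash of the output itself. An auditor reviewing a single decision can recompute the hash and see which validators confirmed or flagged that exact result, which is the "this specific unit passed inspection" property rather than an average.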

The difference is subtle but powerful.

It is the difference between a manufacturer saying, “Our products are safe on average,” and attaching a certificate that says, “This specific unit passed inspection.” In regulated industries, that distinction changes everything.

Economic incentives further reinforce this structure. When validators are rewarded for accuracy and penalized for negligence, accountability becomes embedded in the system’s design. Responsibility is no longer abstract. It is distributed and economically enforced.
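
One way such incentives could be wired in is sketched below. This is a toy model under stated assumptions, not a description of any particular network: the idea that validators post a stake, the flat `reward`, the proportional `slash_rate`, and the existence of a later-established `ground_truth` (for instance, from a subsequent audit) are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # amount the validator has at risk (assumed mechanism)


def settle(pool, verdicts, ground_truth, reward=1.0, slash_rate=0.1):
    """Reward validators whose verdict matched the later-established outcome,
    and slash a fraction of the stake of those whose verdict did not."""
    for v in pool:
        if verdicts[v.name] == ground_truth:
            v.stake += reward
        else:
            v.stake -= v.stake * slash_rate
    return {v.name: v.stake for v in pool}


pool = [Validator("a", 100.0), Validator("b", 100.0)]
# Validator "a" confirmed the output, "b" flagged it; the output was later found correct.
balances = settle(pool, {"a": True, "b": False}, ground_truth=True)
print(balances)  # {'a': 101.0, 'b': 90.0}
```

Even in this simplified form, the point of the paragraph is visible: each validator's verdict on a specific output has a financial consequence, so responsibility attaches to identifiable parties rather than to the system in the abstract.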

Of course, this approach introduces trade-offs. Verification takes time. In environments where speed is critical, such as high-frequency trading, emergency response, and real-time fraud detection, the added latency can undermine adoption. If accountability mechanisms slow systems to the point of impracticality, institutions will bypass them.

Speed and responsibility must coexist.

There are also unresolved legal questions. If a verified output turns out to be wrong, who carries the liability? The institution deploying the system? The decentralized network? The individual validators? Until regulators clarify how distributed AI verification fits into existing liability frameworks, caution will remain.

Yet the direction of travel is clear.

AI is no longer confined to drafting emails or recommending content. It is being integrated into domains that affect money, rights, and opportunity. These domains already have accountability standards built over decades. AI systems will not be granted exemptions simply because they are complex.

Trust in high-stakes systems is not declared. It is constructed—transaction by transaction, decision by decision—through mechanisms that make responsibility visible when something goes wrong.

Performance alone is not enough. Transparency alone is not enough. Governance layers alone are not enough.

For AI to operate confidently in regulated, high-consequence environments, accountability cannot be optional or implied.

It has to be built into the infrastructure itself.