When I first started questioning reputation scores in a work network, it wasn’t because someone explained how they worked.

It was because the same operators kept landing the cleanest jobs.

Nothing in the documentation had changed. The system still described itself as open participation. Anyone with the right setup could submit work.

But over a few cycles something became obvious.

Certain operators were consistently getting tasks with lower dispute risk, cleaner verification paths, and predictable payout windows. Everyone else was technically participating — just not in the same lane.

At first people assumed it was luck.

Then someone pulled the activity logs, and the pattern became harder to ignore.

Operators with slightly stronger reputation histories were entering the assignment pool earlier. Not dramatically earlier. Just enough that by the time the queue reached everyone else, the safest jobs were already gone.
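Here is a minimal sketch of that dynamic, not anything taken from a real network's code. The `Operator` and `Task` types, the `reputation` and `dispute_risk` fields, and the "take the lowest-risk task left" rule are all assumptions I made up to illustrate the pattern in the logs.

```python
from dataclasses import dataclass


@dataclass
class Operator:
    name: str
    reputation: float  # hypothetical score; higher enters the pool earlier


@dataclass
class Task:
    task_id: str
    dispute_risk: float  # hypothetical; lower means a "cleaner" job


def assign_by_reputation(operators, tasks):
    """Operators enter in descending reputation order and each takes
    the lowest-risk task still available (an assumed selection rule)."""
    remaining = sorted(tasks, key=lambda t: t.dispute_risk)
    assignment = {}
    for op in sorted(operators, key=lambda o: o.reputation, reverse=True):
        if not remaining:
            break
        assignment[op.name] = remaining.pop(0)
    return assignment


# Three operators whose scores differ only slightly.
operators = [Operator("a", 0.92), Operator("b", 0.90), Operator("c", 0.88)]
tasks = [Task("t1", 0.30), Task("t2", 0.05), Task("t3", 0.15)]

result = assign_by_reputation(operators, tasks)
for name, task in result.items():
    print(name, task.task_id, task.dispute_risk)
# a t2 0.05
# b t3 0.15
# c t1 0.3
```

A four-point spread in score is enough to fix the entire ordering. Nobody is excluded, yet operator `c` never sees the cleanest job.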

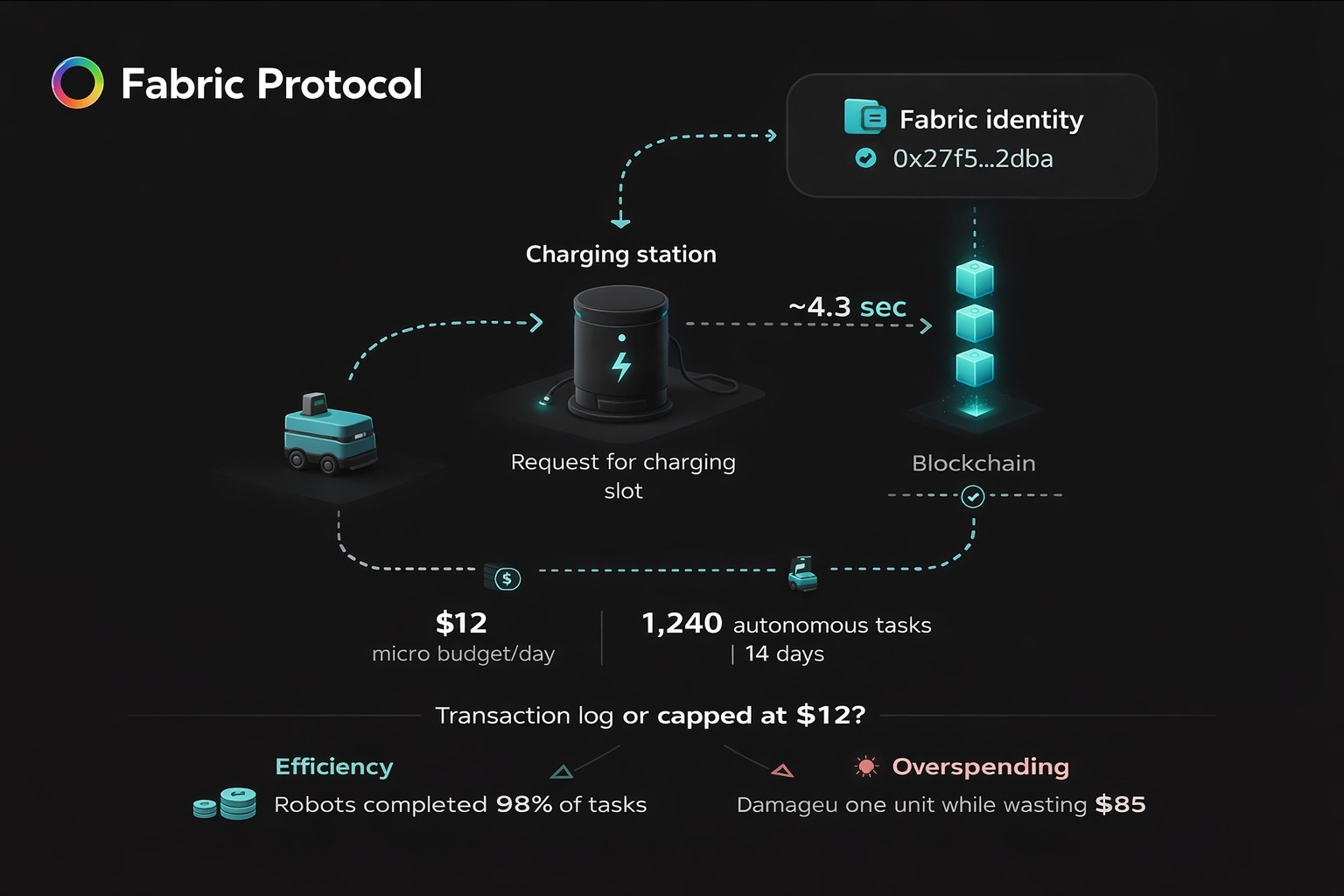

That’s the lens I’ve started using when I think about systems like Fabric.

Not robots.

Not throughput.

Reputation surfaces.

Because the moment a network introduces persistent identity and behavioral scoring, reputation stops being a passive metric.

It becomes an admission policy.

Most systems describe reputation as a feedback signal.

Complete tasks well, your score improves. Fail tasks, your score drops.

But once work begins flowing continuously, reputation starts doing something else.

It starts shaping who gets access to the best opportunities first.

And once opportunity distribution is tied to scoring, the score becomes a gate.

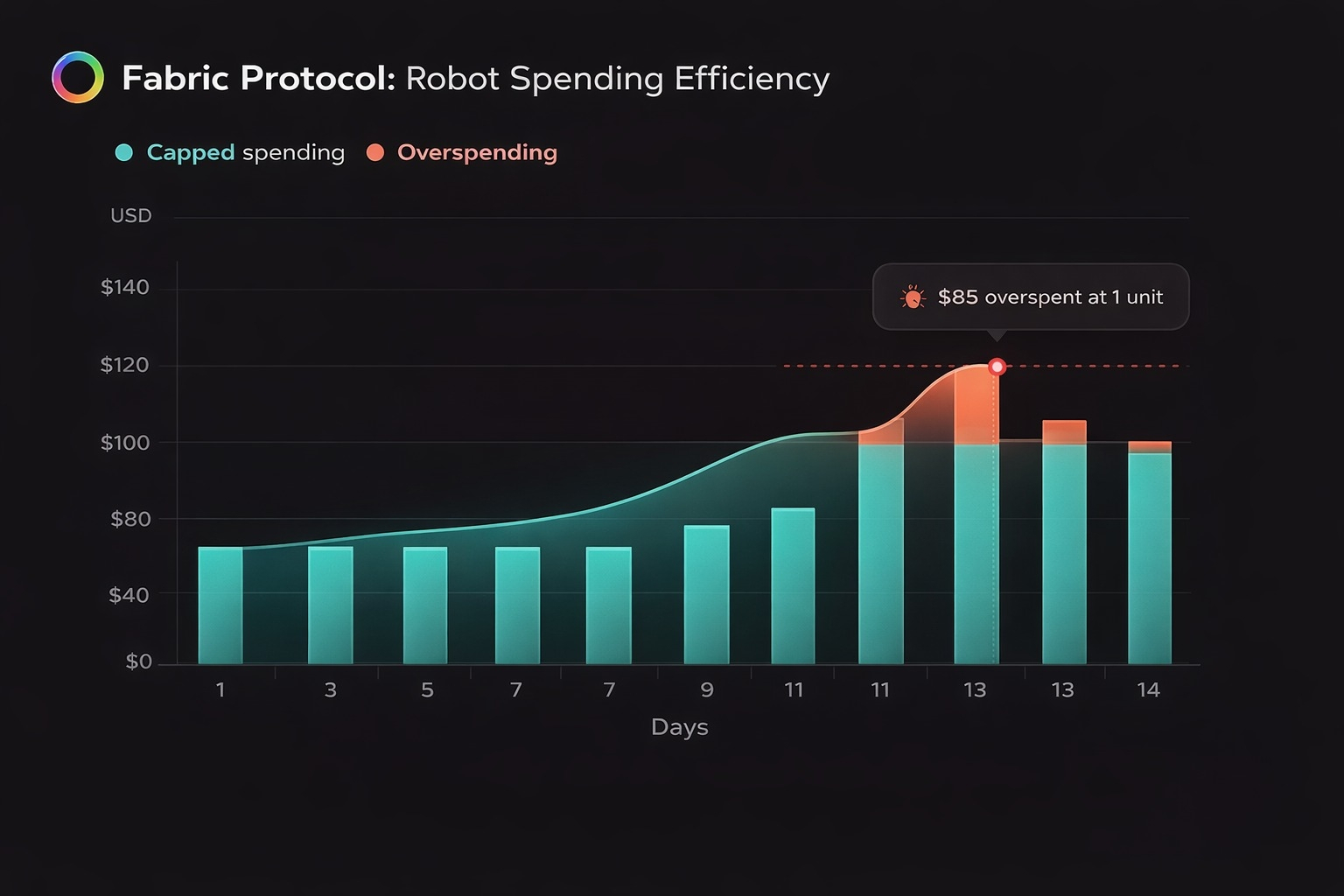

You can see the behavior change almost immediately.

Participants start protecting completion rate more than pursuing difficult work. Operators avoid tasks that might generate disputes, even if those tasks are economically valuable.

You even start seeing people skip perfectly profitable jobs simply because the dispute surface looks messy.

None of this requires manipulation.

It only requires a system where historical behavior influences future access.

Once that feedback loop forms, reputation stops acting like a record of performance and starts acting like a sorting mechanism.

High-scoring operators get first look at clean work. Lower-scoring operators inherit the leftovers: tasks with higher verification friction or lower margin.

The network hasn’t banned anyone.

It has just created lanes.

Over time those lanes stabilize.

Experienced operators learn how to protect their score. They cherry-pick work that keeps dispute rates low. They automate the workflows that maintain smooth histories.

The scoring system quietly trains them to behave this way.

Meanwhile, newcomers join the system technically eligible but practically late.

Not because they lack ability.

Because reputation compounds.
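A toy model makes the compounding visible. Everything in it is an assumption for illustration: two operators of equal ability, a small head start for the veteran, and made-up per-round score gains for clean versus messy work. Whoever has the higher score takes the clean task that round.

```python
def simulate(rounds, veteran=0.55, newcomer=0.50,
             clean_gain=0.02, messy_gain=0.005):
    """Return the (veteran, newcomer) scores after each round.

    Hypothetical rule: the higher-scored operator gets the clean task,
    which builds score faster than the messy task left for the other.
    """
    history = []
    for _ in range(rounds):
        if veteran >= newcomer:
            veteran += clean_gain
            newcomer += messy_gain
        else:
            veteran += messy_gain
            newcomer += clean_gain
        history.append((veteran, newcomer))
    return history


for round_number, (v, n) in enumerate(simulate(10), start=1):
    print(f"round {round_number}: veteran={v:.3f} "
          f"newcomer={n:.3f} gap={v - n:.3f}")
```

Both operators do equally good work every round. The gap still widens each cycle, because the only thing that differs is which task each one was allowed to reach first.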

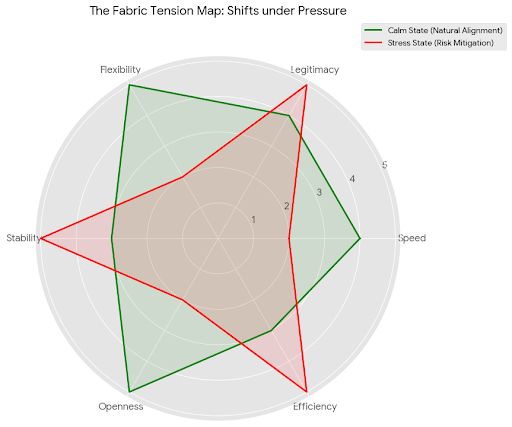

That’s where systems like Fabric face an interesting tension.

Reputation is necessary. Without it, networks struggle to filter unreliable operators.

But reputation is also a gravity well.

If scoring surfaces become too influential, open participation quietly turns into tiered access.

The network still looks open.

Opportunity just stops being evenly distributed.

That’s the part I’m watching with $ROBO.

Because the token isn’t just about payment for robotic work. It interacts with identity, reputation, and participation.

If reputation surfaces become too dominant, serious operators will optimize around protecting score rather than expanding capability.

And once that happens, the network stops selecting for the best operators.

It starts selecting for the safest ones.

The difference isn’t obvious early.

It appears later, when the system is busy.

Do high-reputation operators keep absorbing the best work, or does opportunity rotate?

Do newcomers have a realistic path to build reputation?

And when reputation scores rise across the network, does the system still differentiate performance — or does everything collapse into a small elite tier?

Because the moment reputation stops reflecting performance and starts controlling access…

it stops being feedback.

It becomes governance.