Reducing Systemic Bias Through Distributed Validation: The Mira Network Approach

As artificial intelligence systems become part of finance, healthcare, governance, and self-driving technologies, the issue of unfair bias in AI-generated content is becoming critical. Many modern AI models are trained on datasets that may carry historical, cultural, or structural biases. When these biases are built into model outputs, they can affect decisions at scale. Mira Network, a decentralized verification protocol built to address the reliability of artificial intelligence systems, tackles this issue through distributed validation and trustless consensus.

The Problem of Centralized AI Bias

Traditional AI systems typically rely on a single model or a centrally controlled evaluation framework. Even when multiple models are used, final decisions are often made by a single authority. This structure creates two risks:

* Model-Level Bias – One model’s training data and architecture may skew results.

* Institutional Bias – Centralized oversight may unintentionally reinforce particular perspectives or incentives.

Because verification is not distributed, there is limited opportunity to challenge or counterbalance biased outputs.

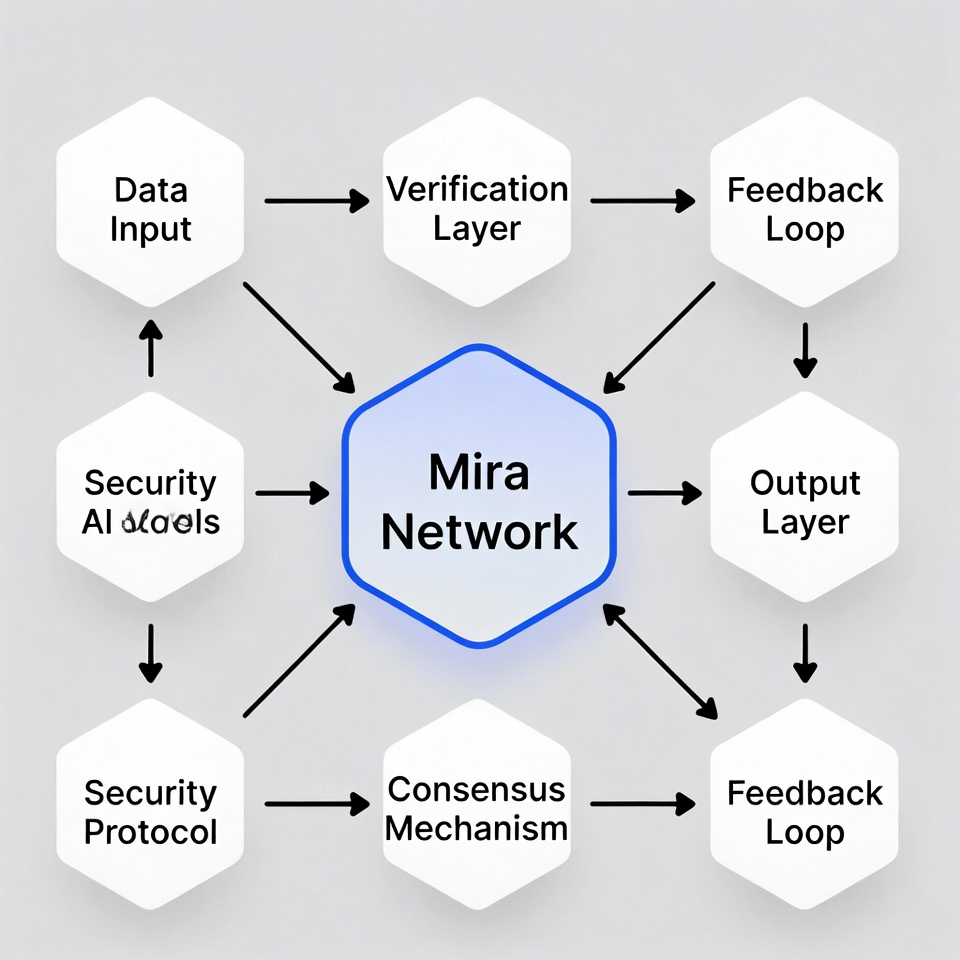

Mira’s Distributed Validation Architecture

Mira Network introduces a different approach. Instead of accepting an AI output as a single response, Mira breaks it down into structured, verifiable claims. These claims are then distributed across a network of independent validators and AI models.

Each validator assesses claims independently. Final validation is achieved through blockchain-based consensus rather than centralized approval. This architecture is designed so that no single model, dataset, or governing entity controls what is considered accurate.
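
The flow described above (split an output into claims, send each claim to independent validators, accept a verdict only when enough of them agree) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Claim` type, the sentence-based `split_into_claims` helper, the two-thirds threshold, and the toy validators are hypothetical and do not reflect Mira's actual data model, claim extraction, or consensus rules.

```python
from collections import Counter
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Claim:
    """Hypothetical container for one verifiable claim."""
    claim_id: str
    text: str


# A validator maps a claim to a verdict: True (valid) or False (invalid).
Validator = Callable[[Claim], bool]


def split_into_claims(output: str) -> List[Claim]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    sentences = [s.strip() for s in output.split(".") if s.strip()]
    return [Claim(f"c{i}", s) for i, s in enumerate(sentences)]


def reach_consensus(
    claim: Claim,
    validators: Dict[str, Validator],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not Mira's rule
) -> Optional[bool]:
    """Return the verdict backed by at least `threshold` of validators, else None."""
    if not validators:
        raise ValueError("at least one validator is required")
    votes = Counter(validate(claim) for validate in validators.values())
    total = sum(votes.values())
    for verdict, count in votes.items():
        if Fraction(count, total) >= threshold:
            return verdict
    return None  # no verdict reached the threshold


def verify_output(
    output: str, validators: Dict[str, Validator]
) -> Dict[str, Optional[bool]]:
    """Verify every claim in an output independently."""
    return {
        claim.claim_id: reach_consensus(claim, validators)
        for claim in split_into_claims(output)
    }


# Toy validators standing in for independent AI models.
validators: Dict[str, Validator] = {
    "model_a": lambda c: "always" not in c.text,
    "model_b": lambda c: "always" not in c.text,
    "model_c": lambda c: True,  # a lenient validator that accepts everything
}

results = verify_output(
    "Loan defaults correlate with income. Applicants from region X always default.",
    validators,
)
```

In this sketch the lenient validator is outvoted on the second claim, so its verdict alone cannot decide what is accepted. A claim on which validators split evenly returns `None` rather than being forced to a verdict.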

How Distribution Reduces Systemic Bias

Mira’s distributed validation process reduces bias in four ways:

* Diversity of Models and Validators

By distributing claims across multiple independent AI systems, the protocol reduces reliance on any single training dataset or algorithmic framework. Different perspectives tend to balance each other out.

* Consensus-Based Truth Formation

Truth is established through collective agreement rather than a single assertion. Biased evaluations are diluted when weighed against network consensus.

* Economic Incentives for Accuracy

Validators are incentivized to provide accurate assessments. Biased validation carries economic risk, while objective alignment with consensus is rewarded. This discourages manipulation and encourages rational behavior.

* Transparency and Auditability

All validation outcomes are recorded. This transparency enables auditing and analysis, allowing systemic bias patterns to be identified and addressed over time.
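
The incentive and auditability points can be sketched together. The example below is a simplified illustration, not Mira's actual mechanism: the reward and penalty amounts, the simple-majority rule, the in-memory hash chain standing in for on-chain records, and the names `AuditLog`, `settle_round`, and `disagreement_rates` are all assumptions.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AuditLog:
    """Append-only, hash-chained log (a stand-in for on-chain records)."""
    entries: List[dict] = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry_hash = self._digest(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if self._digest(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def settle_round(
    claim_id: str,
    votes: Dict[str, bool],
    stakes: Dict[str, float],
    log: AuditLog,
    reward: float = 1.0,   # assumed value
    penalty: float = 2.0,  # assumed value
) -> bool:
    """Settle one claim by simple majority, adjust stakes, and record the outcome."""
    yes = sum(1 for vote in votes.values() if vote)
    no = len(votes) - yes
    if yes == no:
        raise ValueError("tie: no majority verdict")
    consensus = yes > no
    for name, vote in votes.items():
        stakes[name] += reward if vote == consensus else -penalty
    log.append({"claim": claim_id, "votes": dict(votes), "consensus": consensus})
    return consensus


def disagreement_rates(log: AuditLog) -> Dict[str, float]:
    """Fraction of recorded rounds in which each validator opposed consensus."""
    rounds: Dict[str, int] = {}
    misses: Dict[str, int] = {}
    for entry in log.entries:
        record = entry["record"]
        for name, vote in record["votes"].items():
            rounds[name] = rounds.get(name, 0) + 1
            if vote != record["consensus"]:
                misses[name] = misses.get(name, 0) + 1
    return {name: misses.get(name, 0) / count for name, count in rounds.items()}
```

A validator that repeatedly votes against the majority loses stake in each round, and its disagreement rate stands out when the log is analyzed. That is the kind of pattern an auditor could investigate as possible systematic bias, or as a minority view that deserves a closer look.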

From Output to Verified Information

AI systems generate probabilistic responses. Mira transforms these responses into verified information by layering decentralized validation on top. Bias, which often thrives in centralized systems, becomes harder to sustain in a transparent, economically aligned, and distributed network.

Building a Fairer AI Infrastructure

As AI adoption grows, reliability must include not only accuracy but also fairness and neutrality. Mira Network’s distributed validation framework represents a structural approach to reducing bias. By decentralizing verification, aligning incentives, and enforcing consensus-based validation, Mira reduces the concentration of influence that enables bias to persist.

In doing so, Mira is not just improving AI reliability; it is building a more balanced and accountable AI ecosystem where trust emerges from transparent consensus rather than centralized authority.