Most people don’t think of themselves as fact checkers, but small habits suggest otherwise. Before buying a token, someone glances at the circulating supply. When a project announces funding, a few readers quietly search whether the number actually appears in the investor report. It’s rarely formal. Just small moments of doubt. A pause before believing something.

I’ve noticed this especially when reading long crypto threads. Someone posts an explanation, maybe about a new AI network or infrastructure token. The post gets attention quickly. Thousands of impressions within hours is normal on active days. Impressions simply mean the post appeared in people’s feeds, nothing more. But the interesting part happens in the comments. A reader asks where a statistic came from. Another questions whether the technical description matches the documentation. That quiet verification behavior already exists everywhere.

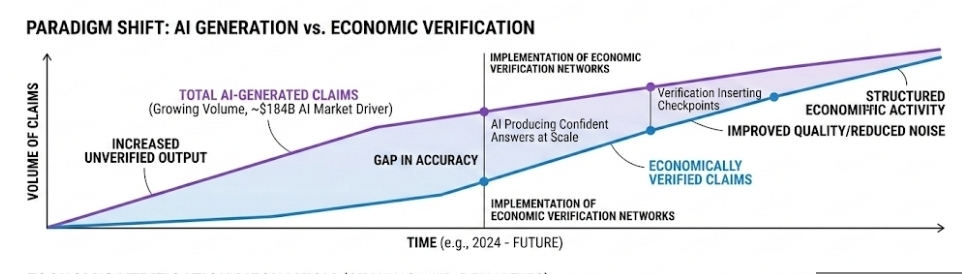

Now AI is producing explanations at a scale humans never did. Models summarize research papers, generate project breakdowns, even draft trading commentary. The surface experience feels efficient. Ask a question, get a confident answer. Underneath, the system works differently. The model is not checking facts in real time. It predicts what a correct answer might look like based on patterns in training data.

That gap between confident language and uncertain accuracy is where networks like Mira start to make sense.

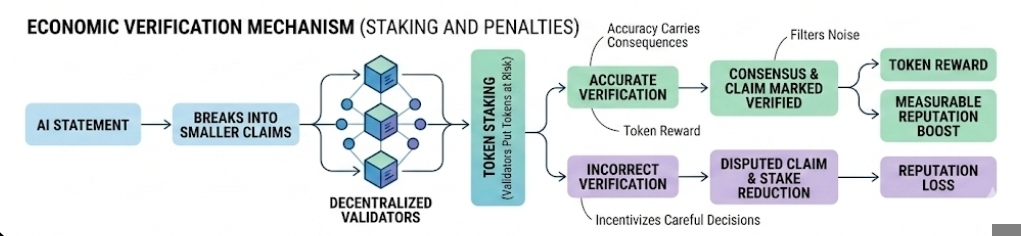

The idea is fairly simple if you look at it from a user’s perspective. AI generates a statement. The network breaks that statement into smaller claims. People inside the system check whether those claims are correct. That’s the visible part. What’s happening underneath is more economic than technical. Validators stake tokens and attach their reputation to the verification process. If their judgment proves wrong later, the system can penalize them financially.
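To make the first step concrete, here is a rough Python sketch of how a statement might be broken into smaller claims. It rests on my own assumptions. The `Claim` type and the `split_into_claims` helper are hypothetical. The one-claim-per-sentence rule is a naive stand-in, since a real network would probably use a model for this step. Project X and its details are made up.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str


def split_into_claims(statement: str) -> list[Claim]:
    # Naive heuristic (assumption): treat each sentence as one checkable claim.
    parts = re.split(r"(?<=[.!?])\s+", statement.strip())
    # Drop empty fragments, e.g. when the input is an empty string.
    return [Claim(i, p) for i, p in enumerate(parts) if p]


# Hypothetical AI-generated statement about a made-up project.
statement = ("Project X raised 20 million dollars in its seed round. "
             "Its mainnet launched in March. Validators earn 5% per year.")
for claim in split_into_claims(statement):
    print(claim.claim_id, claim.text)
```

Each printed line is one small claim that validators could check on its own, instead of judging the whole paragraph at once.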

That structure changes behavior. Suddenly accuracy carries consequences.

The demand side of this problem is growing quietly. The global artificial intelligence market was estimated at around 184 billion dollars in 2024. That number reflects spending on models, infrastructure, and enterprise adoption across industries. What matters here is not the size alone. It tells us something about output volume. When that much money flows into AI systems, the amount of generated information increases dramatically.

More generated information means more claims floating around.

Some of them are harmless. Others are not. A model might incorrectly summarize a funding round, misinterpret a technical upgrade, or exaggerate performance numbers. When those statements circulate across trading communities, small errors can spread surprisingly fast.

Markets react to information long before it gets verified.

A verification network tries to slow that process down just enough to insert a checkpoint. On the surface, users might only see a claim marked verified or disputed. Beneath that simple label sits a competitive system. Multiple participants review the same information independently. Over time, the network learns which validators consistently provide accurate judgments.
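Here is a minimal sketch of how several independent reviews could collapse into that single label. The stake-weighted vote and the two-thirds threshold are assumptions for illustration and are not documented rules of Mira. `Vote` and `label_claim` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    validator: str
    stake: float    # tokens the validator has staked (hypothetical units)
    supports: bool  # True if the validator judges the claim correct


def label_claim(votes: list[Vote], threshold: float = 2 / 3) -> str:
    """Stake-weighted supermajority (assumed rule): 'verified' only if the
    supporting stake reaches `threshold` of all stake that voted."""
    total = sum(v.stake for v in votes)
    if total == 0:
        return "disputed"  # no reviews yet, so nothing can be confirmed
    support = sum(v.stake for v in votes if v.supports)
    return "verified" if support / total >= threshold else "disputed"


votes = [Vote("alice", 100, True), Vote("bob", 50, True), Vote("carol", 40, False)]
print(label_claim(votes))  # 150 / 190 is about 0.79, above 2/3, so "verified"
```

Weighting by stake means a reviewer's vote counts in proportion to what they have put at risk. A real design might also weight by past accuracy.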

Reputation becomes measurable.
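Here is one simple way reputation could be measured, sketched in Python. Each validator's score is the smoothed share of their past judgments that matched how a claim was eventually resolved. `ReputationTracker` is hypothetical. The Laplace smoothing, which starts a newcomer at 0.5 instead of 0 or 1, is my choice for illustration and not something the network is known to use.

```python
from collections import defaultdict


class ReputationTracker:
    """Hypothetical reputation score: smoothed share of a validator's past
    judgments that matched the claim's final resolution."""

    def __init__(self) -> None:
        self.correct: dict[str, int] = defaultdict(int)
        self.total: dict[str, int] = defaultdict(int)

    def record(self, validator: str, judged_true: bool, resolved_true: bool) -> None:
        # Called once a claim's outcome is settled.
        self.total[validator] += 1
        if judged_true == resolved_true:
            self.correct[validator] += 1

    def score(self, validator: str) -> float:
        # Laplace smoothing: (correct + 1) / (total + 2).
        return (self.correct[validator] + 1) / (self.total[validator] + 2)


rep = ReputationTracker()
for judged, resolved in [(True, True), (False, False), (True, False)]:
    rep.record("alice", judged, resolved)
print(round(rep.score("alice"), 2))  # (2 + 1) / (3 + 2) = 0.6
print(rep.score("newcomer"))         # 0.5
```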

The interesting part is how this resembles trading psychology more than people expect. In trading, you constantly evaluate signals. Some traders trust momentum indicators. Others study order books or funding rates. Everyone is trying to filter noise from useful information. Verification markets apply that same instinct to factual claims rather than price movement.

Of course, incentives create their own problems. If rewards are tied to verification activity, some participants may rush through claims simply to collect fees. Decentralized networks have struggled with this before. When incentives prioritize volume, quality tends to slip.

The system design has to counter that.

This is where staking and penalties become important. Validators put their tokens at risk when they verify a claim. If they support incorrect information, their stake can be reduced. That financial pressure forces slower, more careful decisions. At least in theory.
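The staking argument is easier to see with a bit of arithmetic. The sketch below assumes a flat fee for judging a claim in line with its final resolution and a fixed percentage of stake slashed for getting it wrong. `settle`, `expected_gain`, and every number in it are hypothetical. With these made-up numbers, a careful validator who errs 2% of the time comes out ahead on each claim. One who rushes and errs 20% of the time loses money on each claim, so handling more claims only deepens the loss.

```python
def settle(stake: float, supported_claim: bool, claim_was_correct: bool,
           fee: float, slash_rate: float) -> float:
    """Return a validator's stake after one claim resolves (assumed rule):
    judging in line with the final outcome earns `fee`; judging against it
    loses `slash_rate` of the stake."""
    if supported_claim == claim_was_correct:
        return stake + fee
    return stake * (1 - slash_rate)


def expected_gain(error_rate: float, stake: float, fee: float, slash_rate: float) -> float:
    """Expected change in stake per claim for a validator who is wrong
    with probability `error_rate`, under the same rule as `settle`."""
    return (1 - error_rate) * fee - error_rate * stake * slash_rate


STAKE, FEE, SLASH = 1000.0, 2.0, 0.01  # hypothetical parameters
print(round(expected_gain(0.02, STAKE, FEE, SLASH), 2))  # careful: 1.76
print(round(expected_gain(0.20, STAKE, FEE, SLASH), 2))  # rushed: -0.4
```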

Another layer appears when content platforms enter the picture. On Binance Square and CreatorPad, visibility is heavily influenced by engagement depth. Impressions matter, but they are only the first signal. Saves, thoughtful comments, and reading time tell the ranking systems that people actually trust the content.

Writers learn this quickly.

Posts that include careful reasoning often receive fewer immediate likes but stronger long-term engagement. The algorithm seems to notice that pattern. Content with meaningful discussion tends to stay visible longer in feeds. It’s subtle, but you can see it if you watch performance over several weeks.

Verification systems may end up interacting with that same dynamic. If readers know claims are being checked economically somewhere in the background, trust in analytical content could shift slightly. Not dramatically. Just enough to change how information flows.

Still, I’m cautious about assuming these systems will work perfectly. Truth is complicated even without AI. Context matters. Data sources disagree. And sometimes what looks like a false claim today turns out to be correct later once new information appears.

Verification markets will have to live with that uncertainty.

What keeps the concept interesting is not the technology itself. It’s the possibility that accuracy might slowly become something markets compete over. If machines generate most of the statements and humans are paid to evaluate them, the act of checking information could evolve into a structured economic activity rather than the quiet, scattered habit people practice today.


Verification Markets: Could Mira Turn AI Truth-Checking Into a Competitive Economic System?

Disclaimer: The information provided here includes third-party opinions and/or sponsored content and does not constitute financial advice. See T&Cs.
