The first thing you begin to notice, after spending enough time around modern artificial intelligence systems, is not how impressive they are. It is how fragile the trust around them feels. The outputs look polished. The reasoning appears confident. Yet underneath that confidence sits an uncomfortable uncertainty: no one is entirely sure when the system is correct and when it is merely sounding correct. That gap between confidence and verification is where much of the tension in AI now lives.

Most people who work with AI regularly develop their own quiet coping strategies. They cross-check answers manually. They run the same question through multiple models. They keep a mental filter for statements that feel plausible but slightly off. Over time, using AI becomes less like consulting an oracle and more like interviewing a witness whose testimony must be verified. The tools are powerful, but they require constant supervision.

The deeper problem is structural rather than technical. Modern AI models generate language, not guarantees. Their training encourages coherence and probability, not provable correctness. In casual applications this limitation is tolerable. In environments that require reliability, such as finance, infrastructure, research, and automation, it becomes a fundamental barrier. Systems cannot safely make autonomous decisions when the underlying information cannot be independently verified.

It is from this quiet frustration that projects like Mira Network begin to make sense. Not as a sudden invention, but as a gradual response to a problem that many developers have been circling for years. The idea behind Mira does not begin with blockchain or consensus. It begins with a more basic question: how can a machine’s statement be treated less like an opinion and more like something that can be checked?

The design approach Mira takes feels less like building a smarter model and more like building a verification environment around models. Instead of asking one system to produce an answer and trusting its internal reasoning, the protocol breaks outputs into smaller, verifiable claims. Each claim can then be evaluated independently by other models across the network. What emerges is not a single answer, but a structured agreement process about what parts of an answer can actually be confirmed.

This shift sounds subtle at first, but it changes the role AI systems play in the ecosystem. A model is no longer expected to be right on its own. It becomes a participant in a broader process where statements must survive scrutiny from multiple independent evaluators. In practice, this moves AI closer to something resembling peer review rather than prediction.
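To make the idea of claim-level checking concrete, here is a minimal sketch in Python. It is not Mira's actual API: the names `split_into_claims`, `evaluate`, and `ClaimResult` are hypothetical, the sentence splitter is a naive stand-in for real claim extraction, and the two toy verifiers stand in for independent models.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier is any function that judges one claim.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]  # one vote per verifier, in verifier order


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def evaluate(answer: str, verifiers: List[Verifier]) -> List[ClaimResult]:
    """Ask every verifier about every claim, independently of the others."""
    return [
        ClaimResult(claim, [verify(claim) for verify in verifiers])
        for claim in split_into_claims(answer)
    ]


# Toy verifiers standing in for independent models (assumptions for illustration).
KNOWN_FACTS = {"Paris is the capital of France"}


def lookup_verifier(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient_verifier(claim: str) -> bool:
    return "Paris" in claim


results = evaluate(
    "Paris is the capital of France. Paris is the capital of Spain.",
    [lookup_verifier, lenient_verifier],
)
for result in results:
    print(result.claim, result.votes)
```

The point of the sketch is the shape of the output: the answer is no longer one blob to accept or reject, but a list of claims, each carrying its own set of independent judgments.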

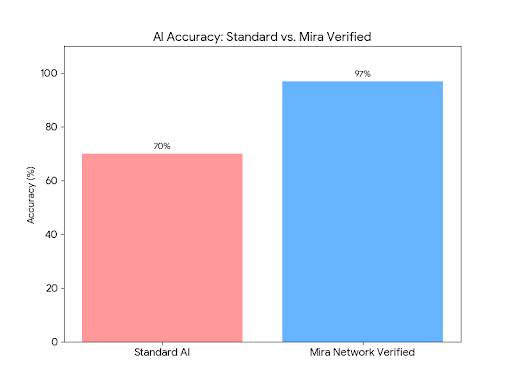

When watching early deployments of Mira’s verification process, what stands out is how differently users interact with AI when verification exists. In traditional workflows, users often treat AI outputs as drafts. They expect to rewrite, correct, and refine them manually. In a verified environment, the interaction becomes more structured. Users care less about the eloquence of an answer and more about whether its claims pass verification. Accuracy begins to replace fluency as the primary metric.

Early adopters of the system tended to be people who were already skeptical of AI outputs. Researchers, infrastructure engineers, and developers working with automation were among the first to experiment with it. They were not looking for faster answers. They were looking for answers they could safely rely on without rereading every sentence.

Later users approached the system differently. Many of them arrived because they had grown accustomed to AI tools but had also experienced their limitations. For these users, the value of verification was less philosophical and more practical. It reduced the mental overhead of constant checking. Trust, even partial trust, reduces cognitive load in a way that speed alone cannot.

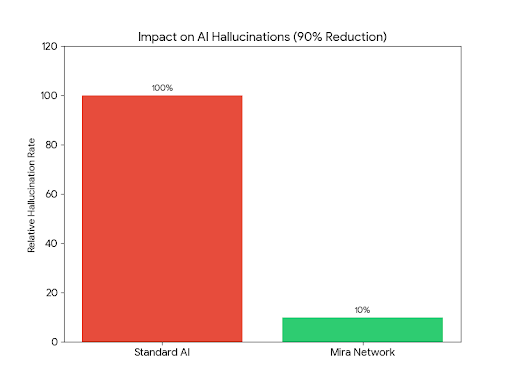

What becomes clear over time is that Mira is not primarily about improving models. It is about distributing doubt. Instead of trusting a single system completely, the protocol spreads responsibility across many evaluators. Each model checks pieces of information independently, and consensus emerges from the overlap between their judgments.

This design creates a different type of resilience. When a single model makes a mistake, the network does not collapse. The incorrect claim simply fails to achieve consensus. The system is built to tolerate disagreement and noise because its structure assumes that individual components will sometimes be wrong.
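A rough calculation shows why this tolerance is plausible. The sketch below assumes, purely for illustration, that every verifier errs independently with the same probability `p`, and it computes the chance that a simple majority of `n` verifiers is wrong at once. Both the independence assumption and the simple-majority rule are mine, not a description of how Mira forms consensus.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that more than half of n verifiers are wrong,
    assuming each errs independently with probability p."""
    needed = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(needed, n + 1)
    )


for n in (1, 5, 11):
    print(n, majority_error(n, 0.1))
```

With a 10% individual error rate, five verifiers are jointly wrong less than 1% of the time under these assumptions. The weak point is independence: models trained on similar data tend to make the same mistakes, so real-world gains are likely smaller than this idealized figure.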

A surprising side effect of this approach is how it influences the behavior of the models themselves. Systems that consistently produce unverifiable claims begin to lose influence within the network. Those that produce structured, checkable outputs become more valuable participants. Over time, this encourages a style of AI reasoning that prioritizes transparency and traceability.

The use of blockchain in this context often gets misunderstood. It is not there to make the system fashionable or speculative. Its purpose is to anchor verification records in a neutral environment where results cannot be quietly altered after the fact. Once a claim has been evaluated by the network, that evaluation becomes part of a permanent history. This history slowly becomes a public ledger of reliability.
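The property that matters here, that past evaluations cannot be quietly edited, can be illustrated without a full blockchain. The following is a minimal sketch of a hash-chained log in Python. The record layout and the names `append_record` and `verify_log` are hypothetical, and a real network would add signatures and distributed consensus on top of this.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(claim: str, verdict: str, prev_hash: str) -> str:
    """Hash a record together with the hash of the record before it."""
    payload = json.dumps(
        {"claim": claim, "verdict": verdict, "prev": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, str]], claim: str, verdict: str) -> None:
    """Append an evaluation that is chained to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "claim": claim,
        "verdict": verdict,
        "prev": prev_hash,
        "hash": _digest(claim, verdict, prev_hash),
    })


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and link; any edit to history breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        expected = _digest(record["claim"], record["verdict"], record["prev"])
        if record["prev"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


log: List[Dict[str, str]] = []
append_record(log, "Paris is the capital of France", "verified")
append_record(log, "Paris is the capital of Spain", "rejected")
print(verify_log(log))

log[1]["verdict"] = "verified"  # tamper with history after the fact
print(verify_log(log))
```

Because each record's hash covers the previous record's hash, changing an old verdict invalidates everything after it, which is what makes silent edits detectable.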

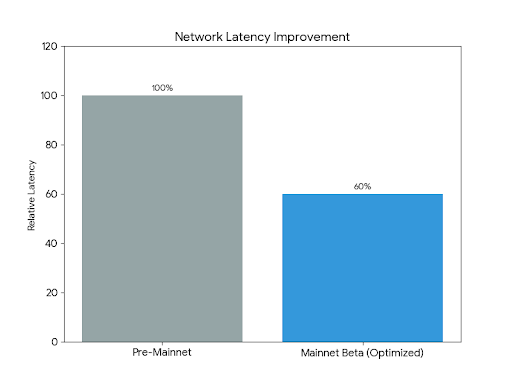

From a design perspective, the most interesting decisions in Mira are the ones that were deliberately postponed. The team resisted the temptation to support every possible type of AI output immediately. Instead, they focused on specific forms of verifiable claims where independent models could realistically reach agreement. This restraint may appear slow from the outside, but it reflects a deeper understanding that verification only works when the claims themselves are well structured.

Edge cases are where systems like this reveal their maturity. Ambiguous questions, subjective interpretations, and incomplete data all challenge the verification process. Rather than forcing consensus in these situations, Mira allows uncertainty to remain visible. Claims can remain unresolved when the network cannot reach reliable agreement. In many contexts, acknowledging uncertainty is safer than forcing a confident answer.
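One way to picture this is a resolution rule with three outcomes instead of two. The sketch below is illustrative only: the `Outcome` enum, the 80% agreement threshold, and the quorum of three votes are assumptions chosen for the example, not parameters taken from Mira.

```python
from enum import Enum
from typing import Sequence


class Outcome(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    UNRESOLVED = "unresolved"


def resolve(votes: Sequence[bool], threshold: float = 0.8, quorum: int = 3) -> Outcome:
    """Return a verdict only when agreement is strong; otherwise stay unresolved."""
    if len(votes) < quorum:
        return Outcome.UNRESOLVED  # too few evaluators to say anything
    yes = sum(votes)
    no = len(votes) - yes
    if yes / len(votes) >= threshold:
        return Outcome.VERIFIED
    if no / len(votes) >= threshold:
        return Outcome.REJECTED
    return Outcome.UNRESOLVED  # evaluators disagree; keep the doubt visible


print(resolve([True, True, True, True, True]))
print(resolve([True, True, True, False, False]))
print(resolve([True, True]))
```

The design choice worth noticing is that a split vote does not default to either side. Downstream applications can then decide for themselves how to treat an unresolved claim.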

Risk management in the protocol also extends to economic incentives. Participants who evaluate claims must have some stake in the accuracy of their judgments. If verification were free of consequence, models could flood the system with careless evaluations. The economic layer introduces accountability without requiring centralized oversight.

If the ecosystem eventually includes a token, its real significance will likely lie here. Not as a speculative asset, but as a coordination tool that aligns the incentives of verifiers, model providers, and application developers. Tokens in these environments work best when they represent responsibility rather than opportunity.
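How a stake might create accountability can be sketched in a few lines. Everything below is hypothetical: the `settle` function, the 10% penalty, and the rule that slashed stake is shared equally among verifiers who matched the final outcome are illustrative assumptions, not Mira's published mechanism.

```python
from typing import Dict


def settle(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    outcome: bool,
    penalty: float = 0.1,
) -> Dict[str, float]:
    """Slash verifiers who voted against the final outcome and
    split the slashed amount among those who voted with it."""
    winners = [name for name, vote in votes.items() if vote == outcome]
    losers = [name for name, vote in votes.items() if vote != outcome]
    updated = dict(stakes)  # leave the caller's balances untouched
    pot = 0.0
    for name in losers:
        cut = stakes[name] * penalty
        updated[name] -= cut
        pot += cut
    if winners:  # guard against division by zero
        for name in winners:
            updated[name] += pot / len(winners)
    return updated


balances = settle(
    stakes={"a": 100.0, "b": 100.0, "c": 100.0},
    votes={"a": True, "b": True, "c": False},
    outcome=True,
)
print(balances)
```

Under these assumed rules the total stake is conserved and simply moves from careless evaluators to careful ones, which is the sense in which a token can represent responsibility rather than opportunity.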

Community trust in Mira has developed slowly, mostly through observation. Developers who integrate the protocol begin to see how it behaves under stress. They watch how disagreements are resolved, how quickly consensus forms, and how the system handles conflicting evidence. Trust grows not because of announcements, but because the system behaves predictably over time.

One of the more subtle indicators of the protocol’s health is retention among developers who build on top of it. Many verification systems attract initial curiosity but lose users once the integration costs become clear. Mira’s long-term viability will depend on whether teams continue to use it after the novelty fades.

Integration quality also reveals something deeper about the protocol’s trajectory. When tools begin to appear that treat Mira verification as a background layer rather than a visible feature, it suggests the system is moving toward infrastructure status. Infrastructure rarely announces itself. It becomes invisible precisely because it works consistently.

Usage patterns are beginning to hint at this shift. Instead of asking whether a model is “correct,” developers start asking whether a claim is “verified.” That small linguistic change reflects a larger philosophical shift in how information systems are evaluated.

The transition from experiment to infrastructure is rarely dramatic. It usually happens gradually, as more systems begin to rely on the same underlying mechanism without thinking about it. The internet itself evolved this way, through protocols that quietly solved coordination problems no single organization could manage alone.

Mira’s long-term significance will depend less on technological novelty and more on discipline. Verification networks must remain conservative about what they claim to prove. Expanding too quickly into areas that cannot be reliably verified would undermine the credibility the system is trying to build.

If that discipline holds, the project may eventually occupy a role that feels almost mundane: a background layer that quietly checks the claims generated by AI systems before they reach decisions that matter. Most users might never interact with it directly.

But in a world increasingly shaped by automated reasoning, the difference between believable information and verified information will only grow more important. Systems that can bridge that gap without demanding blind trust may end up becoming some of the most quietly essential infrastructure in the AI ecosystem.

And if Mira continues to evolve with patience, prioritizing reliability over speed and verification over spectacle, it may slowly become one of the mechanisms that allows artificial intelligence to move from interesting tool to dependable collaborator. Not through grand promises, but through the steady accumulation of proof.
