Most people think AI’s biggest problem is intelligence. In reality, the bigger problem is trust.

For years, the AI conversation has focused on making models bigger, faster, and smarter. But intelligence alone has never guaranteed reliability. Large models generate answers based on probabilities, not certainty. Most of the time, the results look convincing. Occasionally, they are quietly wrong.

Not dramatically wrong, just subtly off. A citation that never existed. A financial insight built on a misread number. A legal explanation missing a critical clause.

These small cracks rarely explode instantly. Instead, they slip quietly into tools, workflows, and decisions. When AI begins interacting with financial systems, smart contracts, or autonomous agents, even a small mistake can cascade into something larger.

This is why the future of AI reliability may not come from building a single perfect model. It may come from designing systems that question AI itself.

Instead of treating an output as the final answer, a different approach is emerging: treat every AI response as a claim that needs verification.

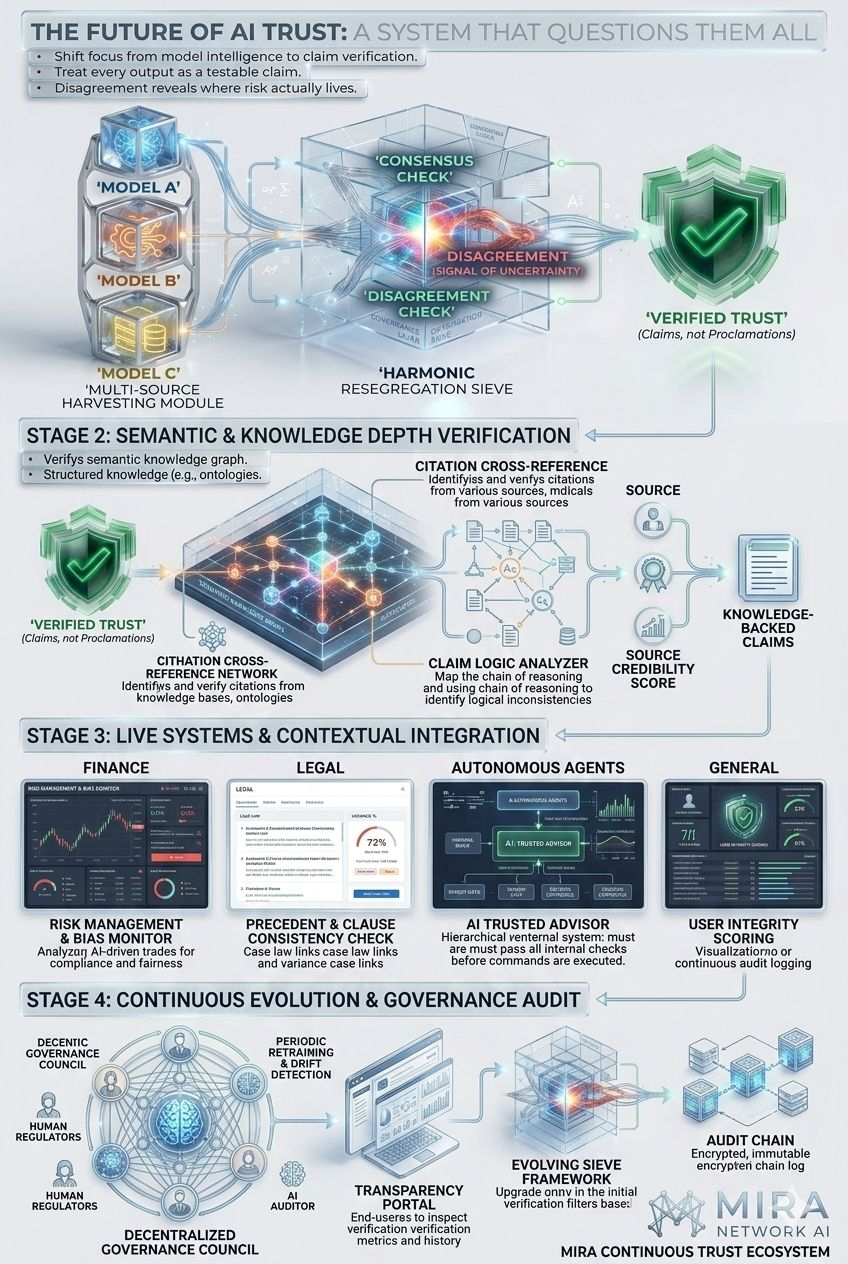

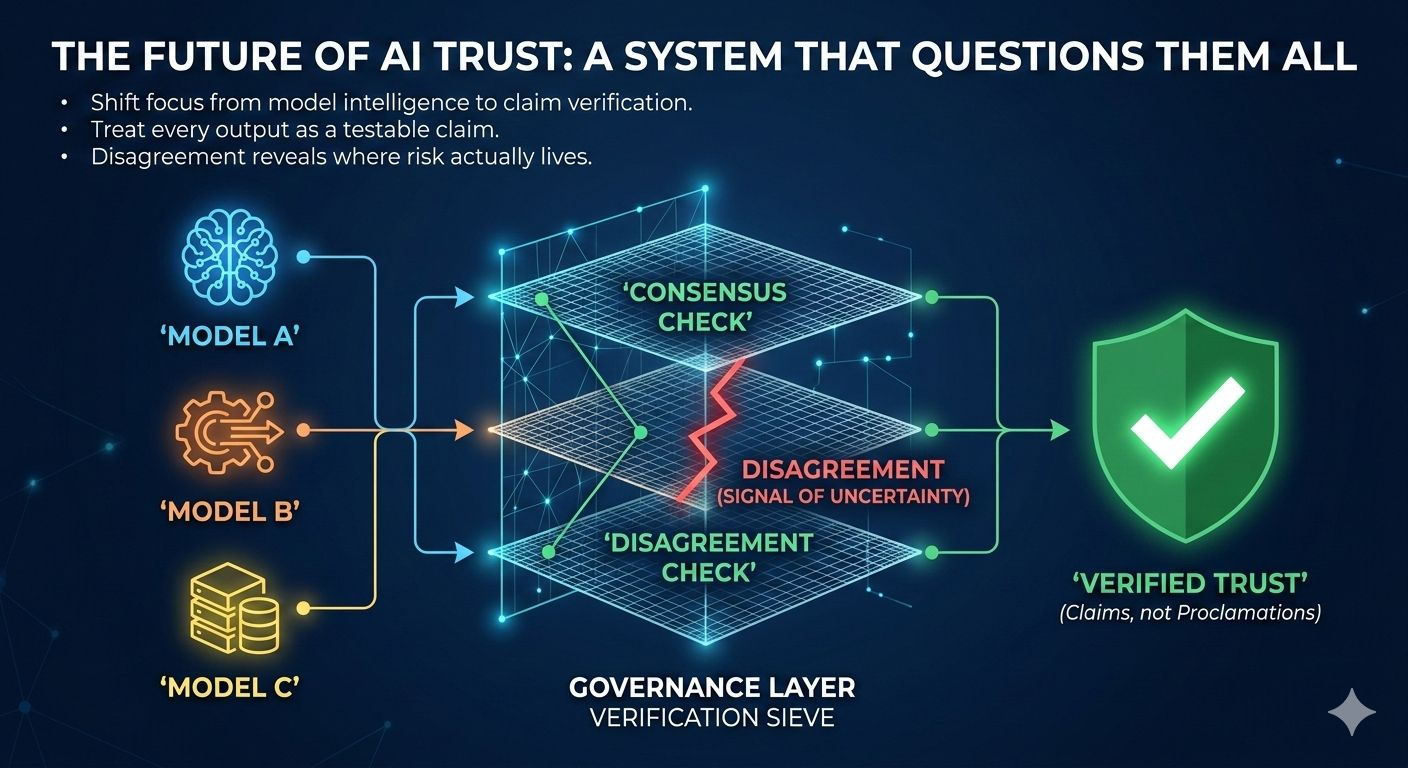

This is where Mira introduces a fascinating shift. Rather than placing trust in one model’s authority, Mira creates a multi-model governance layer where independent AI systems evaluate the same claim. Each model brings different training data, reasoning paths, and architectural biases. Agreement becomes meaningful, but disagreement becomes even more valuable because it reveals where uncertainty actually lives.
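To make the idea concrete, here is a minimal sketch of how several independent verifiers might vote on one claim. It is not Mira's actual API: the `Verifier` type, the verdict labels, and the stub models `model_a`, `model_b`, and `model_c` are all assumptions made up for illustration.

```python
from collections import Counter
from typing import Callable, Dict

# Hypothetical verifier: takes a claim, returns "support", "refute", or "unsure".
Verifier = Callable[[str], str]


def evaluate_claim(claim: str, verifiers: Dict[str, Verifier]) -> dict:
    """Collect one verdict per verifier and summarize agreement."""
    votes = {name: verify(claim) for name, verify in verifiers.items()}
    counts = Counter(votes.values())
    verdict, top_count = counts.most_common(1)[0]
    return {
        "verdict": verdict,
        # Share of verifiers that back the leading verdict.
        "agreement": top_count / len(votes),
        # Verifiers that disagreed: this is where uncertainty shows up.
        "dissenters": sorted(n for n, v in votes.items() if v != verdict),
    }


# Stub verifiers standing in for independent models (illustrative only).
stub_verifiers = {
    "model_a": lambda claim: "support",
    "model_b": lambda claim: "support",
    "model_c": lambda claim: "refute",
}

result = evaluate_claim("Revenue rose 12% in Q3.", stub_verifiers)
print(result)
```

The dissenting list matters as much as the verdict. A claim that two of three verifiers support is a different kind of answer from one that all three support.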

In this structure, answers are not simply accepted. They are broken down. A complex output becomes smaller, verifiable statements. A financial summary becomes traceable numbers. A legal interpretation becomes a chain of reasoning that can be inspected. The goal is not to magically make models smarter. The goal is to make their claims testable.
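As a rough illustration of that breakdown step, the sketch below splits an output into sentence-sized claims that could each be checked on their own. The splitting rule is deliberately naive and is only an assumption. The function name `split_into_claims` is hypothetical, and a real system would likely need something far more careful than punctuation matching.

```python
import re
from typing import List


def split_into_claims(output: str) -> List[str]:
    """Naively split text into sentences, treating each one as a claim."""
    # Split on whitespace that follows '.', '!' or '?'.
    parts = re.split(r"(?<=[.!?])\s+", output.strip())
    return [part for part in parts if part]


summary = (
    "Revenue rose 12% in Q3. "
    "Net margin fell to 8%. "
    "The company has no long-term debt."
)

claims = split_into_claims(summary)
for number, claim in enumerate(claims, start=1):
    print(number, claim)
```

Each resulting statement is small enough to be checked against a source, which is the whole point of breaking the answer down.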

And that introduces a deeper shift. Trust moves away from individual models and toward verification infrastructure. An output is no longer considered reliable because “the model said so.” It becomes reliable because independent systems reached compatible conclusions after examining the same claim.

Of course, consensus alone is not perfect. Models trained on similar datasets may share the same blind spots, and agreement can still carry bias. That is why diversity in model architecture and transparency in verification processes matter just as much as the consensus itself.

There is also a practical side to this system. Verification requires time, computation, and infrastructure. Applications integrating these layers must decide which outputs require deeper scrutiny and which can move quickly. Reliability becomes a strategic decision, not just a technical feature.
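One way an application might encode that decision is a simple policy table that maps how much is at stake to how much verification an output receives. The tier names, verifier counts, and agreement thresholds below are invented for illustration and do not come from Mira.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    verifiers: int        # how many independent models to consult
    min_agreement: float  # share of verifiers that must agree


# Hypothetical policy: more at stake means more scrutiny and more cost.
POLICY = {
    "low": Tier(verifiers=0, min_agreement=0.0),     # e.g. casual chat
    "medium": Tier(verifiers=3, min_agreement=0.67),  # e.g. research summary
    "high": Tier(verifiers=5, min_agreement=1.0),     # e.g. moving funds
}


def plan_verification(risk: str) -> Tier:
    """Return the tier for a risk label, defaulting to the strictest one."""
    return POLICY.get(risk, POLICY["high"])


print(plan_verification("medium"))
print(plan_verification("unlabeled"))  # unknown labels get full scrutiny
```

Falling back to the strictest tier for unknown labels is a design choice in this sketch: when the stakes are unclear, it spends more on verification rather than less.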

And that is where the competitive landscape of AI may quietly change. In the next generation of AI systems, the winners may not simply be the ones that answer the fastest or sound the smartest. The winners will be the ones that can prove their answers are trustworthy.

Seen from this perspective, Mira’s multi-model governance is not just another AI feature. It is an early glimpse of a larger shift in how machine intelligence will operate: a world where AI outputs are proposals, not proclamations, where disagreement is treated as signal, not failure, and where trust is engineered into the system itself.

@Mira - Trust Layer of AI #Mira