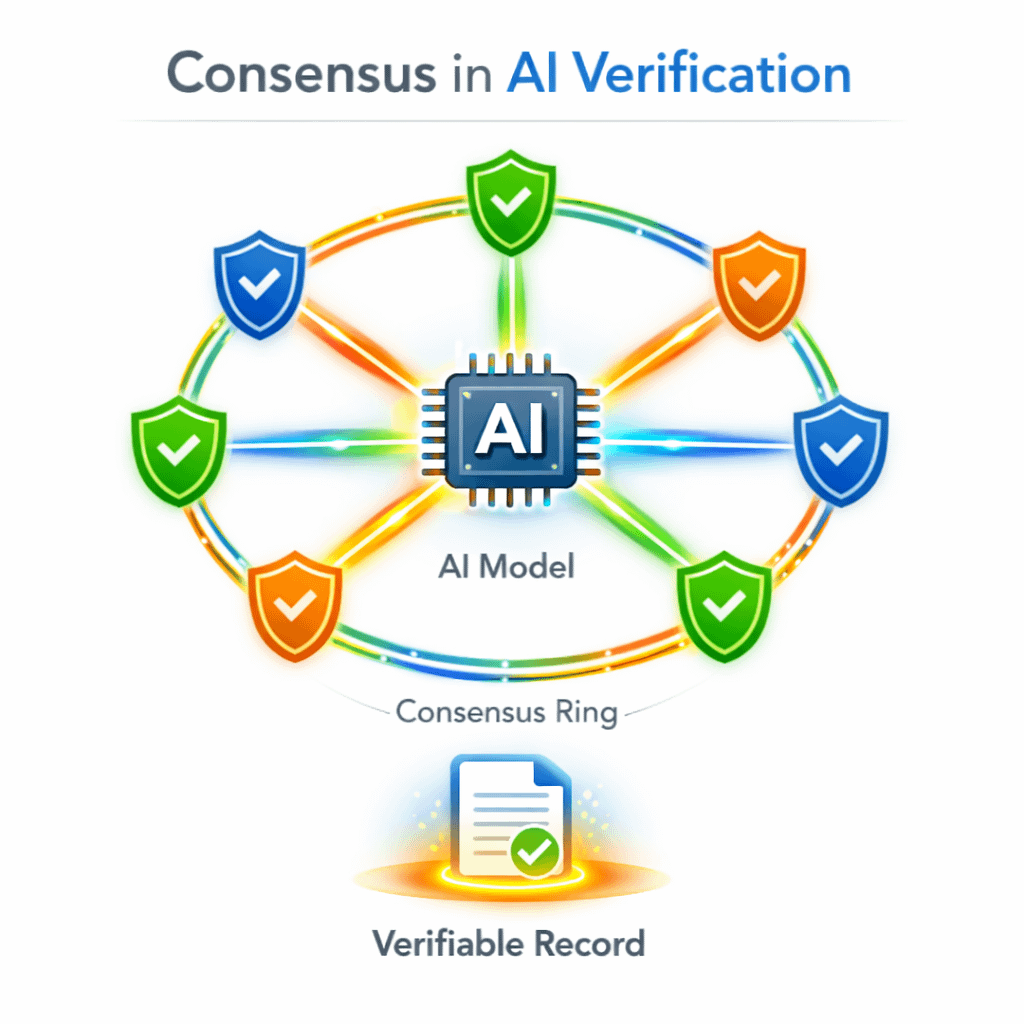

I’ve noticed that when people talk about artificial intelligence infrastructure, the conversation usually focuses on models, training datasets, and computational power. Consensus mechanisms rarely enter the discussion. That makes sense in some ways because consensus is traditionally associated with blockchain systems rather than AI. But when I started looking more closely at Mira Network, it became clear that consensus plays a surprisingly important role in how the network attempts to verify AI activity.

At a basic level, consensus mechanisms exist to solve a simple but difficult problem. In decentralized systems, there is no single authority responsible for declaring what is true. Instead, multiple participants must agree on a shared record of events. That agreement process is what gives decentralized networks their credibility.

In the context of Mira, the events being verified are not financial transactions in the traditional sense. They are records of AI behavior. That distinction caught my attention immediately. Most blockchain systems focus on transferring value or executing smart contracts. Mira’s infrastructure, on the other hand, attempts to verify how AI systems operate. Inputs, execution conditions, and outputs can be recorded through the network, and consensus mechanisms help determine whether those records are accepted as valid.

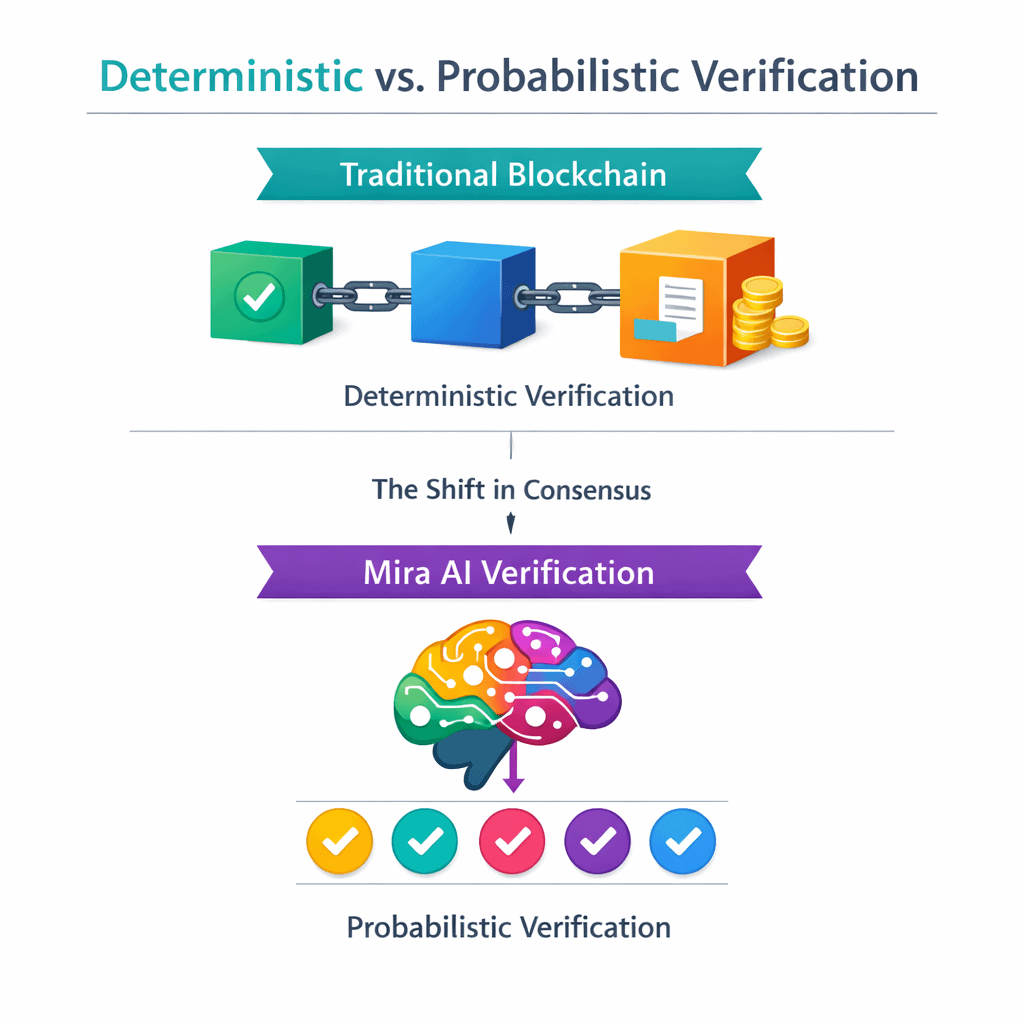

From my perspective, this creates a new type of consensus problem. Instead of validating currency transfers or contract execution, the network must validate claims about what an AI system did. That means consensus mechanisms need to address questions that are not always present in traditional blockchain systems. For example, how does the network confirm that an AI model actually followed a particular set of rules or constraints? How do validators determine whether reported outputs correspond to the claimed inputs?

These are not trivial challenges. Consensus mechanisms are usually designed around deterministic events. A transaction either occurred or it did not. A smart contract either executed according to its code or it failed. AI systems behave differently. Their outputs often depend on probabilistic models and complex internal processes. This creates an interesting tension between deterministic verification and probabilistic intelligence.

From what I can see, Mira’s ecosystem attempts to handle this tension by separating the verification of conditions from the interpretation of results. Validators are not necessarily judging whether an AI’s decision was correct or optimal. Instead, they verify whether the system operated under the conditions it claimed to follow. I find that distinction important because it narrows the scope of consensus. The network is not trying to decide whether an AI system made the best possible decision. It is attempting to confirm that the system behaved according to the rules and inputs it reported. That approach makes consensus more manageable, even if the underlying AI remains complex.
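To make the idea of verifying conditions rather than judging results concrete for myself, I put together a minimal Python sketch. It is not Mira’s actual protocol or API. The names `ActivityRecord`, `make_record`, `validate`, and `reaches_consensus`, the SHA-256 commitment, and the two-thirds acceptance threshold are all my own assumptions for illustration.

```python
import hashlib
import json
from dataclasses import dataclass


def digest(payload: dict) -> str:
    """Deterministic SHA-256 digest of a JSON-serializable payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ActivityRecord:
    """Hypothetical record of one AI action (not a real Mira data type)."""
    inputs: dict
    conditions: dict   # e.g. model identifier, sampling settings
    output: str
    commitment: str    # digest the operator claims for this record


def make_record(inputs: dict, conditions: dict, output: str) -> ActivityRecord:
    """Build a record whose commitment binds inputs, conditions, and output."""
    body = {"inputs": inputs, "conditions": conditions, "output": output}
    return ActivityRecord(inputs, conditions, output, digest(body))


def validate(record: ActivityRecord, policy: dict) -> bool:
    """Check integrity and declared conditions; never judge output quality."""
    body = {
        "inputs": record.inputs,
        "conditions": record.conditions,
        "output": record.output,
    }
    # 1. The record must match the commitment it was published under.
    if digest(body) != record.commitment:
        return False
    # 2. Every condition the policy requires must be declared with that value.
    return all(record.conditions.get(k) == v for k, v in policy.items())


def reaches_consensus(votes: list) -> bool:
    """Accept when at least two thirds of validators vote yes (assumed rule)."""
    if not votes:
        return False
    return 3 * sum(votes) >= 2 * len(votes)


if __name__ == "__main__":
    policy = {"model": "example-model-v1", "temperature": 0}
    record = make_record(
        inputs={"prompt": "Approve invoice 17?"},
        conditions={"model": "example-model-v1", "temperature": 0},
        output="approve",
    )
    # Three independent validators each run the same deterministic check.
    votes = [validate(record, policy) for _ in range(3)]
    print("record accepted:", reaches_consensus(votes))
```

Notice that nothing in `validate` asks whether "approve" was a good decision. The check is fully deterministic even though the model that produced the output may not be, which is the narrowing of scope described above.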

Still, implementing this kind of verification layer raises practical questions. Validators must have access to enough information to confirm AI behavior without compromising privacy or proprietary model details. Incentive structures must encourage honest validation without making the process excessively expensive. And the consensus mechanism itself must remain efficient enough that it does not slow down the systems relying on it. These challenges are not unique to Mira, but they become particularly visible in networks that attempt to verify non-financial activity.

Another aspect I keep thinking about is scalability. If AI systems eventually operate across financial platforms, logistics networks, and digital services simultaneously, the number of verification events could grow quickly. Consensus mechanisms must handle that scale while maintaining reliability. Many decentralized systems struggle when transaction volumes increase dramatically. Whether verification of AI activity can scale efficiently within Mira’s architecture remains something I continue to watch closely.

Despite these uncertainties, the concept itself is interesting. Consensus mechanisms have traditionally been used to maintain shared financial ledgers. Mira’s approach suggests that similar infrastructure might be applied to maintaining shared records of AI behavior. If that idea proves workable, it could represent a subtle shift in how decentralized networks are used. Instead of coordinating purely economic activity, they could also coordinate trust around increasingly autonomous digital systems.

For now, I see Mira’s consensus mechanisms less as a finished solution and more as an evolving experiment in how decentralized verification might operate in an AI-driven environment. As artificial intelligence systems become more integrated into critical infrastructure, the question of how their actions are verified will likely become harder to ignore. And in that context, the role of consensus may expand beyond finance into the broader architecture of digital trust.