I’ve seen tons of projects swear they’ll keep our data locked down tight. They promise the moon, from plugging privacy leaks to making sharing seamless, but the minute you ask for a real-world case study, they go quiet. Midnight feels different. It’s actually chasing down problems that are hitting people and companies right now: letting AI tap into data without the usual trust issues, letting doctors swap patient records safely, and helping banks stay on the right side of the rules. These aren’t fluffy ideas dreamed up in a lab; they’re headaches that organizations are already throwing serious cash at.

What really hooks me about Midnight is the way it’s trying to crack these nuts in practice.

The AI side is the one that grabs my attention most, because it’s both the coolest example and the clearest window into where Midnight’s whole approach could stumble.

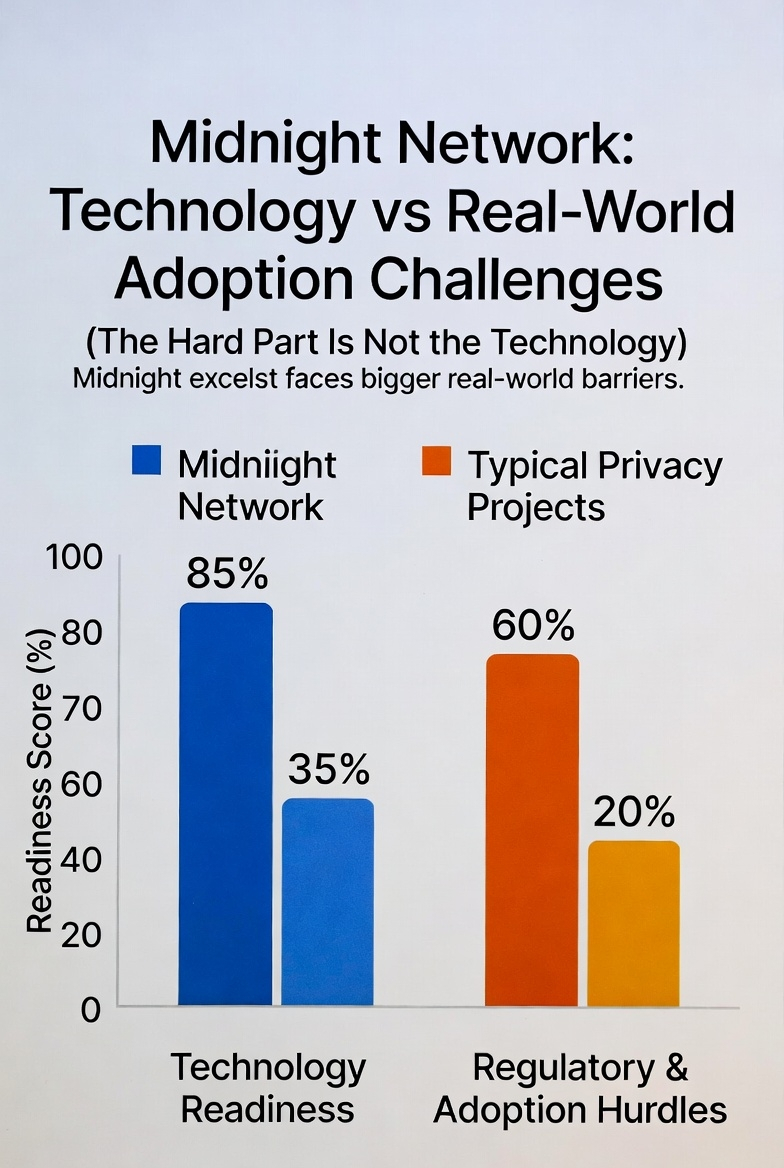

Midnight argues that AI is stuck in neutral because nobody trusts it with their sensitive stuff. Training those models takes mountains of data, yet individuals and big outfits are super guarded about letting anyone touch it. Their fix? A privacy layer built on zero-knowledge proofs, which let one party prove a statement about its data without revealing the data itself. The pitch is that a model can learn from the data while the people running it never peek at the actual details. On paper, that sounds like exactly the breakthrough needed to rebuild trust.

But here’s where it gets messy. The outfits sitting on the really valuable training data (think big hospitals, banks, and government agencies) don’t just flip a switch. Midnight has to navigate a maze of legal sign-offs, compliance checks, and internal approvals before anyone will even consider changing their data habits. Even if the engineering side of their solution is solid and ready, getting actual humans and organizations to adopt it is a different fight. That’s the mountain Midnight barely mentions.

Healthcare makes the problem even sharper. Midnight claims it can let patients and providers swap medical details without exposing private info. That’s a genuine pain point: anyone who’s bounced between specialists knows how frustrating it is when records don’t travel with you. But health data isn’t just private; it’s wrapped in heavy regulations like HIPAA in the U.S. and GDPR in the EU. Those laws set rules for how patient information can move and who can touch it. Even if Midnight’s system can mathematically prove that nothing leaks, it’s far from clear whether regulators will nod and say “good enough.”

Their white papers talk up “programmable privacy” as the magic key to finally unlocking health-data sharing. I really want to buy into that vision. The catch is, slick tech alone has never been enough. Projects that have tried this before tend to hit a wall when they can’t tick every single compliance box.

The exact same snag shows up with AI. Sure, Midnight might prove the data stayed hidden, but the company actually using the model still has to stand in front of regulators and lawyers and explain exactly how they’re applying it. So far, Midnight hasn’t laid out how its tech will hand over the paperwork that satisfies those gatekeepers.

The core idea is strong, and the direction feels right. Healthcare and AI are exactly the fields crying out for this kind of privacy-first approach. The big question hanging over everything is simple: how does Midnight plan to bridge the gap between its clever code and the mountain of regulatory demands that change from country to country?

When a hospital or an AI lab finally plugs in Midnight’s system, what kind of reports, audit trails, or official stamps will it spit out to prove it’s playing by the rules—HIPAA, GDPR, or whatever else the local authorities throw at them? That’s the part nobody’s really answered yet.
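To make that question concrete, here’s a rough sketch of the bare minimum an auditor-facing trail might look like. To be clear, this is my own illustration, not anything from Midnight’s docs or API: every name in it (`commit`, `append_entry`, `verify_chain`, `verify_disclosure`) is hypothetical, and a salted hash commitment is a much weaker tool than a zero-knowledge proof. It only shows the shape of the idea: log what happened without logging the data, and still let a regulator check a specific record later.

```python
import hashlib
import hmac
import json
import secrets


def commit(record: bytes, salt: bytes) -> str:
    """Salted SHA-256 commitment: the log is bound to the record without storing it."""
    return hashlib.sha256(salt + record).hexdigest()


def _entry_digest(body: dict) -> str:
    """Hash an entry body in a canonical (sorted-key) JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, actor: str, action: str, record: bytes) -> bytes:
    """Append a hash-chained entry and return the salt, which the data holder keeps private."""
    salt = secrets.token_bytes(16)
    body = {
        "actor": actor,
        "action": action,
        "commitment": commit(record, salt),
        # Each entry points at the previous one, so edits break the chain.
        "prev_hash": log[-1]["entry_hash"] if log else "0" * 64,
    }
    log.append({**body, "entry_hash": _entry_digest(body)})
    return salt


def verify_chain(log: list) -> bool:
    """Check that no entry was altered, reordered, or dropped from the middle."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev_hash or _entry_digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True


def verify_disclosure(entry: dict, record: bytes, salt: bytes) -> bool:
    """Auditor check: does a disclosed record and salt match the logged commitment?"""
    return hmac.compare_digest(entry["commitment"], commit(record, salt))
```

In this toy version, a hospital would call `append_entry` each time it shares something, keep the salt to itself, and hand the record plus salt to a regulator only on request, who then runs `verify_disclosure` and `verify_chain`. Whether anything like that would count as compliance evidence under HIPAA or GDPR is exactly the open question.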

$NIGHT @MidnightNetwork #night