I was sitting in the dark last night, scrolling through my phone after a long day, when the usual noise of crypto chatter felt heavier than normal. People keep saying blockchain is all about transparency—like it's this pure, unbreakable virtue that will fix everything wrong with trust in systems. I used to nod along. Then I opened the CreatorPad campaign page for Midnight Network on Binance Square, clicked into the task to draft something about "Midnight Network Use Cases for Secure Decentralized Applications," and stared at the prompt asking me to outline protected data flows in dApps.

That moment hit differently. As I typed out examples (selective disclosure for KYC credentials, shielded credit scores, private yet verifiable votes), the screen's clean layout, with its bullet-ready fields, made the contradiction too sharp to ignore. Here we are, building tools to hide parts of the truth on purpose, while the whole crypto story still sells total openness as the only moral path. The task forced me to write about keeping sensitive data private and using zero-knowledge proofs so apps can verify what they need without exposing everything, and it quietly unraveled something I'd accepted without question.

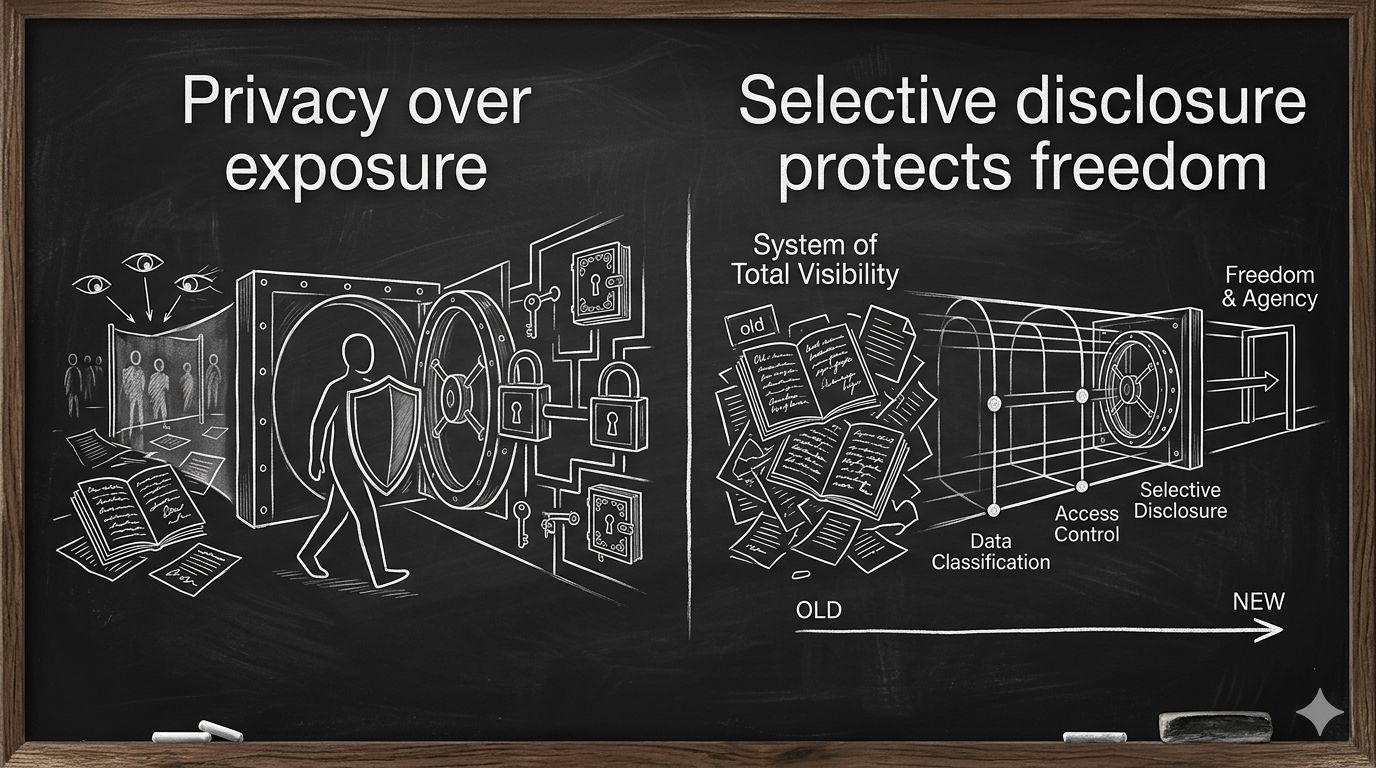

Transparency isn't always the hero we pretend it is. In fact, demanding full visibility on-chain often creates new vulnerabilities: it exposes personal habits, financial positions, or even political choices to anyone with a node and time. We've romanticized the public ledger as freedom, but it can feel more like mandatory surveillance dressed up as decentralization. Midnight's approach flips that by letting the protocol verify a claim without broadcasting the details behind it. You prove you're over 18 without showing your birthday, or that funds are legitimate without revealing your entire wallet history. It's not about secrecy for crime; it's about reclaiming control over what should stay personal in a world that hoards data by default.

The project itself shows this tension clearly. While most chains force everything into the open to "build trust," Midnight carves out space for rational privacy: public verifiability where it matters, confidentiality where it protects. That distinction disturbed me more than I expected because it challenges the foundational myth that more openness equals more integrity. Sometimes opacity, when programmable and selective, protects integrity instead of undermining it. We've spent years celebrating projects that expose users under the banner of immutability, but real adoption in finance, healthcare, or identity probably needs the opposite: enough privacy that large institutions are willing to participate without risking leaks or compliance nightmares.

So what happens when we stop treating transparency as sacred and start asking whether selective disclosure might actually deliver a freer, safer Web3? If we keep insisting every transaction must be naked to the world, we might end up with chains full of bots and speculators but empty of meaningful, everyday use. Midnight isn't solving for maximum openness; it's solving for usable privacy. That shift feels riskier to admit than it should.

Isn't it strange that the more we expose, the less free we actually become? #night $NIGHT @MidnightNetwork