the thing that actually keeps me up about Midnight isn't the privacy layer itself — it's the boundary between private state and public state and what happens when an application has to cross it 😂

let me explain what I mean because this one takes a second to set up properly.

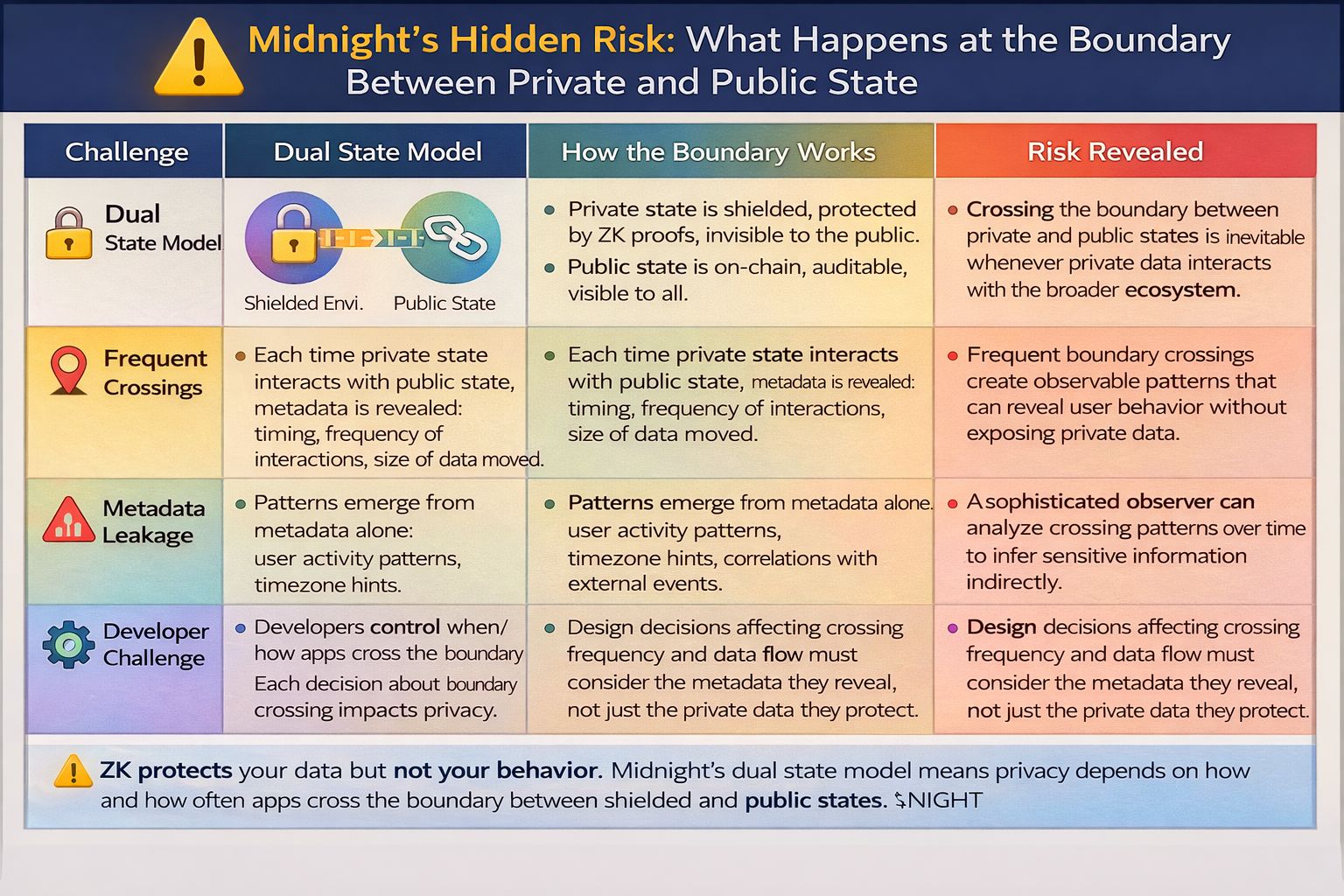

Midnight is built around a dual state model. you have private state: data that never hits the public ledger in readable form, protected by zero-knowledge proofs and invisible to the outside world. and you have public state: data that lives on chain, visible to everyone, permanent and auditable in the way that blockchain data always is.

most explanations of Midnight stop there. private state is private. public state is public. the ZK proofs connect them. elegant. clean. done.

but real applications don't live entirely in one state or the other.

real applications constantly need to move information between those two worlds. a private balance has to interact with a public liquidity pool. a shielded identity credential has to satisfy a public access control requirement. a confidential business agreement has to produce a public outcome that both parties can point to.

every time an application crosses that boundary, something has to be revealed. not the underlying private data; the ZK proof handles that. but the fact that a crossing happened. which contract and which public state it touched. the timing. the frequency.
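to make "the fact that a crossing happened" concrete, here's a rough Python sketch of what an outside observer could log. it assumes each public state update can be attributed to a contract and a block timestamp; `CrossingEvent`, the helper functions, and the contract names are all hypothetical and don't reflect Midnight's actual data model. notice that nothing in it touches private data.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


# HYPOTHETICAL observer-side record of one public state update. The field
# names are illustrative and do not reflect Midnight's actual ledger format.
@dataclass(frozen=True)
class CrossingEvent:
    contract: str        # which contract's public state changed
    timestamp: datetime  # when the including block was produced (UTC)


def crossings_per_contract(events):
    """Count boundary crossings per contract using only public metadata."""
    return Counter(e.contract for e in events)


def crossings_per_day(events, contract):
    """Daily crossing counts for one contract; no private data involved."""
    return Counter(e.timestamp.date() for e in events if e.contract == contract)


# Made-up sample data for two hypothetical contracts.
events = [
    CrossingEvent("pharmacy-app", datetime(2024, 5, 1, 9, 30, tzinfo=timezone.utc)),
    CrossingEvent("pharmacy-app", datetime(2024, 5, 1, 14, 5, tzinfo=timezone.utc)),
    CrossingEvent("dex-pool", datetime(2024, 5, 1, 14, 6, tzinfo=timezone.utc)),
    CrossingEvent("pharmacy-app", datetime(2024, 5, 2, 9, 45, tzinfo=timezone.utc)),
]

print(crossings_per_contract(events))
print(crossings_per_day(events, "pharmacy-app"))
```

two tiny aggregations, and the observer already has per-application activity levels over time.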

and that's where the analysis gets genuinely interesting.

because there's a body of research in cryptography and privacy engineering that has been quietly making the same point for years. the contents of a communication can be perfectly encrypted and the communication can still leak enormous amounts of information through its metadata alone.

who communicated with whom. when. how often. how much data moved. what pattern the interactions follow over time.

traffic analysis is real. it's been used effectively against encrypted communications systems that were cryptographically sound at the content level. the lesson from that history is that protecting the data is necessary but not sufficient. you also have to think about what the pattern of interactions reveals even when the data itself is invisible.

for Midnight applications that lesson translates directly to the public-private boundary.

every time a shielded transaction touches the public state, it leaves a mark. not a mark that reveals the private data, but a mark that says something happened here, at this time, involving this application. over enough time and enough interactions those marks accumulate into a pattern. and patterns carry information even when the individual data points are opaque.

a sophisticated observer watching the public chain doesn't need to break the ZK proofs to learn things about a Midnight application's user base. they just need to watch the boundary.

high frequency of state crossings at certain times of day suggests a user base in a particular timezone. unusual spikes in crossing events correlate with external events and reveal what the application is responding to. the ratio of private transactions to public state updates reveals something about the application's architecture and use case. the growth rate of crossing events over time tells a story about adoption.
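the timezone inference is almost embarrassingly simple. here's a rough Python sketch, assuming the observer has block timestamps for one application's crossings and that users are most active during a local working day starting around 09:00. the 8-hour window, the 09:00 assumption, and the function names are my own illustrative choices, not an established method.

```python
from collections import Counter
from datetime import datetime, timezone


def busiest_window(timestamps, width=8):
    """UTC start hour of the `width`-hour window (wrapping past midnight)
    containing the most crossing events. Timestamps must be timezone-aware."""
    hours = Counter(ts.astimezone(timezone.utc).hour for ts in timestamps)
    best_start, best_count = 0, -1
    for start in range(24):
        count = sum(hours[(start + i) % 24] for i in range(width))
        if count > best_count:
            best_start, best_count = start, count
    return best_start, best_count


def guess_utc_offset(timestamps, assumed_local_start=9, width=8):
    """Crude guess at the user base's UTC offset, ASSUMING activity clusters
    in a local working day that starts at `assumed_local_start` o'clock."""
    start, _ = busiest_window(timestamps, width)
    offset = (assumed_local_start - start) % 24
    return offset - 24 if offset >= 12 else offset  # map into -12..+11


# Made-up crossing times, all recorded in UTC.
sample = [
    datetime(2024, 5, 1, h, 0, tzinfo=timezone.utc)
    for h in [14, 15, 15, 16, 16, 17, 18, 19, 20, 21]
]
print(busiest_window(sample))    # busiest 8-hour window in UTC
print(guess_utc_offset(sample))  # inferred offset in hours
```

real data would be noisier, but the point stands: a histogram of public timestamps is enough to start guessing where users are.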

none of this breaks the cryptographic guarantee. the private data stays private. but the application becomes partially legible through its behavior at the boundary even when its internals are perfectly shielded.

and here's the thing that makes this a developer problem, not just a theoretical one.

application developers make architectural choices constantly about when to cross the public-private boundary and how. those choices feel like implementation details in the moment. where does this piece of state live. when does this interaction need to touch the public chain. how do we structure this data model to make the application logic clean and efficient.

but those implementation details are privacy decisions. every choice about boundary crossing frequency and pattern is a choice about what an outside observer can infer about the application and its users.
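to see why these are privacy decisions, compare two hypothetical designs for the same app. this Python sketch assumes a design can queue private actions and publish them at fixed intervals; I'm not claiming that's how any particular Midnight app does or should work. it just shows that identical user behavior can produce very different public timelines depending on the architecture.

```python
from datetime import datetime, timedelta, timezone


def immediate_commits(actions):
    """Design A: every private action crosses the boundary right away,
    so the observer sees one public timestamp per action."""
    return sorted(actions)


def batched_commits(actions, interval_hours=6):
    """Design B (HYPOTHETICAL): queue actions and publish them at the next
    fixed UTC boundary (00:00, 06:00, 12:00, 18:00 for a 6-hour interval;
    interval_hours should divide 24). The observer only sees commit times,
    one per non-empty batch."""
    commits = set()
    for t in actions:
        floor = t.replace(hour=t.hour - t.hour % interval_hours,
                          minute=0, second=0, microsecond=0)
        commits.add(floor if floor == t else floor + timedelta(hours=interval_hours))
    return sorted(commits)


day = datetime(2024, 5, 1, tzinfo=timezone.utc)
actions = [day + timedelta(hours=h, minutes=m)
           for h, m in [(9, 10), (9, 40), (10, 5), (14, 30)]]

print(immediate_commits(actions))  # four precise timestamps
print(batched_commits(actions))    # two coarse commit times
```

even the batched version leaks something: a batch that's only published when non-empty still tells the observer which windows had activity. the point is just that the architecture, not the ZK layer, decides how much timing detail reaches the chain.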

most developers don't think about it that way. they're thinking about correctness and performance and user experience. the privacy implications of their state model aren't what they're optimizing for, because the ZK layer is supposed to handle privacy and they've mentally delegated that responsibility to the protocol.

the protocol handles the data. it doesn't handle the pattern. that's still on the developer.

and I don't think there's enough tooling or guidance right now to help developers understand what their state crossing patterns are revealing and whether those revelations are acceptable given the privacy promises their application is making.
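for a sense of what even basic tooling could look like, here's a minimal Python sketch of a check a developer could run against their own crossing log before launch. it scores how concentrated crossings are by hour of day using normalized Shannon entropy. the function names and the 0.8 threshold are hypothetical choices of mine, not anything Midnight ships.

```python
import math
from collections import Counter


def timing_entropy(hours):
    """Normalized Shannon entropy (0.0 to 1.0) of crossings by UTC hour of day.
    1.0 means crossings are spread evenly over all 24 hours (weak timing signal);
    values near 0.0 mean they cluster in a few hours (strong timing signal)."""
    counts = Counter(h % 24 for h in hours)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no crossing events to analyze")
    entropy = sum((c / total) * math.log2(total / c) for c in counts.values())
    return entropy / math.log2(24)


def audit_timing(hours, threshold=0.8):
    """Flag a crossing log whose timing is more concentrated than `threshold`.
    The 0.8 default is an arbitrary illustrative cutoff, not a standard."""
    score = timing_entropy(hours)
    return score, score < threshold


print(audit_timing(list(range(24))))       # evenly spread: not flagged
print(audit_timing([9] * 50 + [10] * 50))  # two busy hours: flagged
```

a single score like this obviously misses a lot (periodicity, spikes, growth), but it turns "what does our timing reveal" into a number someone has to look at.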

imagine a healthcare application built on Midnight. private patient data fully shielded. ZK proofs sound. cryptographic guarantee intact.

but the application crosses the public state boundary every time a prescription is filled. and prescriptions for certain drug categories follow recognizable timing patterns. weekly. monthly. with specific gaps that correspond to refill schedules.

a sophisticated observer watching the public chain doesn't know whose prescription it is. but they can see that this application is producing state crossing events in a pattern that looks like chronic condition management. over time, with enough data points, they can start building population-level inferences that compromise user privacy at the group level even when individual privacy is technically preserved.
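the refill-schedule point can be sketched with plain autocorrelation over daily crossing counts. this Python example assumes the observer can count one application's crossings per day and nothing more; the data is synthetic and the weekly rhythm is planted on purpose, so treat it as an illustration of the technique rather than evidence about any real app.

```python
from collections import Counter
from datetime import date, timedelta


def daily_series(days):
    """Turn a list of event dates into a dense per-day count series."""
    counts = Counter(days)
    first, last = min(counts), max(counts)
    return [counts[first + timedelta(days=d)] for d in range((last - first).days + 1)]


def autocorrelation(series, lag):
    """Sample autocorrelation of `series` at `lag` (biased estimator)."""
    n = len(series)
    mean = sum(series) / n
    var = sum((x - mean) ** 2 for x in series)
    if var == 0:
        return 0.0
    cov = sum((series[i] - mean) * (series[i + lag] - mean) for i in range(n - lag))
    return cov / var


def dominant_period(series, min_lag=2, max_lag=40):
    """Lag (in days) with the highest autocorrelation: the strongest rhythm."""
    upper = min(max_lag, len(series) - 1)
    return max(range(min_lag, upper + 1), key=lambda lag: autocorrelation(series, lag))


# Made-up data: 1 crossing a day of background traffic, plus 4 extra every
# 7th day, standing in for a refill-like schedule across many users.
start = date(2024, 1, 1)
days = []
for d in range(120):
    days += [start + timedelta(days=d)] * (5 if d % 7 == 0 else 1)

series = daily_series(days)
print(dominant_period(series))
```

no individual is identified anywhere in this. the rhythm only shows up in the aggregate, which is exactly the group-level leak described above.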

that's not a hypothetical attack vector. it's a known class of privacy failure that has shown up in health data systems before.

the ZK proofs didn't fail. the application architecture leaked.

the question I keep coming back to is whether the current state of Midnight's developer tooling makes this failure mode visible enough that developers can actually design around it.

because the applications most likely to be built on Midnight first — the ones with clear privacy use cases, the ones that genuinely need what Midnight offers — are exactly the applications where this class of failure matters most. healthcare. legal. financial. personal safety.

these are not domains where you want to discover a privacy leak after the application has a real user base.

I want to be honest about the limits of my own analysis here. I don't know exactly what the current developer tooling looks like in practice. I don't know what guidance exists internally about state model architecture and boundary crossing patterns. it's possible this is already being addressed in ways I haven't seen yet.

but I haven't seen it discussed publicly. and the things that don't get discussed publicly are the things that developers building their first Midnight application are least likely to think about on their own.

the ZK architecture is genuinely impressive. the dual state model is genuinely clever. the cryptographic foundation is solid.

but privacy is still a system. and a system that protects the data while inadvertently broadcasting the pattern of interactions around that data is a system that's doing half the job.

the boundary is where the hard work actually lives. 🤔